Paul Vu

@karpathy and I are back! At @sequoia AI Ascent 2026. And a lot has changed. Last year, he coined “vibe coding”. This year, he’s never felt more behind as a programmer. The big shift: vibe coding raised the floor. Agentic engineering raises the ceiling. We talk about what it means to build seriously in the agent era. Not just moving faster. Building new things, with new tools, while preserving the parts that still require human taste, judgment, and understanding.

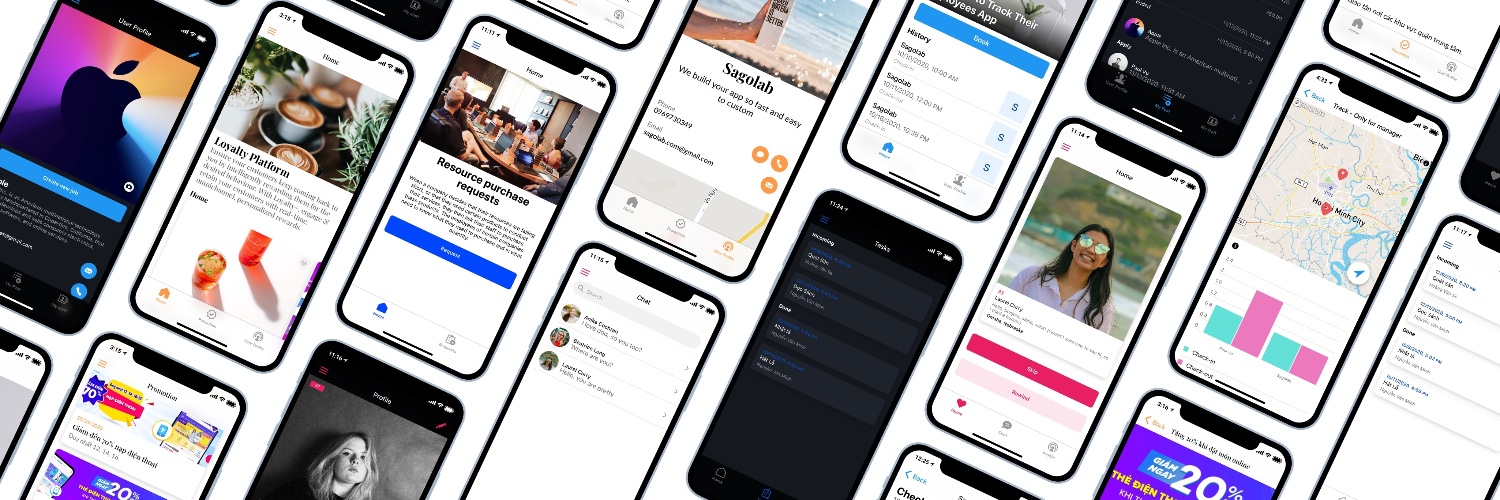

Introducing Cloud Computer for Manus: your always-on machine in the cloud, so anything you build keeps running 24/7, even when your laptop is off. Anyone can build, and anything can run. Available on web and mobile.

Speech-native models like Moshi sound great and answer fast, but aren’t as smart as text LLMs. In our new paper, MoshiRAG, we show how Moshi can ask for advice from a text LLM or a knowledge base. The tricky part is how to do this in real time without adding latency. 🧵