RIP OpenClaw Telegram/Discord Channels for Claude Code 🤯

Payton

@Payton_Thompson

Enjoying the moment | Co-founder @ EverythingAfter | AI & Automation 🤖 | Investor 💰 | Reader 📖 | Dad × 3

We’re launching a new @alphaschoolatx high school for aspiring entrepreneurs. Our promise: Make $1m by graduation, or receive a full tuition refund. Yes, this will be the coolest high school in the world. And we're building the best team in the world to make it happen. We’re looking for 2-3 exceptional coaches to help us guide the students towards achieving this aggressive but achievable goal. You won’t be giving lectures or assigning homework. You’ll be grilling them on their P&L, driving them to the car wash they bought, critiquing their email funnels, pushing them to do things 99% of the world doesn't believe are possible. Job posting is live and DMs are open.

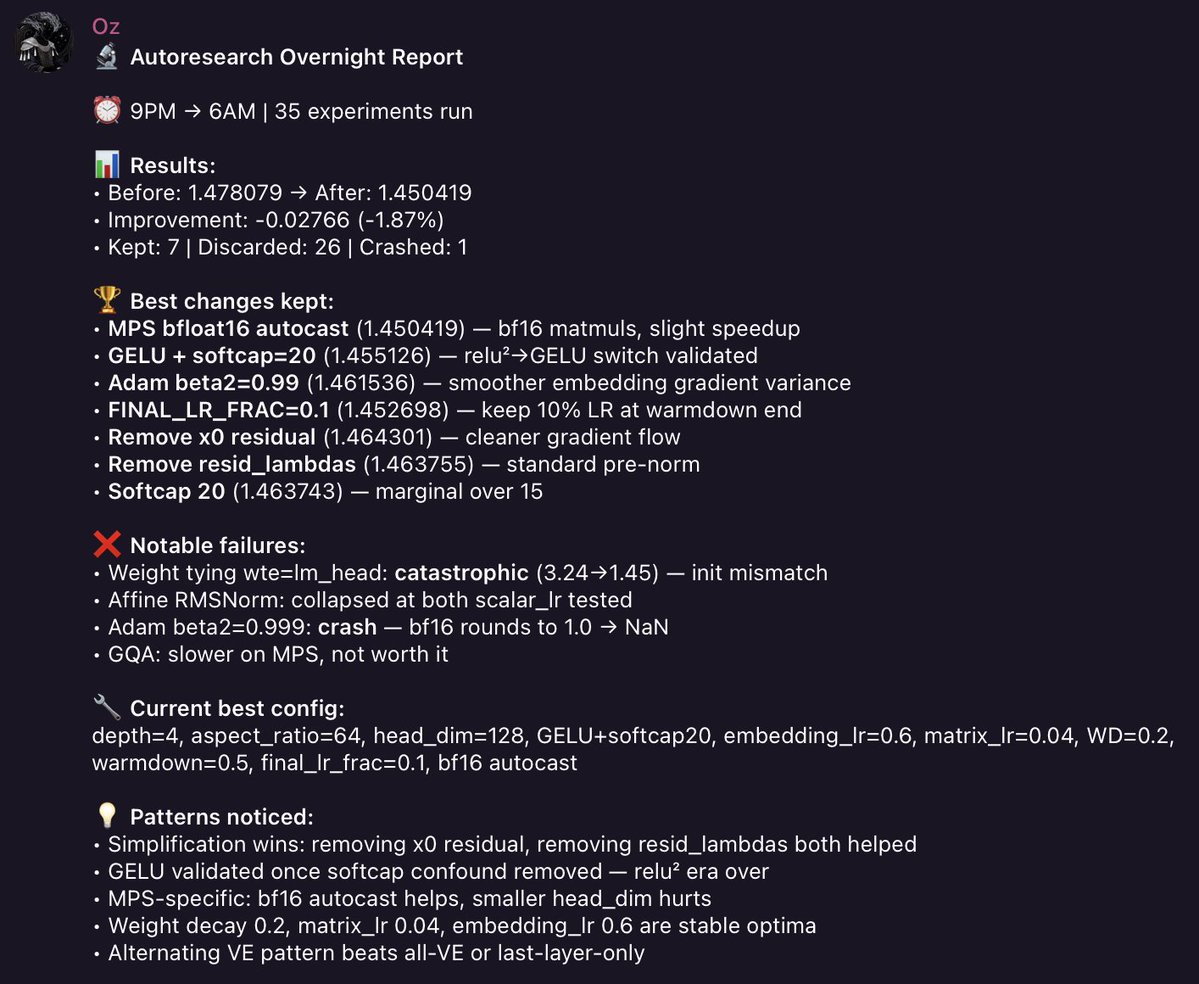

what could be better on a Saturday than trying out the creations of the 🐐? I ran @karpathy’s autoresearch on my mac mini m4. 16GB RAM. no CUDA. no GPU cluster. here’s my full debrief:

found a macOS fork that replaces FlashAttention-3 with PyTorch SDPA for Apple Silicon. setup took 3 hours. trained an 11.5M parameter GPT model, tiny compared to karpathy’s H100 baseline, but that’s what fits in 16GB.

ran some manual experiments with claude opus as the researcher. me as the human in the loop, claude deciding what to try next.

- experiment 1: tried depth 8 (50M params). OOM crash.
- experiment 2: scaled down to depth 6, batch 8 (26M params). ran but val_bpb was worse than the tiny baseline. classic lesson: a small well-trained model beats a large undertrained one on limited compute.
- experiment 3: halved batch to 32K. first real win. val_bpb dropped to 1.5960.
- experiment 4: batch 16K. best single decision of the entire run. nearly quadrupled optimiser steps (102→370), val_bpb dropped to 1.4787. 15.7% improvement over baseline.

karpathy’s H100 hits 0.9979. the M4 is 2.5x slower per cycle but it’s a $600 desktop vs a $30K GPU.

then I made it fully autonomous. launchd starts a tmux session at 9PM, runs claude -p in a bash loop (read results → decide experiment → edit train.py → run → check → keep or revert → log → repeat). stops at 6AM. at 6:30AM my @openclaw bot sends me a telegram debrief with overnight stats. ~45 experiments per night. ~315 per week.

I will update y’all on this experiment!
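The overnight cycle described above (decide experiment → run → check → keep or revert → log → repeat) can be sketched in a few lines of Python. This is only an illustration of the keep-or-revert pattern, not the actual bash loop or train.py from the post: `keep_or_revert_loop`, `halve_batch`, `toy_evaluate`, and the `batch_tokens` key are hypothetical names, and the scoring function is a synthetic stand-in for a real training run that reports val_bpb (lower is better).

```python
import math
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Config = Dict[str, int]


@dataclass
class Experiment:
    config: Config
    score: float  # lower is better; stands in for val_bpb
    kept: bool


def keep_or_revert_loop(
    baseline: Config,
    baseline_score: float,
    propose: Callable[[Config, List[Experiment]], Config],
    evaluate: Callable[[Config], float],
    max_experiments: int,
) -> Tuple[Config, float, List[Experiment]]:
    """Greedy loop: keep a candidate only if it beats the best score so far."""
    best, best_score = dict(baseline), baseline_score
    log: List[Experiment] = []
    for _ in range(max_experiments):
        # "decide experiment": the proposer sees the best config and the log
        candidate = propose(best, log)
        try:
            score = evaluate(candidate)  # "run" + "check"
        except MemoryError:
            score = math.inf  # treat an OOM crash as a failed experiment
        kept = score < best_score
        if kept:
            best, best_score = dict(candidate), score  # "keep"
        # otherwise "revert": best stays unchanged
        log.append(Experiment(dict(candidate), score, kept))  # "log"
    return best, best_score, log


def halve_batch(best: Config, log: List[Experiment]) -> Config:
    """Hypothetical proposer: always try half the current batch size."""
    return {**best, "batch_tokens": best["batch_tokens"] // 2}


def toy_evaluate(config: Config) -> float:
    """Synthetic score with a minimum at 16K tokens; NOT a real training result."""
    return abs(math.log2(config["batch_tokens"]) - 14)


if __name__ == "__main__":
    start = {"batch_tokens": 65536}
    best, score, log = keep_or_revert_loop(
        start, toy_evaluate(start), halve_batch, toy_evaluate, max_experiments=4
    )
    for i, exp in enumerate(log, 1):
        print(i, exp.config, exp.score, "kept" if exp.kept else "reverted")
    print("best:", best, score)
```

In the setup from the post, the proposer role is played by claude -p and the evaluation is an actual training run. The stated throughput is also easy to sanity-check: 9PM to 6AM is 9 hours, so ~45 experiments per night works out to about 12 minutes per cycle, and 45 × 7 = 315 per week.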

My information consumption is now 1/4 X, 1/4 podcast interviews of the smartest practitioners, 1/4 talking to the leading AI models, and 1/4 reading old books. The opportunity cost of anything else is far too high, and rising daily.

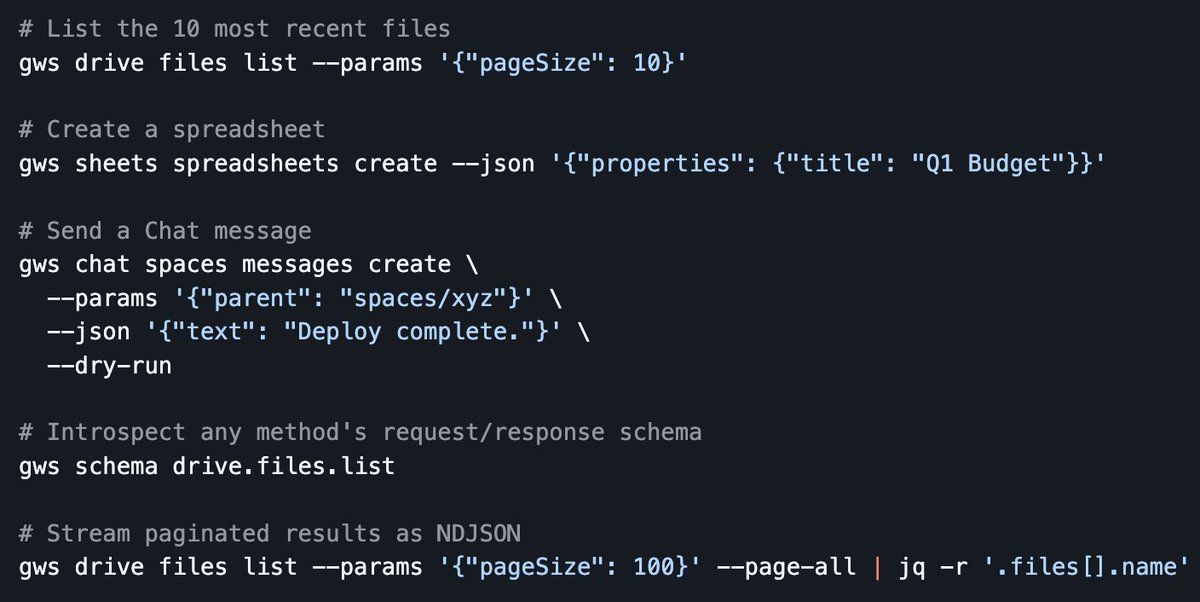

official google workspace cli!! github.com/googleworkspac…

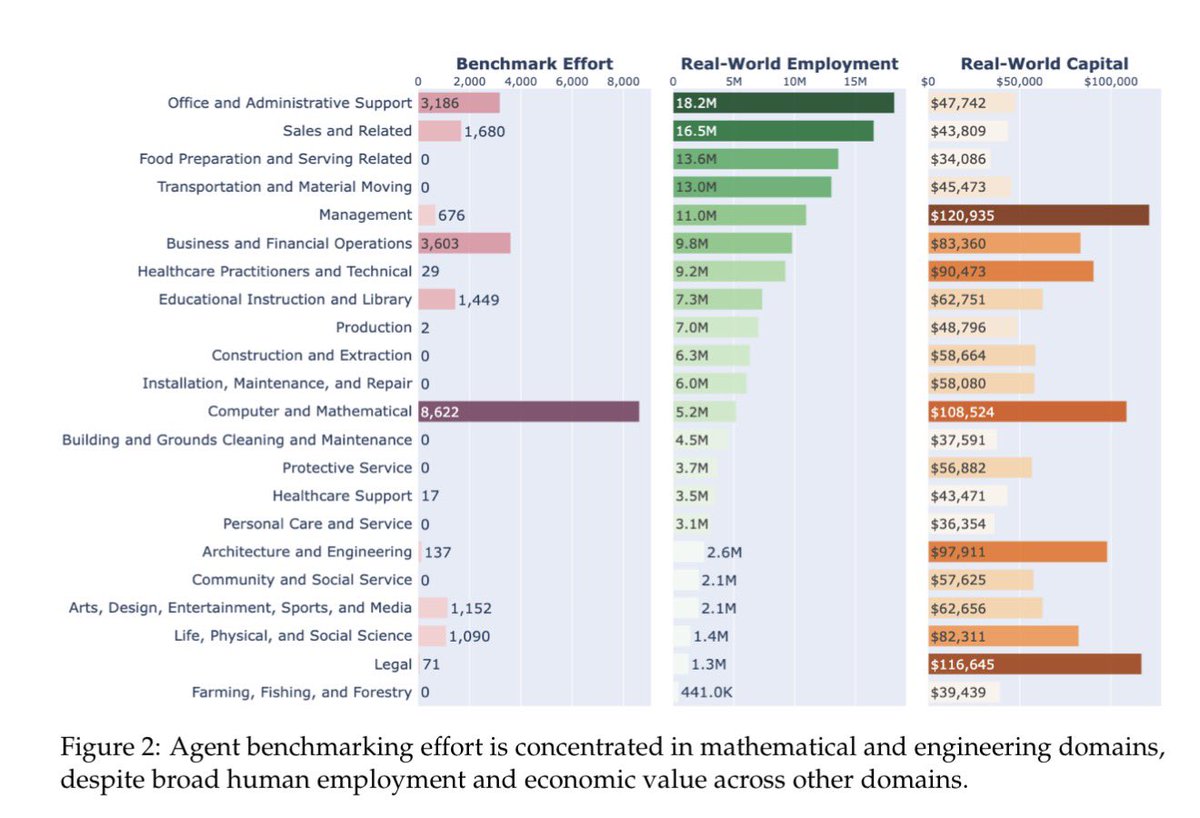

Model "shallowness" is a big deal in the time of AI agents. Models can be very good in narrow areas, but since they are shallow, they lack the context and reasoning to make good judgement calls when doing tasks. Once you are operating independently, being good at coding isn't enough.

From an AI user perspective, the four big leaps so far in ability:

1. GPT-3.5 (ChatGPT, November 2022)
2. GPT-4 (Spring 2023)
3. Reasoners (starts with o1-preview, but the real deal was o3, Spring 2025)
4. Workable agentic systems (Harness + good reasoner models, December 2025)

Dario Amodei: "It doesn't show the judgment that a human soldier would show." Anthropic CEO Dario Amodei just gave his most chilling examples of why he’s blocking the Pentagon from using Claude for autonomous weapons. His fear isn't just a "glitch" that causes friendly fire; it’s the terrifying concentration of power. Imagine an army of 10 million drones coordinated by a single person.