phil

112 posts

@PhilippeFlops

Classically trained and practicing mechanical engineer. Wannabe coder.

Codex team is aware of reports of GPT-5.5 performing worse for some users and investigating. We don't have anything conclusive yet and systems are healthy but we will share updates as we go.

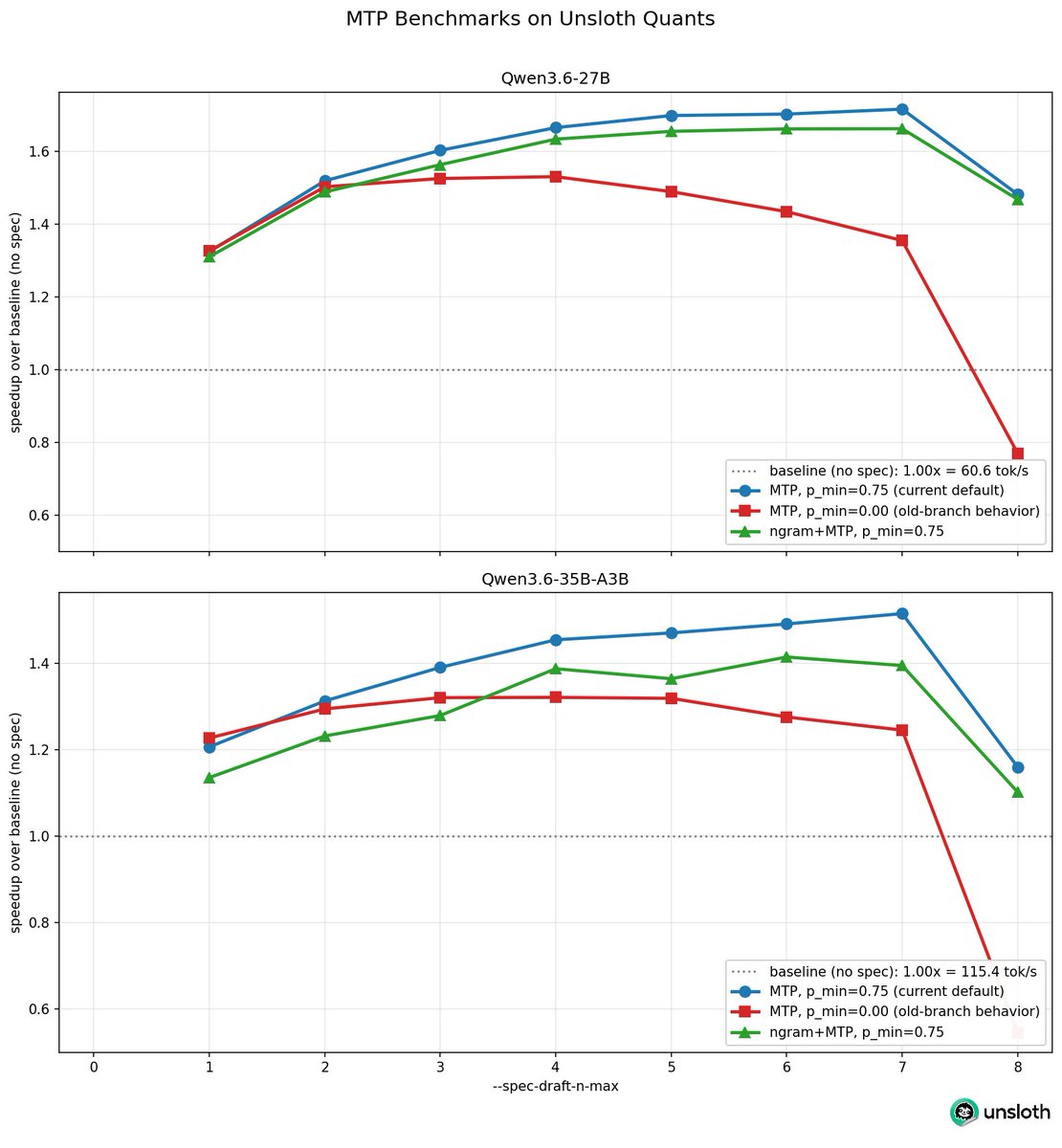

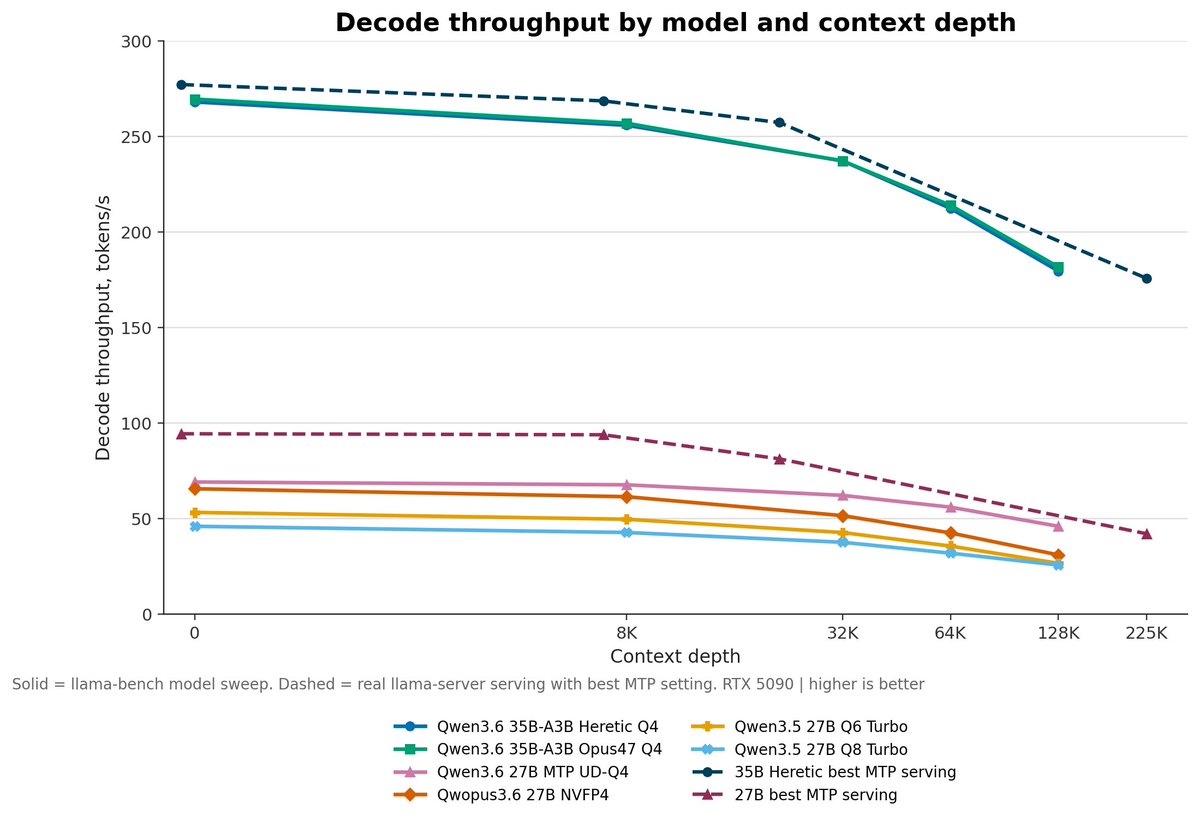

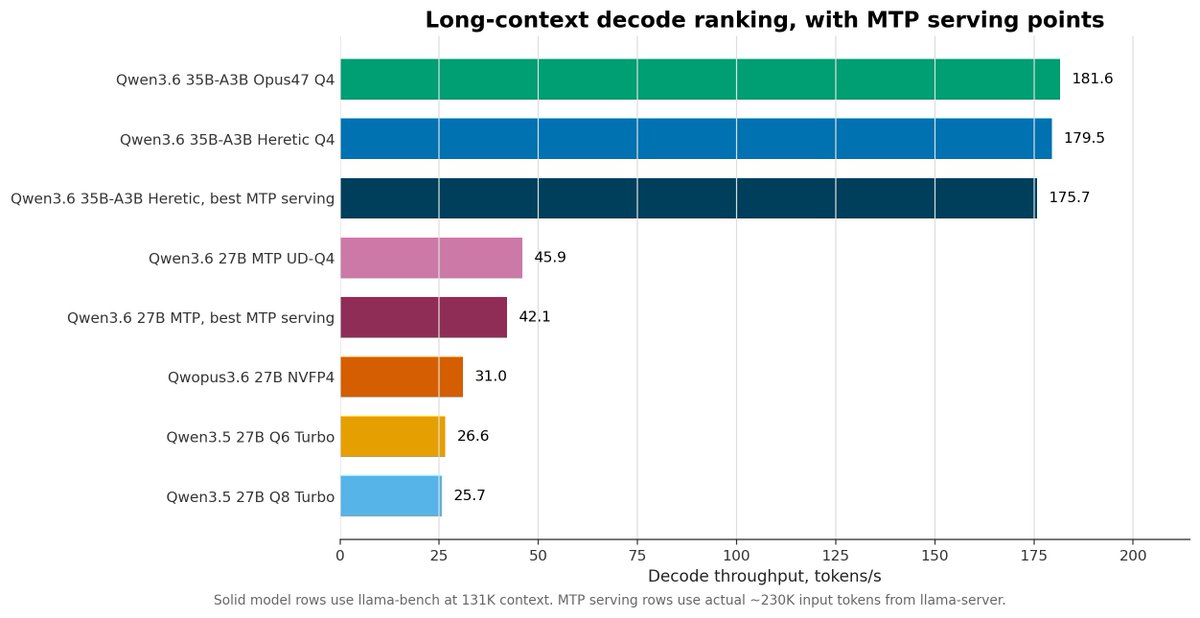

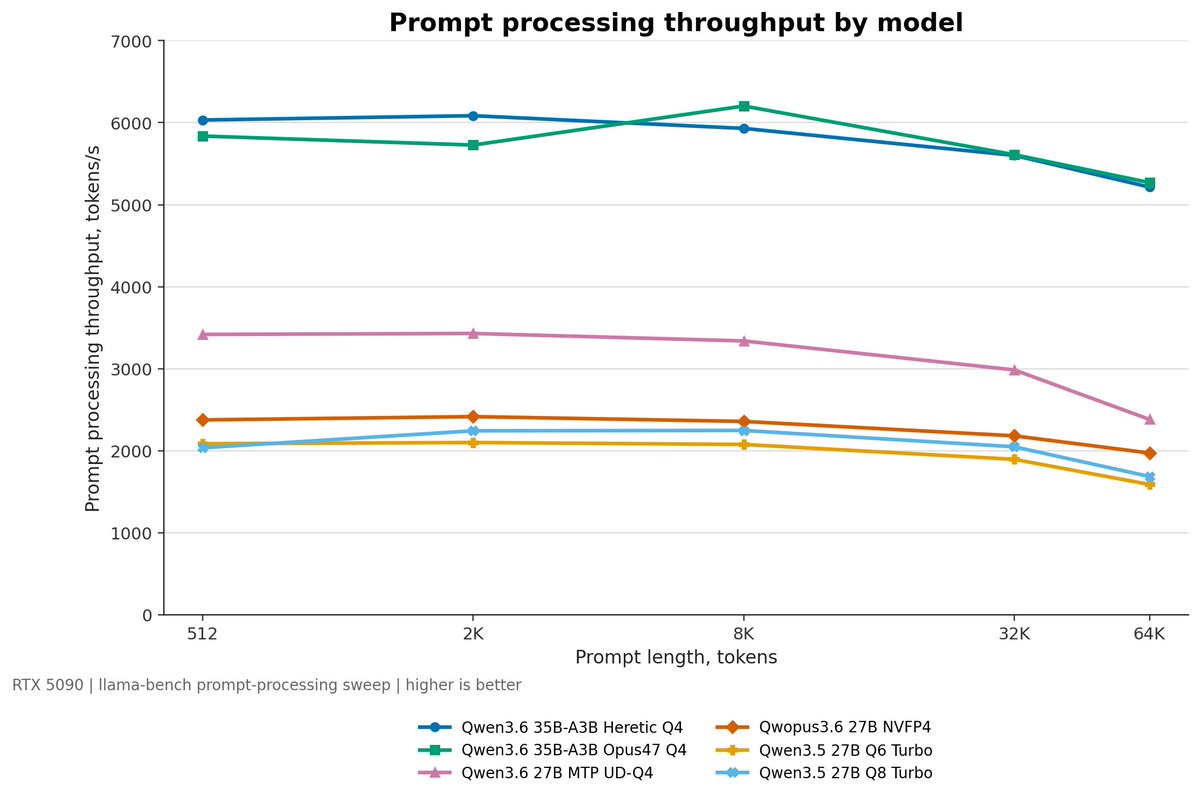

💪 Unsloth pushed Qwen3.6 MTP even further.
⚡ Qwen3.6 MTP models jumped from 1.4x → 1.8x faster in the last 2 days, thanks to a new llama.cpp update: --spec-draft-p-min 0.75 + --spec-type draft-mtp
They also raised --spec-draft-n-max from 2 → 6 for more aggressive drafting.
✅ Bigger speedups on local inference
✅ Still works with simple CLI flags
✅ New small MTP GGUFs released too (0.8B–9B)
Local Qwen just got quicker.

@robblackie Leasehold isn't the main issue. It's more about higher construction costs, high interest rates, the Building Safety Regulator (costs & delays), and the taxing of landlords and overseas buyers, which is depleting the market for flats.

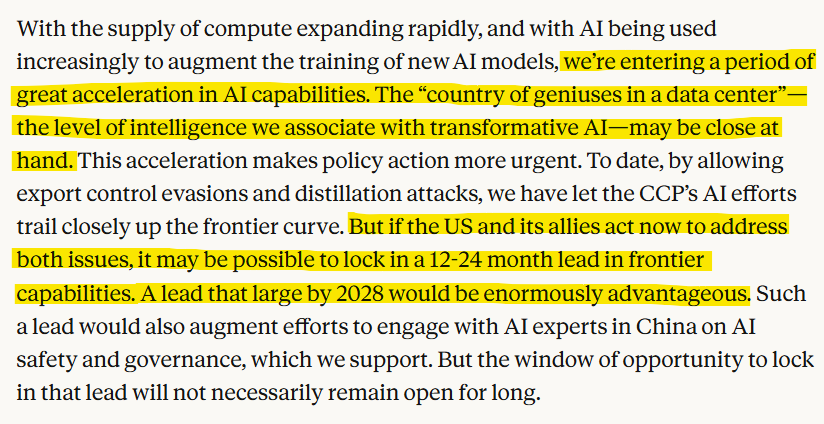

We've published a paper that explains our views on AI competition between the US and China. The US and democratic allies hold the lead in frontier AI today. Read more on what it’ll take to keep that lead: anthropic.com/research/2028-…

A preview for Pro users: a new personal finance experience in ChatGPT. Pro users in the U.S. can securely connect financial accounts, see where their money is going, and ask questions based on the information they choose to connect. Your full financial picture, now in ChatGPT.

Run @NousResearch's Hermes Agent fully locally on DGX Spark. 🚀 Our newest playbook shows you how to get set up via @Ollama step by step. 👇