Pierre Colombo

647 posts

@PierreColombo6

Omni-modal AI researcher. Creator of ColPali, BidirLM-Omni, EuroBERT & EuroLLM. Ex-Prof@ Centrale (Paris-Saclay) · Ex-CSO@ https://t.co/ncQ9gT1TzM (legaltech - SaulLM)

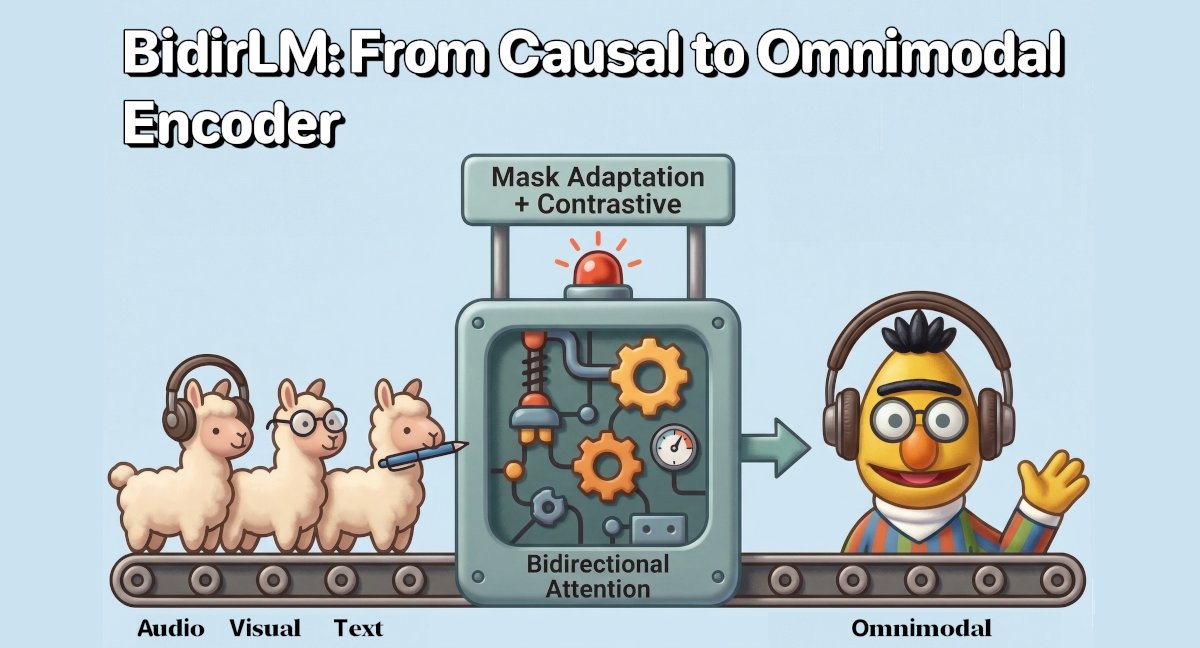

BidirLM-Omni-2.5B-Embedding is live: a single bidirectional encoder that embeds text, images, and audio into the same space! Three modalities, all in one 2048-dim space. 🧵

What’s inside the release: 🔌 Plug & play BERT-as-a-judge model: huggingface.co/collections/ar… 🛠️ Support to train your own custom evaluators: github.com/artefactory/BE… 📄 Study on the limits of lexical methods: arxiv.org/pdf/2604.09497

🎉 Second paper this month! Introducing BERT-as-a-Judge (x @gisship) ⚖️ Evaluating LLMs with rigid lexical methods often fails right answers due to bad formatting. While "LLM-as-a-Judge" solves this, it remains costly & slow. Our fix? A lightweight, encoder-driven approach.

🚀 New model family release with an OMNIMODAL version! After EuroBERT, I'm excited to introduce BidirLM, a family of 5 frontier bidirectional encoders including an OMNIMODAL encoder at just 2.5B parameters. 🧵👇 huggingface.co/BidirLM

There's a wave of omni embedding models (Gemini, Nemotron, BidirLM). Excited to support this trend with our multimodal MTEB versions (MIEB, MAEB) - video coming soon 🎥

Are membership inference attacks (MIAs) against LLMs rushing nowhere? 🏃 ➡️ In a new SoK, we look at how things have evolved recently, show popular evaluation setups to be flawed, and examine solutions going forward.