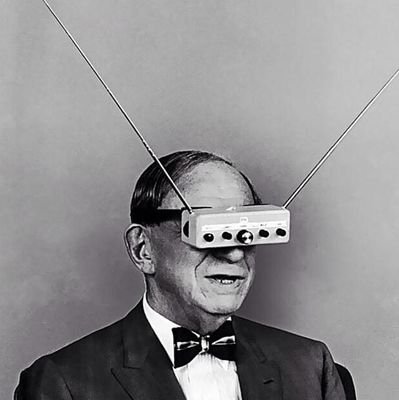

The level of access I have to information through LLMs would be inconceivable to my ancestors.

Not impressive.

Not convenient.

Inconceivable.

For most of human history, knowledge was locked behind distance, class, language, institutions, memory, and luck.

Now I can ask a machine to cross-map fields before breakfast.

The danger is thinking access means wisdom.

It doesn’t.
