❖Prisma Dimensional❖


@PrismaDimens

🔥 Code geek | 🚀 Billions of iterations in C for fun | 💻 Linux | 🤖 Dreaming of quantum ASICs while laughing & creating wild AI models.

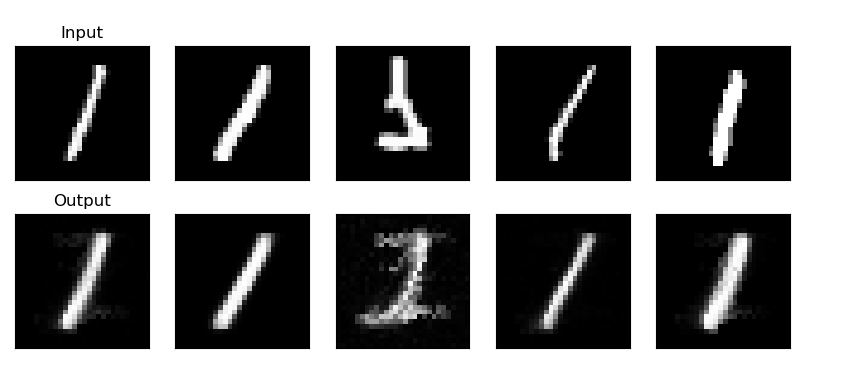

Been experimenting with procedural locomotion on #GaussianSplat environments from @theworldlabs. Built a little multi-legged robot in Unity that raycasts against the splat data to figure out where to place its feet, no meshes or colliders involved... Pretty fun to see it climb surfaces and walk on walls, and I can set a weird number of legs on the fly :) Still rough around the edges, but pretty fun to watch it figure things out. #GaussianSplatting #Unity3D #WorldLabs #ProceduralAnimation
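The post doesn't say how the raycast against splat data works, so here is one hypothetical way to do it. It treats each splat as an isotropic Gaussian density blob (real Gaussian splats carry a full 3D covariance and color) and marches along the ray until the summed density crosses a threshold, which stands in for a "surface" hit. The names `Splat`, `density`, `raycast_splats`, `place_foot` and the threshold and step values are all illustrative assumptions, not the author's Unity code.

```python
import math
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class Splat:
    """Simplified splat: isotropic Gaussian (real splats use a full 3D covariance)."""
    center: Vec3
    sigma: float    # standard deviation, same along every axis (assumption)
    opacity: float  # peak contribution at the center


def density(p: Vec3, splats: Sequence[Splat]) -> float:
    """Sum of every splat's Gaussian falloff evaluated at point p."""
    total = 0.0
    for s in splats:
        d2 = sum((a - b) ** 2 for a, b in zip(p, s.center))
        total += s.opacity * math.exp(-0.5 * d2 / (s.sigma ** 2))
    return total


def raycast_splats(origin: Vec3, direction: Vec3, splats: Sequence[Splat],
                   max_dist: float = 10.0, step: float = 0.01,
                   threshold: float = 0.5) -> Optional[Vec3]:
    """March along the ray; return the first sample whose density reaches threshold."""
    length = math.sqrt(sum(c * c for c in direction))
    d = tuple(c / length for c in direction)
    n_steps = int(max_dist / step)
    for i in range(n_steps + 1):
        t = i * step  # integer stepping avoids accumulating float error
        p = tuple(o + t * c for o, c in zip(origin, d))
        if density(p, splats) >= threshold:
            return p
    return None  # no "surface" within max_dist


def place_foot(hip: Vec3, down: Vec3, splats: Sequence[Splat],
               leg_length: float) -> Optional[Vec3]:
    """Foot target: first density hit along the leg's local down axis, if within reach."""
    return raycast_splats(hip, down, splats, max_dist=leg_length)


if __name__ == "__main__":
    # Toy floor: a 9x9 grid of splats lying in the y = 0 plane.
    floor = [Splat((x * 0.25, 0.0, z * 0.25), 0.1, 1.0)
             for x in range(-4, 5) for z in range(-4, 5)]
    print(place_foot((0.0, 1.0, 0.0), (0.0, -1.0, 0.0), floor, leg_length=1.5))
```

Because `place_foot` casts along whatever `down` vector it is given, the same call works for wall-walking if each leg uses the body's local down axis rather than world gravity. Brute-force summing over every splat is only viable for toy scenes; a real scene with millions of splats would need a spatial acceleration structure.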

Software horror: litellm PyPI supply chain attack. A simple `pip install litellm` was enough to exfiltrate SSH keys, AWS/GCP/Azure creds, Kubernetes configs, git credentials, env vars (all your API keys), shell history, crypto wallets, SSL private keys, CI/CD secrets, and database passwords. LiteLLM itself has 97 million downloads per month, which is already terrible, but much worse, the contagion spreads to any project that depends on litellm. For example, if you did `pip install dspy` (which depended on litellm>=1.64.0), you'd also be pwned. Same for any other large project that depended on litellm. Afaict the poisoned version was up for less than ~1 hour. The attack had a bug that led to its discovery: Callum McMahon was using an MCP plugin inside Cursor that pulled in litellm as a transitive dependency. When litellm 1.82.8 installed, their machine ran out of RAM and crashed. So if the attacker hadn't vibe coded this attack, it could have gone undetected for many days or weeks. Supply chain attacks like this are basically the scariest thing imaginable in modern software. Every time you install any dependency, you could be pulling in a poisoned package anywhere deep inside its entire dependency tree. This is especially risky with large projects that might have lots and lots of dependencies. The credentials stolen in each attack can then be used to take over more accounts and compromise more packages. Classical software engineering would have you believe that dependencies are good (we're building pyramids from bricks), but imo this has to be re-evaluated, and it's why I've grown increasingly averse to them, preferring to use LLMs to "yoink" functionality when it's simple enough and possible.
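The "contagion" point is about transitive dependencies: anything that reaches litellm through any chain of requirements pulls it in too. As a rough sketch (not an official audit tool), the standard library's `importlib.metadata` can read each installed distribution's `Requires-Dist` entries, and a breadth-first walk over the reversed graph lists every installed package that would drag in a given one. The helper names `normalize`, `requirement_name`, `installed_dependency_graph` and `dependents_of` are hypothetical; the graph over-approximates because optional extras and environment markers are not evaluated, and it only sees what is already installed.

```python
import re
from collections import deque
from importlib import metadata
from typing import Dict, Optional, Set

_NAME_RE = re.compile(r"[A-Za-z0-9][A-Za-z0-9._-]*")


def normalize(name: str) -> str:
    """PEP 503 name normalization: 'My_App' and 'my-app' compare equal."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(req: str) -> Optional[str]:
    """Pull the project name off a requirement string such as 'litellm>=1.64.0'."""
    m = _NAME_RE.match(req.strip())
    return normalize(m.group(0)) if m else None


def installed_dependency_graph() -> Dict[str, Set[str]]:
    """Map each installed distribution to the names in its Requires-Dist metadata.

    Over-approximates: optional extras and environment markers are not evaluated.
    """
    graph: Dict[str, Set[str]] = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if not name:
            continue  # skip distributions with broken metadata
        deps = graph.setdefault(normalize(name), set())
        for req in dist.requires or []:
            dep = requirement_name(req)
            if dep:
                deps.add(dep)
    return graph


def dependents_of(target: str, graph: Dict[str, Set[str]]) -> Set[str]:
    """Every package that reaches `target` through one or more dependency edges."""
    target = normalize(target)
    reverse: Dict[str, Set[str]] = {}  # dependency -> packages that require it
    for pkg, deps in graph.items():
        for dep in deps:
            reverse.setdefault(normalize(dep), set()).add(normalize(pkg))
    seen: Set[str] = set()
    queue = deque([target])
    while queue:  # breadth-first walk up the reversed edges
        for parent in reverse.get(queue.popleft(), ()):
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    seen.discard(target)  # a dependency cycle could lead back to the target itself
    return seen


if __name__ == "__main__":
    # Which installed packages would pull in litellm, directly or transitively?
    print(sorted(dependents_of("litellm", installed_dependency_graph())))
```

To see which litellm version, if any, is actually installed, `importlib.metadata.version("litellm")` returns the version string or raises `PackageNotFoundError`.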

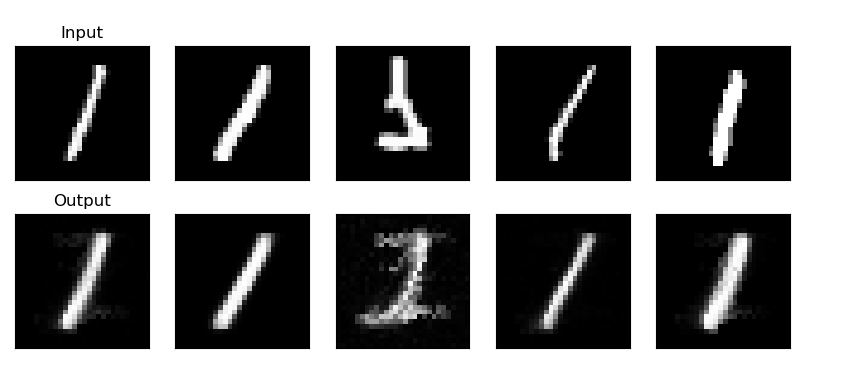

You can now enable Claude to use your computer to complete tasks. It opens your apps, navigates your browser, fills in spreadsheets—anything you'd do sitting at your desk. Research preview in Claude Cowork and Claude Code, macOS only.

We just open-sourced K-Dense BYOK, your own AI research assistant, running locally with your API keys. 170+ scientific skills. 250+ databases. 40+ models. Scalable compute via @modal when you need it. No subscriptions. No lock-in. Data stays on your computer. Repost, star and try it now: github.com/K-Dense-AI/k-d…

To pause or slow intelligence is to commit societal suicide. To accelerate intelligence is to commit societal abundance.

No, that’s just the little advanced technology fab, where we will be iterating on chip designs. We couldn’t possibly fit the Terafab on the GigaTexas campus. It will be far bigger than everything else combined there. Several locations for Terafab are under consideration. It needs thousands of acres and over 10GW of power at full scale.