Pritam


You can now enable Claude to use your computer to complete tasks. It opens your apps, navigates your browser, fills in spreadsheets—anything you'd do sitting at your desk. Research preview in Claude Cowork and Claude Code, macOS only.

@0xlelouch_ I am a founding engineer at realx and thus work on their entire investment product from scratch. Not sure how to say that without sounding braggy.

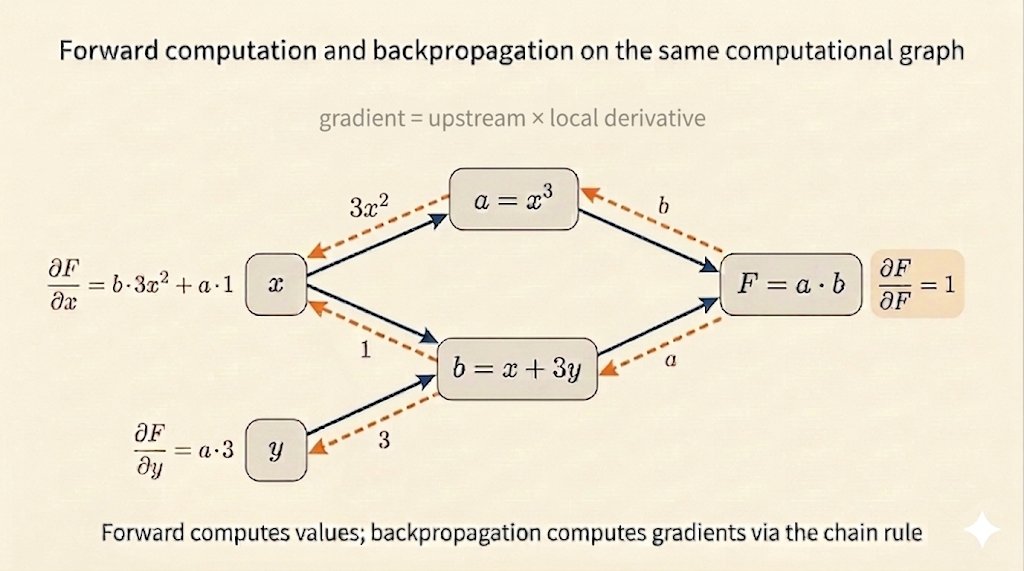

🚀 MIT Flow Matching and Diffusion Lecture 2026 Released (diffusion.csail.mit.edu)!

We just released our new MIT 2026 course on flow matching and diffusion models! We teach the full stack of modern AI image, video, and protein generators, covering both theory and practice. We include:

📺 Videos: Step-by-step derivations.

📝 Notes: Mathematically self-contained lecture notes.

💻 Coding: Hands-on exercises for every component.

We thoroughly improved last year's iteration and added new topics: latent spaces, diffusion transformers, and building language models with discrete diffusion models.

Everything is available here: diffusion.csail.mit.edu

A huge thanks to Tommi Jaakkola for his support in making this class possible and Ashay Athalye (MIT SOUL) for the incredible production! Was fun to do this with @RShprints!

#MachineLearning #GenerativeAI #MIT #DiffusionModels #AI