Gmonad, milady.

Reckless ⨀

6.5K posts

@Rcklss27

Si Vis Pacem, Para Bellum • prev @exodus • buidl on @monad

Gmonad, milady.

Prove with @SuccinctLabs Teach @SuccinctLabs Tweet @SuccinctLabs Bull post @SuccinctLabs Discuss @SuccinctLabs SUCCINCT WILL SUCCEED. Gprove.

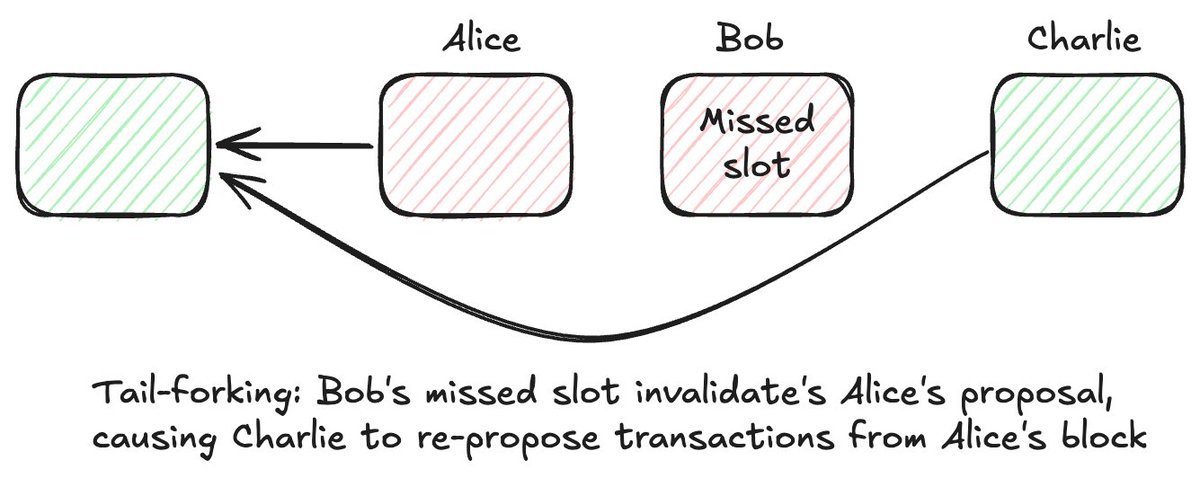

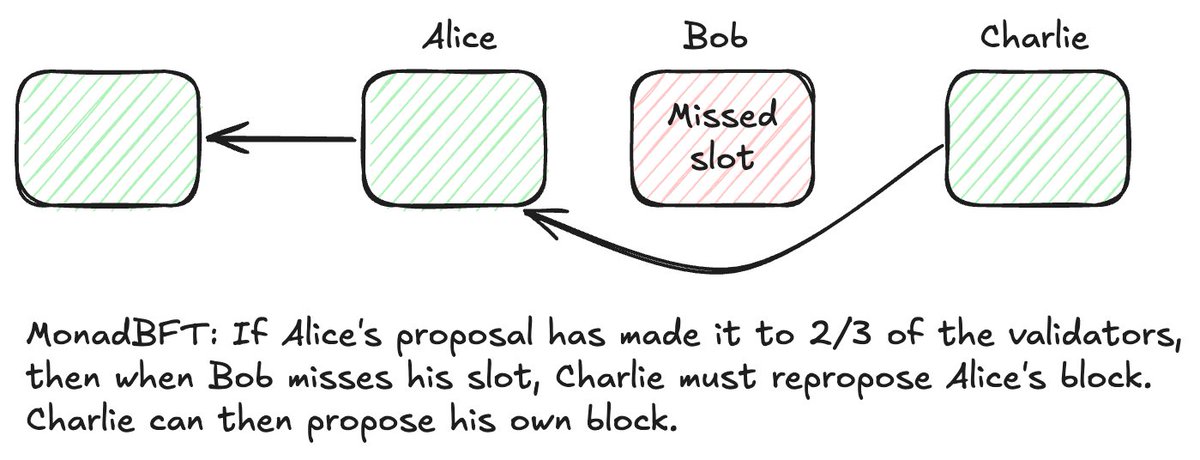

How Monad Works Summary / Network Parameters - Monad is EVM bytecode-equivalent (you can redeploy bytecode without recompilation) - Cancun fork (TSTORE, TLOAD, MCOPY) is supported - Opcode to gas units mapping is same as Ethereum (e.g. ADD is 4) - RPC conforms to geth's RPC interface - Blocks are every 500 ms - Finality occurs in 1 second; finality of block N occurs at the proposal of block N+2 - Block gas limit in testnet is 150 million gas, i.e. gas rate is 300 million gas/s. This will increase over time - 100-200 validators expected in consensus - on Day 1 of testnet, Monad will have about 55 globally-distributed validators Frugality / Impact on Decentralization The driving goal of Monad is to have better software algorithms for consensus and execution, offering high performance while preserving decentralization These algorithms deliver high performance while relying on nodes with relatively modest hardware: - 32 GB of RAM - 2x 2 TB SSDs - 100 Mbps of bandwidth - a 16-core 4.5 GHz processor like the AMD Ryzen 7950X You can assemble this machine for about $1500 These algorithms deliver high performance while maintaining a fully-globally-distributed validator set and stake weight distribution There isn’t a reliance on a supermajority in one geographic region - one would think this is an obvious expectation but many “high-performance” L1s actually derive their performance from having a supermajority of stake weight in close proximity Node Monad node has 3 components: - monad-bft [consensus] - monad-execution [execution + state] - monad-rpc [handling user reads/writes] - Network is 100-200 voting nodes (we’ll call them “validators” for the rest of this doc) - Non-voting full nodes listen to network traffic - All nodes execute all transactions and have full state Consensus Mechanism Overall consensus mechanism is MonadBFT. MonadBFT has linear communication complexity which allows it to scale to far more nodes than quadratic-complexity algorithms like CometBFT In the happy path, it follows the pattern of “one-to-many-to-one” or “fan out, fan in”: - Leader (Alice) broadcasts a signed block proposal to all other nodes (fan out), who acknowledge its validity by sending a signed attestation the next leader Bob (fan in). - Bob aggregates the attestations into a “Quorum Certificate” (QC) - Attestation signatures use the BLS signature scheme for ease of aggregation - Bob broadcasts the QC to all the nodes, who attest to receiving it by sending a message to the 3rd leader (Charlie) who aggregates the attestations into a QC-on-QC - Charlie sends the QC-on-QC to everyone. Upon receiving the QC-on-QC, everyone knows that Alice’s block has been finalized In the above story, Bob and Charlie are only sending out QCs or QCs-on-QCs, but in reality the proposals are pipelined: - Bob’s message contains both the QC for Alice’s block and also the contents of a new block. - Charlie’s message contains the QC for Bob’s block (which is a QC-on-QC for Alice’s block) and also contains the transactions for a new block When validators send an attestation for Bob’s message they are attesting to both the validity of Bob’s block and the validity of the QC This pipelining raises the throughput of the network since every slot a new block gets produced. The below diagram shows how MonadBFT reaches consensus. Pipelining is tracked at the top: See the docs for a fuller description. Obvious questions addressed there are: - How the network handles the unhappy path where Bob doesn’t get enough a supermajority of attestations - How the above mechanism results in nodes being sure that the block has been finalized once they have received the QC-on-QC RaptorCast MonadBFT requires the leader to directly send blocks to every validator However, blocks may be quite large: 10,000 transactions/s * 200 bytes/tx = 2 MB/s. Sending directly to 200 validators would require 400 MB/s (3.2 Gbps). We don’t want validators to have to have such high upload bandwidth RaptorCast is a specialized messaging protocol which solves this problem In RaptorCast, a block is erasure-coded to produce a bunch of smaller chunks In erasure coding, the total size of all of the chunks is greater than the original data (by a multiplicative factor) but the original data can be restored using (almost) any combination of chunks whose total size matches the original data’s size For example, a 1000 kb block erasure-coded with a multiplicative factor of 3 might produce 150 20kb chunks, but (roughly) any 50 of the chunks can reassemble the original message RaptorCast uses a variant of Raptor codes as the encoding mechanism In RaptorCast, each chunk is sent to one validator who is tasked with sending the chunk to every other validator in the network That is, each chunk follows a two-level broadcast tree where the leader is the root, one other validator is at the first level, and all other validators are on the second level Validators are assigned chunks prorata to their stake weight Here's a diagram showing the RaptorCast protocol: each validator serves as a first-hop recipient for a range of chunks, and broadcasts those chunks to every other validator: Raptorcast properties: - Using the two-level broadcast tree ensures that message delivery occurs within 2x the longest hop - Upload bandwidth for the leader is limited to the block size times the replication factor (roughly 2) - Since chunks are assigned pro-rata to stake weight, and BFT assumes no more than 33% of stake is malicious, at most 33% of chunks could fail to reach their recipients. With a replication factor of 2x, nodes can reconstruct the original block despite a maximum 33% loss. Transaction Lifecycle - User submits pending transaction to RPC node - RPC node sends pending transaction to next 3 leaders based on the leader schedule - Pending transaction gets added to those leaders’ local mempools - Leader adds transaction to their block as they see fit [default: they order by descending fee-per-gas-unit, i.e. Priority Gas Auction] - Leader proposes block, which is confirmed by the network as mentioned above Note: directly forwarding to upcoming leaders (as opposed to flood forwarding to all nodes) greatly reduces traffic. Flood forwarding would take up the entire bandwidth Note: in the future, a behavior is being considered where leaders forward pending transactions (that they weren’t able to include in their block) to the next leader Leader Election - Leaders in the current testnet are permissioned. Staking will be added shortly - An epoch occurs roughly once per day. Validator stake weights are locked in one epoch ahead (i.e. any changes for epoch N+1 must be registered prior to the start of epoch N) - At the start of each epoch, each validator computes the leader schedule based on running a deterministic pseudorandom function on the stake weights. Since the function is deterministic everyone arrives at the same leader schedule Asynchronous Execution Monad pipelines consensus and execution, moving execution out of the hot path of consensus into a separate swim lane and allowing execution to utilize the full block time. - Consensus is reached prior to execution - Leader & validators check transaction validity (valid signature; valid nonce; submitter can pay for the data cost of the transaction being transmitted), but are not required to execute the transactions prior to voting. - After a block is finalized, it is executed; meanwhile consensus is already proceeding on subsequent blocks This is in contrast to most blockchains, which have interleaved execution. One way to understand the impact of asynchronous execution is to recognize that, in interleaved execution, the execution budget is necessarily a small fraction of the block time since in interleaved execution, the leader must execute the transactions before proposing the block, and validators must execute before responding For a 500 ms block time, almost all of the time will be budgeted for multiple rounds of cross-globe communication, leaving only a small fraction of the time for execution The below diagram contrasts interleaved execution with asynchronous execution. Blue rectangles correspond to time spent on execution while orange rectangles correspond to time spent on consensus. The budget for execution is much larger in async execution. Delayed merkle root Due to async execution, Monad block proposals don’t include the merkle root of the state trie, since that would require execution to have already completed. All nodes should stay in sync because they’re all starting from the same point and doing the same work. But it’d be nice to be sure! As a precaution, proposals in Monad also include a delayed merkle root from D blocks ago, allowing nodes to detect if they have diverged. D is a systemwide parameter, currently set to 3. If any of the validators makes a computation error (cosmic rays?) when computing the state root at block N, it will realize that it possibly erred by block N+D (since the delayed merkle root for N contained in that block differs from its local view). The validator then needs to wait until N+D+2 to see if 2/3 of the stake weight finalizes the block proposal at N+D (in which case the local node made an error) or if the block gets rejected (in which case the leader made the error). Block stages Assume that a validator has just received block N. We say that: - Block N is ‘proposed’ - Block N-1 is ‘voted’ - Block N-2 is ‘finalized’ (because block N carries the QC-on-QC of block N-2) - Block N-2-D is ‘verified’ (because block N-2 carries the merkle root post the transactions in block N-2-D, and block N-2 is the last block that has been finalized) Note that unlike Ethereum, only one block at height N is proposed and voted on, avoiding retroactive block reorganization due to competing forks Speculative execution Although only block N-2 is ‘finalized’ and can officially be executed, nodes have a strong suspicion that the lists of transactions in block N-1 and block N are likely to become the finalized lists Therefore, nodes speculatively execute the transactions included in each new proposed block, storing a pointer to the state trie post those transactions. In the event that a block ends up not being finalized, the pointer is discarded, undoing the execution Speculative execution allows nodes to (likely) have the most up-to-date state, which helps users simulate transactions correctly Optimistic parallel execution Like in Ethereum, blocks are linearly ordered, as are transactions. That means that the true state of the world is the state arrived at by executing all transactions one after another In Monad, transactions are executed optimistically in parallel to generate pending results. A pending result contains the list of storage slots that were read (SLOADed) and written (SSTOREd) during the course of that execution. We refer to these slots as “inputs” and “outputs” Pending results are committed serially, checking that each pending result’s inputs are still valid, and re-executing if an input has been invalidated. This serial commitment ensures that the result is the same as if the transactions were executed serially Here's an example of how Optimistic Parallel Execution works: Assume that prior to the start of a block, the following are the USDC balances: - Alice: 1000 USDC - Bob: 0 USDC - Charlie: 400 USD (Note also that each of these balances corresponds to 1 storage slot, since each is 1 key-value pair in a mapping in the USDC contract.) Two transactions appear as transaction 0 and 1 in the block: - Transaction 0: Alice sends 100 USDC to Bob - Transaction 1: Alice sends 100 USDC to Charlie Then optimistic parallel execution will produce two pending results: - PendingResult0: * Inputs: Alice = 1000 USDC, Bob = 0 USDC * Outputs: Alice = 900 USDC; Bob = 100 USDC - PendingResult 1: * Inputs: Alice = 1000 USDC; Charlie = 400 USDC * Outputs: Alice = 900 USDC, Charlie = 500 USDC When we go to commit these pending results: - PendingResult 0 is committed successfully, changing the official state to Alice = 900, Bob = 100, Charlie = 400 - PendingResult 1 cannot be committed because now one of the inputs conflicts (Alice was assumed to have 1000, but actually has 900) So transaction 1 is re-executed Final result: - Alice: 800 USDC - Bob: 100 USDC - Charlie: 500 USDC Note that in optimistic parallel execution, every transaction gets executed at most twice - once optimistically, and (at most) once when it is being committed Re-execution is typically cheap because storage slots are usually in cache. It is only when re-execution triggers a different codepath (requiring a different slot) that execution has to read a storage slot from SSD MonadDb As in Ethereum, state is stored in a merkle trie. There is a custom database, MonadDb, which stores merkle trie data natively This differs from existing clients [which embed the merkle trie inside of a commodity database which itself uses a tree structure] MonadDb is a significant optimization because it eliminates a level of indirection, reduces the number of pages read from SSD in order to perform one lookup, allows for async I/O, and allows the filesystem to be bypassed State access [SLOAD and SSTORE] is the biggest bottleneck for execution, and MonadDb is a significant unlock for state access because: - it reduces the number of iops to read or write one value - it makes recomputing the merkle root a lot faster - and it supports many parallel reads which the parallel execution system can take advantage of Synergies between optimistic parallel execution and MonadDb Optimistic parallel execution can be thought of as surfacing many storage slot dependencies – all of the inputs and outputs of the pending results – in parallel and pulling them into the cache Even in the worst case where every pending result’s inputs are invalidated and the transaction has to be re-executed, optimistic parallel execution is still extremely useful by “running ahead” of the serial commitment and pulling many storage slots from SSD This makes optimistic parallel execution and MonadDb work really well together, because MonadDb provides fast asynchronous state lookups while optimistic parallel execution cues up many parallel reads from SSD Bootstrapping a node (Statesync/Blocksync) High throughput means a long transaction history which makes replaying from genesis challenging Most node operators will prefer to initialize their nodes by copying over recent state from other nodes and only replaying the last mile. This is what statesync accomplishes In statesync, a synchronizing node (“client”) provides their current view’s version and a target version and asks other nodes (“servers”) to help it progress from the current view to the target version MonadDb has versioning on each node in the trie. Servers use this version information to identify which trie components need to be sent Nodes can also request blocks from their peers in a protocol called blocksync. This is used if a block is missed (not enough chunks arrived), as well as when executing the “last mile” after statesync completes (since more blocks will have come in since the start of statesync) Thanks for reading and be sure to check out the docs

Monad Testnet is live. Try it out here: testnet.monad.xyz