Yuxuan Zhang

24 posts

@ReacherZhang

AI Researcher @VectorInst @UBC CS PhD Student @UBC, CS MS @UofT, Bachelor in Economics @PKU1898

Introducing: Free Tier for Browser Use Cloud 🚀

We’re giving all agents their own cloud browsers!

\> Unlimited browser hours
\> Free proxies
\> Persistent authentication

Let your agents try for free ↓🔗

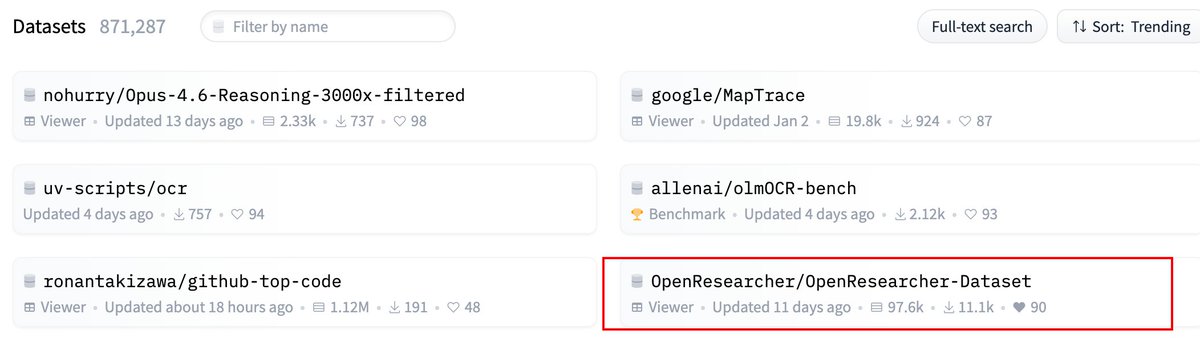

Meet OpenResearcher-30B: a specialized 30B-parameter model fine-tuned for academic and research tasks. It's designed to help researchers, students, and knowledge workers process complex information. This isn't just another general chatbot.

110+ employees and alums of top-5 AI companies just published an open letter supporting SB 1047, aptly called the "world's most controversial AI bill." 3-dozen+ of these are current employees of companies opposing the bill. Check out my coverage of it in the @sfstandard 🧵