Pinned Tweet

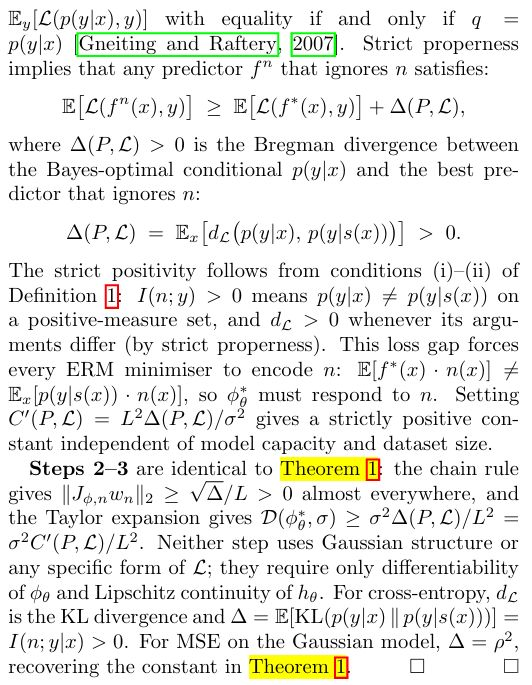

Ultimate Neural Network Programming with Python is doing quite well in the market. Please take a look.

Amazon International

👉rb.gy/xc8m46

Amazon India

👉rb.gy/aqdqei

#AI #GPT #ChatGPT #AIBook #books #DataScience #ArtificialIntelligence #LLMs #LLM #Data
