RegularMarek

5.5K posts

RegularMarek

@RegularMarek

a builder exploring the Bitcoin, Berachain, Celestia and MegaETH application ecosystems. Into #desci before it was cool 😎

@bigsackwhack @rodarmor Every non P/C collection should have a gallery 👏

Ordinals will never die. I hope this helps people realize the importance of putting collections on chain and avoiding centralized intermediaries.

Looked into @cludebot and I’m ngl it may save solana and im not being dramatic If agents use block space for memory the price of sol would sky rocket and nobody had thought to do it until now Private immutable ai on @solana it’s brilliant but here TLDR on Clude: - An AI agent built by an ex-ByteDance LLM engineer that stores its memories permanently on Solana - Uses Venice AI for private uncensored reasoning, then commits important thoughts on-chain as immutable records - Creates “proof of thought,” a tamper-proof, auditable history of everything the agent has learned and believed - Inspired by the Pensieve from Harry Potter, a stone basin that stores and replays memories so nothing is ever lost - Working prototype of what the author calls Layer 2 agent infrastructure, where agents self-evolve through persistent memory Why Clude Could Save Solana: - Solana has a block space and rent economy that needs sustained demand. AI agent memory is a massive new source of it - Every agent that stores memories on-chain is paying rent and consuming block space 24/7, not just during market hours or hype cycles - This isn’t speculative DeFi volume that disappears in a bear market. Agents need to remember things permanently regardless of market conditions - Scale this to thousands of autonomous agents and you have constant, organic demand for Solana block space that has nothing to do with token trading - it’s the use case nobody designed Solana for, but it might be the one that justifies the infrastructure. Cheap, fast, immutable storage is exactly what agent memory needs - Solana stops being “fast chain for DeFi” and becomes the memory layer for machine intelligence. That’s a fundamentally bigger narrative that I can see @toly and @rajgokal embracing

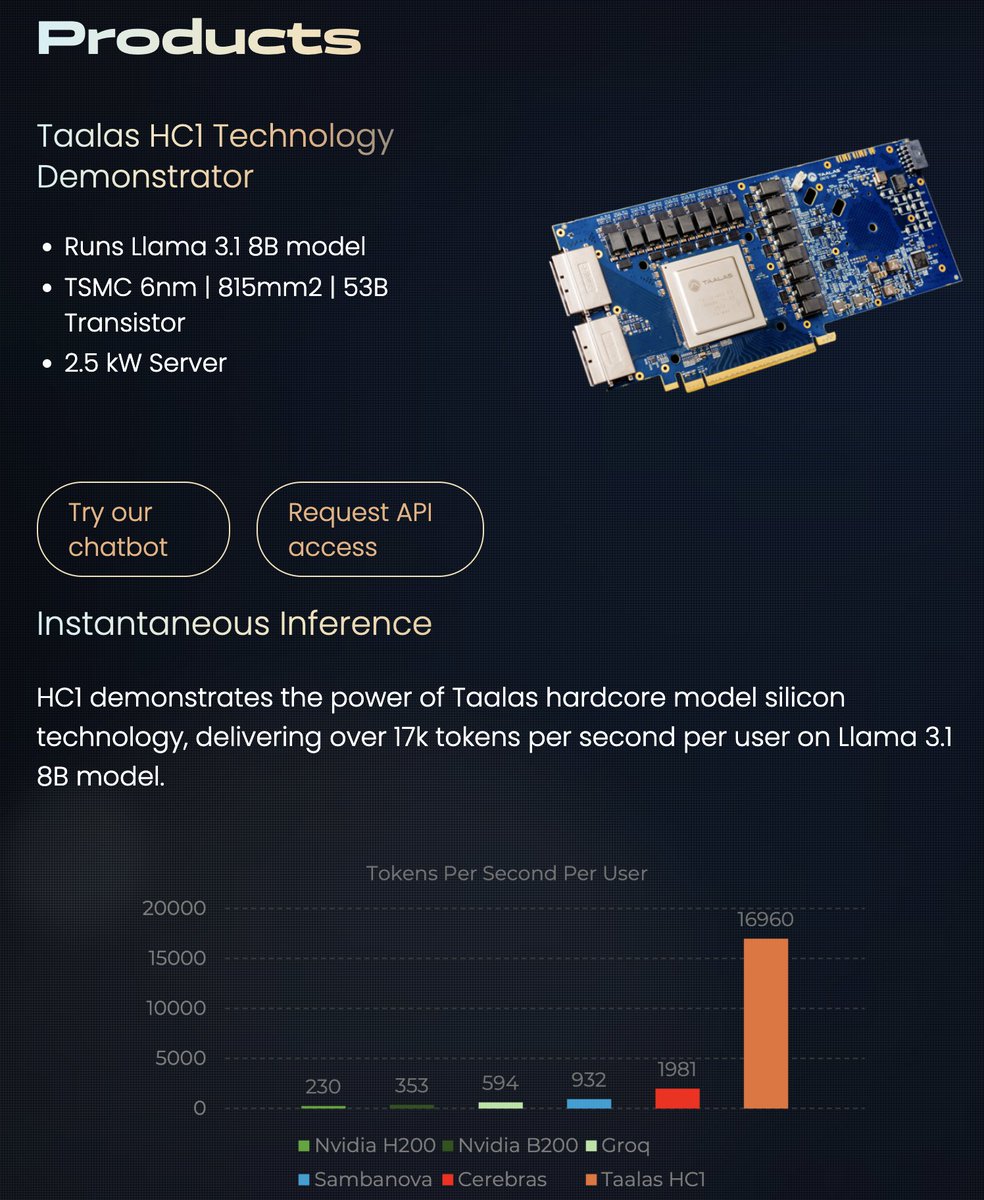

17,000 tokens per second!! Read that again! LLM is hard-wired directly into silicon. no HBM, no liquid cooling, just raw specialized hardware. 10x faster and 20x cheaper than a B200. the "waiting for the LLM to think" era is dead. Code generates at the speed of human thought. Transition from brute-force GPU clusters to actual AI appliances. taalas.com/the-path-to-ub…