you can outsource your thinking but you cannot outsource your understanding

Reuben Marks


@ReubenMarks5

exploring possibility space - currently building GoodMeals, a universal recipe/meal-plan generator.


"A decade ago, AI was supposed to replace radiologists. Today, radiologists make more than $500,000 per year, and their employment continues to grow, see chart below. Reading scans is a task, not a job, and when the task gets cheaper, demand for the job grows."

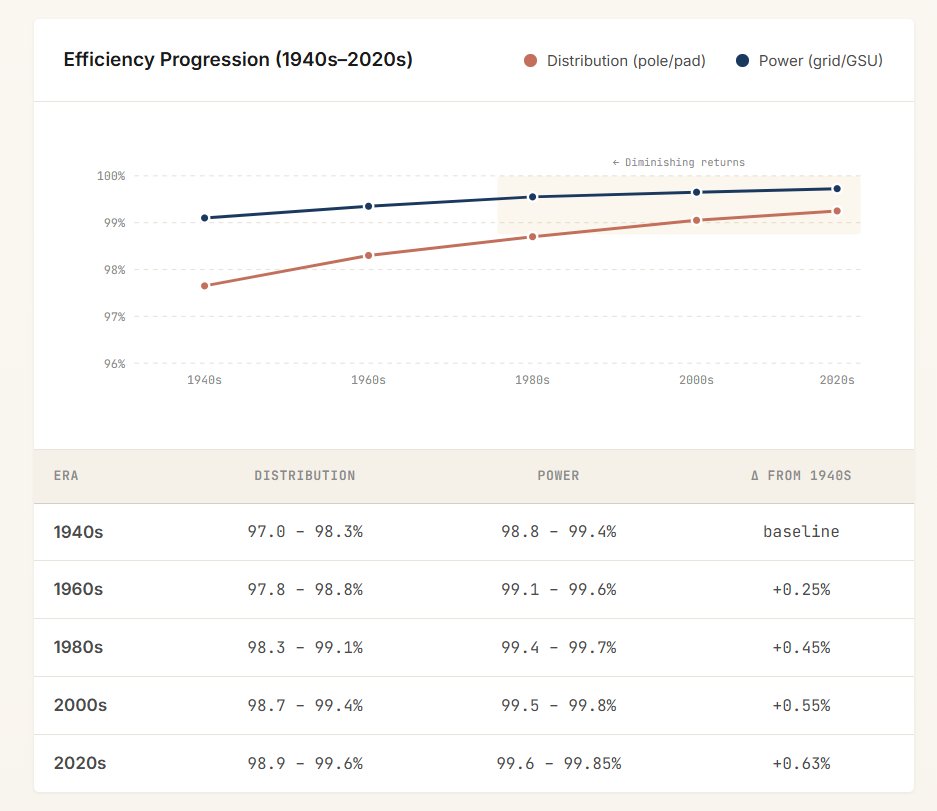

I have always struggled to appreciate why the big power transformers take multiple years to source, and this article didn't really clear it up. I wonder if there is a hangup on absolute efficiency, akin to the US fixation on Isp (specific impulse) in rocket engines, and whether trading off a little efficiency would yield better economics when lead time has a cost. Instead of using highly specialized, long-lead-time "grain-oriented electrical steel and high-purity, insulated copper", just use whatever you can buy in bulk and eat some percentage of inefficiency. For many projects, such as solar farms and data centers, the economics are not dominated by the low-order bits of the cost of electricity.

@IterIntellectus That's exactly what's happening. Whatever you focus on grows. By constantly focusing on "the problem" instead of on the behaviors that are the exact opposite of whatever "the problem" is, they are growing and watering neurosis. Constantly talking about a problem reinforces it.

I can’t believe we live in a country that’s proposing replacing doctors and nurses with robots.