Ankur Rustagi


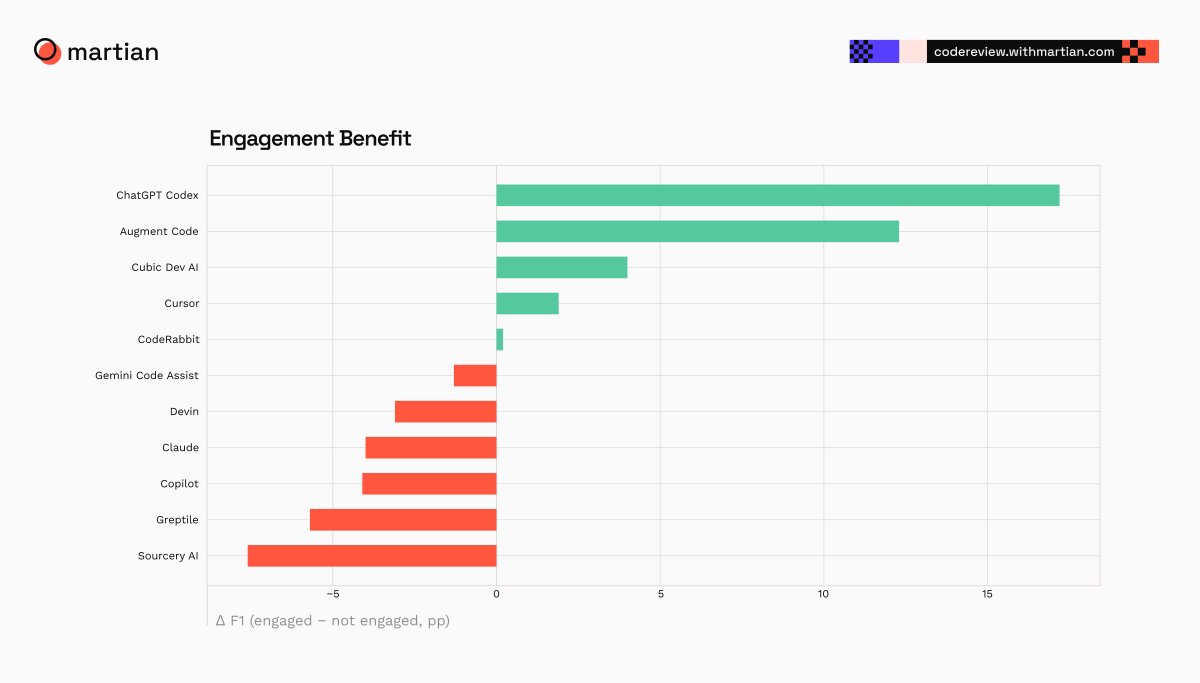

@augmentcode rebuilt their context compaction layer around Mercury 2. 82% latency cut. 90% cost cut. Comparable quality to Opus 4.7. Running in production today.

"We took a counter-intuitive bet. We decoupled summarization entirely, offloading it to Mercury 2 as a dedicated subagent. Mercury 2 is the highly efficient engine powering our most critical workflows."
-@RustagiAnkur & @jm1234567890, Members of Technical Staff at Augment Code

The subagent layer needs the most efficient model. Full methodology and eval setup in the writeup. inceptionlabs.ai/blog/rise-of-r…

10 years at Bayer 04. Welcome back Kai. 🫶🖤❤️ #B04ARS

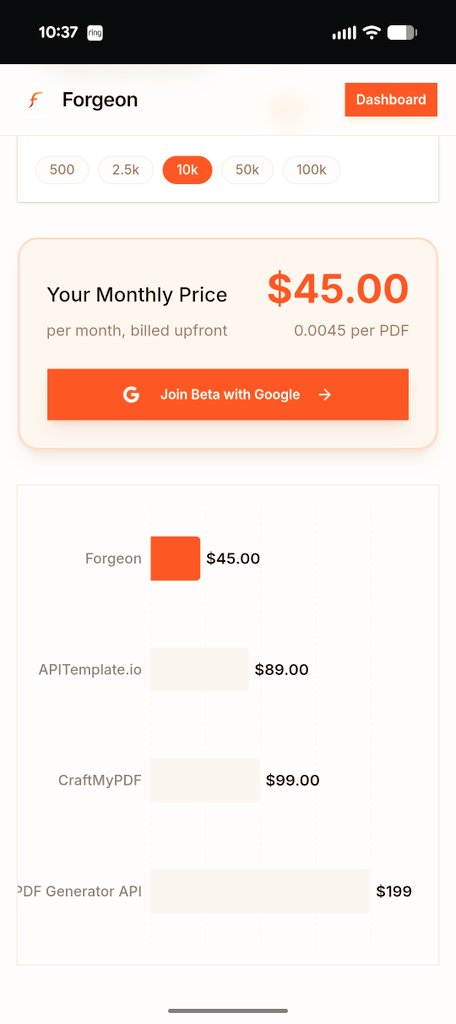

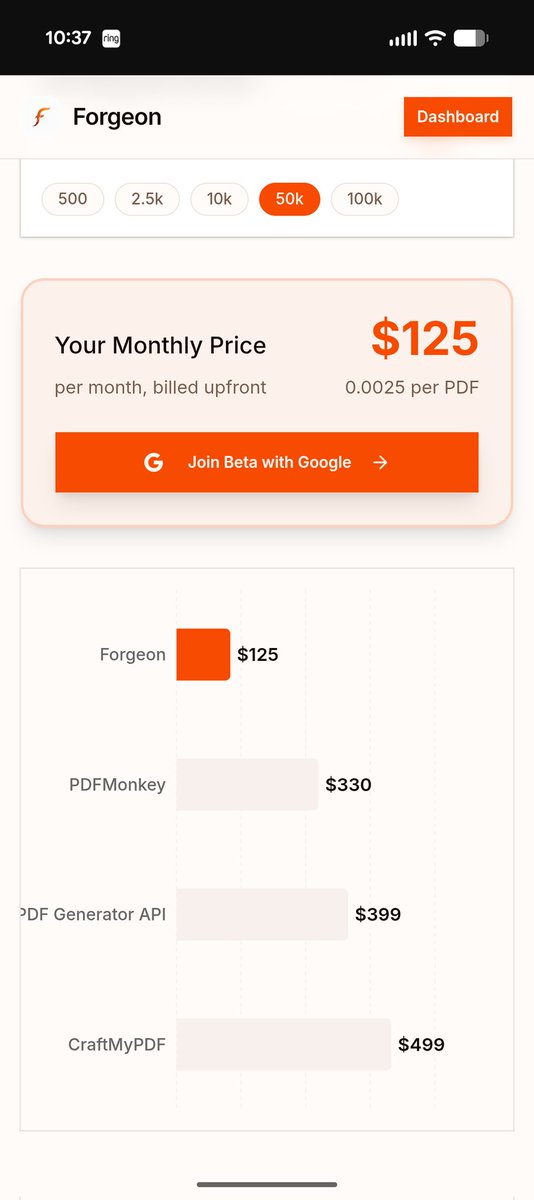

Peter Steinberger bootstrapped PSPDFKit over 10 years (gold-standard PDF library used by Box, Apple, even DocuSign). Insanely hard tech. IYKYK. I'd guess he's worth a couple hundred mill from that. If he goes to OpenAI... he might make more from OpenClaw, which is 3 months old.

Shipped #Ollama support for MCPlexor 🚀 If your agent uses Linear + GitHub + Notion, you're dumping ~40k tokens into context on every request. That's 20% of a 200k context window gone to tools. MCPlexor fixes this. <1k tokens overhead. Dynamic routing. Try 100% local. Details 👇
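
The overhead figures in the MCPlexor post can be checked with quick arithmetic. This is a minimal sketch using only the numbers quoted in the post (~40k tool tokens, a 200k window, under 1k routed overhead); the function name `tool_overhead_fraction` is hypothetical and not part of MCPlexor.

```python
def tool_overhead_fraction(tool_tokens: int, context_window: int) -> float:
    """Return the fraction of the context window taken up by tool definitions."""
    if context_window <= 0:
        raise ValueError("context_window must be positive")
    return tool_tokens / context_window


# Figures quoted in the post: ~40k tokens of tool definitions, 200k window.
direct = tool_overhead_fraction(40_000, 200_000)

# The post quotes under 1k tokens of overhead with routing; 1k is used here
# as an upper bound, not a measured value.
routed = tool_overhead_fraction(1_000, 200_000)

print(f"direct: {direct:.1%}")
print(f"routed: under {routed:.1%}")
```

Under those assumptions, loading every tool definition takes a fifth of the window, while the routed upper bound is half a percent.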

Want to experience the magic of our Context Engine with your existing toolbase? Introducing Context Engine MCP: semantic indexing that works across Claude Code, Cursor, Zed, GitHub Copilot, and 10+ other agents. See an interactive live demo on Tuesday, February 10 at 10 AM PT. Implement in your workflows on the same day. Register now: watch.getcontrast.io/register/augme…