Rossco 🤓

@RuzzyCarhole

I muck about in photography playing with colour, texture, tones & my definition of beauty. Always looking for the kōan within an image. And I’m a mighty geek.

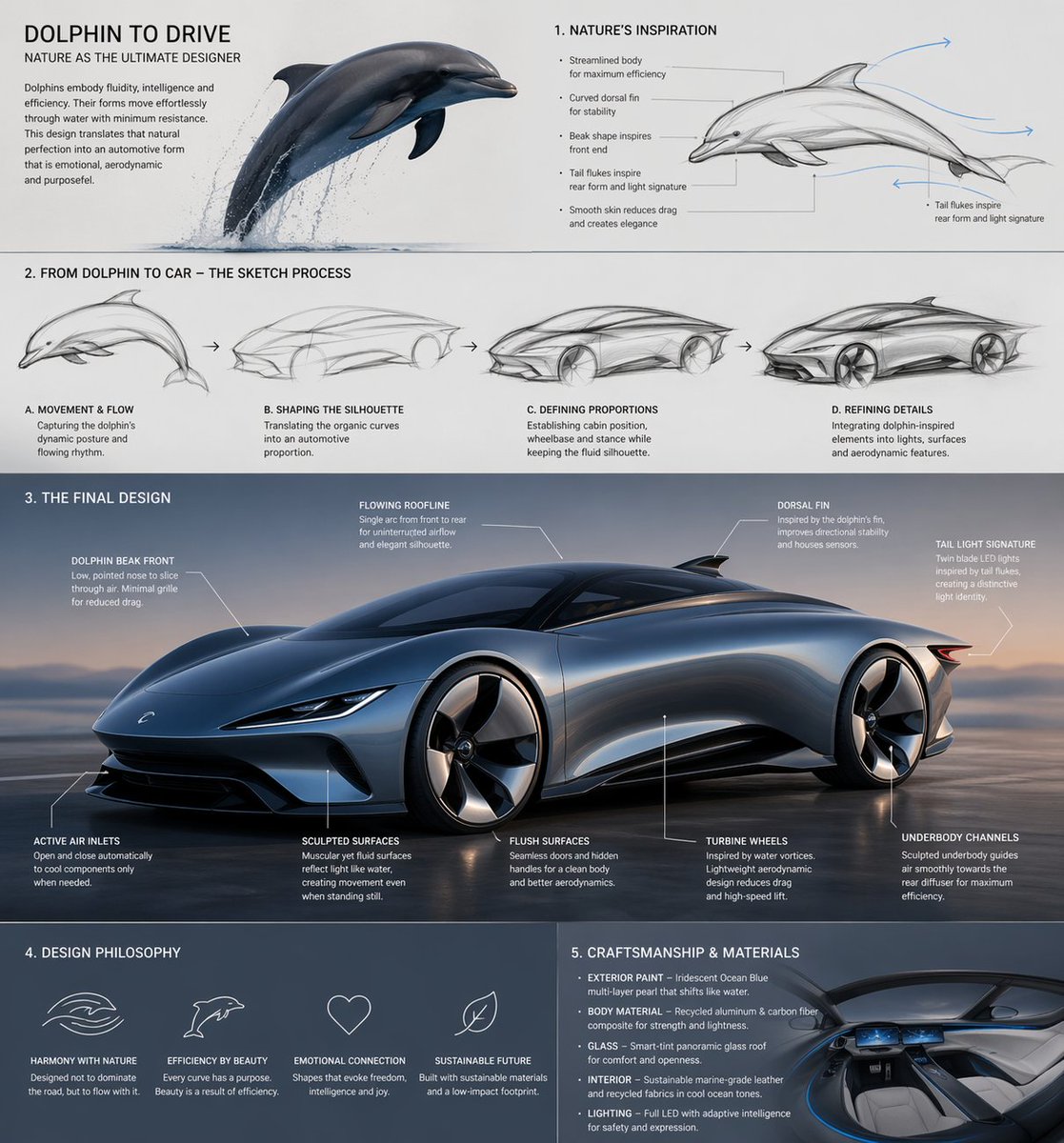

Men without degrees built this.

There is a human process in creativity that cannot be prompted away.

Yes, our latest special guest is Fuli Luo @_LuoFuli. The second battle in the global large model arms race has begun: shifting from the Chat era dominated by pre-training to the Agent era driven by post-training. This marks Fuli Luo’s first-ever interview, as well as her first in-depth technical conversation. We talked systematically about the massive AI upheaval triggered by technological breakthroughs including Claude Opus 4.6 and OpenClaw in 2026, along with its subsequent structural impacts across the industry. Amid the fierce large-model arms race, the world around us is undergoing brutally rapid changes, even for researchers who train models firsthand. “I used to believe our work was highly creative, and could never be simplified into fixed skills or standardized workflows. But now I realize it can be automated after all. If that’s possible, can models train stronger models on their own? Can they achieve iterative improvement through self-evolution? This is exactly what will unfold in the next couple of years,” Fuli Luo says. As human knowledge and wisdom are internalized into model capabilities, what will humanity pursue in the future? Is our society truly ready for this tsunami-scale technological revolution? All in all, this is an information-dense dialogue. It reveals how an AI lab makes strategic technical bets, allocates resources, and adjusts organizational structure and team planning amid a major paradigm shift. At the core of its response to drastic change lies its established culture and core values. Though lengthy and technically intensive, we hope this conversation brings great insights to every viewer. Our podcast, video episode and article are released simultaneously across platforms, with English subtitles provided to assist non-Chinese-speaking audiences. Luo Fuli: OpenClaw, Agent Frameworks — The AI Paradigm Has Already Chang... youtu.be/V9eI-t3TApE?si… via @YouTube

Earlier this year Yann LeCun left Meta because Mark Zuckerberg wouldn't bet the company on JEPA. Last week his group dropped the first JEPA that actually trains end-to-end from raw pixels. 15 million parameters. Single GPU. A few hours. The timing is not a coincidence. For four years Meta has been the house that JEPA built. LeCun published the original paper from FAIR in 2022. I-JEPA and V-JEPA came out of his lab. The architecture was supposed to be the escape hatch from LLMs, the path to robots that actually learn physics instead of hallucinating about it. Every version shipped fragile. Stop-gradients. Exponential moving averages. Frozen pretrained encoders. Six or seven loss terms that had to be hand-tuned or the model collapsed into garbage representations. Meta kept funding LLMs. Llama shipped. Llama scaled. Llama got beat by Qwen and DeepSeek. Zuck spent $14 billion to buy ScaleAI and install Alexandr Wang. The FAIR robotics group was dissolved. LeCun's research kept winning papers and losing the product roadmap. He left, started AMI Labs, and said publicly that LLMs were a dead end. Now the paper. LeWorldModel. One regularizer replaces the entire pile of heuristics. Project the latent embeddings onto random directions, run a normality test, penalize deviation from Gaussian. The model cannot collapse because collapsed embeddings fail the test by construction. Hyperparameter search went from O(n^6) polynomial to O(log n) logarithmic. Six tunable knobs became one. The downstream numbers are what should scare the robotics capex class. 200 times fewer tokens per observation than DINO-WM. Planning time drops from 47 seconds to 0.98 seconds per cycle. 48x faster at matching or beating foundation-model performance on Push-T and 3D cube control. The latent space probes cleanly for agent position, block velocity, end-effector pose. It correctly flags physically impossible events as surprising. It learned physics without being told physics existed. 
Figure AI is valued at $39 billion. Tesla Optimus is mass-producing. World Labs raised $230 million to sell generative world models. Everyone in humanoid robotics is burning capital on foundation-model pipelines that plan in 47 seconds per cycle. LeCun's group just showed you can do it with 15 million parameters on a single GPU in a few hours. This is the Xerox PARC pattern running again. Meta had the next architecture. Meta had the scientist. Meta dissolved the robotics team, passed on the productization, and watched the exit. Three months later the lab that was supposed to be Meta's publishes the result that resets the robotics cost structure. The paper is worth more than Alexandr Wang.
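The regularizer described in the post above (project latents onto random directions, test each projection for normality, penalize the deviation) can be sketched roughly as follows. This is a toy NumPy illustration, not the paper's implementation: the function name `gaussianity_penalty`, its parameters, and the choice of a simple moment-matching statistic in place of whatever normality test the paper actually uses are all assumptions made for the example.

```python
import numpy as np


def gaussianity_penalty(z, num_directions=64, seed=0):
    """Toy penalty for how far 1D projections of z are from N(0, 1).

    z: array of shape (n_samples, dim) holding latent embeddings.
    Assumption: a moment-matching statistic stands in for the
    normality test; the post does not say which test is used.
    """
    rng = np.random.default_rng(seed)
    _, dim = z.shape

    # Draw random directions and normalize each to unit length.
    dirs = rng.standard_normal((dim, num_directions))
    dirs /= np.linalg.norm(dirs, axis=0, keepdims=True)

    # Each column of proj is the batch projected onto one direction.
    proj = z @ dirs

    # First four raw moments of each projection.
    m1 = proj.mean(axis=0)
    m2 = (proj ** 2).mean(axis=0)
    m3 = (proj ** 3).mean(axis=0)
    m4 = (proj ** 4).mean(axis=0)

    # A standard normal has raw moments 0, 1, 0, 3.
    per_direction = m1 ** 2 + (m2 - 1.0) ** 2 + m3 ** 2 + (m4 - 3.0) ** 2
    return float(per_direction.mean())


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    healthy = rng.standard_normal((4096, 16))  # roughly isotropic Gaussian
    collapsed = np.ones((4096, 16))            # every embedding identical
    print("healthy penalty:  ", gaussianity_penalty(healthy))
    print("collapsed penalty:", gaussianity_penalty(collapsed))
```

In this toy version, a batch where every embedding is identical has zero spread along every direction, so it cannot match the unit second moment and the penalty stays large, which mirrors the post's claim that collapsed embeddings fail the test by construction.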

Half the West calls him a villain. History will call him the only person alive who has simultaneously rebuilt transportation, energy, space, telecom, AI, neuroscience, and robotics. The gap between today’s noise and tomorrow’s verdict is wider than it was for Jobs, Edison, or Rockefeller. *** thanks to @DavidCarbutt_ and team for the edit.