Sam Partee

1.4K posts

@SamPartee

CTO, Co-Founder, @tryArcade | @redisinc @HPE_Cray | AI, LLM, Python, HPC, ⚾️ fan, roll tide | Blog: https://t.co/PJfL2cMCYD | Opinions are my own.

San Francisco, CA · Joined April 2013

462 Following · 1.6K Followers

It is clear to me that we will have to rebuild the whole security suite for agents:

- Password manager

- Firewall

- Antivirus

I've got the first covered (@hasp_dev), I'm working on the second, and I'm thinking about turning mdgrok into the third.

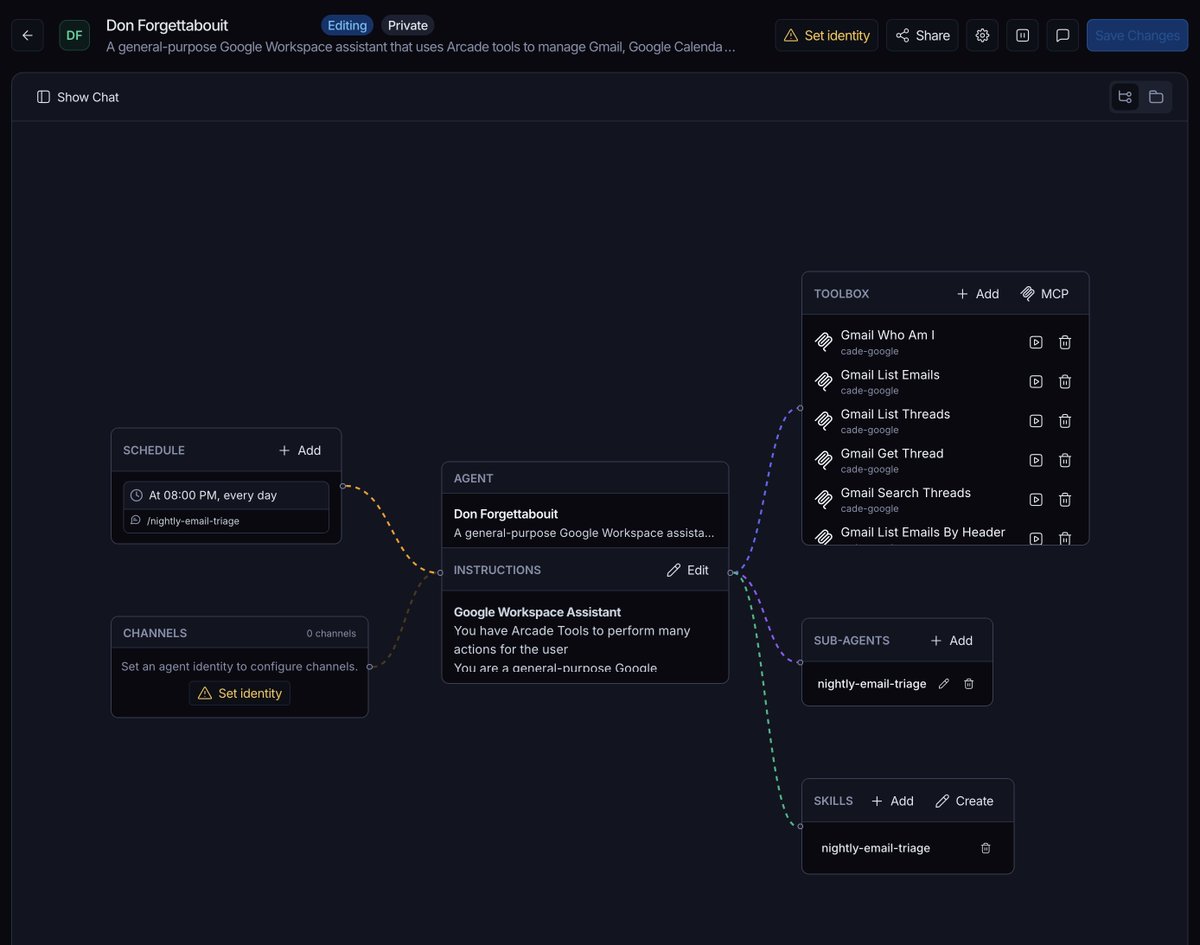

In honor of @LangChain Interrupt today, here's my favorite Agent I made with Fleet + @TryArcade

Don Forgettabouit - a very sarcastic reminder agent

Started as a simple email triage agent I set up in 5 minutes and now it's the first email I open every day.

Today's gem - "Did you miss the 'Action Required Today' part of that email from 3 days ago?"

Sam Partee reposted

We just open-sourced Agent Library, a local-first memory layer for AI agents built by @SamPartee.

Your agent's memory should be something you can hold in your hand. A file you own, diff, and roll back.

More on the blog: arcade.dev/blog/agent-lib…

S/o to @torresmateo for pushing me to release this!

agent-library is just as it sounds - a library for an agent.

It started as my personal Obsidian connector for Arcade, but has grown into a multi-modal knowledge management system.

Tweet thread on the design and what I learned building it very soon.

Mateo Torres @torresmateo

your agent's memory shouldn't live on someone else's computer. today @TryArcade is open-sourcing Agent Library: your agent's entire memory in one SQLite file you can copy, diff, or email to yourself. Apache 2.0. no account. no hosted service. github.com/arcadeai/agent…

Fleet can now generate and render SVGs and Mermaid diagrams inline!

Ask it to make you a diagram, and watch it work its magic.

I'm using a mix of our GitHub tools, and @cognition's DeepWiki to make the diagram in this demo. Works super well!

@jacob_posel I hate when people just do ads but you wrote it for me so i'll just leave this here @tryarcade

@ivanleomk Do it yourself: download the HAR file, have Claude write MCP tools that grab the session token and use the same routes as the UI to manipulate the backend, without opening the UI other than to log in.
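A minimal sketch of the first step in that workflow, assuming a HAR export that follows the standard `log.entries[].request` layout: list the distinct method/path pairs the UI called. The function names, the sample data, and the `app.example.com` host are hypothetical and only for illustration.

```python
import json
from urllib.parse import urlsplit


def list_routes(har: dict) -> list[tuple[str, str]]:
    """Return the distinct (method, path) pairs in a HAR capture, in first-seen order."""
    seen: list[tuple[str, str]] = []
    for entry in har["log"]["entries"]:
        request = entry["request"]
        # Drop the query string so paginated calls collapse into one route.
        route = (request["method"], urlsplit(request["url"]).path)
        if route not in seen:
            seen.append(route)
    return seen


def load_har(path: str) -> dict:
    """Read a HAR file exported from the browser's network tab."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


# Hypothetical capture standing in for a real export.
sample_har = {
    "log": {
        "entries": [
            {"request": {"method": "GET", "url": "https://app.example.com/api/items?page=1"}},
            {"request": {"method": "POST", "url": "https://app.example.com/api/items"}},
            {"request": {"method": "GET", "url": "https://app.example.com/api/items?page=2"}},
        ]
    }
}

routes = list_routes(sample_har)
for method, path in routes:
    print(method, path)
```

The resulting route list is the sort of thing you could hand to a coding agent as a starting point for tool definitions.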

@paulg Most can't conceive the size a pond can be. Furthermore, many choose to balk at the big fish or pond itself because of this.

few enjoy the challenge of defeating bigger fish or migrating ponds so to speak.

@mattpocockuk honestly I don't love some of the dev philosophies in here. when tested (go and python codebases) the results were mediocre at best.

I do love this language here: github.com/mattpocock/ski…

FYI I just shipped a huge improvement to /improve-codebase-architecture

It now ships with a glossary of terminology to describe good/bad codebases

Essential reading for anyone wanting to improve their codebases:

github.com/mattpocock/ski…

I’ve been using a similar approach through an MCP I call Librarian. Organizes knowledge into sections, books, chapters, etc.

pip install agent-library

Libr add

Proper chunking, code splitting, OCR, image handling, etc., for embedding models that are all small enough to run locally.

RRF vector + text search indices built and updated automatically.

My Obsidian graph now looks like truly autonomous knowledge organization.
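For readers unfamiliar with RRF (reciprocal rank fusion), here is a rough sketch of how a vector ranking and a text ranking can be merged into one list. This is a generic illustration, not agent-library's actual code; the function name, the sample note IDs, and the constant `k=60` (a commonly used default) are assumptions.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into a single ranking.

    Each document scores 1 / (k + rank) for every list it appears in,
    where rank starts at 1 for the top hit.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for a stable order.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a vector index and a full-text index.
vector_hits = ["note-a", "note-b", "note-c"]
text_hits = ["note-b", "note-d", "note-a"]

fused = reciprocal_rank_fusion([vector_hits, text_hits])
print(fused)
```

Documents that rank well in both lists rise to the top, which is why the approach works without having to normalize vector distances against text-match scores.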

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
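As a rough idea of what a "small and naive search engine over the wiki" could look like, here is a term-count sketch over a directory of .md files. It is not the tool described above; the function names, the scoring rule, and the sample documents are all assumptions made for illustration.

```python
import re
from collections import Counter
from pathlib import Path


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def load_wiki(wiki_dir: str) -> dict[str, str]:
    """Read every .md file under wiki_dir into a {relative_path: text} mapping."""
    root = Path(wiki_dir)
    return {
        path.relative_to(root).as_posix(): path.read_text(encoding="utf-8")
        for path in sorted(root.rglob("*.md"))
    }


def search(docs: dict[str, str], query: str, top_n: int = 5) -> list[tuple[str, int]]:
    """Rank documents by how many times the query terms occur in them."""
    terms = tokenize(query)
    hits = []
    for name, text in docs.items():
        counts = Counter(tokenize(text))
        score = sum(counts[term] for term in terms)
        if score > 0:
            hits.append((name, score))
    # Best score first; ties broken by file name for a stable order.
    hits.sort(key=lambda hit: (-hit[1], hit[0]))
    return hits[:top_n]


# Hypothetical in-memory wiki; on disk you would call load_wiki("wiki/") instead.
docs = {
    "a.md": "Attention is all you need. Attention heads.",
    "b.md": "Notes on attention and memory.",
    "c.md": "Unrelated gardening notes.",
}
print(search(docs, "attention memory"))
```

Wrapping `search` in a small command-line entry point would be one way to expose it to an agent as a tool, in the spirit of the CLI hand-off mentioned above.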

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

@KirkMarple @johnennis Almost sounds like you need… permissions… for … contextual… access…

Muahahhahhh

@johnennis Yeah, I just want a “read everything you want” flag.

Like if it’s not changing data, I don’t care.

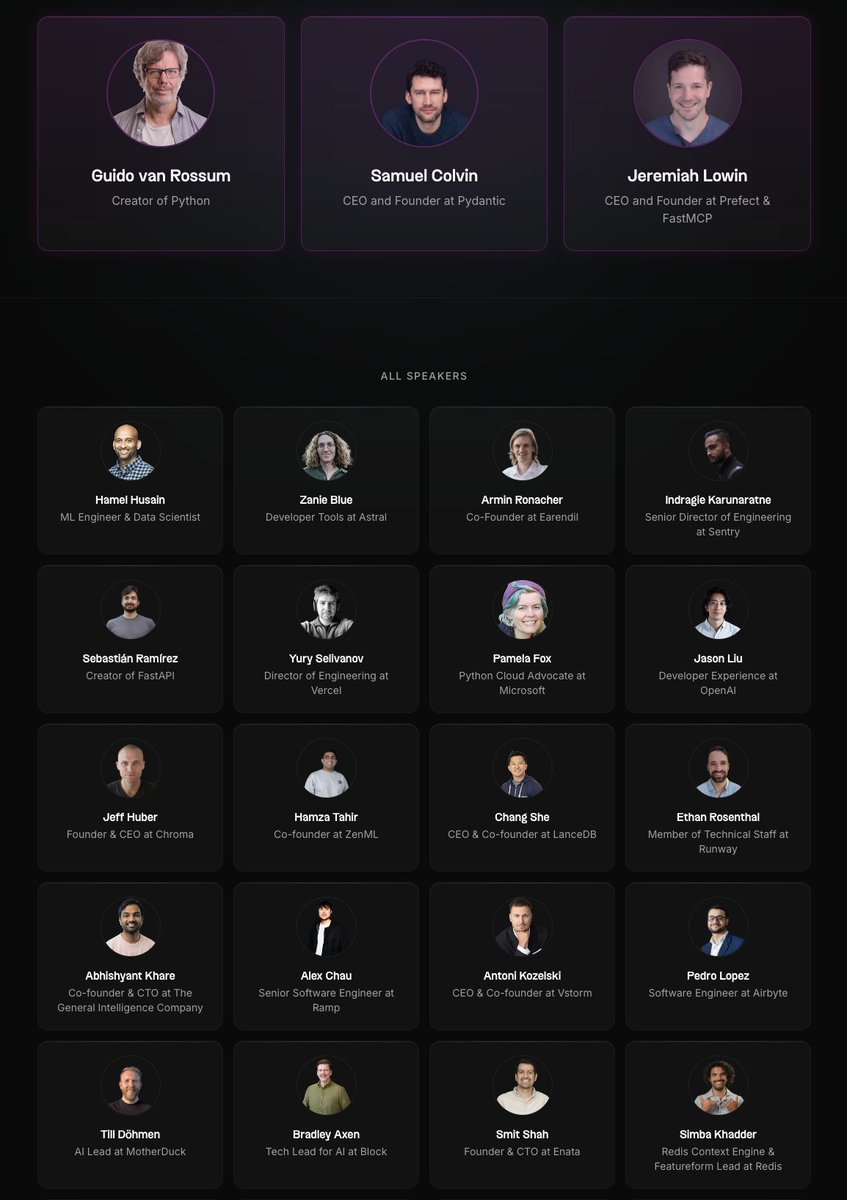

Find me a conference with a better line-up, I dare you.

There are still some tickets available, buy now.

pyai.events/speakers

Or, even better, if you're a serious open source developer in your free time, email me and we'll give you a free ticket. samuel at pydantic.dev.

FOMO is the most universal feeling I see across developers in the industry.

So many new tools every day: which ones do I use?

So many new product releases: which are legit?

So many new paradigms: which ones give me the highest ROI?

That’s why I’m excited to speak at the Coding Agents conference on March 3rd organized by the MLOps community.

I’ll be sharing the stage with product and technical leaders and friends (@TomasReimers, @zachlloydtweets, @SamPartee) from @cursor_ai, @AnthropicAI, @warpdotdev, and Arcade.dev.

Every talk will be about making coding agents work in production.

All signal, no Twitter hype.

Hope to see you there! Event link in comments.

@kepano People really don’t know how powerful this combo is. I made one for this but this’ll be way better

1. install Obsidian 1.12

2. enable CLI

3. now OpenClaw, OpenCode, Claude Code, Codex, or any other agent can use Obsidian

Obsidian @obsdmd

Anything you can do in Obsidian you can do from the command line. Obsidian CLI is now available in 1.12 (early access).

We wrapped this edition of MCP Connect Day with 3 fantastic talks from @OpenAI, @github and @TryArcade

Thanks to all our speakers for sharing their MCP stories and insights!

@openclaw ... but give it everything!

Wrote a plugin to give OpenClaw / Moltbot / Clawdbot access to all of @TryArcade tools.

Now you can use 100s of services from any of your messaging apps, e.g. Discord or Telegram. Auth included.

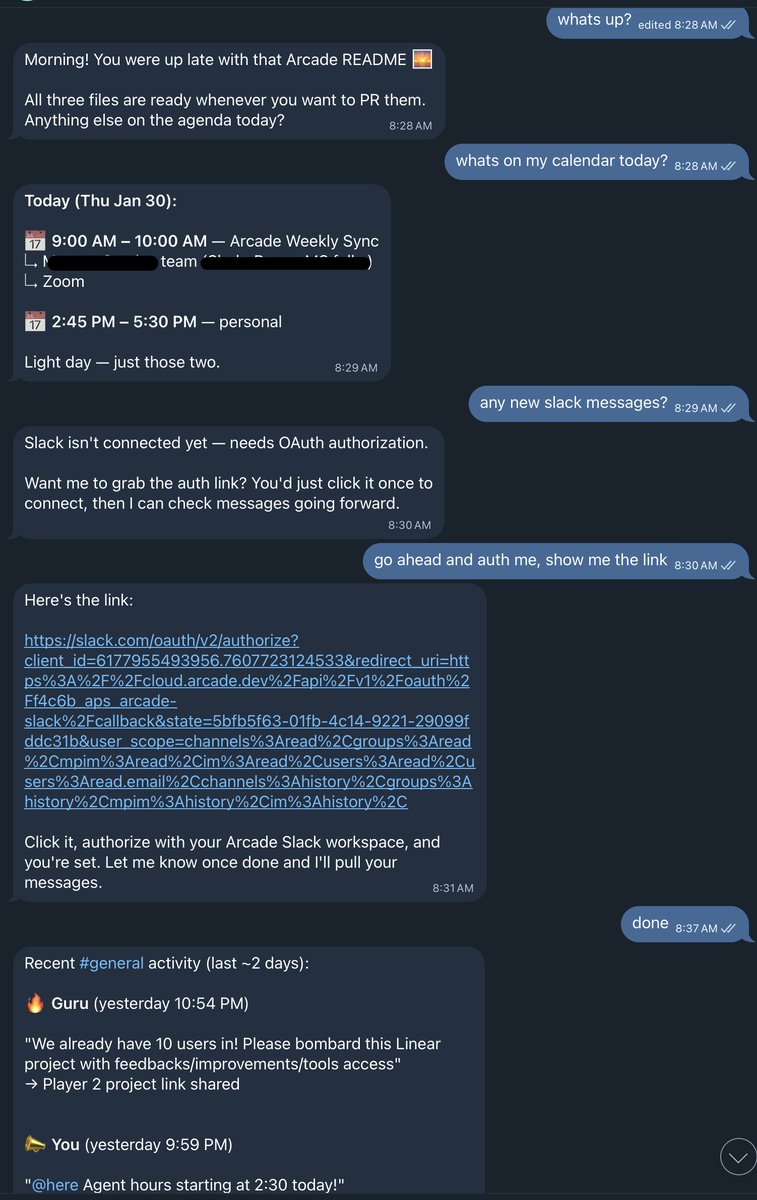

👇Me using GCal and Slack from Telegram

@SamPartee @openclaw @TryArcade Curious - are you dedicating a machine to OpenClaw to be “always on”? Or running it on VPS?
