Sam Acquaviva

73 posts

@Sam_Acqua

now @ after thought, ex-cocosci @ mit

🚨 Before concluding: As noted by @Sam_Acqua and many others, we all ought to be very skeptical of Gen PPL as a metric, especially in isolation. ❌ It is actually a bit crazy that we have been using it for so long. Hence the additional metrics and the several qualitative samples in the appendix. Please have a look yourselves to get a better understanding of the sample quality! 🔍 There are comparisons across SD/non-SD, number of NFEs, and others.

I'm going to re-retweet this, because it's important! There are a few pitfalls when evaluating diffusion language models, highlighted in these two recent blog posts:

- patrickpynadath1.github.io/blog/eval_meth…
- samacquaviva.com/projects/flow-…

Both are worth a read if you have an interest in this space!

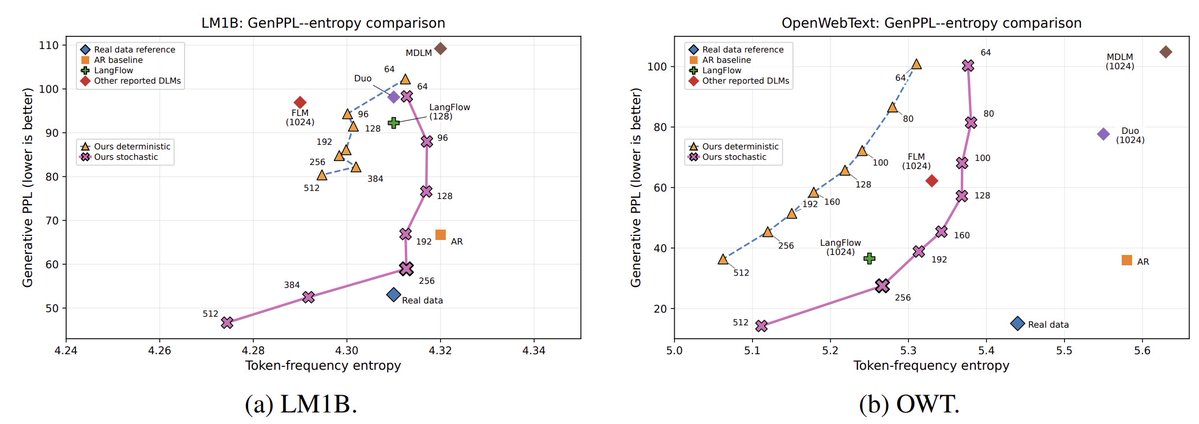

Flow models are a promising alternative to autoregression. But the current standard of evaluating flow models is broken. The reported 3x improvement in 1024-step PPL since 2023 is closer to 1.1x if you control for sample entropy. (1/12)
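To make "control for sample entropy" concrete, here is a minimal sketch of the two quantities that would be reported side by side. It assumes "sample entropy" means the unigram (token-frequency) entropy of a generated sample, and that generative perplexity is the exponential of the mean negative log-likelihood under some separate scoring model. The post does not spell out either definition, and the function names and toy token lists are hypothetical.

```python
import math
from collections import Counter


def unigram_entropy(tokens):
    """Shannon entropy (in nats) of the empirical token distribution of one sample.

    Assumption: this is what "sample entropy" refers to; the post does not define it.
    """
    if not tokens:
        raise ValueError("need at least one token")
    counts = Counter(tokens)
    total = len(tokens)
    return -sum((c / total) * math.log(c / total) for c in counts.values())


def perplexity(token_logprobs):
    """exp(mean negative log-likelihood), given per-token log-probs (natural log).

    The log-probs would come from a separate scoring model; none is loaded here.
    """
    if not token_logprobs:
        raise ValueError("need at least one log-prob")
    return math.exp(-sum(token_logprobs) / len(token_logprobs))


# Toy samples: a degenerate, repetitive one and a varied one (illustrative only).
repetitive = ["the"] * 8
varied = ["flow", "models", "are", "a", "promising", "alternative", "to", "autoregression"]

print(unigram_entropy(repetitive))  # no diversity at all
print(unigram_entropy(varied))      # every token distinct
```

A sampler that collapses toward repetitive text can look good on perplexity alone, since repeated tokens tend to be easy for a scoring model to predict. Reporting both numbers together makes that trade-off visible.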
