Dayo ❄️

@Samuel0yeneye

engineer & researcher

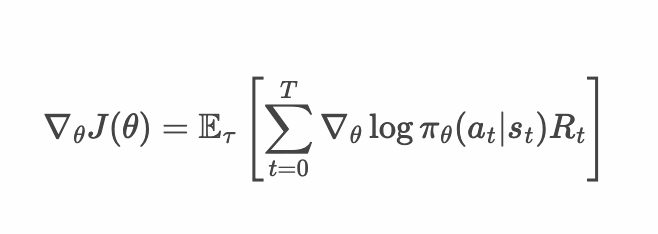

During neural network training, the loss landscape gets sharper until it hits a ceiling. GD pins right at the ceiling. SGD settles below it — and the gap grows as you shrink the batch. Why? We now have the answer. arxiv.org/abs/2604.21016 🧵 Blog: akyrillidis.github.io/aiowls/stochas…

We're excited to announce Habeeb Shopeju @HAKSOAT as a speaker at SysConf 2025! His talk is titled "An Introduction to the Inner Workings of LLM Inference Engines". In it, he will briefly introduce how text generation models work, including how inference servers and hardware transform user prompts into outputs. Then he plans to explore why the KV cache becomes a bottleneck and how PagedAttention solves memory fragmentation like an operating system. Finally, he will explore speed optimizations: FlashAttention for efficient memory access, continuous batching for GPU utilization, and speculative decoding for multi-token prediction.

Looking forward to giving this talk 💪