Pinned Tweet

Santhosh Kumar

2.7K posts

@SanthoshKumarS_

Conscious Data Scientist | Jr MLE @OmdenaAI | Sharing My Journey through Tweets. DM for Collaboration 📩

Tiruppur, India · Joined November 2017

186 Following · 22.5K Followers

Santhosh Kumar retweeted

@SanthoshKumarS_ Great to see DeciLM outperforming Mistral-7B. Excellent innovation, I must say.

@SanthoshKumarS_ Surprisingly, it does outperform Mistral and Llama

That's a wrap! Thank you for reading.

If you enjoyed this thread:

1. Follow me @SanthoshKumarS_ for more Python & ML content.

2. RT the tweet below to share this thread with your audience.

Santhosh Kumar @SanthoshKumarS_

🌟 Brace yourselves for the reveal of the fastest and most proficient language model: DeciLM 7B! 🚀 DeciLM 7B, the open-source 7-billion-parameter LLM, is setting new standards for speed and accuracy. -- Thread --

Explore it for Yourself!

Model Card on Hugging Face :

✔️ huggingface.co/Deci/DeciLM-7B

✔️ huggingface.co/Deci/DeciLM-7B…

Demo :

✔️ console.deci.ai/infery-llm-demo

Notebook :

✔️ colab.research.google.com/drive/1VU98ezH…

@DataScienceHarp This is great. Amazed by the low latency and low cost 👏

DeciLM-7B is here and it beats Mistral-7B on all benchmarks, topping the HF Open LLM Leaderboard in its weight class!

Here's what you need to hack around:

📔DeciLM-7B Base Model Notebook: bit.ly/decilm-7b-note…

📘DeciLM-7B Fine-tuning Notebook: bit.ly/decilm-7b-fine…

📗DeciLM-7B-Instruct Notebook: bit.ly/declm-7b-instr…

Help us get trending on HuggingFace by ❤️ the model cards

🤗 Base model: huggingface.co/Deci/DeciLM-7B

🤗 Instruction-tuned model: huggingface.co/Deci/DeciLM-7B…

🤗 Demo on HF Spaces: huggingface.co/spaces/Deci/De…

👀Check out DeciLM + Infery: hubs.ly/Q02cz_pB0

📑 Read more about the model in the release blog: deci.ai/blog/introduci…

@Sumanth_077 Low latency and low cost make this an excellent combo for high-volume applications. Great Model 👏

Here are all the relevant links:

• HuggingFace model: huggingface.co/Deci/DeciLM-7B, huggingface.co/Deci/DeciLM-7B…

• Try DeciLM 7B with Infery-LLM demo: console.deci.ai/infery-llm-demo

• Starter Notebook: bit.ly/declm-7b-instr…

The fastest and most proficient 7-billion-parameter base LLM was released today.

Deci has unveiled DeciLM 7B and has set new benchmarks for speed and accuracy.

Let's look at some highlights!

Unmatched Accuracy:

>Scores 61.48 on Open LLM Leaderboard.

>Outperforms all current competitors in the 7 billion parameter class.

>Surpasses previous leaders Mistral 7B and Llama-2 7B

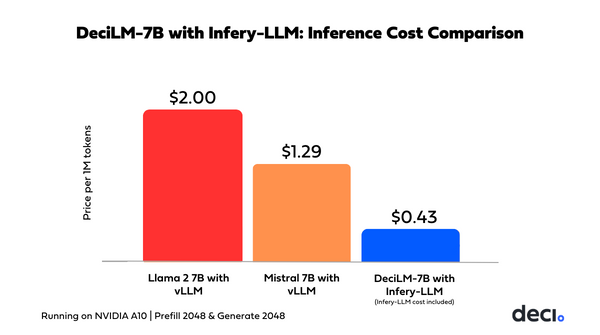

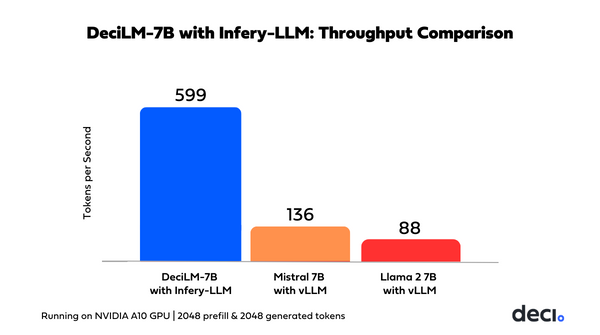

Enhanced Throughput Performance:

>Achieves 83% higher throughput than Mistral 7B in PyTorch comparison.

>Records 139% increase in throughput over Llama 2 7B.
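
A quick way to read these percentages as multipliers: an X% increase corresponds to a factor of 1 + X/100. The sketch below uses only the two figures quoted above. The implied Mistral-versus-Llama ratio is derived arithmetic and assumes both percentages were measured under the same setup, which the thread does not state.

```python
def speedup_factor(percent_increase: float) -> float:
    """Convert 'X% higher throughput' into a multiplicative factor."""
    return 1.0 + percent_increase / 100.0


# Figures quoted in the thread (PyTorch comparison).
vs_mistral = speedup_factor(83)   # DeciLM 7B relative to Mistral 7B
vs_llama = speedup_factor(139)    # DeciLM 7B relative to Llama 2 7B

# Derived, not quoted: Mistral 7B relative to Llama 2 7B,
# assuming both measurements share the same hardware and settings.
mistral_vs_llama = vs_llama / vs_mistral

print(f"{vs_mistral:.2f}x, {vs_llama:.2f}x, implied {mistral_vs_llama:.2f}x")
```

So "139% higher" means roughly 2.4 times the throughput, not 1.39 times.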

Accelerated Speed with Infery-LLM:

>When combined with Infery-LLM, Deci’s Inference SDK, it achieves 4.4x greater speed than Mistral 7B with vLLM

>This means it can support many users at once at very low inference cost, which is beneficial for high-volume customer interactions in telecom, online retail, and cloud services.

Innovative Architecture:

>Developed using AutoNAC-powered neural architecture search.

>Utilizes Variable Grouped Query Attention for optimal accuracy-speed balance.
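
To see why the number of key/value heads matters for speed, here is a rough sketch of KV-cache size under three layouts. In grouped query attention, several query heads share one key/value head. As I understand it, the "variable" version lets the number of key/value heads differ from layer to layer. The layer count, head dimension, and per-layer head counts below are made-up illustration values, not DeciLM 7B's actual configuration.

```python
def kv_cache_bytes(kv_heads_per_layer, head_dim, seq_len, batch_size,
                   bytes_per_value=2):
    """Bytes needed to cache keys and values across all layers.

    Each layer stores two tensors (K and V) of shape
    [batch_size, kv_heads, seq_len, head_dim]; bytes_per_value=2 is fp16.
    """
    return sum(
        2 * batch_size * kv_heads * seq_len * head_dim * bytes_per_value
        for kv_heads in kv_heads_per_layer
    )


# Hypothetical model: 32 layers, 32 query heads, head dimension 128.
NUM_LAYERS, HEAD_DIM = 32, 128
layouts = {
    "multi-head (32 KV heads everywhere)": [32] * NUM_LAYERS,
    "uniform GQA (8 KV heads everywhere)": [8] * NUM_LAYERS,
    # Made-up per-layer choice, for illustration only.
    "variable GQA (4/2/1 KV heads)": [4] * 8 + [2] * 16 + [1] * 8,
}

sizes = {
    name: kv_cache_bytes(layout, HEAD_DIM, seq_len=2048, batch_size=1)
    for name, layout in layouts.items()
}
for name, size in sizes.items():
    print(f"{name}: {size / 2**20:.0f} MiB")
```

A smaller cache leaves room for larger batches on the same GPU, which is one plausible route to higher throughput. Which layers get fewer key/value heads is what an architecture search would decide.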

Instruction-Tuned Variant - DeciLM 7B-Instruct:

>Achieves 63.09 average on Open LLM Leaderboard.

>Instruction-tuned with LoRA on the SlimOrca dataset.
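
For a sense of why LoRA makes instruction tuning cheap: instead of updating a full weight matrix, it trains a low-rank pair of matrices whose product is added to the frozen weight. A back-of-the-envelope sketch follows. The hidden size and rank are hypothetical, since the thread does not give the values used for DeciLM 7B-Instruct.

```python
def full_params(d_in: int, d_out: int) -> int:
    """Parameters in a dense weight matrix W of shape [d_out, d_in]."""
    return d_in * d_out


def lora_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters in a rank-r update B @ A,
    with A of shape [rank, d_in] and B of shape [d_out, rank]."""
    return rank * (d_in + d_out)


HIDDEN = 4096  # hypothetical hidden size
RANK = 16      # hypothetical LoRA rank

full = full_params(HIDDEN, HIDDEN)
lora = lora_params(HIDDEN, HIDDEN, RANK)

print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.2%}")
```

With these illustrative numbers, the adapter trains under 1% of the parameters of the matrix it modifies.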

Try the new model in the links below 👇

@akshay_pachaar Deci sparkles with yet another great model 🔥

The fastest and most proficient 7B LLM is here!

Introducing DeciLM 7B! 🚀

The new leader in open-source 7B LLMs. It's redefining benchmarks for speed and accuracy.

Here's what makes it special:✨

🔹Unmatched Accuracy:

Achieving an average score of 61.48 on the Open LLM Leaderboard, DeciLM 7B outshines its competitors in the 7-billion-parameter class, beating the previous front-runner, Mistral-7B.

🔸Enhanced Throughput Performance:

In a direct PyTorch comparison, DeciLM 7B achieves an 82% increase in throughput over Mistral 7B and a 125% increase in throughput over Llama 2-7B.

🔹DeciLM + Infery LLM = 🚀

DeciLM combined with Infery-LLM, Deci's Inference SDK, is hard to match.

This powerful duo sets a new standard in throughput performance, achieving speeds 4.4 times greater than Mistral 7B with vLLM.

This is crucial in sectors like telecommunications, online retail, and cloud services, where real-time response to customer inquiries is vital.

Check this out👇

🔸Innovative Architecture:

Developed with the help of AutoNAC, Deci's neural architecture search engine, DeciLM 7B employs Variable Grouped Query Attention to strike an optimal balance between accuracy and speed.

(Check the image below)

🔹Stellar Instruction-Tuned Variant:

DeciLM 7B was instruction-tuned using LoRA on the SlimOrca dataset. The resulting model, DeciLM 7B-Instruct, achieves a remarkable average of 63.09 on the Open LLM Leaderboard.

The model is now available on HuggingFace🤗!

Find all the details & Starter Notebook in the following tweet!

Thanks for reading! 🥂
