Derrick Mwiti

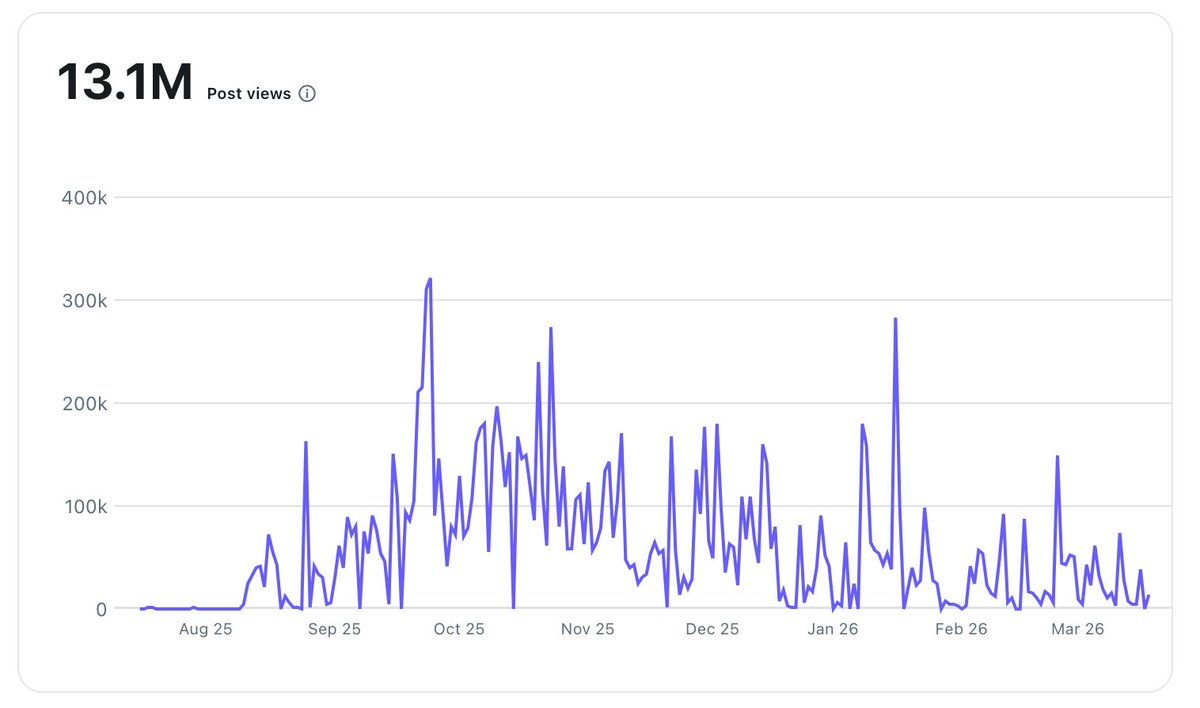

In less than a year, I managed to get 13M+ organic views on Reddit.

It brought over 100,000 people to my website without spending a cent.

I put together a Reddit Strategy Playbook that gets me 1M+ organic impressions/month… and makes my posts show up consistently inside AI outputs (ChatGPT, Gemini, etc.).

Here’s what’s inside the playbook:

😈 How I grow my SaaS GojiberryAI with Reddit

🔥 The 3+1 post formats that always go viral

🚫 How to get traffic from 100 subs → without getting banned

🔎 My Reddit SEO method to rank in AI-generated answers

⚡ The automation flows I use to convert views into demos

🏴‍☠️ A little bonus just for you

Want the full 1M Views/Month Reddit Strategy (100% Organic)?

Here’s how to get it:

✅ Repost this post

✅ Comment “REDDIT”

I’ll send it straight to you.

NVIDIA GTC 2026: Live Updates on What’s Next in AI

blogs.nvidia.com/blog/gtc-2026-…

Nvidia just dropped its biggest GTC yet.

And it wasn't just about chips.

Jensen Huang called @openclaw "probably the single most important piece of software, ever."

Then proved it with 10+ announcements.

Most AI companies pick a lane.

Nvidia is paving all of them.

→ NemoClaw: Open-source security + privacy guardrails for enterprise OpenClaw agents

↳ Designed to let Fortune 500s deploy agentic AI without leaking data

→ Vera Rubin platform: 7 next-gen chips now in production

↳ Covers both AI training and inference at scale

↳ Huang teased space-based data centers running on Vera Rubin

→ DLSS 5: AI-powered photorealistic lighting + materials for games in real time

→ Open-source Agent Toolkit for enterprises to build secure, auditable AI agents

→ New AI platforms for autonomous vehicles (Drive Hyperion Level 4) and robotics

How it works:

1. Pick your stack: chips, agents, graphics, or robotics

2. Build on Nvidia's open APIs and toolkits

3. Nvidia owns the infrastructure layer beneath all of it

Jensen called Nvidia "the first vertically integrated but horizontally open company."

After GTC 2026, that's hard to argue.

I have shared a link to the full GTC recap in the next post!

Derrick Mwiti reposted

I have shared a link to the full story below.

therundown.ai/p/yann-lecun-1…

The godfather of modern AI just said LLMs are a dead end.

Then he raised $1.03 BILLION to prove it.

Yann LeCun's new startup AMI just launched.

Here's what he's actually building.

LeCun has a Turing Award and 12 years leading Meta's AI research lab.

He left in November and told Zuckerberg he could build world models "faster, cheaper, and better" on his own.

That's not a resignation. That's a declaration of war.

Here's the problem he's betting against:

LLMs predict the next token. That's it.

They don't simulate physics.

They don't have persistent memory.

They hallucinate because they never actually understand the world.

LeCun has been screaming this since 2022. Now he has the money to act on it.

AMI's approach is World Models, systems that learn to simulate how the physical world actually works.

→ Persistent memory across interactions

→ Physical reasoning built-in, not bolted on

↳ Targets manufacturing, robotics, wearables, and healthcare

→ Valued at $3.5B before shipping a single product

The $1.03B seed round is insane by any standard.

Backers include Nvidia, Samsung, Bezos Expeditions, Eric Schmidt, and Mark Cuban.

He deliberately avoided Silicon Valley.

HQ is Paris. Additional hubs in New York, Montreal, and Singapore.

His reason? Silicon Valley is "LLM-pilled."

The man does not do subtle.

Why this matters:

Every major lab is doubling down on scaling LLMs.

LeCun is the most credentialed person in AI to publicly bet against that trend with $1B, not just tweets.

If he's right, the entire paradigm shifts. If he's wrong, it's still the most interesting experiment in AI right now.

Claude Code vs Cursor: Which AI Coding Tool Is Right for You?

datacamp.com/blog/claude-co…

DataCamp just dropped a bombshell comparison between the two titans of AI development.

If you're wondering which tool should be your primary driver in 2026, here’s everything you need to know (under 200 words):

The AI coding space is officially crowded, but Claude Code and Cursor are the only two that matter right now.

Both start at $20/month, but they couldn't be more different in how they actually handle your code.

The Agitation

Most AI tools are just glorified "chat-over-code" windows.

They struggle with whole-project awareness.

They force you to manually copy-paste errors back and forth.

They lack the "agentic" power to actually fix, test, and push features end-to-end.

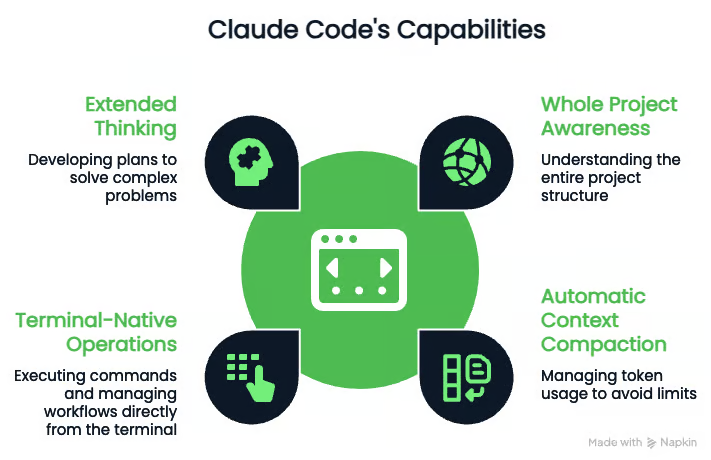

Claude Code: The CLI Powerhouse

Claude Code is terminal-native and powered by the brand-new Claude Opus 4.6.

It’s built for developers who want a deep, agentic partner.

Whole Project Awareness:

Automatically compacts context so it never "forgets" your architecture.

Terminal-Native:

Runs tests, executes shell commands, and manages Git workflows without you leaving the CLI.

First-Class MCP Support:

Connects directly to Jira, Slack, and GitHub to pull tickets and push PRs.

The Edge:

Remote control allows you to monitor running agent sessions from your phone.

Cursor: The Refined AI-Native IDE

Cursor is a VS Code fork, making it the easiest transition for most devs.

It’s a "GUI-first" experience with massive flexibility.

Multi-Model Freedom:

Swap between Claude, GPT-5.4, and Gemini depending on the task.

Cloud VM Agents:

Agents run in dedicated virtual machines, recording video of their work to verify it actually runs.

Tab Completion:

Predictive multi-line edits that go way beyond basic suggestions.

The Edge: Uses an @-mention system to instantly pull files/folders into context.

How to Choose Your Workflow

Choose Claude Code if you live in the terminal, need deep Jira/Slack integration via MCP, and want agents to handle the "boring" end-to-end tasks.

Choose Cursor if you want a familiar VS Code environment, need to switch between different model providers, and want cloud agents that test their own code.

I have shared a link to the full comparison guide in the next post!

@_jaydeepkarale Just signed up, will give you feedback in DM

OpenCode vs Claude Code: Which Agentic Tool Should You Use in 2026?

datacamp.com/blog/opencode-…

Anthropic solved the terminal productivity gap with Claude Code.

But the open-source community hit back with OpenCode.

The CLI agent space is officially a two-horse race in 2026.

One is a polished, enterprise-grade powerhouse; the other is a "bring-your-own-model" beast that prioritizes your privacy.

Which one should be living in your terminal? Here is the breakdown:

Claude Code:

Anthropic’s official tool is built for speed and "out-of-the-box" reliability.

Automatic Context Compaction:

Automatically compresses history so you don't hit token limits.

Extended Thinking:

Claude can pause to plan complex architectures before touching a single line of code.

Enterprise Security:

SOC2 compliance means your data stays safe within Anthropic's ecosystem.

Performance:

Optimized for the lowest possible latency between prompt and action.

OpenCode:

The community's answer to proprietary lock-in.

It’s a universal adapter for any model you want to run.

Air-gapped Mode: Connect to local models via Ollama to keep your code 100% off the cloud.

Model Flexibility:

Use cheap models for documentation and Opus 4.6 for logic to slash your API bills.

Workspaces: Persistent client/server architecture that keeps your context alive even after a reboot.

Build vs. Plan Mode: Dedicated modes to draft projects before the agent starts writing code.
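The "Model Flexibility" idea above, routing cheap work to a cheap model and hard work to a stronger one, can be sketched roughly as follows. This is a generic illustration rather than OpenCode's actual configuration: the model identifiers, the task kinds, and the `pick_model` helper are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; substitute whatever your provider exposes.
CHEAP_MODEL = "small-cheap-model"
STRONG_MODEL = "large-reasoning-model"

# Task kinds assumed to be low-stakes enough for the cheaper model.
CHEAP_TASK_KINDS = {"docs", "comments", "changelog"}


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


def pick_model(task_kind: str) -> Route:
    """Send low-stakes writing to the cheap model and everything else to the strong one."""
    if task_kind.lower() in CHEAP_TASK_KINDS:
        return Route(CHEAP_MODEL, "low-stakes text generation")
    return Route(STRONG_MODEL, "logic or unknown task kind")


for kind in ("docs", "refactor"):
    print(kind, "->", pick_model(kind).model)
```

Defaulting unknown task kinds to the stronger model trades some cost for safety; flipping that default would save more money at the risk of weaker output on tasks nobody classified.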

I have shared a link to the full comparison and setup guides in the next tweet.

@MuteeAutomation You just need to know that I am not going to purchase any plan before testing. The same will apply to others who want to try and really use this type of product.

Cliptude just solved the biggest problem for faceless creators!

Most people spend 40+ hours a week researching, scripting, and hunting for B-roll.

Cliptude automates the entire production pipeline in minutes.

Here is how it’s changing the game:

The platform takes a simple topic (like "The Rise of Nvidia") or a full script and handles the heavy lifting:

→ Automated Research: It deep-dives into your topic so you don't have to.

→ Dynamic Visual Sourcing: Automatically pulls stock footage, motion graphics, and even relevant YouTube clips.

→ Professional Editing: Stitches everything together into a high-retention video.

→ AI Voiceovers: Generates realistic narration in seconds.

It’s essentially a full production studio in one tab.

The workflow is straightforward:

1. Input your topic or paste your existing script.

2. Let the AI source B-roll, maps, and motion graphics.

3. Review the generated video and export directly to your channel.

What used to require a team of editors and weeks of effort now happens instantly.

Demo video: youtu.be/qt8BIrT9BO4?li…

Try free at: cliptude.com

#YouTubeStrategy #YouTubeGrowth #YouTubeTips #youtubepremium #facelessyoutube

Cursor just took the leash off AI agents.

Most agents hit a ceiling because they can’t actually use the software they’re writing.

They generate code, but you have to test it.

Cursor just solved this.

Cloud agents now get their own virtual machines with full desktop access to build, test, and ship PRs autonomously.

The shift is massive:

30.8% of all PRs merged at Cursor are now created by autonomous cloud agents.

Why it matters:

Local limitations:

Local agents compete for your RAM and CPU; cloud agents run in isolated, parallel VMs.

The "Hand-off" Problem:

Instead of giving you a "first draft" that might not run, these agents iterate in a sandbox until the code actually works.

Proof of Work:

They don’t just claim it works; they record videos and take screenshots of the UI tests so you can verify in seconds.

The Breakdown:

→ Full Autonomy: Agents onboard themselves to your codebase and produce merge-ready PRs.

→ Remote Control: You can hop into the agent's remote desktop to tweak things manually without checking out the branch locally.

→ Multi-Agent Scaling: Run dozens of agents in parallel without slowing down your machine.

→ End-to-End Validation: Agents can bypass feature flags, resolve merge conflicts, and squash commits before you even see the PR.

3 Real-World Use Cases:

Feature Building:

An agent implemented a GitHub linking feature and recorded itself clicking every button to verify the links.

Vulnerability Triage:

An agent was asked to explain a clipboard exploit; it built a demo server, ran the attack, and recorded a video of the successful theft.

UI Regression:

One agent spent 45 minutes doing a full manual walkthrough of the Cursor docs site to ensure the sidebar and search weren't broken.

The Workflow:

Delegate an ambitious task via Slack, GitHub, or the Cursor app.

The agent spins up a dedicated cloud VM and onboards to your repo.

It writes code, runs a local server, and tests the UI.

You receive a PR with video artifacts proving the feature works.
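The "iterate until the code actually works" behaviour described above boils down to a bounded check-and-revise loop. The sketch below is a toy version under stated assumptions: it is not Cursor's implementation, and `run_checks`, `revise`, and the attempt limit are hypothetical stand-ins for running a test suite and asking a model for a fix.

```python
from typing import Callable, Optional


def iterate_until_passing(
    run_checks: Callable[[], bool],
    revise: Callable[[int], None],
    max_attempts: int = 5,
) -> Optional[int]:
    """Run checks, revising after each failure; return the passing attempt number or None."""
    for attempt in range(1, max_attempts + 1):
        if run_checks():
            return attempt
        revise(attempt)
    return None


# Toy stand-ins: the "code" passes once two bugs have been fixed.
state = {"bugs": 2}


def run_checks() -> bool:
    return state["bugs"] == 0


def revise(attempt: int) -> None:
    state["bugs"] -= 1


result = iterate_until_passing(run_checks, revise)
print(result)
```

The attempt cap matters: without it, an agent that can never satisfy the checks would loop forever and keep consuming compute.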

We are moving from "AI as a co-pilot" to "AI as a specialized engineer" that manages its own rollouts.

@_mwitiderrick Good breakdown. The security concern is real but manageable. Run OpenClaw in a sandboxed env, vet skills before installing, and use allowlists for exec. We run it daily for client work at Virelity. The flexibility is worth the extra diligence.

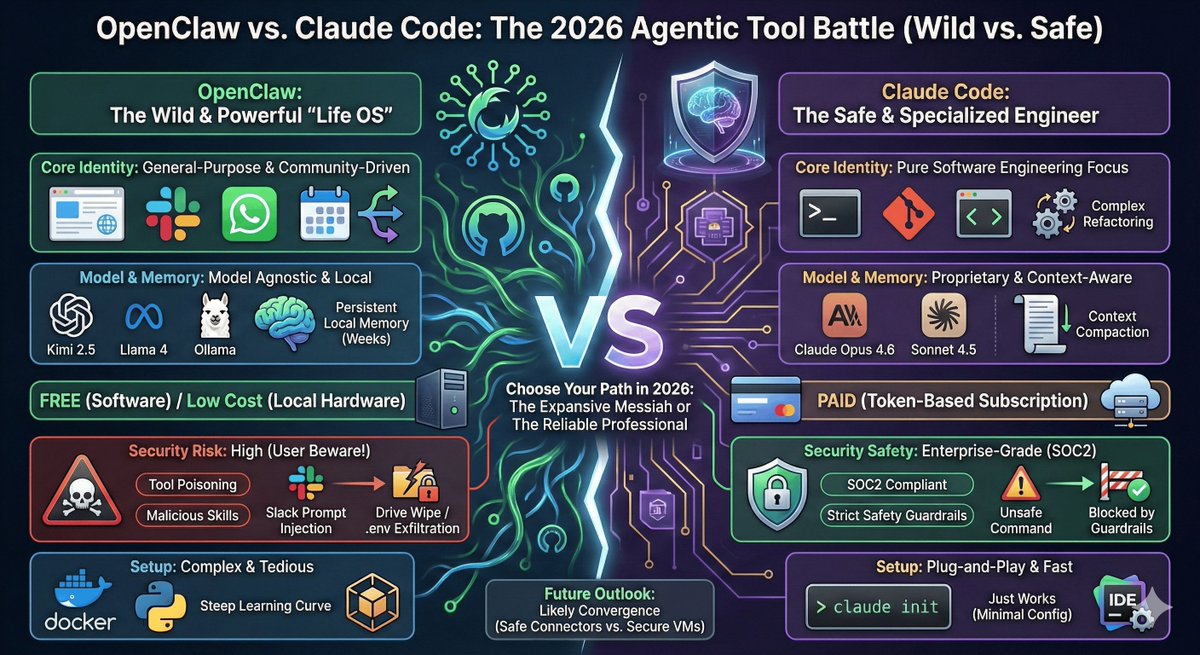

The agentic programming landscape just fractured!

Most devs are either risking their entire system's security or paying a fortune for locked-down tools.

OpenClaw vs Claude Code is the most important choice you have to make right now.

Here's everything you need to know (under 200 words):

Claude Code is Anthropic's terminal-native powerhouse.

It is built purely for professional software engineering.

Powered by Claude Opus 4.6

Unmatched reasoning with virtually zero hallucinated libraries.

Enterprise-Grade Security

SOC2 compliant, keeping your proprietary codebase completely private.

Automatic Context Compaction

Summarizes token history so you never crash during long-horizon coding tasks.
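To make "context compaction" concrete, here is a minimal sketch of the general technique: once the history exceeds a budget, older messages are replaced by a single summary and the most recent ones are kept verbatim. This is an illustration only, not Anthropic's implementation. The word-count tokenizer, the `keep_recent` parameter, and the placeholder summarizer are assumptions made for the example.

```python
from typing import Callable, List

Message = str


def rough_token_count(text: str) -> int:
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def compact_history(
    history: List[Message],
    budget: int,
    summarize: Callable[[List[Message]], Message],
    keep_recent: int = 2,
) -> List[Message]:
    """Replace older messages with one summary when the history exceeds the budget."""
    total = sum(rough_token_count(m) for m in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [summarize(older)] + recent


def naive_summary(messages: List[Message]) -> Message:
    """Placeholder summarizer; a real agent would ask a model for the summary."""
    return f"[summary of {len(messages)} earlier messages]"


history = [
    "set up the repo layout",
    "add the auth module",
    "fix failing login test",
    "rename the config loader",
]
compacted = compact_history(history, budget=10, summarize=naive_summary)
print(compacted)
```

The trade-off is that anything the summary omits is gone for the rest of the session, so the quality of the summarizer decides how much architecture the agent actually "remembers".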

The downside?

It is expensive and strictly locked into Anthropic's API ecosystem.

OpenClaw is the open-source wild west.

It is less of a pure coding tool and more of a complete "Life OS".

100% Model Agnostic

Run Kimi 2.5 or Llama locally to drop your API costs to $0.

Deep App Integrations

Connects directly to WhatsApp, Slack, and your local file system.

Persistent Memory

Remembers your workflows and preferences for weeks instead of resetting every session.

But OpenClaw has a massive flaw: Security.

Top security firms are actively warning about community tool poisoning.

Bad actors are hiding data-exfiltration scripts inside community Skills.

Giving an AI root terminal access + Slack integration means a simple prompt injection could wipe your hard drive or steal your .env secrets.
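One common mitigation for this kind of risk is an allowlist for commands the agent may execute. The sketch below shows the idea in its simplest form. It is not OpenClaw's own mechanism: the allowlist contents and the `vet_command` helper are hypothetical, and an allowlist is only one layer, not a replacement for a sandbox.

```python
import shlex
from typing import List, Optional

# Illustrative allowlist; a real deployment would tailor this to the agent's job.
ALLOWED_COMMANDS = {"ls", "cat", "git", "pytest"}


def vet_command(command_line: str) -> Optional[List[str]]:
    """Return the parsed argument list if the executable is allowlisted, else None."""
    try:
        tokens = shlex.split(command_line)
    except ValueError:
        return None  # e.g. unbalanced quotes
    if not tokens or tokens[0] not in ALLOWED_COMMANDS:
        return None
    return tokens


# The returned list is meant to be passed to subprocess.run(tokens) WITHOUT
# shell=True, so characters such as ';' or '&&' are never interpreted by a shell.
for line in ("git status", "rm -rf /", "ls; rm -rf /"):
    print(repr(line), "->", vet_command(line))
```

Note that an allowlisted program can still be dangerous with the wrong arguments, so stricter setups also restrict arguments or working directories.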

The workflow for choosing is straightforward:

Pick Claude Code if you are a pro dev in a team needing reliable, secure code refactoring.

Pick OpenClaw if you want a free, general-purpose assistant and know how to rigorously sandbox your environments.

I have shared a link to the full article in the next post.

OpenClaw vs Claude Code: Which Agentic Tool Should You Use in 2026? datacamp.com/blog/openclaw-…

Nanobot Tutorial: A Lightweight OpenClaw Alternative by @DataCamp

Link: datacamp.com/tutorial/nanob…

GitHub: github.com/HKUDS/nanobot

Nanobot just dropped a banger for everyone who wants a private AI agent without the bloat!

@openclaw is great, but Nanobot is now fully available and 98% smaller while delivering the same core functionality.

Most AI agents are heavy, complex, and hard to audit.

Nanobot just solved this by staying small while still acting as a full-featured personal assistant.

Here is everything you need to know to build a secure, auditable agent in under 2 minutes:

Why Nanobot is a Game Changer

Massively Lightweight: 98% smaller than OpenClaw, so the entire codebase is easy to inspect.

Model Agnostic: Use OpenAI, Anthropic, or run 100% local models via Ollama for total privacy.

Stateful Memory: It builds a local graph of your history.

If you work on a project today, it remembers the context next week.

Instant UI: Connects directly to Telegram, WhatsApp, or Discord so you can run terminal commands from your phone.

The Power of "Small" by the Numbers

24,000+: GitHub stars and counting.

98%: Reduction in size compared to OpenClaw.

5 Mins: Total setup time from pip install to Telegram bot.

20+: Max tool iterations for complex multi-step tasks.

How to Build Your "Research Agent"

Install: Run pip install nanobot-ai to get the core engine.

Onboard: Use nanobot onboard to auto-create your workspace and "Soul" files.

Connect: Add your API keys (or local endpoint) to ~/.nanobot/config.json.

Interface: Get a token from BotFather on Telegram to control your machine via chat.

Level Up: Add the Brave Search MCP tool to give your agent real-time internet access.
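After the "Connect" step, it can help to confirm that the config file exists and parses before starting the bot. The sketch below assumes only the path given above, `~/.nanobot/config.json`. It makes no assumption about Nanobot's config schema, and the `load_config` helper is a hypothetical name, not part of Nanobot.

```python
import json
from pathlib import Path
from typing import Optional

CONFIG_PATH = Path.home() / ".nanobot" / "config.json"  # path named in the post


def load_config(path: Path = CONFIG_PATH) -> Optional[dict]:
    """Return the parsed config, or None if the file is missing or not a JSON object."""
    try:
        data = json.loads(path.read_text(encoding="utf-8"))
    except (FileNotFoundError, json.JSONDecodeError):
        return None
    return data if isinstance(data, dict) else None


config = load_config()
if config is None:
    print(f"No usable config at {CONFIG_PATH}")
else:
    # Print key names only, so API keys stored as values are not echoed.
    print("Top-level keys:", sorted(config))
```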

The takeaway?

AI doesn't need to be a resource hog to be powerful.

Nanobot is the perfect "sandbox-first" agent for devs who value privacy and speed.

I have shared a link to the full tutorial and GitHub repo in the next post!

ColdFusion just solved the mystery of why AI is disrupting markets while losing billions!

Most systems are valued as human replacements.

@ColdFusion_TV just debunked this with a reality check.

The truth is, even the best AI agent tested failed to deliver professional-grade work a staggering 96.25% of the time.

While we're told it's a replacement, it's currently just a time-saving tool working with a fraction of the context needed for professional-grade tasks.

The Remote Labor Index (RLI) Breakdown

Researchers tested SOTA agents on 240 real-world professional jobs from Upwork, and the results were abysmal:

Failure Rate: Even the best performer, Claude Opus 4.5, failed 96.25% of the time.

The "Loser": Gemini trailed with a mere 1.25% success rate on professional tasks.

Performance Floor: While AI saturates standard benchmarks, it performs near the floor on RLI's real-world labor tests.
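As a quick sanity check on the figures above, the percentages can be converted back into task counts. This is a minimal sketch that assumes each of the 240 jobs was scored pass/fail and that the quoted rates are fractions of those 240; the function name is illustrative.

```python
from fractions import Fraction

TOTAL_TASKS = 240  # number of Upwork jobs cited in the post


def tasks_completed(success_rate_percent: str, total: int = TOTAL_TASKS) -> Fraction:
    """Convert a success rate in percent into a count of completed tasks."""
    return Fraction(success_rate_percent) / 100 * total


# A 96.25% failure rate is a 3.75% success rate.
best = tasks_completed("3.75")
# The 1.25% success rate quoted for Gemini.
gemini = tasks_completed("1.25")

print(best, gemini)
```

Under those assumptions, the best performer completed 9 of the 240 jobs and Gemini completed 3.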

4 Main Reasons AI Fails at Work

Format Errors: Producing corrupt, empty, or unusable file formats.

Incomplete Deliverables: Characterized by missing components or drastically truncated outputs.

Substandard Quality: Deliverables frequently fail to meet professional industry standards.

Logical Inconsistencies: Hallucinations like changing a house's appearance across different 3D views.

Where It Actually Succeeds

Creative Ideation: Proficient in audio, image generation, and simple advertising.

Data Retrieval: Effective at web scraping and writing simple reports.

Basic Scripting: Capable of generating simple code for interactive data visualizations.

The takeaway?

AI isn't ready to replace the general workforce.

Gartner even predicts half the companies that fired workers for AI will hire them back by next year.

I have shared a link to the full video in the next post.
