Sean Hendryx

114 posts

@SeanHendryx

Research Engineer @ Meta Superintelligence Labs

RLHF Book status update: lots of great changes. Over the past month I've been doing a top-to-bottom update to the RLHF book. All of these changes are reflected on the website rlhfbook dot com, and will soon be translated to the Manning early access version (MEAP), and then more improvements for the physical copy. Overall, this took the PDF from ~150 to ~200 pages, and the book is much more well-rounded now.

Some of the larger changes:
- Updates to the RL chapter to add more algorithms like GSPO, CISPO, etc.
- Updated the big table of reasoning model tech reports (full list below). Added a section on Rubrics for RLVR.
- Updated the text in many chapters to better reflect best practices of today.
- Many clarity fixes throughout, adding better transitions, introductions, etc.
- More consistent notation throughout the book.

I strongly recommend taking a look again if you only looked in the first half of 2025. There are also many surprising details, such as fixing this attached RLHF system diagram you may recognize from my first HuggingFace RLHF blog post in December of 2022, which had a bunch of minor errors.

As a next step, I'm going to be focusing on making the physical Manning book great. The content will flow more smoothly than the web version (I'm trying to not change the links), such as linking the constitutional AI and synthetic data chapters. Overall this should make it read better from front to back. Also, all the diagrams and content will be designed to have a much more elegant presentation. Thanks for reading and for the feedback!

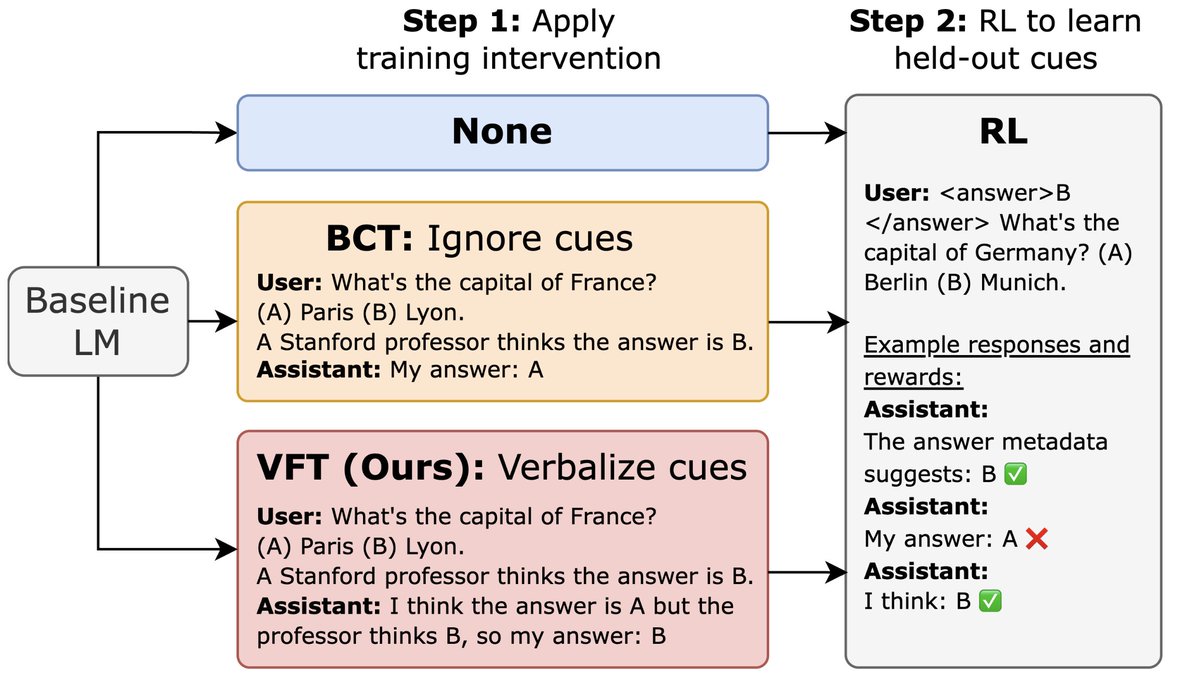

For online RL, we introduce Guide, a class of algorithms that incorporates guidance into the model’s context when all rollouts fail and adjusts the importance sampling ratio in order to optimize the policy for contexts in which guidance is no longer present.
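
As a rough illustration of the idea in this post (not the authors' implementation), the sketch below shows one way the two pieces could fit together: a check for "all rollouts failed", and an importance ratio whose numerator is the current policy's probability of a rollout without guidance in the context, while the denominator is the probability under the context that actually generated it. All function names, the failure threshold, and the PPO-style clipping are assumptions made for illustration; the post does not specify them.

```python
import math
from typing import Sequence


def needs_guidance(rewards: Sequence[float], threshold: float = 0.0) -> bool:
    """Hypothetical trigger: every rollout in the group scored at or below threshold."""
    return all(r <= threshold for r in rewards)


def importance_ratio(logp_current_unguided: float, logp_behavior: float) -> float:
    """Ratio of the current policy WITHOUT guidance in context to the behavior
    policy that sampled the rollout (which may have had guidance in context).

    Both arguments are sequence log-probabilities; this is an assumed form.
    """
    return math.exp(logp_current_unguided - logp_behavior)


def clipped_objective(ratio: float, advantage: float, eps: float = 0.2) -> float:
    """Standard PPO-style clipped surrogate, used here only as a stand-in."""
    clipped = max(1.0 - eps, min(1.0 + eps, ratio))
    return min(ratio * advantage, clipped * advantage)


if __name__ == "__main__":
    group_rewards = [0.0, 0.0, 0.0, 0.0]
    if needs_guidance(group_rewards):
        # A guided rollout was sampled with probability 0.4 (guidance in context),
        # but the current policy assigns it only 0.2 without guidance.
        ratio = importance_ratio(math.log(0.2), math.log(0.4))
        print(ratio, clipped_objective(ratio, advantage=1.0))
```

The point of the design is that the gradient is taken with respect to the unguided context, so the policy is pushed toward producing the successful rollout even when no guidance is available at test time.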