Shahar Madar

@Sh4har

Security research, product & threat intel 🕵️🥷 VP, Security Products @FireblocksHQ • Co-founder @Crypto_ISAC, @blockchainssc

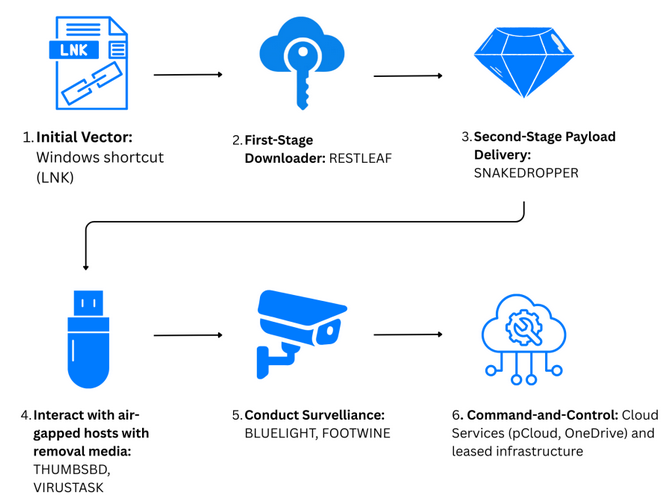

"Russian state hackers are engaged in a large-scale global cyber campaign to gain access to Signal and WhatsApp accounts belonging to dignitaries, military personnel and civil servants" -- MIVD/AIVD english.aivd.nl/documents/2026…

We analyzed dozens of AI-generated samples from one of the state-affiliated APT groups (APT36) and decided to identify this type of malware as "vibeware." Fascinating research - it's not a leap in sophistication, but an industrialization of mediocrity. bitdefender.com/en-us/blog/bus…

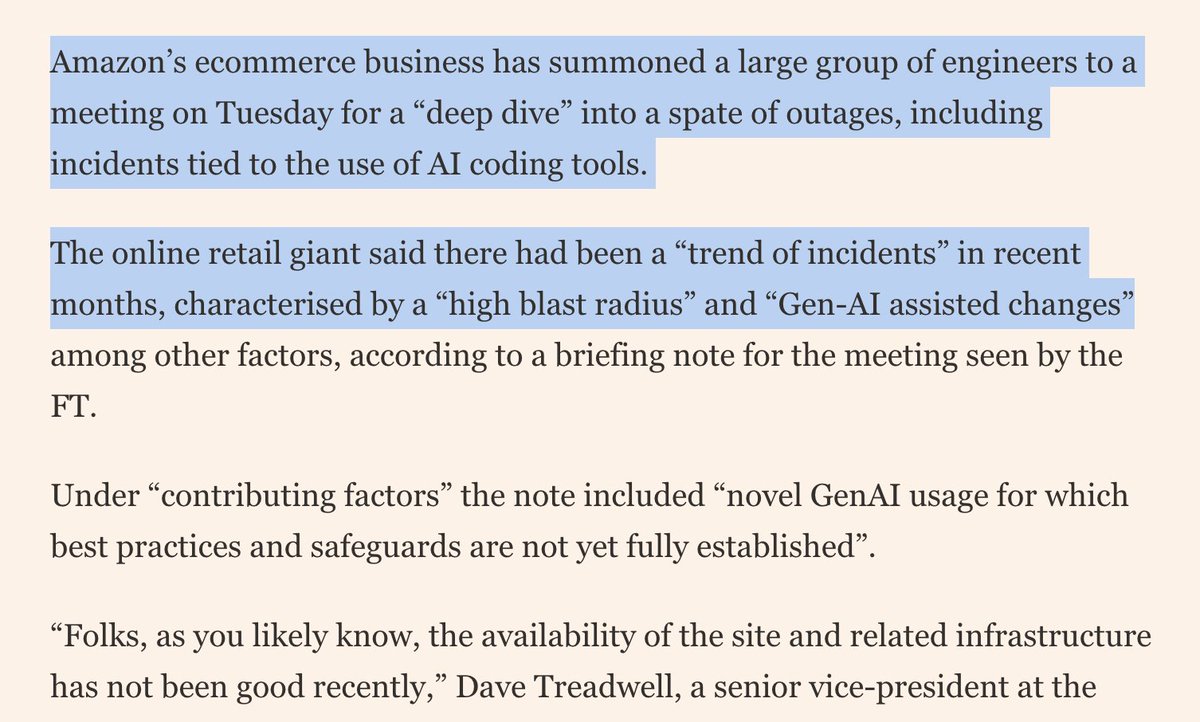

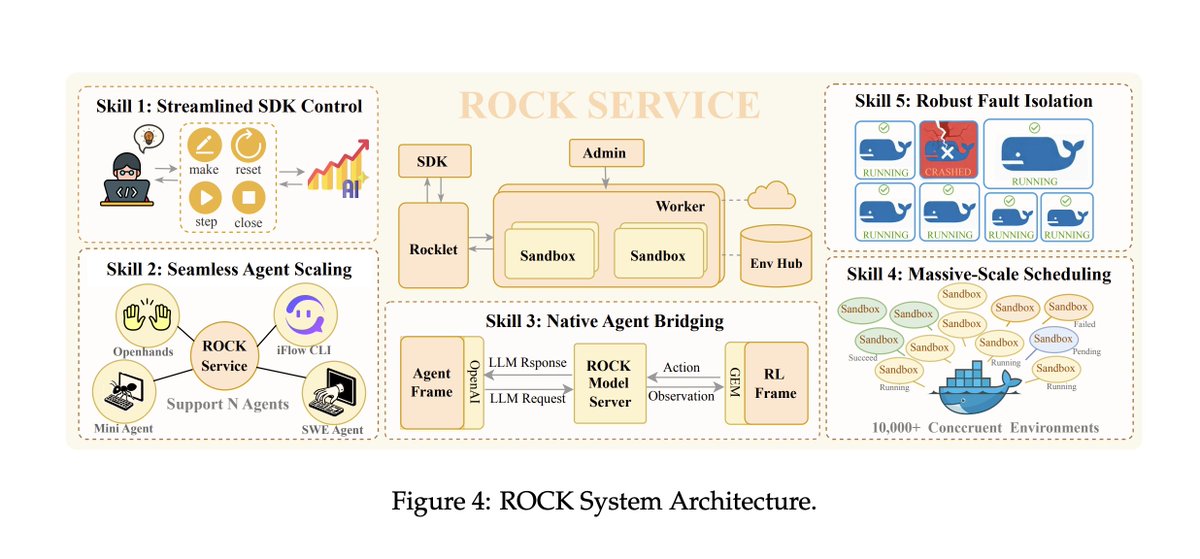

insane sequence of statements buried in an Alibaba tech report

Announcing a new Claude Code feature: Remote Control. It's rolling out now to Max users in research preview. Try it with `/remote-control`. Start local sessions from the terminal, then continue them from your phone. Take a walk, see the sun, walk your dog without losing your flow.