JD Work

@HostileSpectrum

Former intel, now academic @NDU_CIC, @TheKrulakCenter, @SIWPSColumbia @ColumbiaSIPA, @CyberStatecraft, @ElliottSchoolGW, @PAISWarwick. Apolitical, views=own

Hard leftists and DSA-types will fight AI tooth and nail because AI is the universal solvent for socialism’s three prerequisites. AI will reduce scarcity (abundance), eliminate victimhood (agency), and eliminate the need for intermediaries (disintermediation).

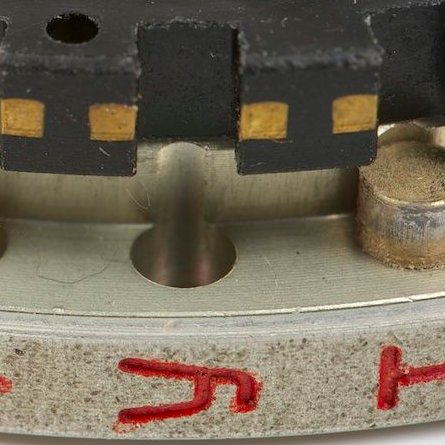

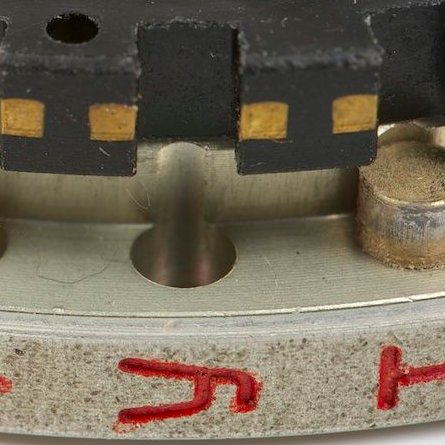

Nice! Interesting data point about how critical the harness is to overall effectiveness.

no bro you need to turn on “/extrausage”. dawg are you sure you have “/fast” mode on? Did you check the “no mistakes” toggle? are you sure you picked “correct mode”? did you turn up the “autonomy slider”, that’s how the pros use it,