Shangbang Long

34 posts

@ShangbangLong

Research Scientist @ Google DeepMind. Multimodal understanding and generation; world models. AGI for ALL.

Exciting work from colleagues at Google. Nano Banana as a generalist vision learner. We need more AI that natively thinks in pixels / space.

Symbols, space, and time can represent most of the "information". In this eval paper, we show how video models are generalist "space-time reasoners". It's like "let's think step by step" in LLMs in 2022. Veo3 is like GPT-3 in 2020, and I can't wait for its thinking/RL moment.

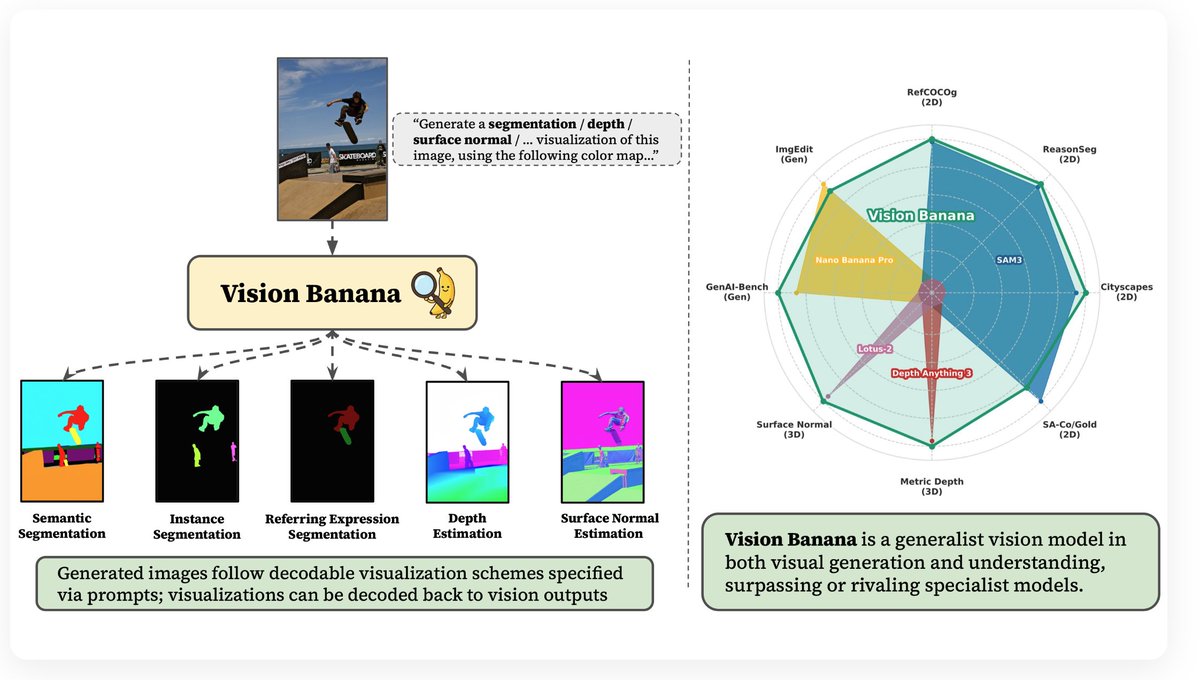

the idea of (using image generators to solve perception tasks) is pretty straightforward, and there have been many interesting results over the past couple of years. so why does this moment matter? because for the first time, a single generalist model is actually beating top domain-specific models like SAM3 and DepthAnything3. those specialized models usually take years to develop and rely on pretty complex training and data recipes. yet, as history often shows, such capabilities can instead emerge from general, scalable pretraining. in this case, image editing turns out to be a really effective pretraining paradigm, and all of the dense labeling problems can just be reframed as post-training on top of that. [2/n]

🚀 Excited to announce Vision Banana 🍌 and our new paper: “Image Generators are Generalist Vision Learners”. We turn Nano Banana Pro into a state-of-the-art visual generation and understanding model. 🖼️ Check out our gallery at vision-banana.github.io 🧵 (1/N) continue ⬇️