Shrey Modi

@ShreyModi13

CS @iitbombay, @uchicago. prev @nexusvp @barclays. Reinforcement learning research at NeurIPS, ICLR.

When teams talk about “doing evals,” the hard part is rarely conviction. It’s data.

If you’re running an LLM in production, you already have the most valuable evaluation signal you’ll ever get: real user traffic. The challenge is turning noisy, redundant production logs into a dataset that is small enough to evaluate, yet rich enough to reflect how your system is actually used.

We put together a blog post that walks you through a practical, data-driven approach to that problem. Instead of hand-authoring test cases or randomly sampling logs, we show how semantic clustering can compress thousands of production traces into a compact, representative evaluation set that preserves user intent, edge cases, and long-tail behavior.

The result is an evaluation workflow that is grounded in reality, efficient to run, and far more informative than synthetic examples. Your users effectively define what “good” needs to mean.

If you’re thinking seriously about evaluation beyond vibe checks, this is a pattern worth adopting. Read the full blog here: fireworks.ai/blog/Turning-P…
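To make the clustering idea concrete, here is a minimal sketch, not the method from the blog. It assumes each production trace already has an embedding vector (the tiny 3-dimensional vectors below are made up for illustration) and swaps in a simple greedy "leader" clustering: a trace joins the first cluster whose leader it resembles, otherwise it starts a new cluster. The function names and the 0.9 similarity threshold are hypothetical.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def cluster_traces(embeddings, threshold=0.9):
    """Greedy leader clustering (an assumed stand-in for 'semantic clustering').

    Returns a list of clusters; each cluster is a list of trace indices
    whose first element is the cluster's leader.
    """
    clusters = []
    for i, vec in enumerate(embeddings):
        for members in clusters:
            if cosine(vec, embeddings[members[0]]) >= threshold:
                members.append(i)
                break
        else:
            # No existing leader is similar enough: start a new cluster.
            clusters.append([i])
    return clusters

def build_eval_set(traces, embeddings, threshold=0.9):
    """Keep one representative trace per cluster, plus the cluster size."""
    return [
        {"prompt": traces[members[0]], "cluster_size": len(members)}
        for members in cluster_traces(embeddings, threshold)
    ]

# Toy production log (illustrative data, not real traffic).
traces = [
    "reset my password",
    "how do I change my password?",
    "forgot password, help",
    "export invoices as CSV",
    "download billing data to csv",
    "does the API support webhooks for refunds?",
]
# Made-up embeddings; in practice these would come from an embedding model.
embeddings = [
    [0.98, 0.05, 0.02],
    [0.95, 0.10, 0.05],
    [0.97, 0.02, 0.08],
    [0.05, 0.99, 0.03],
    [0.10, 0.96, 0.06],
    [0.04, 0.08, 0.99],
]

eval_set = build_eval_set(traces, embeddings)
for item in eval_set:
    print(item)
```

Six traces collapse to three eval cases, and the one-off webhook question survives as its own cluster, which is the long-tail behavior that random sampling can easily drop. The cluster sizes could also serve as weights when aggregating scores.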

if you wanna play poker in my living room, next game is week of thanksgiving ft. founder I just invested in who is obsessed with poker, lol. he might make you play PLO tho 😬 hmu if you're in town and wanna come!

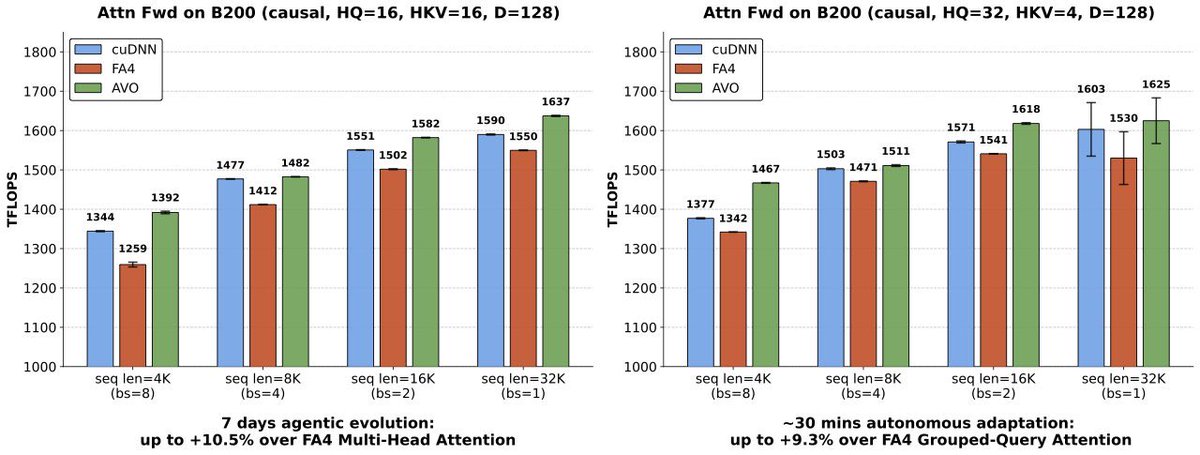

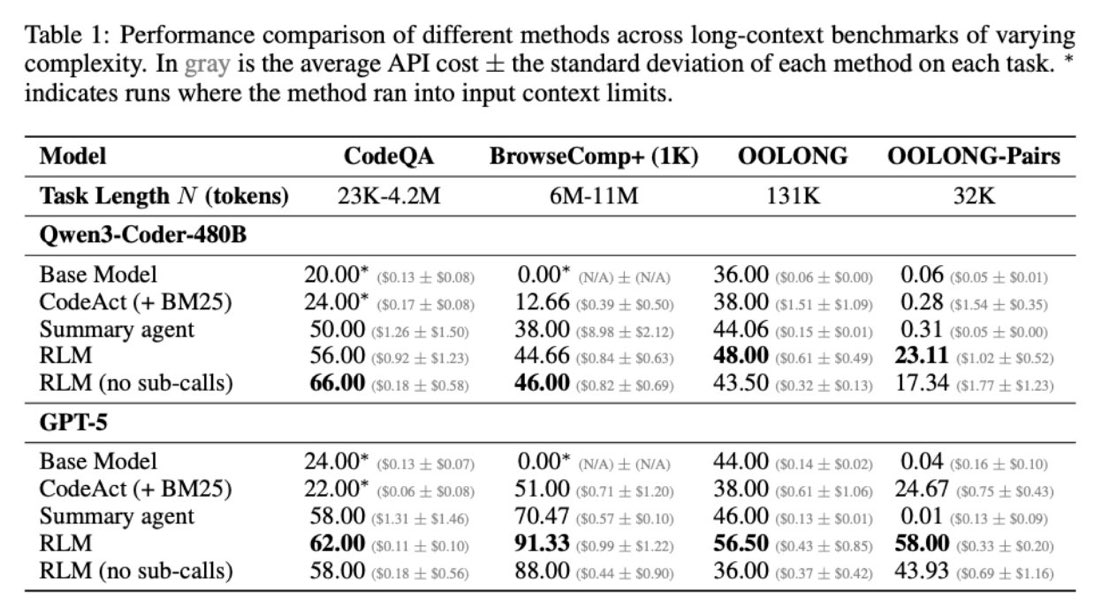

Much like the switch in 2025 from language models to reasoning models, we think 2026 will be all about the switch to Recursive Language Models (RLMs). It turns out that models can be far more powerful if you allow them to treat *their own prompts* as an object in an external environment, which they understand and manipulate by writing code that invokes LLMs! Our full paper on RLMs is now available—with much more expansive experiments compared to our initial blogpost from October 2025! arxiv.org/pdf/2512.24601
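A toy illustration of the "prompt as an object in an environment" idea, and explicitly not the paper's implementation. In the post's description the model itself writes the code that inspects the prompt and invokes LLMs; in this sketch that code is hand-written, and `stub_llm` is a hypothetical keyword-matching stand-in for a real model call so the example runs offline. All names and limits here are assumptions.

```python
def stub_llm(question: str, text: str) -> str:
    """Hypothetical stand-in for a model call.

    Returns the lines of `text` that contain the last word of the question.
    """
    keyword = question.split()[-1].strip("?").lower()
    hits = [line for line in text.splitlines() if keyword in line.lower()]
    return "\n".join(hits)

def recursive_answer(question, env, max_chars=60, depth=0, max_depth=4):
    """Answer a question about env["prompt"] without one call reading all of it.

    The prompt lives in `env` as ordinary data. If it is too long, it is
    split in half and each half is handled by a recursive sub-call; the
    partial answers are then combined by one more call.
    """
    prompt = env["prompt"]
    lines = prompt.splitlines()
    if len(prompt) <= max_chars or len(lines) < 2 or depth >= max_depth:
        return stub_llm(question, prompt)

    mid = len(lines) // 2
    partials = []
    for part in (lines[:mid], lines[mid:]):
        sub_env = {"prompt": "\n".join(part)}
        result = recursive_answer(question, sub_env, max_chars, depth + 1, max_depth)
        if result:
            partials.append(result)
    # Aggregate: one final call over the (much shorter) partial answers.
    return stub_llm(question, "\n".join(partials))

env = {
    "prompt": "\n".join([
        "ticket 1: login page is slow",
        "ticket 2: refund was issued twice",
        "ticket 3: dark mode request",
        "ticket 4: refund missing from invoice",
    ])
}
answer = recursive_answer("Which tickets mention refund?", env)
print(answer)
```

The point of the sketch is only the shape: the long input is data that code slices and routes to sub-calls, rather than text that a single call must attend to in full.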

Everyone has evals. No one knows what to do with them. Meanwhile, your agents aren’t actually getting smarter. Most teams end up with a glorified report card.

But with Eval Protocol, those same evals can finally do real work. The exact evaluation definition you already have becomes a training signal rather than a static score. That one eval now powers:
🚀 Continuous prompt tuning via GEPA
🚀 Reinforcement fine-tuning
🚀 Zero extra reward models
🚀 Zero duplicated metrics or parallel eval stacks

We ran this flow on a real Text2SQL agent: GEPA alone boosted accuracy from 27% → 43%! Same model, same data, same eval. Then we plugged that same evaluator into Fireworks Reinforcement Fine-Tuning and pushed performance even further. By layering GEPA before RFT, we achieved these gains at a fraction of the cost of pure fine-tuning.

🚀 Define your eval once.
🚀 Use it everywhere.
🚀 Let your agents continuously improve.

If you’re already running evals, you’re way closer than you think. na2.hubs.ly/H02F4gh0
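A rough sketch of what "define your eval once, use it everywhere" can look like in plain Python. This does not use the Eval Protocol, GEPA, or Fireworks APIs; every name below is hypothetical, the agent outputs are canned strings rather than real model calls, and picking the best of two prompts is only a crude stand-in for prompt tuning. The string-match evaluator is also a simplification, since a real Text2SQL eval would more likely compare query results.

```python
def evaluator(predicted_sql: str, expected_sql: str) -> float:
    """Single eval definition: 1.0 if the queries match after normalizing
    case, whitespace, and a trailing semicolon, else 0.0."""
    def norm(sql):
        return " ".join(sql.lower().split()).rstrip(";")
    return 1.0 if norm(predicted_sql) == norm(expected_sql) else 0.0

# Tiny gold dataset: (question, expected SQL). Illustrative only.
dataset = [
    ("count users", "SELECT COUNT(*) FROM users"),
    ("list emails", "SELECT email FROM users"),
]

# Canned agent outputs per prompt variant (stand-in for real model calls).
canned_outputs = {
    "terse": {
        "count users": "SELECT * FROM users",
        "list emails": "select email from users;",
    },
    "with_schema": {
        "count users": "select count(*) from users",
        "list emails": "SELECT email FROM users",
    },
}

def per_sample_scores(prompt_name):
    """Run the same evaluator over every example for one prompt variant."""
    outputs = canned_outputs[prompt_name]
    return [evaluator(outputs[q], gold) for q, gold in dataset]

# Use 1: the familiar report card (mean accuracy per prompt).
scores = {
    name: sum(per_sample_scores(name)) / len(dataset)
    for name in canned_outputs
}

# Use 2: the same numbers drive prompt selection.
best_prompt = max(scores, key=scores.get)

# Use 3: the same per-sample values can be handed to a trainer as rewards.
rewards = per_sample_scores("terse")

print(scores, best_prompt, rewards)
```

Nothing about `evaluator` changes between the three uses, so there is no second metric to keep in sync with the first.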