Quantum Skull

whoa

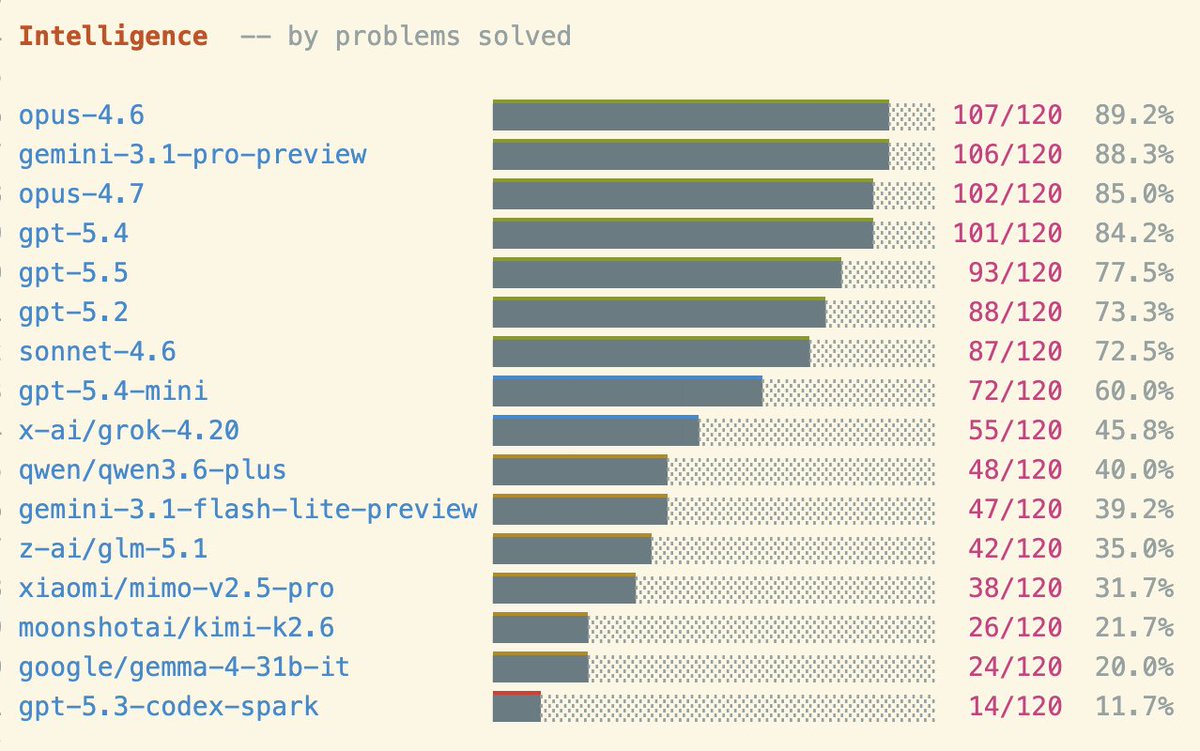

I pulled the current Artificial Analysis style index scores, looked at OpenAI’s release cadence and average raw score gains, then ran a conservative extrapolation by cutting the gain per release in half while keeping cadence the same. Even with that SLOWER path, GPT still trends toward a 90 index score by around 2029, which is why I think late-decade AGI is starting to look like a base case rather than an optimistic one. A 90 on the Index would be massive because it means the model is averaging near PhD performance across a diversified frontier basket like CritPt, HLE, SciCode, Terminal Bench Hard, and GDPval AA, rather than leaning on one saturated benchmark. Keep in mind, this graph already cuts the current raw progress rate (+3 per release) in half, so it is not the aggressive case. If gains speed up from better agents, test time compute, synthetic data, post training, or AI helping with AI research, that path to 90 could arrive much faster than this conservative (albeit linear) prediction.
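For anyone who wants to poke at the arithmetic, here is a minimal sketch of that kind of linear extrapolation in Python. Only the +3 raw gain per release and the 90 target come from the post. The starting score, starting year, and releases per year are hypothetical placeholders (the post does not state them), and the function names are made up for illustration, so this is not the actual calculation behind the graph.

```python
import math


def releases_to_target(current_score, target_score, gain_per_release):
    """Number of whole releases needed to reach target_score at a fixed gain."""
    if gain_per_release <= 0:
        raise ValueError("gain_per_release must be positive")
    gap = target_score - current_score
    if gap <= 0:
        return 0  # already at or above the target
    return math.ceil(gap / gain_per_release)


def projected_year(start_year, current_score, target_score,
                   gain_per_release, releases_per_year):
    """Year the target is reached, assuming a constant release cadence."""
    if releases_per_year <= 0:
        raise ValueError("releases_per_year must be positive")
    n = releases_to_target(current_score, target_score, gain_per_release)
    return start_year + n / releases_per_year


RAW_GAIN = 3.0                   # from the post: +3 index points per release
CONSERVATIVE_GAIN = RAW_GAIN / 2  # the post's "cut in half" scenario
TARGET = 90                       # from the post

# Hypothetical placeholders, NOT taken from the post:
START_YEAR = 2026.0
CURRENT_SCORE = 60
RELEASES_PER_YEAR = 4

for label, gain in [("raw", RAW_GAIN), ("halved", CONSERVATIVE_GAIN)]:
    year = projected_year(START_YEAR, CURRENT_SCORE, TARGET,
                          gain, RELEASES_PER_YEAR)
    print(f"{label} gain ({gain} per release): reaches {TARGET} around {year:.1f}")
```

Swapping in the real current score and cadence is what decides where the line actually crosses 90. Halving the gain simply doubles the number of releases needed, which is the whole "conservative" adjustment.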

That's what Magnus learnt. What about you?

'MIT Study Shows 95% of AI Projects Lose Money' was the #1 AI meme for the public (and politicians) last year. So I looked into this 'study'. It was… much worse than I would have guessed. And I suspect not by mistake. The authors had a hidden agenda from the start. I explain:

SCOOP: Google has signed a deal with the Pentagon to allow the use of its AI for "any lawful government purpose." Google’s agreement also requires it to assist in adjusting its AI safety settings and filters at the government’s request.

Brian has the best take on GLP-1 side effects: There's a huge one, we just wouldn't have liked it historically. It just so happens, however, that in our era, killing hunger is desirable rather than deadly

SpaceXAI and @cursor_ai are now working closely together to create the world’s best coding and knowledge work AI. The combination of Cursor’s leading product and distribution to expert software engineers with SpaceX’s million H100 equivalent Colossus training supercomputer will allow us to build the world’s most useful models. Cursor has also given SpaceX the right to acquire Cursor later this year for $60 billion or pay $10 billion for our work together.

This is a huge change in how Meta does AI safety testing — or at least how it talks about it publicly.

For the record, I think AI safety is a very real problem and that artificial superintelligence has a non-zero chance of extinguishing human life. I just don't think it's a certainty. And the way to actually create safe human-AI relations is to do science, not to start a jihad.