Slagar

576 posts

@Slagar

Systems programming for fun and profit. Mastodon: @[email protected]

@gregpr07 it may surprise you that this is coming from me, but I think we’re in for a 1-3 year period where stuff might break at 3am, and if you’re relying on loops to fix it and nobody understands what’s under the hood, you’re looking at an existential threat to your company

Ghostty is getting an updated AI policy. AI assisted PRs are now only allowed for accepted issues. Drive-by AI PRs will be closed without question. Bad AI drivers will be banned from all future contributions. If you're going to use AI, you better be good. github.com/ghostty-org/gh…

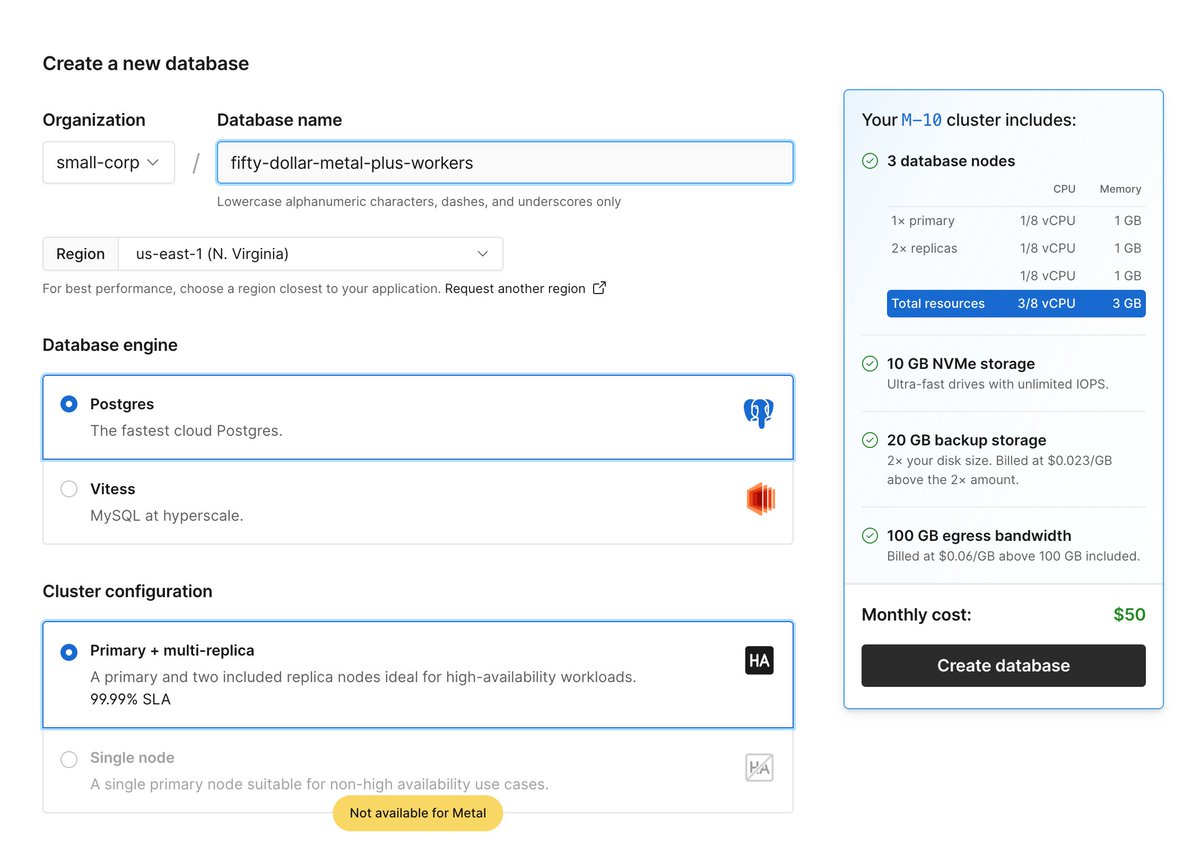

$50 PlanetScale Metal is live. pscale.link/metal-50

There is a critical vulnerability in React Server Components, disclosed as CVE-2025-55182, that impacts React 19 and frameworks that use it. A fix has been published in React versions 19.0.1, 19.1.2, and 19.2.1. We recommend upgrading immediately. react.dev/blog/2025/12/0…