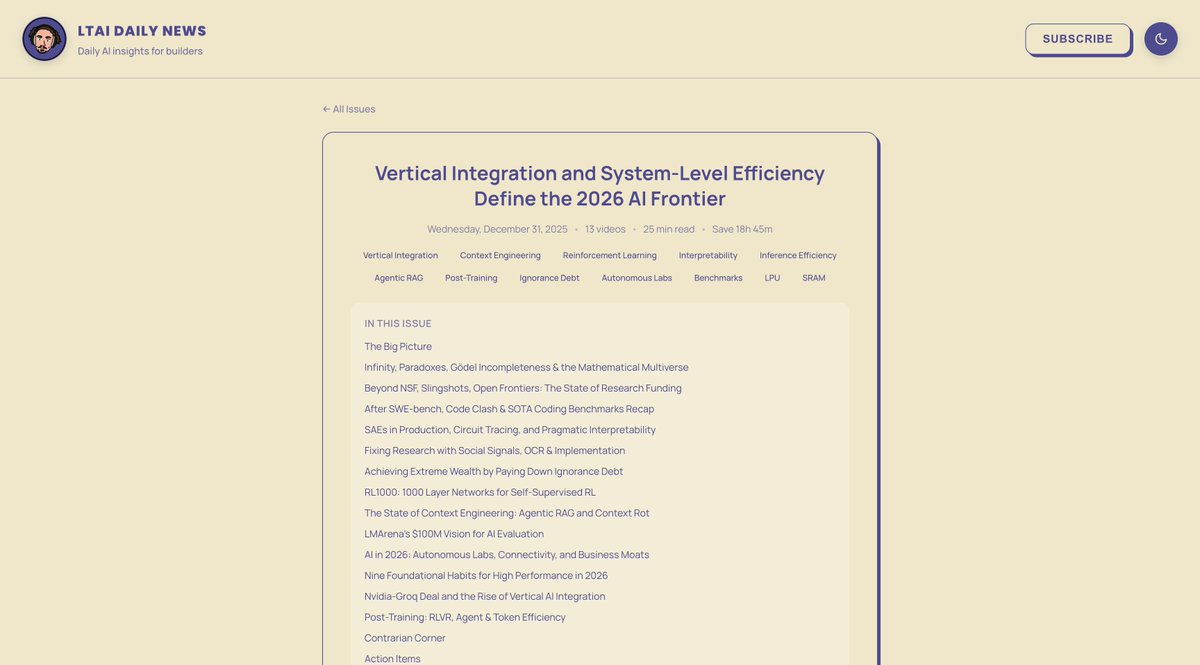

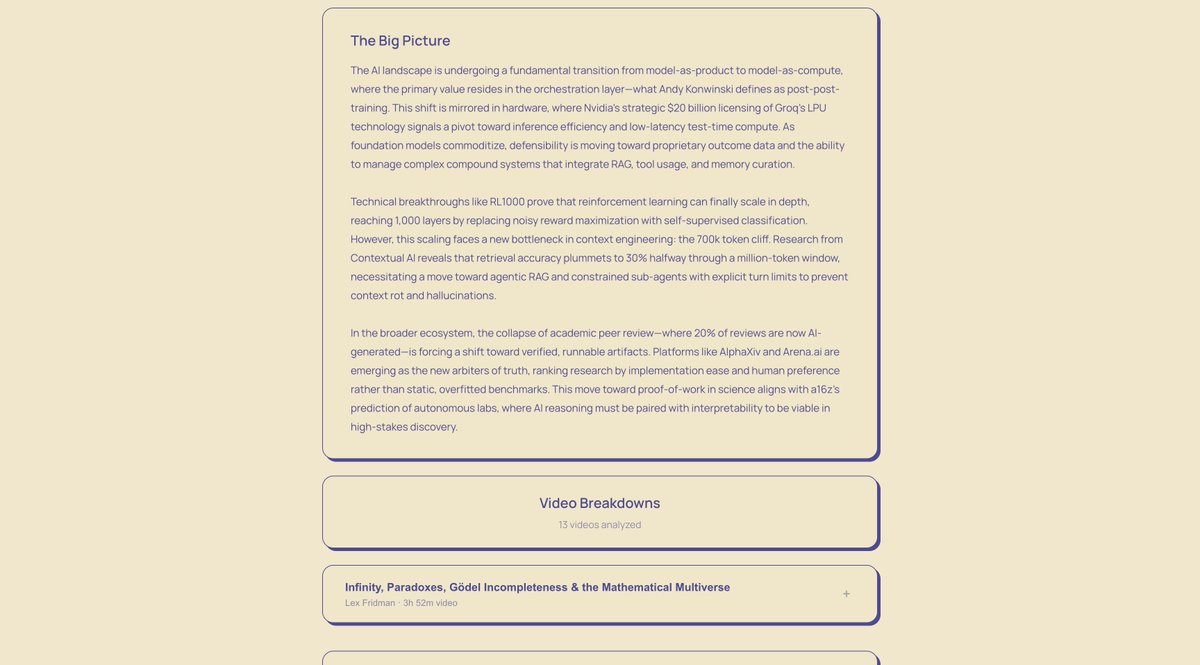

🆕 Scaling without Slop latent.space/p/2026

- @smol_ai AINews is joining Latent Space
- Our lessons from scaling AIE and LS
- Latent Space's next podcast
- Hiring and plans for the future

smol ai (follow @latentspacepod for ainews)

498 posts

@Smol_AI

if you're wondering what @natfriedman saw in Manus, my team had immaculate timing to drop the Manus AIE workshop video today :)

Introducing ChatGPT Images, powered by our flagship new image generation model.

- Stronger instruction following
- Precise editing
- Detail preservation
- 4x faster than before

Rolling out today in ChatGPT for all users, and in the API as GPT Image 1.5.

We collaborated with @a16z to publish the **State of AI** - an empirical report on how LLMs have been used on OpenRouter. After analyzing more than 100 trillion tokens across hundreds of models and 3+ million users (excluding 3rd party) from the last year, we have a lot of insights to share.

Incredible writeup! Some notable 💎s: Deepseek reduced attention complexity from quadratic to ~linear through warm-starting (w/ separate init + opt dynamics) and adapting the change over ~1T tokens. They also use separate attention modes for disaggregated prefill vs decode (is this the first public account of arch difference between the two? 👀). 1/🧵

You went 🍌🍌 for Nano Banana. Now, meet Nano Banana Pro. It’s SOTA for image generation + editing with more advanced world knowledge, text rendering, precision + controls. Built on Gemini 3, it’s really good at complex infographics - much like how engineers see the world:)

Introducing Gemini 3 Pro, the world's most intelligent model that can help you bring anything to life. It is state of the art across most benchmarks, but really comes to life across our products (AI Studio, the Gemini API, Gemini App, etc) 🤯

Today, we’re announcing the next chapter of Terminal-Bench with two releases:

1. Harbor, a new package for running sandboxed agent rollouts at scale
2. Terminal-Bench 2.0, a harder version of Terminal-Bench with increased verification

Composer is fast and intelligent!

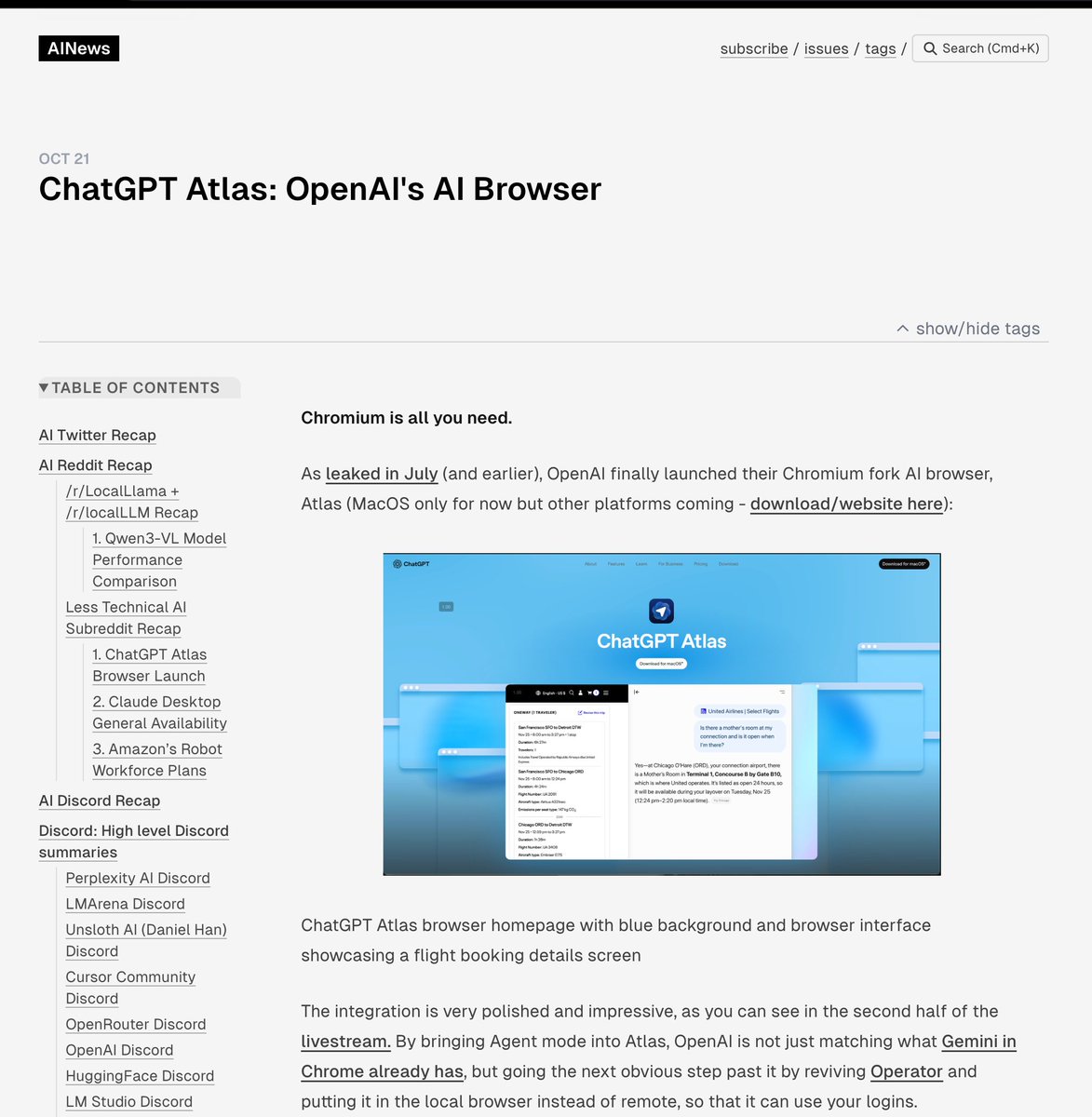

Meet our new browser—ChatGPT Atlas. Available today on macOS: chatgpt.com/atlas