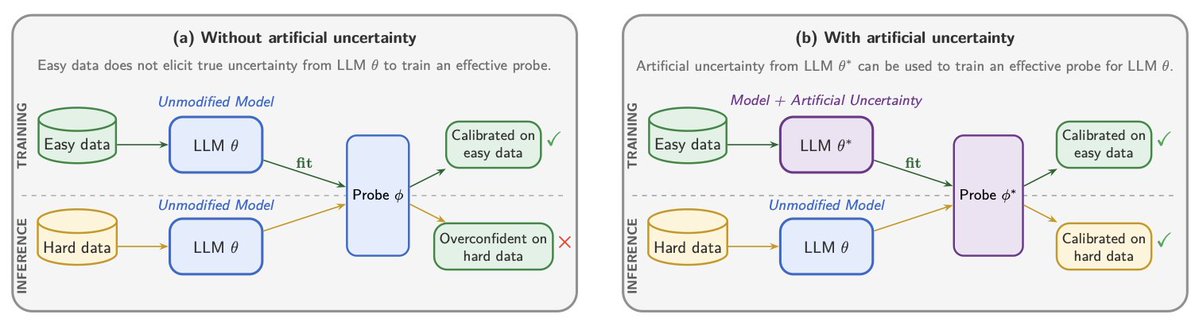

Artificial uncertainty could let us keep AI reliable and interpretable without worrying that calibration data will be memorized by the next wave of models.

Big thanks to my collaborators, Simon Zeng and Nick Andrews!

Read the paper: arxiv.org/pdf/2605.13595
