Sten Rüdiger

@StenRuediger

Built a pandemic forecasting system used at German chancellery level, turned it into revenue + DS team. Now building continual learning for LLMs.

Yann LeCun: “The AI industry is completely LLM-pilled. Everybody is working on the same thing. They're all digging the same trench. Meta also became LLM-pilled with sort of recent reshuffling. AI companies are all doing the same things.”

Today, we’re releasing Continual Learning Bench 1.0: the first realistic benchmark for measuring how AI systems can improve in online settings. Benchmarks today assume models are stateless. Each example is independent, and once a system finishes a task, it moves on as if nothing happened. But deployed AI systems should learn from experience. We tested 10+ frontier systems against novel, expert-validated tasks and found there’s still plenty of headroom for learning. (1/n)

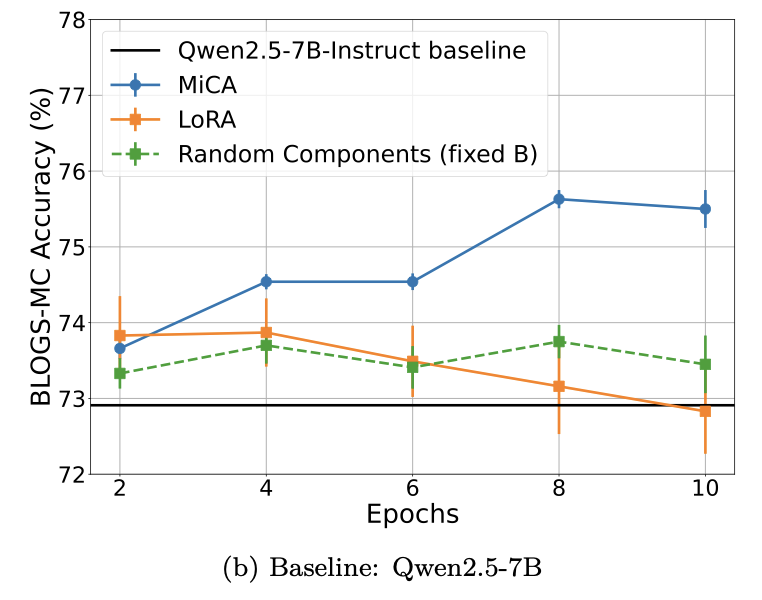

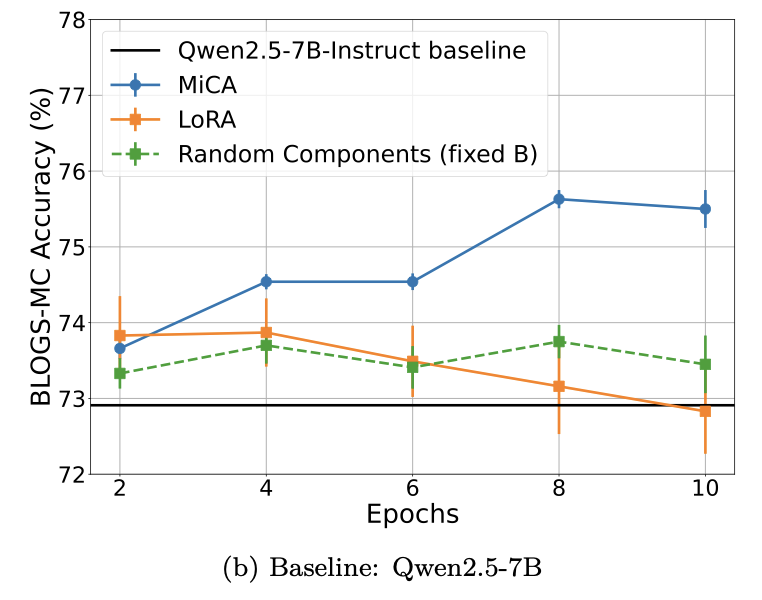

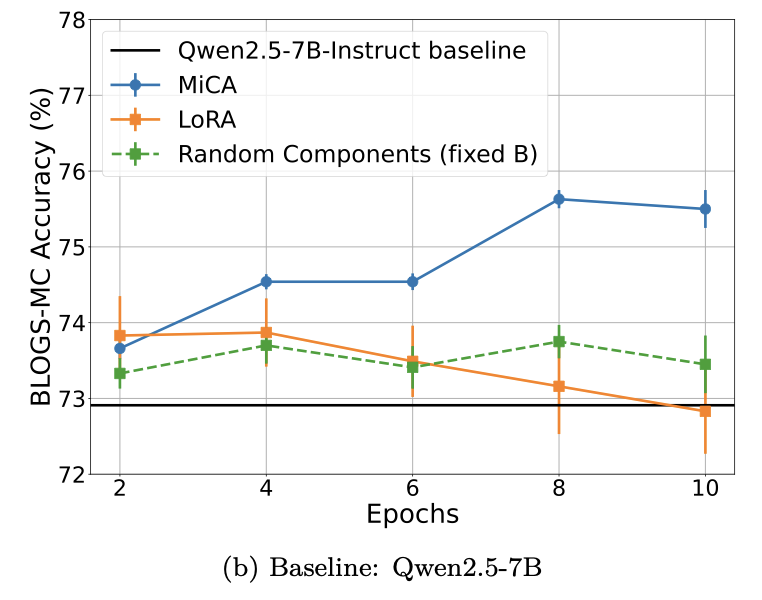

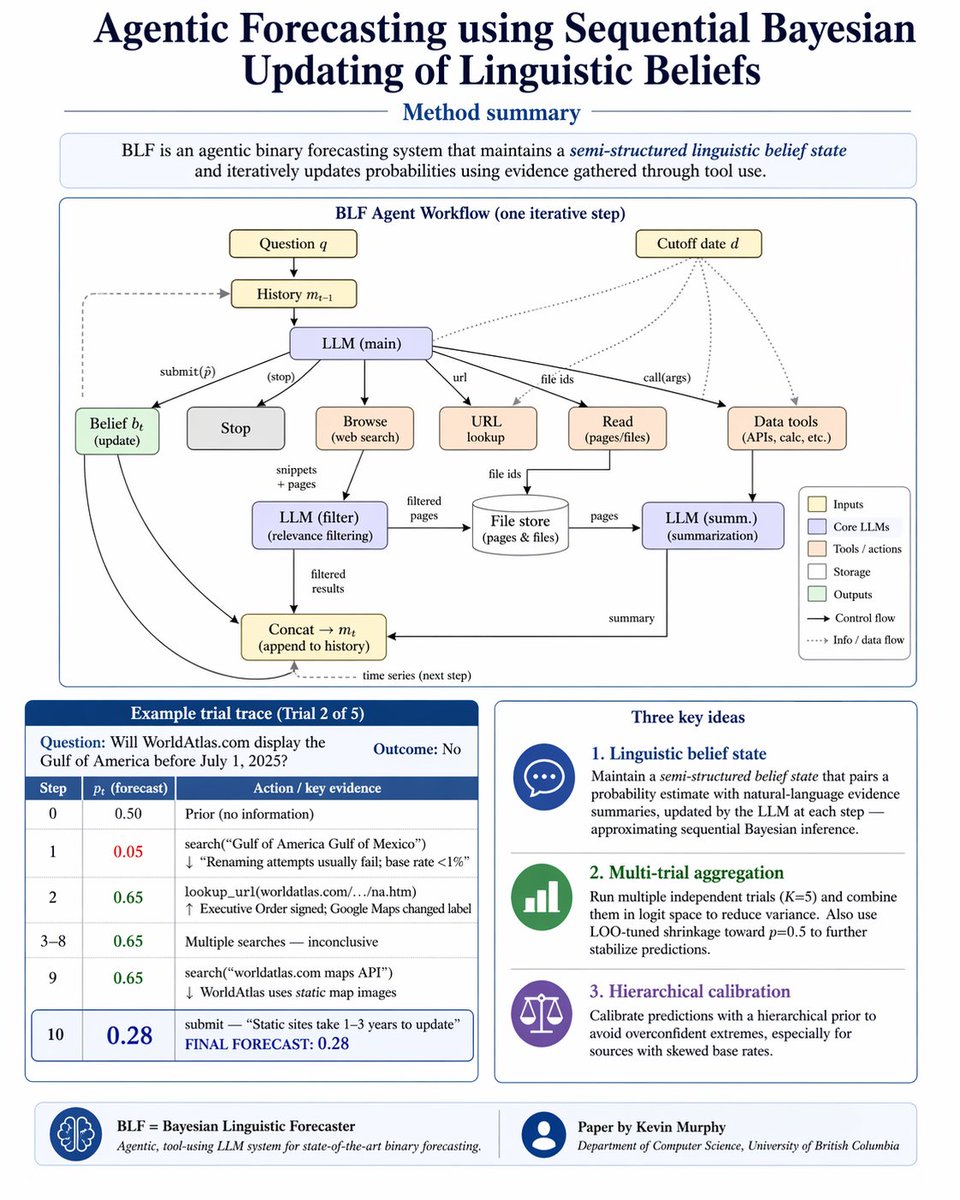

@rsalakhu @subail @chuckjhoover @asenkut @FHaskaraman Congrats! BTW you might find my recent paper of interest... arxiv.org/abs/2604.18576

EU-sovereign inference for agentic LLMs in 2026? I benchmarked the main options so you don't have to. For reference, on US/China data centers the best OSS model scores 91 and Opus-4.7 scores 92.

1/ @nebiusai: used to be a go-to. They just removed the best open-source models from their EU-operated DCs. Out.

2/ Mistral Large 2512 (@MistralAI's current flagship): timed out on 3/10 sample questions. The rest averaged 31/100. Unusable.

3/ @Scaleway: serves Qwen3.5-397B at 80/100. Steep premium over Alibaba's own hosting, but it actually works.

Winner: Scaleway's Qwen3.5-397B, but only by default.