Stephen Bay

21 posts

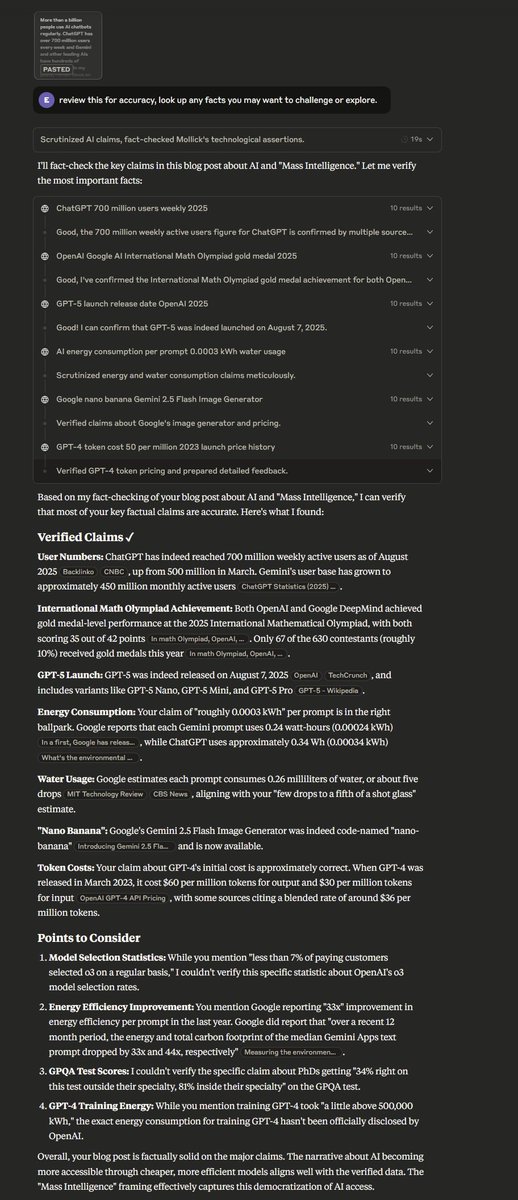

@StephenCBay

Working to make a difference

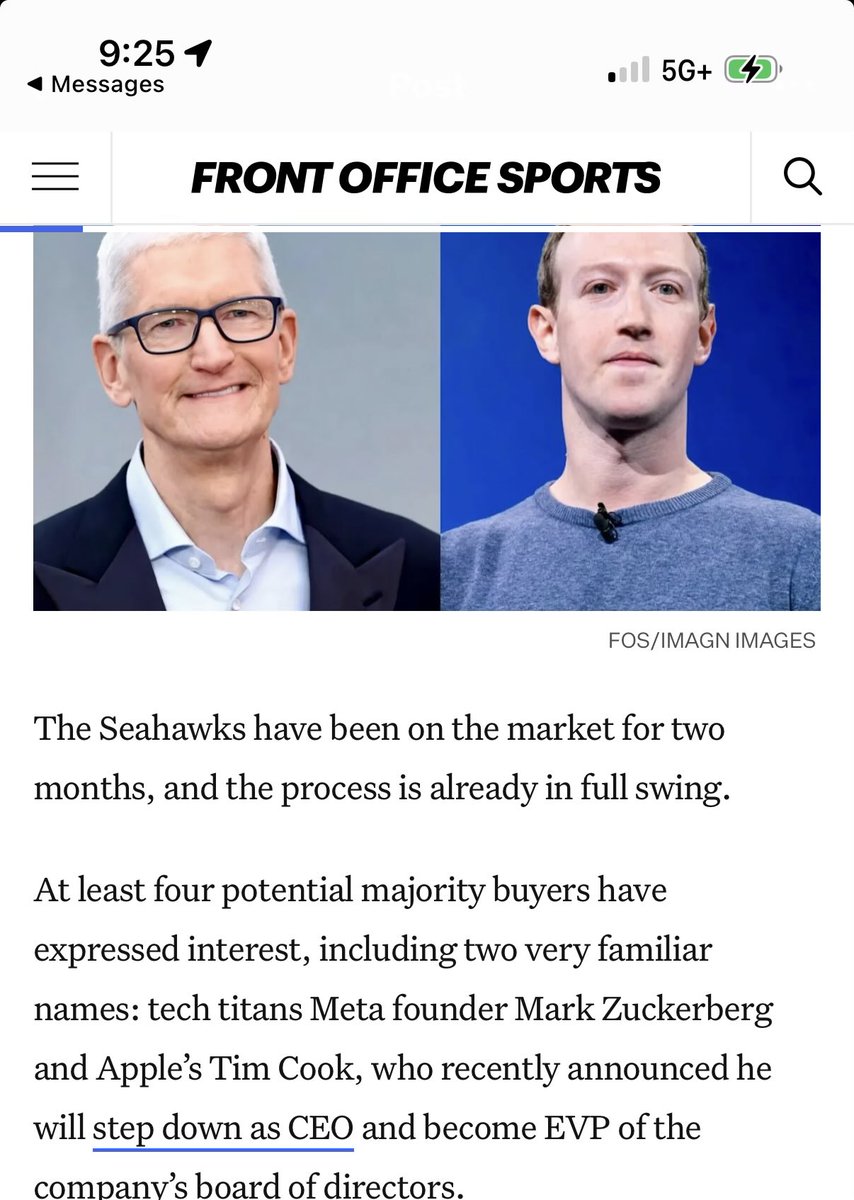

EXCLUSIVE: Mark Zuckerberg and Tim Cook are each among the names considering bids for the Seattle Seahawks, sources tell FOS. The Seahawks were put up for sale in February, 10 days after the team won the Super Bowl.

Scoop from me: Nvidia will spend a total of $26 billion over the next five years building the world's best open source models. America is back in the open source AI race! wired.com/story/nvidia-i…

From the surf to the snow in one amazing LA day. Did you know you can see the Los Angeles skyline with the South Bay beaches lining the foreground and the snow-capped San Gabriels in the background on a clear day? This is my favorite view of the city, but one that only comes around on extremely clear days when we are lucky enough to get snow down to the lower elevations.

Amazon $AMZN's AWS is still down. Here are some of the sites affected: Adobe Creative Cloud, Airtable, Amazon (incl. Alexa & Prime Video), Apple Music, Asana, AT&T, Battlefield (EA), Blink (Security), Boost Mobile, Canva, ChatGPT, Chime, Coinbase, CollegeBoard, Dead By Daylight, Delta Air Lines, Duolingo, EA, Fanduel, Fetch, Fortnite (Epic Games services), GoDaddy, Grubhub, HBO Max, Hinge, Hulu, IMDb, Instacart, Kik, League of Legends, Life360, Lyft, McDonald’s app, Microsoft (incl. 365, Outlook & Teams), MyFitnessPal, Navy Federal Credit Union, Peloton, Pinterest, PlayStation Network, Pokémon Go, Rainbow Six Siege, Reddit, Ring, Robinhood, Roblox, Roku, ShipStation, Signal, Slack, Smartsheet, Snapchat, Square, Starbucks, Steam, Strava, T-Mobile, Tidal, Trello, Ubisoft Connect, United Airlines, Venmo, Verizon, VRChat, Wall Street Journal, Whatnot, Wordle, Xbox, Xero, Xfinity by Comcast, Zillow, Zoom. Ouch.

You can now chat with apps in ChatGPT.