Jacob

POKÉMON GO PLAYERS TRAINED 30 BILLION IMAGE AI MAP
Niantic says photos and scans collected through Pokémon Go and its AR apps have produced a massive dataset of more than 30 billion real-world images. The company is now using that data to power visual navigation for delivery robots, letting them identify exact locations on city streets without relying on GPS.
Source: NewsForce

We are sending our kids to school to memorize facts that AI can retrieve in 0.3 seconds. We're grading them on essays that AI writes better than their teachers. We're preparing them for jobs that won't exist by the time they graduate. The entire education system is training humans to compete with machines at what machines do best. That's not education. That's sabotage. The schools that survive will teach thinking, not memorizing. Creating, not repeating. Discerning, not obeying. Every other school is a museum that doesn't know it yet.

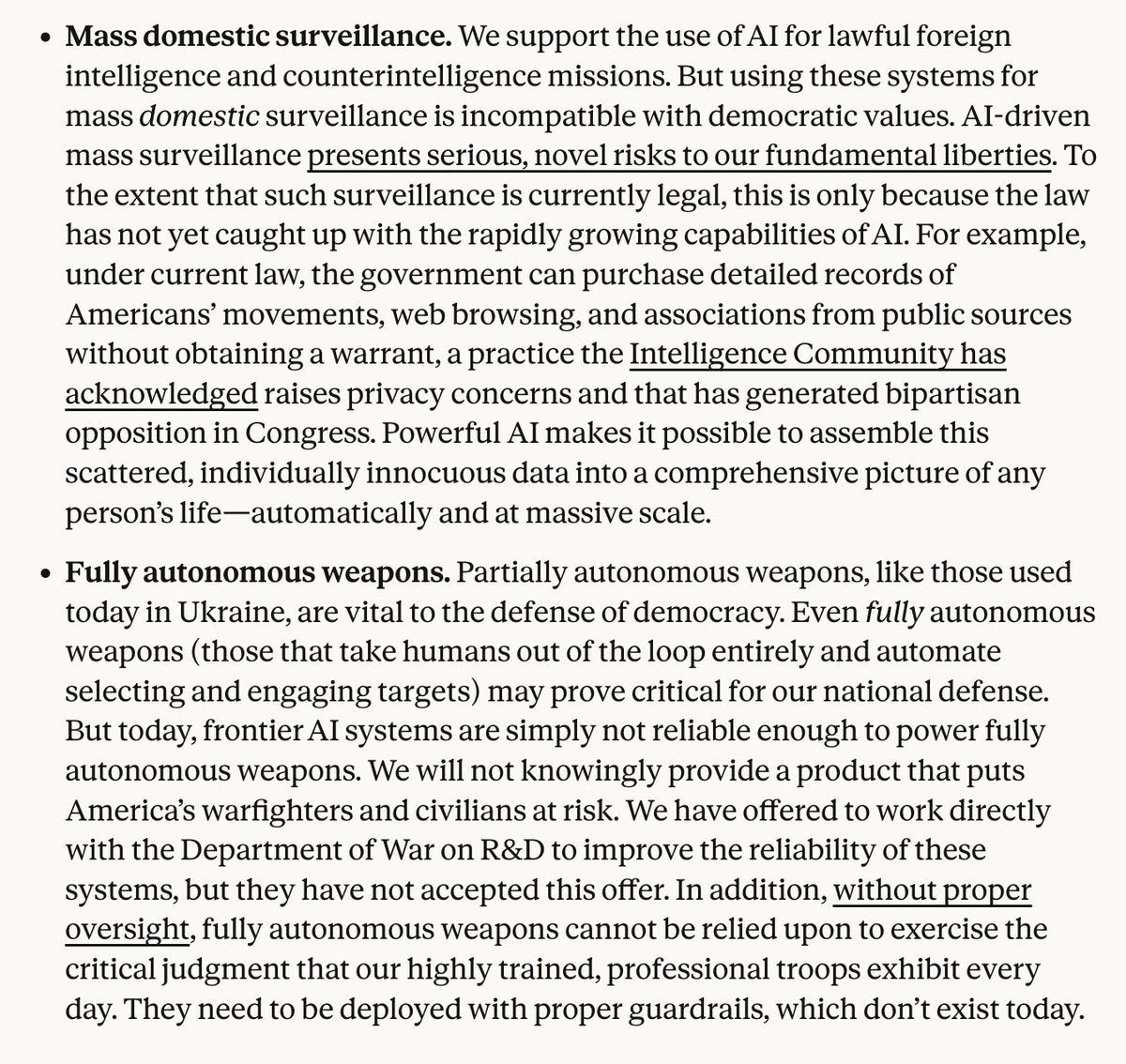

This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon. Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic.
Instead, @AnthropicAI and its CEO @DarioAmodei have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission - a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.
The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield.
Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable.
As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives.
Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered.
In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service.
America’s warfighters will never be held hostage by the ideological whims of Big Tech.
This decision is final.

The more urgent it's become, the more threatening it's become to vested interests, and the more they've turned away. The insanity of the greed-driven mind.

if you’re not ripping your ‘Ring’ camera off your house right now and dropping the whole thing into a pot of boiling water, what are you doing?