Tactics/os

#Syria: the radar recently supplied by Turkey to #Damascus International Airport is an ASELSAN "HTRS‑100". This advanced air traffic control system, capable of detecting aircraft at distances of over 150 km, has already raised concerns in Israel. jpost.com/middle-east/ar…

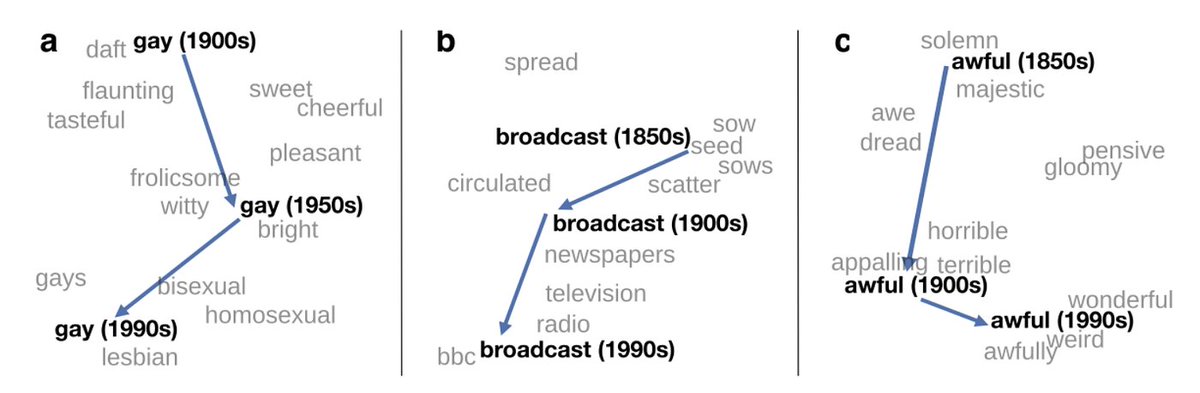

this is a pop-sciency version of a continual learning evaluation. if you're going to go the route of "pretrain on limited data and see if it can bootstrap from natural interaction", a more practical test would probably be something like: train only up to ~2014, then ask "can it teach itself Rust?"

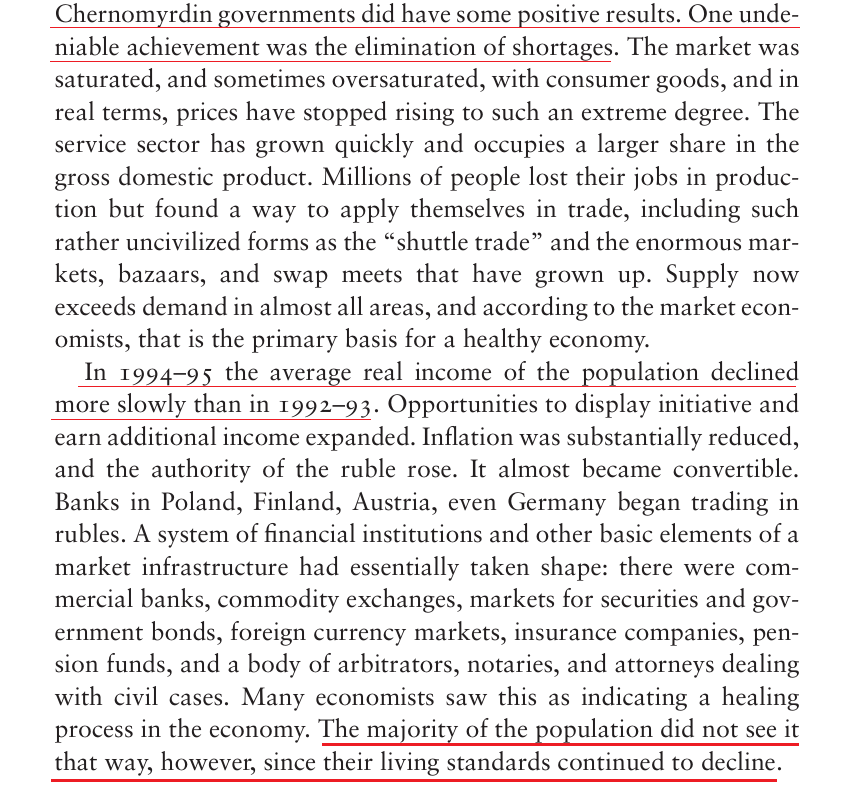

Thread w/excerpts of A Journey Through the Yeltsin Era. Far from improving the situation, the declaration of a new Russia only led to a further decade-long decline in living standards & an unremitting crime wave. Essential for understanding Russia today. x.com/haravayin_hogh…

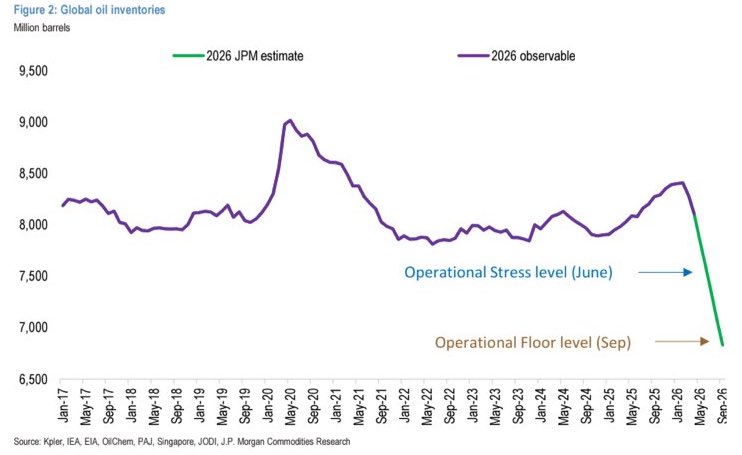

The piece says Iran can weather a shut-in because it rebounded after JCPOA in 2016. This analogy breaks down on every variable. The real precedent is 1951: production collapsed to near zero and came back only after BP and a Western consortium went back to run the industry. Today there's no consortium coming. South Pars is struck. Gas injection was already at 10% of requirement. Kharg is damaged. NIOC is $165B short on investment. Mills concedes the real erosion, declining injection, no technology access, "will play out over a longer period." That period started 20 years ago. The shut-in doesn't start the damage. It ends the pretense that it wasn't already happening.

Closed labs hide model sizes. They can't hide what their models know, and what a model knows is an indicator of how big it is. Reasoning compresses. Factual knowledge doesn't. So you can size a frontier model from black-box API calls alone, and across releases you can literally watch a single fact arrive in the parameters over time.

For three years, my friends Jiyan He and Zihan Zheng have been asking frontier LLMs the same question: "what do you know about USTC Hackergame?", a CTF contest. May 2024: GPT-4o invented fake titles. Feb 2025: Claude 3.7 Sonnet listed 19 verified 2023 challenges. By April 2026, frontier models recall specific challenges across consecutive years.

After DeepSeek-V4 dropped, I instructed my agent to spend four days autonomously turning that habit into Incompressible Knowledge Probes (IKP): 1,400 questions, 7 tiers of obscurity, 188 models, 27 vendors. Three findings:

1/ You can approximately size any black-box LLM from factual accuracy alone. Penalized accuracy is linear in log(params), R² = 0.917 on 89 open-weight models from 135M to 1.6T params. Project closed APIs onto the curve → GPT-5.5 ~9T, Claude Opus 4.7 ~4T, GPT-5.4 ~2.2T, Claude Sonnet 4.6 ~1.7T, Gemini 2.5 Pro ~1.2T (90% CI: 0.3-3x size).

2/ Citation count and h-index don't predict whether a frontier model recognizes a researcher. Two researchers with similar citation profiles get very different responses. Models memorize impact: work that shaped a field, not many incremental papers.

3/ Factual capacity doesn't compress over time. Across 96 open-weight models released over 3 years, the IKP time coefficient is statistically zero, rejecting the Densing-Law prediction of +0.0117/month at p<10⁻¹⁵. Reasoning benchmarks saturate; factual capacity keeps scaling with parameters.

Website: 01.me/research/ikp/
Paper: arxiv.org/pdf/2604.24827
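The sizing idea in finding 1/ can be illustrated with a rough sketch: fit accuracy against log10(params) on models of known size, then invert the line for a model of unknown size. Everything here is an assumption made for illustration: the function names `fit_log_linear` and `estimate_params` are hypothetical, the calibration points are made up, and this is plain least squares rather than the IKP paper's actual penalized-accuracy pipeline.

```python
import math


def fit_log_linear(params, accuracy):
    """Least-squares fit of accuracy = intercept + slope * log10(params)."""
    xs = [math.log10(p) for p in params]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(accuracy) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, accuracy))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def estimate_params(acc, slope, intercept):
    """Invert the fitted line: map an observed accuracy to a parameter count."""
    return 10 ** ((acc - intercept) / slope)


# Made-up calibration points (NOT data from the paper): open-weight models
# with known parameter counts and their measured factual accuracy.
calib_params = [1e9, 1e10, 1e11, 1e12]
calib_acc = [0.10, 0.25, 0.40, 0.55]

slope, intercept = fit_log_linear(calib_params, calib_acc)

# Hypothetical black-box model scoring 0.475 on the same probe set.
estimate = estimate_params(0.475, slope, intercept)
```

Because the fit lives in log space, a small error in measured accuracy becomes a multiplicative error in the size estimate, which is consistent with the thread quoting its interval as a factor (0.3-3x) rather than as an absolute range.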

really exciting to see an LLM trained on pre-1930 data - post-2022 is already crowded with qwen, deepseek, and kimi

Announcing Talkie: a new, open-weight historical LLM! We trained and finetuned a 13B model on a newly-curated dataset of only pre-1930 data. Try it below! with @AlecRad and @status_effects 🧵

Silicon Valley: AI is self-accelerating, agents run everything, old people are dumb. Global 2000: I spent a fortune on your AI chat 2 years ago and got zero productivity; my engineers like the coding AI thing but no one else cares; agents are scary. Never seen a gap this huge.