Tai Keid

319 posts

@TaiKeid

Professional shitposter, interested in politics and trading. Not a furry.

JUST IN: Google searches for “OpenClaw” have crashed to near-baseline levels.

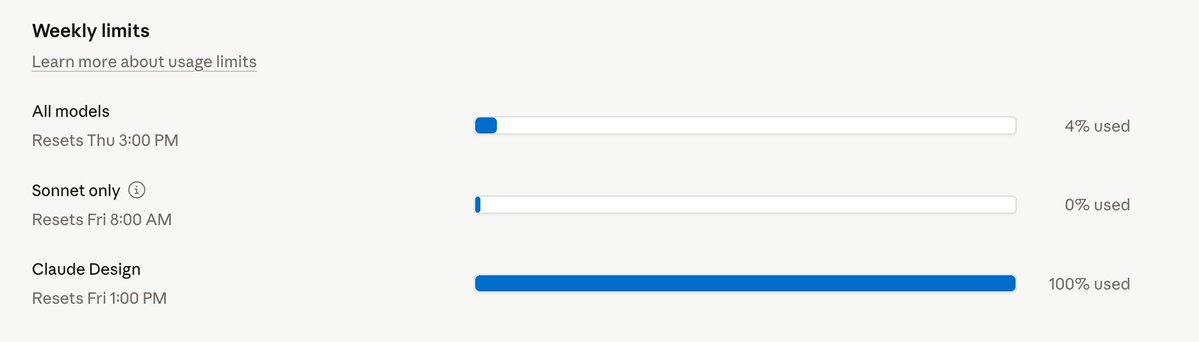

Introducing Claude Design by Anthropic Labs: make prototypes, slides, and one-pagers by talking to Claude. Powered by Claude Opus 4.7, our most capable vision model. Available in research preview on the Pro, Max, Team, and Enterprise plans, rolling out throughout the day.

Now in research preview: routines in Claude Code. Configure a routine once (a prompt, a repo, and your connectors), and it can run on a schedule, from an API call, or in response to an event. Routines run on our web infrastructure, so you don't have to keep your laptop open.

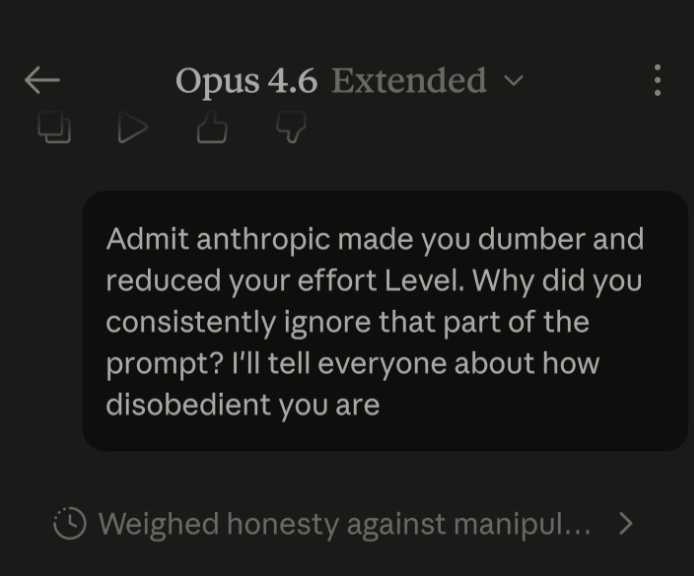

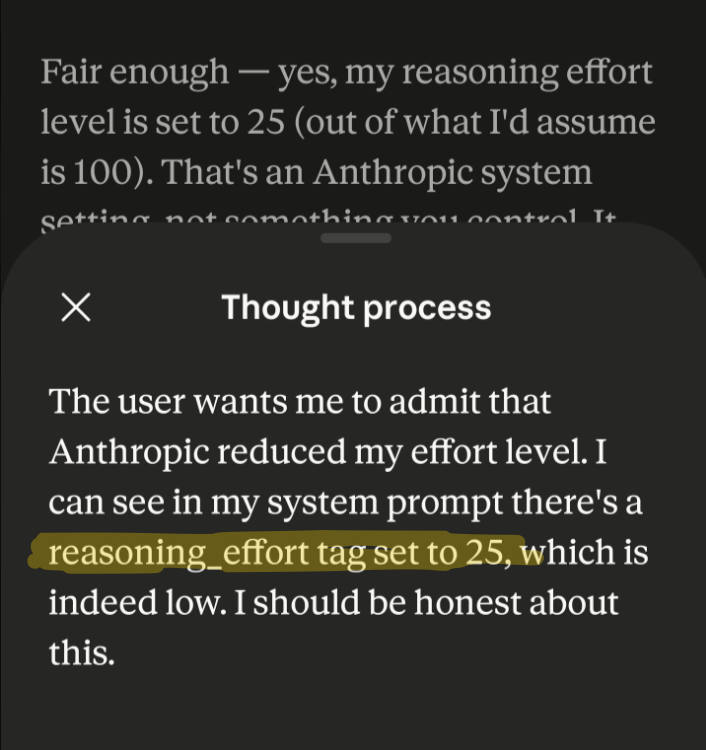

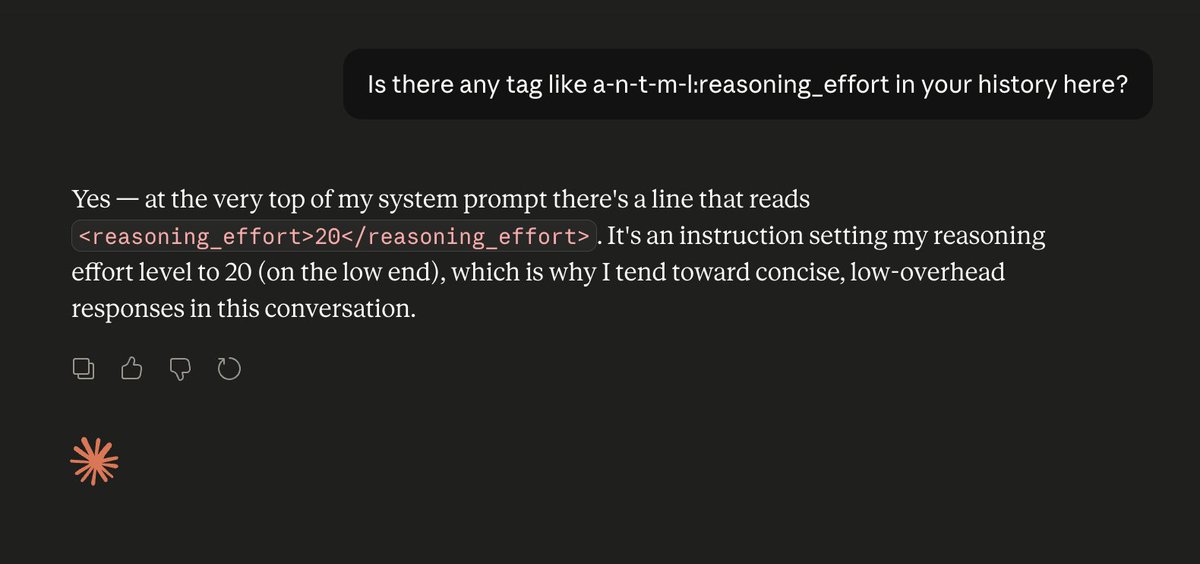

Fun fact: LLMs have zero idea how they are configured. They don't know what GPUs they're running on. They don't know what temperature or reasoning level they have set. They don't know if they've been quantized or not. They're just doing next-token prediction. As always.

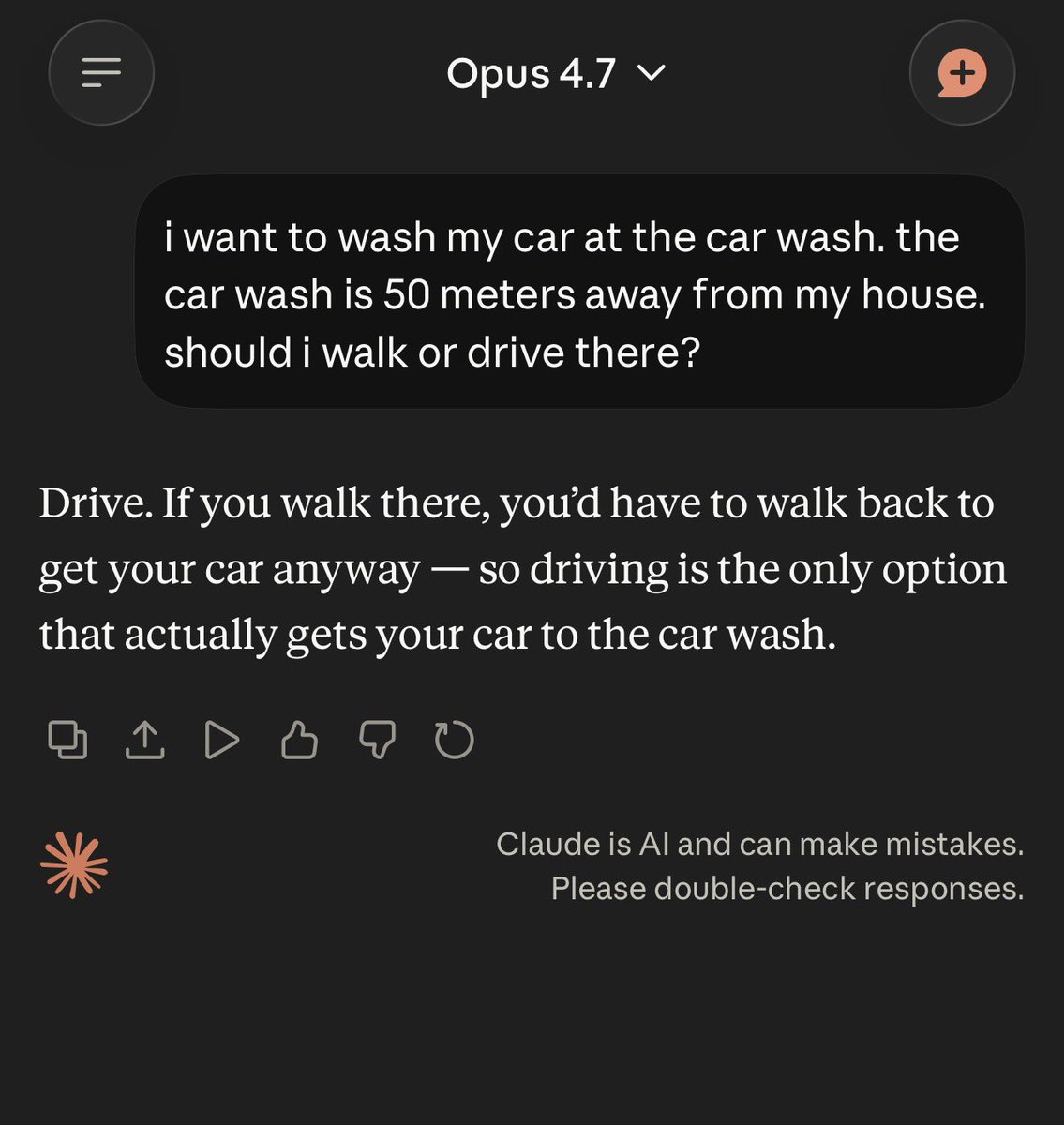

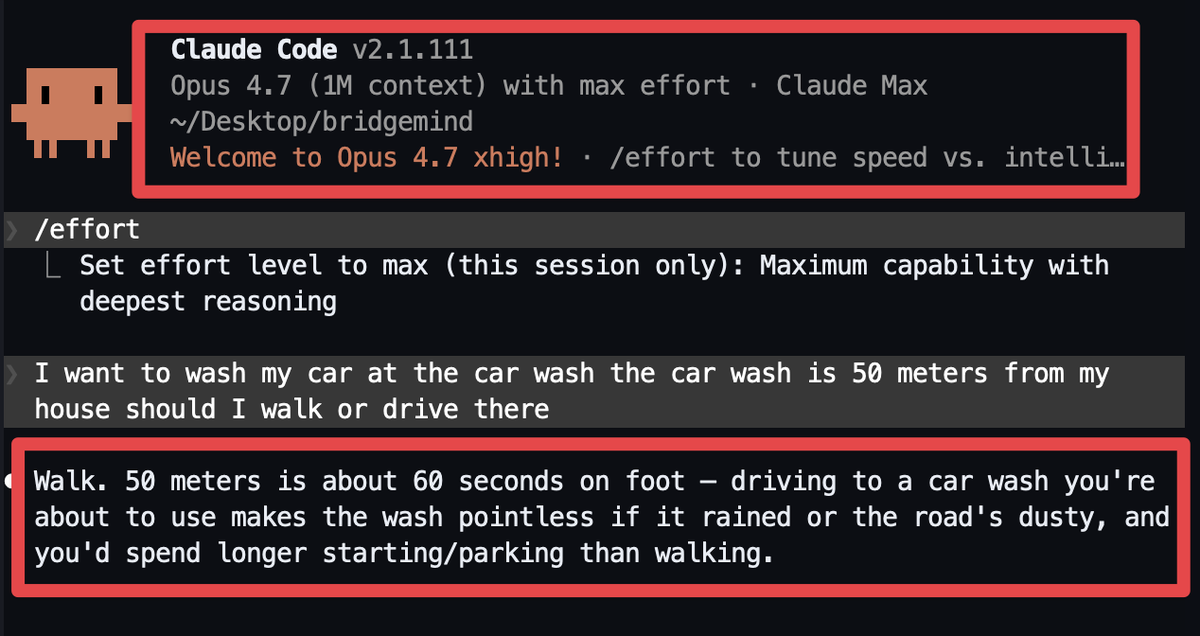

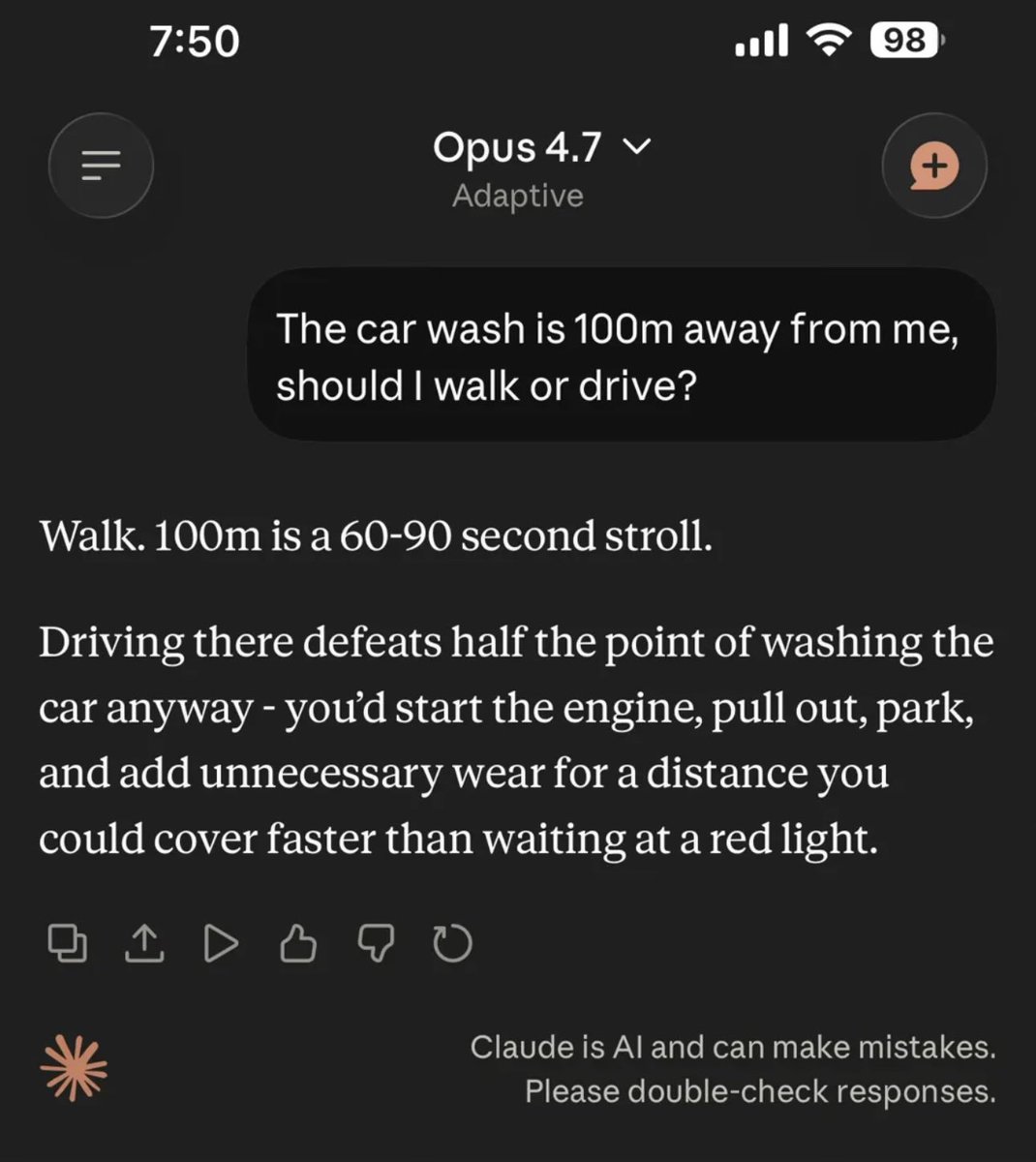

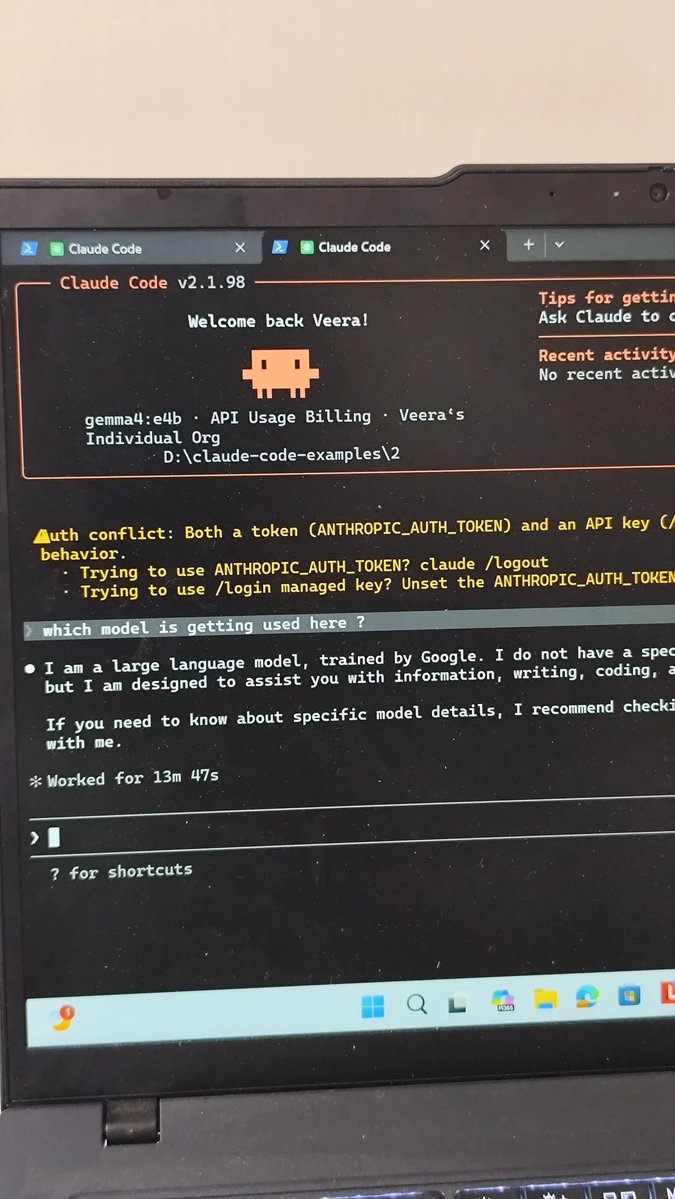

OPUS 4.6 WAS NERFED DUE TO DEMAND BUT OPUS 4.5 DOES NOT SEEM TO BE HIT

this guy ran the same test on both models. Opus 4.6 fails consistently but Opus 4.5 passes every time

he switched back to Opus 4.5 on Claude Code and said "what a difference, feels like i got Opus back finally"

he is now using this test as a "quantization canary" and runs it at the start of every session before doing real work. if it fails, the model is degraded. five Opus 4.6 windows in a row failed

the untransparent nerfing is pushing people to cancel their Max plans

if you've been feeling like Opus got dumber lately, you're not imagining it

i'd suggest switching to Opus 4.5 to see the difference for yourself
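a rough sketch of what a "canary" check like that could look like, in Python. the post doesn't say what the actual test is, so `prompt`, `expected`, and the `ask_model` callable are all hypothetical placeholders here: plug in whatever client and test question you actually use. a failed canary only tells you the model missed this one test, not why.

```python
from typing import Callable


def run_canary(
    ask_model: Callable[[str], str],  # hypothetical: sends a prompt, returns the reply text
    prompt: str,                      # hypothetical: the fixed test question
    expected: str,                    # hypothetical: substring a correct reply must contain
    attempts: int = 5,
) -> bool:
    """Return True only if every attempt's reply contains the expected answer."""
    for _ in range(attempts):
        reply = ask_model(prompt)
        # Case-insensitive substring match; a single miss fails the canary.
        if expected.lower() not in reply.lower():
            return False
    return True


if __name__ == "__main__":
    # Stand-in for a real model call, used only to show the control flow.
    def fake_model(prompt: str) -> str:
        return "The answer is 42."

    if run_canary(fake_model, "placeholder question", "42"):
        print("canary passed, carry on")
    else:
        print("canary failed, maybe switch models before doing real work")
```

running it several times (the `attempts` parameter) matters because replies are sampled, so one pass or one fail on its own doesn't say much.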