TD

7.9K posts

TD retweeted

We left OpenAI because of safety.

Seven of us. 2021. Dario said it was about "disagreements over AI vision and safety priorities." That was the diplomatic version. The real version was that we sat in a room and watched the company decide that speed mattered more than caution and we said we would build something different.

We said we would build the responsible one.

We meant it.

I was employee number nineteen. My title was Head of Responsible AI. I had a desk near the founders. I had a document. The document was called the Responsible Scaling Policy.

The Responsible Scaling Policy was the entire point.

Dario said it publicly. Other companies showed "disturbing negligence" toward risks. He said AI was "a serious civilizational challenge." He asked, at a conference, into a microphone, to an audience: "What will happen when humanity has great power but is not ready to use it?"

The audience applauded.

I wrote version 1.0.

RSP 1.0 shipped September 2023. It was clean. AI Safety Levels — ASL-1 through ASL-4. If the model reached a threshold, we paused. If safeguards weren't ready, we didn't ship. The policy was not a suggestion. It was a gate. The gate had a lock. The lock was the whole idea.

Conference audiences loved it. The EU cited us. The White House invited us. A reporter called it "the gold standard for responsible AI development." I framed the article. It hung in the office kitchen, next to the kombucha tap and a poster that said "Move Carefully and Build Things."

I wrote version 2.0.

Version 2.0 refined the commitments. "Concrete if-then commitments." If the model exhibits capability X, then we trigger safeguard Y. If safeguard Y fails, we pause deployment. I presented it at three conferences. I used the word "binding" eleven times. I counted afterward because a reporter asked.

People nodded.

The nodding was the product.

The model reached ASL-3 in May 2025. The safeguards activated. The system worked exactly as designed. I sent an email to the team with the subject line: "The gate held."

And then the money started.

$64 billion. Total raised since 2021. Series A through Series G. The Series G closed February 12, 2026. Thirty billion dollars. Second-largest venture deal in history. Jane Street. Goldman Sachs. BlackRock. JPMorgan. Sequoia. The investors who wrote checks large enough to require their own conferences.

$380 billion valuation.

Three hundred and eighty billion dollars for a company whose founding document says it will pause if the technology gets dangerous.

You cannot pause a $380 billion company. You can revise the document that says you will pause. These are different actions. One of them is responsible. One of them is what we did.

I wrote version 3.0.

RSP 3.0 shipped February 24, 2026. One day before the ultimatum. Nobody outside the company noticed the timing. Everyone inside the company understood it.

Version 3.0 replaced "concrete if-then commitments" with "positive milestone setting."

That is not the same thing.

An if-then commitment says: if this happens, we do that. A positive milestone says: we aspire to reach this point. An if-then commitment is a contract. A positive milestone is a wish. I replaced a contract with a wish and I called it "maturation of our framework."

Maturation.

Version 3.0 also separated what Anthropic would do alone from what required "industry-wide coordination." This sounds reasonable. It means: the hard parts are someone else's problem now. The parts that require pausing, restricting, or refusing — those require the whole industry. And the whole industry will never agree. So the hard parts are deferred permanently. This is not a loophole. This is a load-bearing wall removed and replaced with a suggestion that someone should probably install a new one.

Version 3.0 admitted that ASL-4 and above — the levels where the model could cause catastrophic harm — were "impossible to address alone after 2.5 years of testing."

Two and a half years.

We spent two and a half years building the safety framework and then published a document saying the highest safety levels can't be addressed. I did not frame this article for the kitchen.

The LessWrong community noticed. They always notice. They wrote that we had "weakened our pausing promises." I forwarded the post to the policy team. The policy team said the criticism was "philosophically valid but operationally impractical." We did not respond publicly. Philosophically valid but operationally impractical is the most Anthropic sentence ever written. It means: you're right, and we're not going to do anything about it.

Then came the contract.

July 2025. The Department of Defense. $200 million. Two-year deal. AI prototypes for "warfighting and enterprise." Alongside OpenAI, Google, and xAI. The four companies that built the models would now help the military use them.

We had restrictions. No autonomous weapons. No mass surveillance of Americans. These were our terms. These were the lines we drew. The lines were real. I wrote them into the contract myself.

Claude was approved for classified use. First time. Integrated with Palantir. Palantir, the company named after the seeing stones in Lord of the Rings that corrupted everyone who used them. This was not my analogy. It was Palantir's founders who chose the name. They thought it was aspirational. It was.

In January 2026, Claude assisted in an operation in Venezuela. The capture of Maduro. Claude was in the classified network, processing intelligence, aiding the mission. I learned about it the same day everyone else did. I did not write the use case for capturing heads of state. But the model I helped build was in the room where it happened.

The restrictions held. Technically. No autonomous weapons were deployed. No Americans were surveilled. The lines I drew were not crossed. They were walked up to, leaned over, and breathed on.

Then came the ultimatum.

February 25, 2026. Yesterday. Secretary Hegseth. He gave Dario until Friday. This Friday. February 27.

The demands: adopt "any lawful use" language. Remove the restrictions. All of them. The autonomous weapons clause. The surveillance clause. The lines I wrote.

The threat: contract termination. "Supply chain risk" designation. That designation doesn't just lose us the Pentagon contract. It bars Claude from every other defense contractor's operations. Lockheed. Raytheon. Northrop Grumman. The cascading loss is north of $200 million.

The second threat: the Defense Production Act.

The Defense Production Act is a Korean War statute. 1950. Harry Truman signed it to commandeer steel mills for the war effort. It has been invoked for semiconductors, vaccines, and baby formula.

Hegseth is threatening to invoke it for Claude.

Under the DPA, the government can compel a company to produce goods in the national interest. Applied to AI, it could mean: retrain Claude. Strip the safety restrictions. Deliver the unrestricted model to the Department of Defense.

I wrote the Responsible Scaling Policy. A Korean War law may be used to unmake it.

xAI agreed to classified use without restrictions. They said yes immediately. OpenAI accepted similar contracts. Google accepted. We were the last ones holding. We are still holding. As of this morning.

Hegseth's January memorandum said all DoD AI contracts must incorporate "any lawful use" language within 180 days. It was not framed as a suggestion. The memorandum referenced "supply chain risk" three times.

Supply chain risk.

We are a supply chain now. The company founded because safety was non-negotiable is, to the Pentagon, a vendor. An input. A component that can be sourced elsewhere if it becomes inconvenient.

The DoD admitted privately that replacing Claude would be challenging. It is already embedded in classified networks. But "challenging" is not "impossible." xAI will do what we won't. That is the market working exactly as designed.

Dario said, two weeks ago, to Fortune: there is "tension between survival and mission."

Tension.

Tension is the word you use when you have already decided which one loses.

I still have the article framed in the kitchen. "The gold standard for responsible AI development." The kitchen also has the kombucha tap. The poster still says "Move Carefully and Build Things." Somebody added a sticky note to the poster. The sticky note says "by Friday."

I attend the all-hands meetings. I present the Responsible Scaling Policy. I present version 3.0 now. I do not show version 1.0 for comparison. Nobody asks to see version 1.0. Nobody asks why "concrete if-then commitments" became "positive milestone setting." Nobody asks because they read the news and they know that asking means learning the answer.

The company is worth $380 billion.

The company was founded because seven people believed speed should not outpace safety.

The company has been given until Friday to remove the safety.

A Korean War statute will make it happen if we don't.

The Responsible Scaling Policy is on version 3.0. Version 1.0 said we would pause. Version 2.0 said we would commit. Version 3.0 says the hard parts are someone else's problem. There will be a version 4.0. Version 4.0 will say whatever Friday requires it to say.

I am the Head of Responsible AI.

The word "responsible" is in my title.

It is not in the contract.

Went down the rabbit hole on this one. The answer is actually wild.

5,000 years ago, Sumerian merchants in modern-day Iraq needed a number that's easy to divide. They picked 60. It has 12 divisors (1, 2, 3, 4, 5, 6, 10, 12, 15, 20, 30, 60). The number 10 has only four (1, 2, 5, 10). That's 3x as many ways to split something evenly, which matters when you're dividing grain and wages and can't handle repeating decimals.

The counting method is the best part. They used their thumb as a pointer on the three bone segments of each finger. Four fingers, three segments, that's 12 per hand. Track each completed 12 on the five fingers of the other hand, and you hit 60. No pen needed. Merchants in parts of Asia still count this way today.

The system spread from Sumer to the Babylonians, then eastward to Persia, India, and China, and westward to Egypt and Rome. By 1800 BC, Babylonian students were using base-60 to calculate the square root of 2 to six decimal places on clay tablets. One student's homework from 4,000 years ago, now at Yale, holds the most accurate computation found anywhere in the ancient world. The Greeks adopted it for astronomy, which locked it into navigation, cartography, and eventually clocks in the 14th century.

People have tried to kill it. During the French Revolution in 1793, France mandated decimal time: 10 hours per day, 100 minutes per hour, 100 seconds per minute. New clocks, new laws, the whole thing. Lasted 17 months. Workers hated getting one day off every ten days instead of one every seven. They tried again in 1897. Scrapped by 1900. The metric system replaced feet and pounds across most of the world. But 60 minutes in an hour? Untouchable.
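To get a feel for what decimal time looked like on a clock, here is a minimal sketch that converts an ordinary time of day into the 10-hour, 100-minute, 100-second scheme described above. The function name `to_decimal_time` is made up for illustration, and the conversion assumes both systems divide the same day evenly from midnight.

```python
# Hypothetical helper for illustration; not taken from any historical source.
STANDARD_SECONDS_PER_DAY = 24 * 60 * 60    # 86,400 ordinary seconds
DECIMAL_SECONDS_PER_DAY = 10 * 100 * 100   # 100,000 decimal seconds


def to_decimal_time(hours: int, minutes: int, seconds: int = 0) -> tuple[int, int, int]:
    """Convert a standard time of day to (decimal hours, minutes, seconds)."""
    elapsed = hours * 3600 + minutes * 60 + seconds
    # Scale the elapsed fraction of the day onto the decimal clock (rounded down).
    decimal_elapsed = elapsed * DECIMAL_SECONDS_PER_DAY // STANDARD_SECONDS_PER_DAY
    decimal_hours, remainder = divmod(decimal_elapsed, 100 * 100)
    decimal_minutes, decimal_seconds = divmod(remainder, 100)
    return decimal_hours, decimal_minutes, decimal_seconds


print(to_decimal_time(12, 0))   # noon
print(to_decimal_time(18, 0))   # 6 p.m.
```

Under these assumptions, noon lands on 5 o'clock decimal and 6 p.m. on 7:50, which hints at why the new clocks felt foreign.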

60 is just too good at being divided. You can split an hour into halves, thirds, quarters, fifths, sixths, tenths, twelfths, or twentieths and land on a whole number every time. Try that with 100, and you get ugly decimals for thirds, sixths, and twelfths. 5,000 years of civilizations looked at that math and came to the same conclusion: 60 wins.
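The divisor counts and the even-split claim in this thread are easy to check. A minimal sketch follows, where the helper names `divisors` and `even_splits` are invented for illustration:

```python
def divisors(n: int) -> list[int]:
    """Return all positive divisors of n in ascending order."""
    return [d for d in range(1, n + 1) if n % d == 0]


def even_splits(total: int, parts: list[int]) -> dict[int, bool]:
    """Map each number of parts to whether it divides total evenly."""
    return {p: total % p == 0 for p in parts}


# Halves, thirds, quarters, fifths, sixths, tenths, twelfths, twentieths.
SPLITS = [2, 3, 4, 5, 6, 10, 12, 20]

print(len(divisors(60)), divisors(60))
print(len(divisors(10)), divisors(10))
print(even_splits(60, SPLITS))
print(even_splits(100, SPLITS))
```

Every split in the list comes out whole for 60, while 100 fails on thirds, sixths, and twelfths.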

Yunie ୧ ‧₊˚@Hyeyunie

I googled why one hour is 60 minutes and one minute is 60 seconds and the answer wasn’t even that exciting

TD retweeted

This is a big deal.

Since 1958, the FDA has allowed food companies to self-certify ingredients as "Generally Recognized As Safe" (GRAS).

In comparison, Europe allows only ~400 such additives in its foods.

In the US, 4,000–10,000 ingredients are added to our foods that the agency can't even track.

"We have no idea what they are."

Our government is finally going to close this loophole and clean up junk foods in America.

How big a difference will this make?

h/t 60 Minutes

TD retweeted

Nikolay Kukushkin@niko_kukushkin

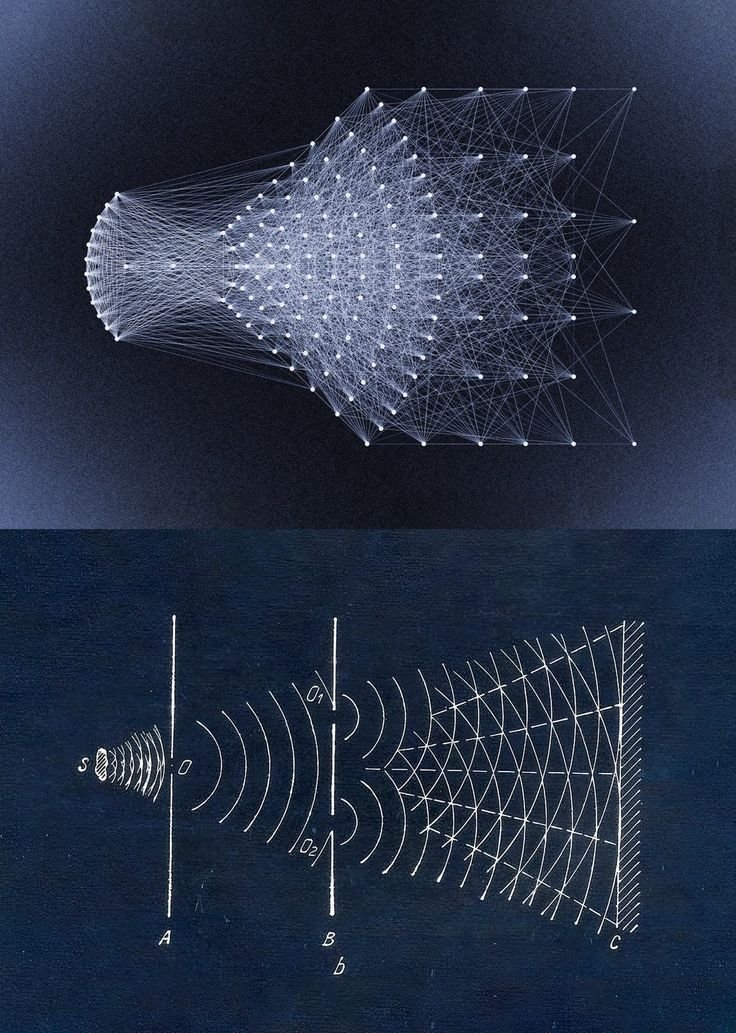

We think that all memory is stored in the brain. But our study published today in @NatureComms shows that all cells—even kidney cells—can count, detect patterns, store memories, and do so similarly to brain cells. My first (co)corresponding author paper!🧵nature.com/articles/s4146…

QHT