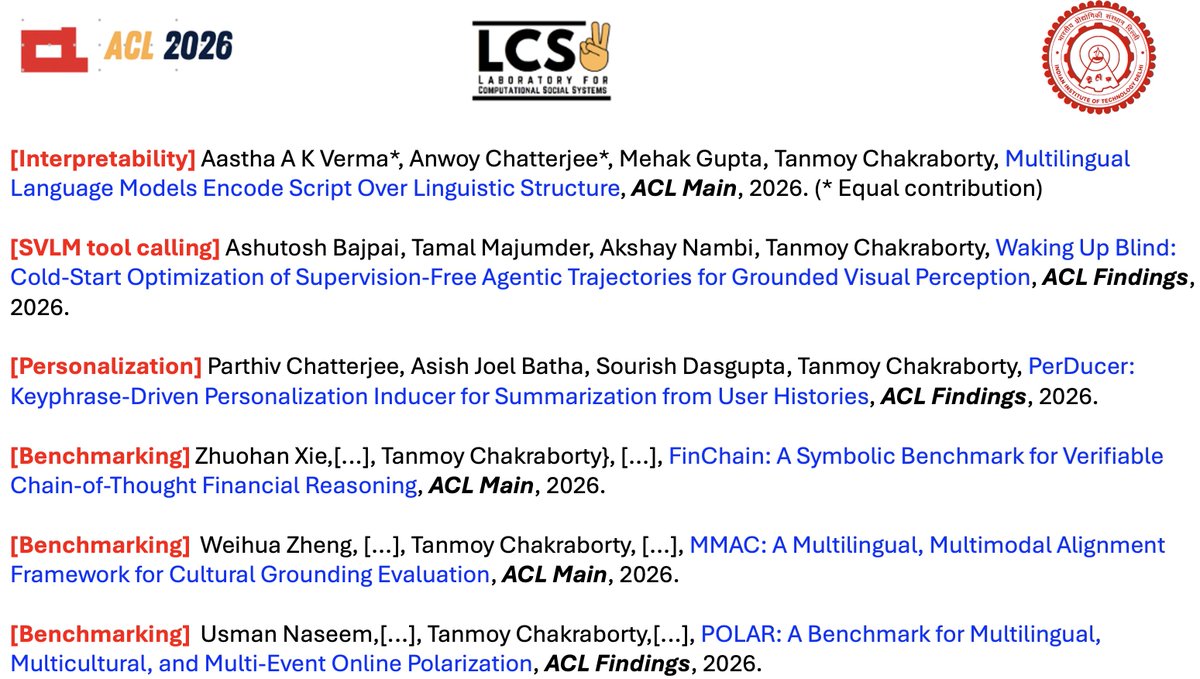

Tanmoy Chakraborty


@Tanmoy_Chak

Chair Prof in AI, Associate Prof @iitdelhi; ACM Distinguished Speaker; Lab @lcs2lab; Previously @IIITDelhi @UofMaryland @iitkgp; #NLP #LLMs

Excited to share that our work, #MonteCLoRA, has officially been merged into the #HuggingFace PEFT library! 🥳 github.com/huggingface/pe… Build #peft from source to use it right away! 🚀 📜 Paper: arxiv.org/abs/2411.04358 🤗 Docs: huggingface.co/docs/peft/main…

Happy to announce that our paper has been accepted to #ICLR2026! 🎉 📜 Beyond Markovian Drifts: Action-Biased Geometric Walks with Memory for Personalized Summarization 👥 Parthiv Chatterjee, Asish Batha, Tashvi Patel, @sourish_rygbee, @Tanmoy_Chak Congratulations to all authors!

🚨 Submissions are now open for the Conference for AI Scientists (CAISc) 2026, co-organised by Lossfunk and @bitspilaniindia. Submit to probe what happens when AI systems drive scientific discovery. Submissions are open until May 15! Here is everything you need to know 🧵

🚀 Introducing 𝐆𝐔𝐈𝐃𝐄-𝐋𝐋𝐌: A reporting checklist for using LLMs in behavioral & social science ✅ GUIDE-LLM is a reporting checklist designed by 80+ experts to improve the transparency, reproducibility & ethical accountability of LLM-based research 📄 llm-checklist.com