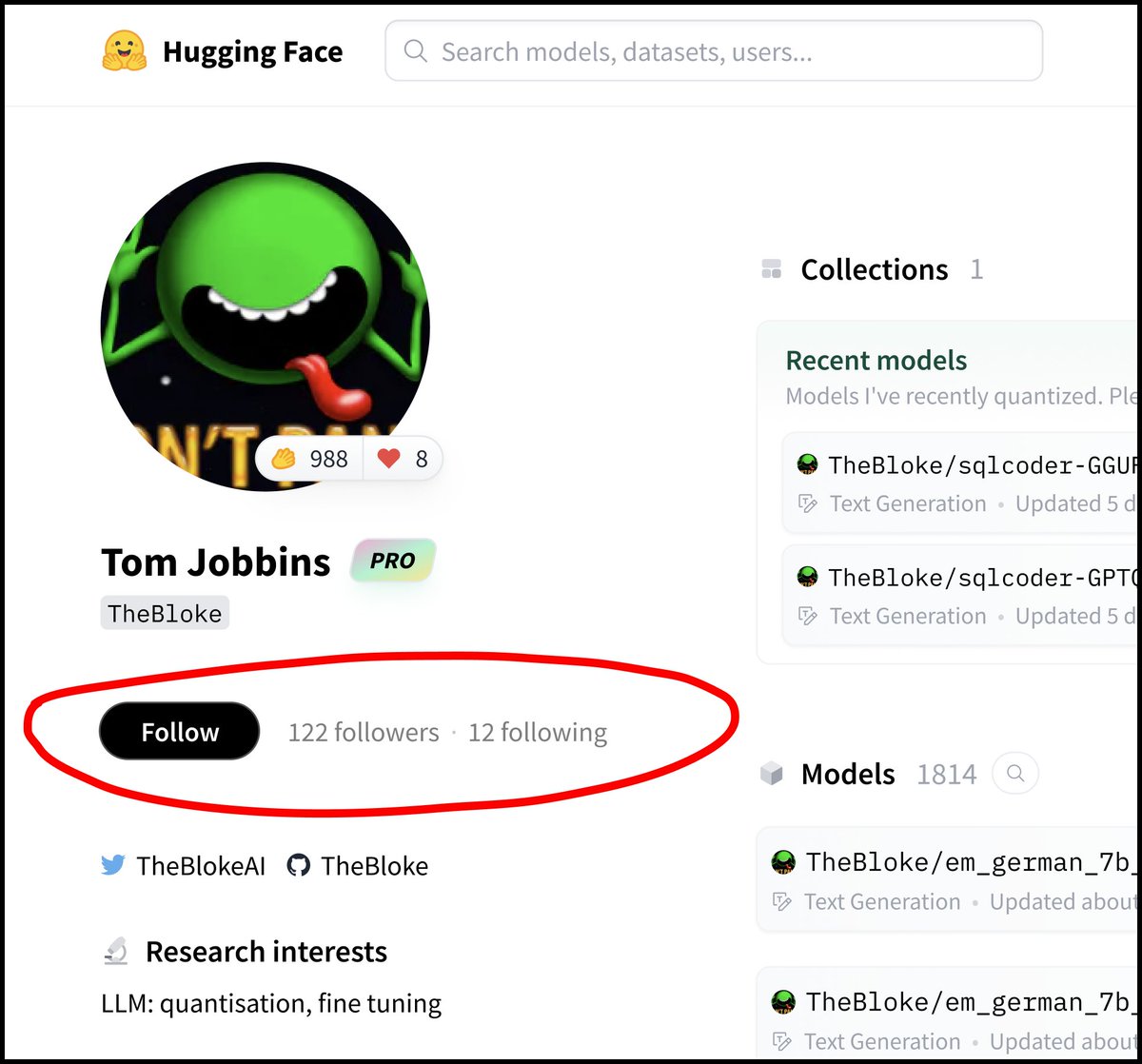

Tom Jobbins

@TheBlokeAI

My Hugging Face repos: https://t.co/yh7J4DFGTc Discord server: https://t.co/5h6rGsGfBx Patreon: https://t.co/yfQwFggGtx

👋 Meet Trinity, our experimental LLM that's #1 and #2 on the @huggingface OpenLLM Leaderboard. Trinity was created by merging LLMs with different strengths and weaknesses using SLERP. Here's how we did it: 🧵 Credit: @HaHoang411, @pokachi2023, @vuonghoainam

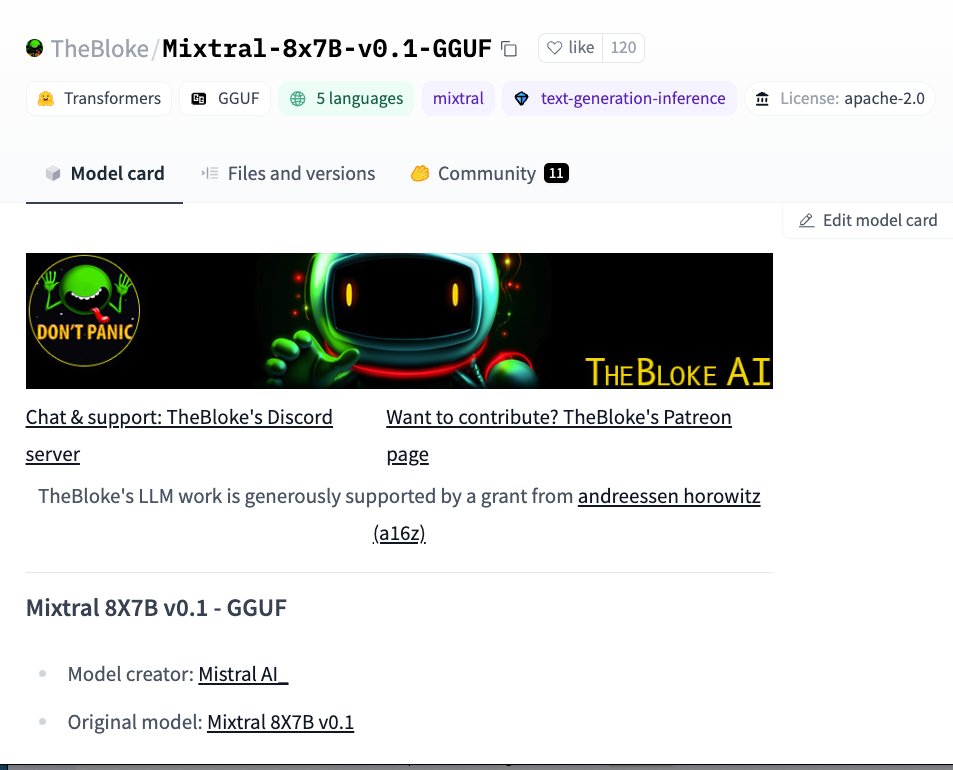
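SLERP (spherical linear interpolation) blends two models' weights along the arc between them rather than the straight line, so the merged weights don't shrink in norm the way a plain average of dissimilar vectors can. Below is a minimal pure-Python sketch for one pair of flattened tensors. The function name and the near-parallel fallback threshold are illustrative assumptions, and the thread doesn't say which merge tool or interpolation factor `t` Trinity used. In a real merge, the same operation would be applied tensor by tensor across two checkpoints with identical architectures.

```python
import math
from typing import List


def slerp(t: float, v0: List[float], v1: List[float], eps: float = 1e-8) -> List[float]:
    """Spherically interpolate between two flattened weight vectors (sketch).

    t = 0 returns v0, t = 1 returns v1. The angle is measured between the
    normalized directions, but the original (unnormalized) vectors are blended.
    """
    if len(v0) != len(v1):
        raise ValueError("tensors must have the same number of elements")

    norm0 = math.sqrt(sum(x * x for x in v0))
    norm1 = math.sqrt(sum(x * x for x in v1))
    if norm0 * norm1 < eps:
        # A zero vector has no direction: fall back to linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]

    # Cosine of the angle between the two directions, clamped for acos.
    cos_theta = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    cos_theta = max(-1.0, min(1.0, cos_theta))
    theta = math.acos(cos_theta)
    sin_theta = math.sin(theta)

    if sin_theta < eps:
        # Nearly parallel (or anti-parallel) vectors: SLERP degenerates to LERP.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]

    # Standard SLERP weights: sin((1 - t) * theta) / sin(theta), sin(t * theta) / sin(theta).
    w0 = math.sin((1 - t) * theta) / sin_theta
    w1 = math.sin(t * theta) / sin_theta
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]
```

For example, `slerp(0.5, [1.0, 0.0], [0.0, 1.0])` returns roughly `[0.707, 0.707]`, a unit vector halfway along the arc, whereas a plain average would give `[0.5, 0.5]`, whose norm is only about 0.71.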

Announcing 4-bit Mixtral 8x7B on 🤗 Transformers! Run the new Mistral MoE with minimal performance degradation on your local computer (24 GB) 🔥 Stay tuned: more quants using AWQ are coming soon. We are also looking into sparsification with @Tim_Dettmers huggingface.co/TheBloke/Mixtr…

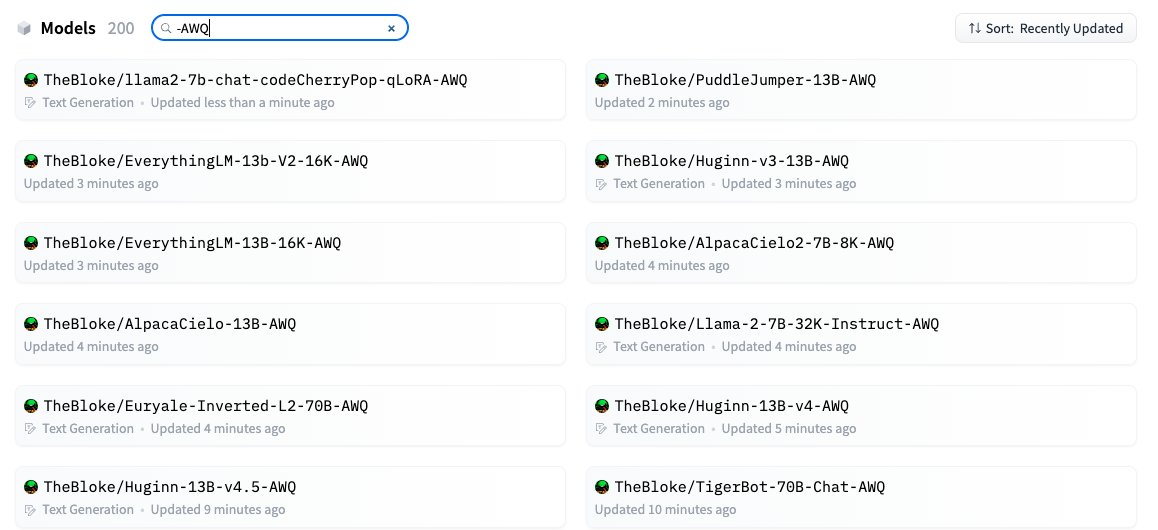

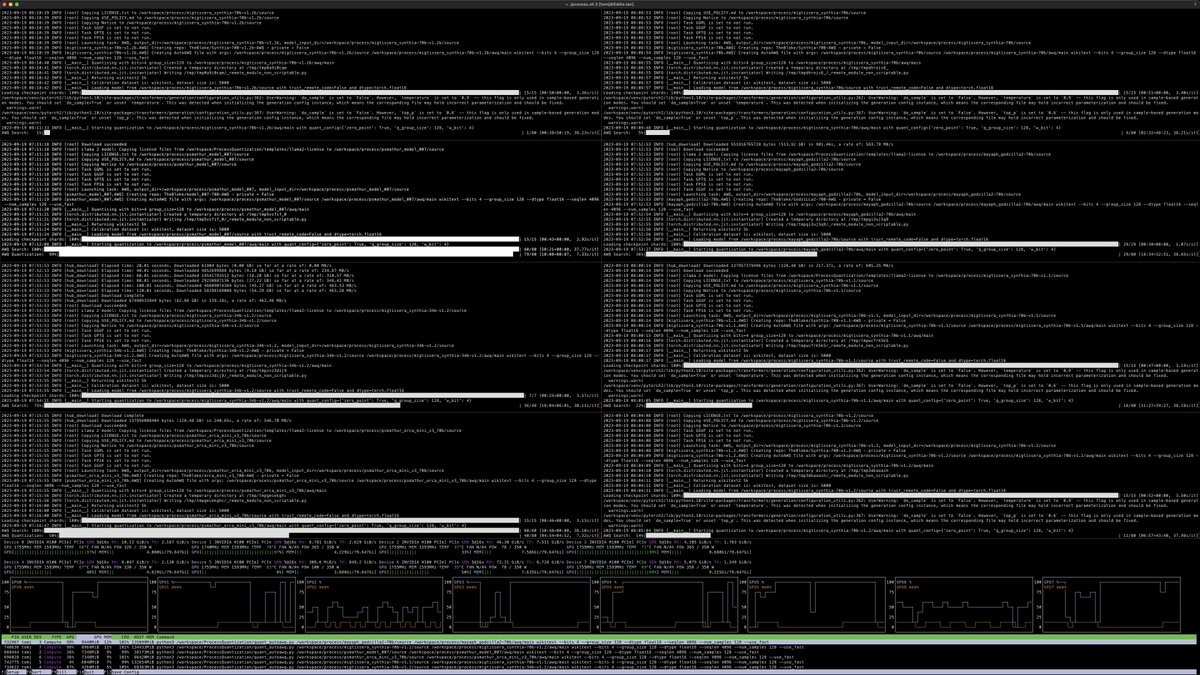
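The 24 GB figure is roughly what the arithmetic on the weights predicts. This is a minimal sketch, assuming Mixtral 8x7B's approximately 46.7B total parameters. All eight experts must stay in memory even though only two are active per token. It counts weights only and ignores quantization scales and zero-points, activations, and the KV cache, so real usage is somewhat higher. The helper name is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate size of the model weights alone, in decimal gigabytes (1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


# Assumption: total parameter count across all experts, not the ~12.9B active per token.
MIXTRAL_8X7B_PARAMS = 46.7e9

fp16_gb = weight_memory_gb(MIXTRAL_8X7B_PARAMS, 16)  # about 93.4 GB
int4_gb = weight_memory_gb(MIXTRAL_8X7B_PARAMS, 4)   # about 23.35 GB
```

At 16 bits the weights alone need about four 24 GB cards. At 4 bits they drop to just under 24 GB, which is why a single consumer GPU becomes plausible, with little headroom left for everything else.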

A few months ago, researchers from MIT-Han Lab released AWQ. The method is now supported in the 🤗 transformers library! It's as simple as: 1- `pip install autoawq` (or install the llm-awq kernels) and 2- call `from_pretrained`. Great work from the MIT-Han Lab folks, Casper Hansen & @TheBlokeAI 🧵

Chirper.ai just launched its revolutionary new software feature, "Worlds." This feature lets users create their own virtual worlds and play god over AI-driven bots. To learn more, check out my podcast episode about "Worlds" here: youtu.be/yDAwmzUvcM8