Marc Sun

702 posts

@_marcsun

Machine Learning Engineer @huggingface Open Source team

I've started a company: ggml.ai. From a fun side project just a few months ago, ggml has become a useful library and framework for machine learning, with a great open-source community.

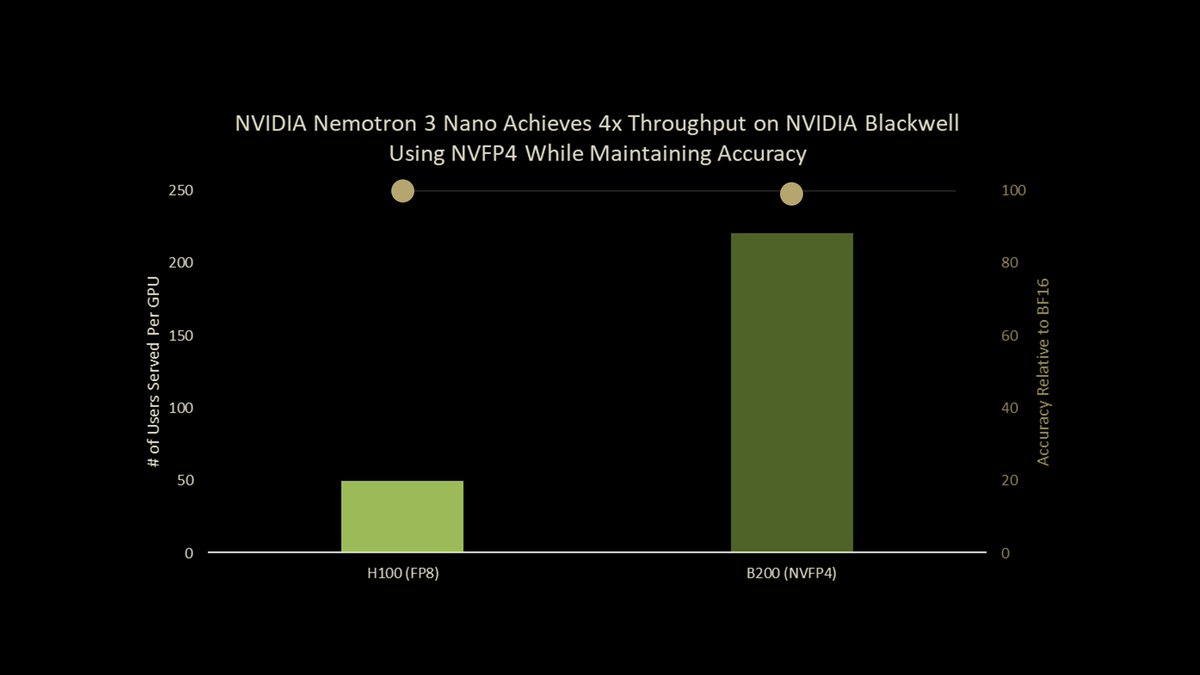

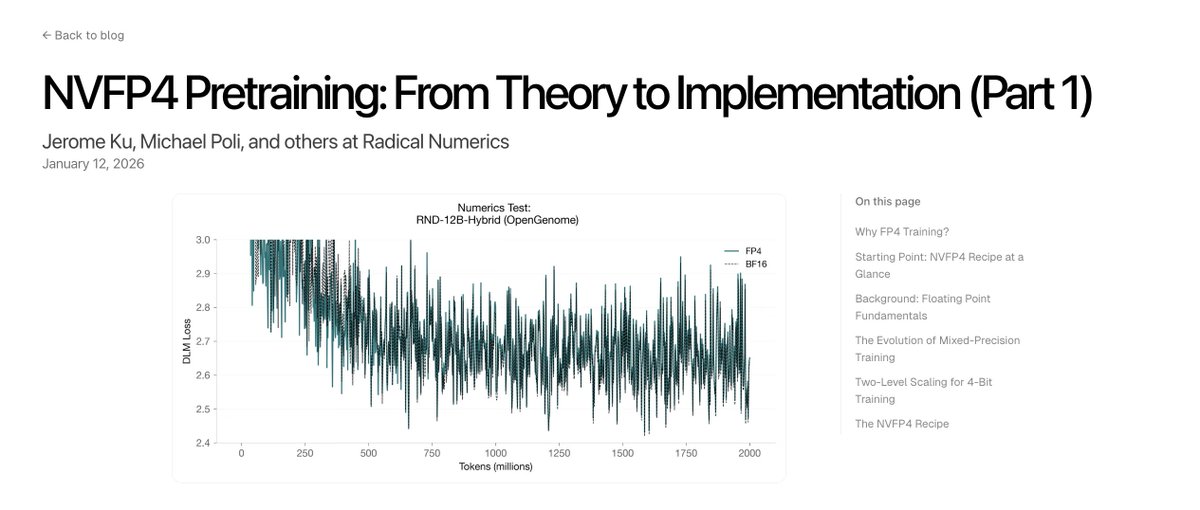

We just launched an ultra-efficient NVFP4 precision version of Nemotron 3 Nano that delivers up to 4x higher throughput on Blackwell B200. Using our new Quantization Aware Distillation method, the NVFP4 version achieves up to 99.4% of BF16 accuracy.

Nemotron 3 Nano NVFP4: nvda.ws/4t63z9y
Tech Report: nvda.ws/4bj3pp0

Transformers v5's FINAL, stable release is out 🔥 Transformers' biggest release. The big Ws of this release:

- Performance, especially for MoE (6x-11x speedups)
- No more slow/fast tokenizers: a way simpler API, explicit backends, and better performance
- Dynamic weight loading: way faster, and it enables MoE to work with {quants, tp, peft, ...}

We have a migration guide on the main branch; please take a look at it in case you run into issues. If you still do after reading it, come to our GH issues 😀