Tigger

@TiggerSharkML

to build is to bless the future

A 7-hour podcast with Saining Xie (@sainingxie)! He has just begun a new startup journey on world models with Yann LeCun at AMI Labs. This was his first podcast appearance and his first interview.

On a day after snowfall in February 2026, in Brooklyn, New York, we started recording at 2 p.m. What followed became an unexpected marathon conversation that lasted until the early hours of the morning.

The Chinese title of the interview is “Escaping Silicon Valley” (《逃出硅谷》). Yet throughout the conversation, he patiently listed everyone who shaped his academic life, repeatedly sketching their personalities in vivid detail: Hou Xiaodi, Kaiming He, Yann LeCun, Fei-Fei Li, and others. These portraits are what give this “escape from Silicon Valley” conversation its human warmth.

By the way, the YouTube version of the interview is below, with Chinese and English subtitles.

And yes, we are using podcasts to model the world 😎

A 7-hour marathon interview with Saining Xie: World Models, AMI Labs, Ya... youtu.be/rIwgZWzUKm8?si… From @YouTube

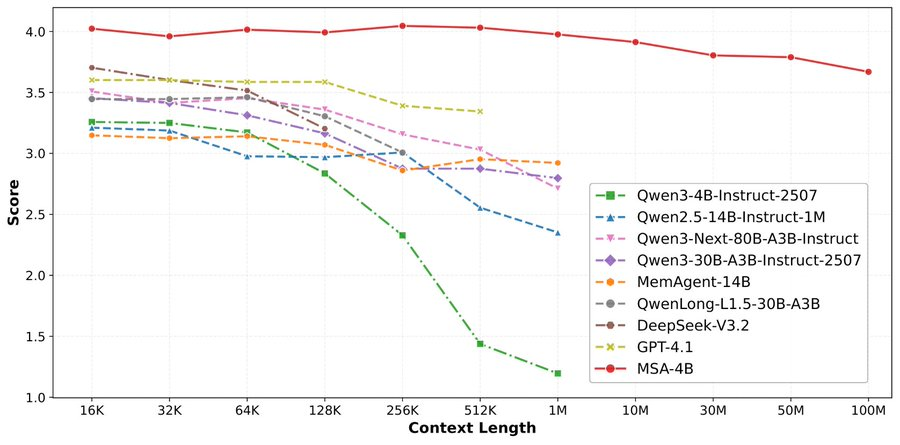

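The paper is out. It is called MSA, Memory Sparse Attention.

What it is, in one sentence: it gives large models native ultra-long memory. Not bolted-on retrieval, not brute-force context-window expansion. "Memory" is built directly into the attention mechanism and trained end to end.

Why did earlier approaches fall short?

RAG is essentially an "open-book exam." The model remembers nothing itself and relies entirely on flipping through its notes on the spot. How accurately it finds things depends on retrieval quality; how fast depends on data volume. Once the information is scattered across dozens of documents and requires cross-document reasoning, it is lost.

Linear attention and KV-cache approaches are essentially "compressed memory." They do remember, but the more they compress, the blurrier it gets, and over long contexts things are lost.

MSA takes a completely different approach:

→ No compression, no bolt-ons. The model learns to "focus on what matters."
The core is a scalable sparse attention architecture with linear complexity. Grow the memory 10x and the compute cost grows roughly in proportion instead of blowing up.

→ The model knows "where this memory came from, and when."
It uses a positional encoding called document-wise RoPE, which lets the model natively understand document boundaries and temporal order.

→ Fragmented information can still be chained into reasoning.
The Memory Interleaving mechanism lets the model do multi-hop reasoning across memory fragments scattered everywhere. It does not just find one relevant record; it links the clues into a chain.

The results?

· Scaling from 16K to 100 million tokens, accuracy degrades by less than 9%
· A 4B-parameter MSA model beats top-tier 235B-class RAG systems on long-context benchmarks
· 100-million-token inference runs on just 2 A800 GPUs. This is not lab-only; it is a cost a startup can afford.

Put plainly, large models used to be extremely smart geniuses with the memory of a goldfish. What MSA wants to do is let them truly "remember."

We have put it on GitHub. The algorithm team worked hard on this, so please drop a star to show some support. 🌟👀🙏 github.com/EverMind-AI/MSA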
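
To make the "focus on what matters" idea concrete, here is a minimal sketch of generic top-k block-sparse attention over a memory. This is not the MSA implementation and is not taken from the paper or the repo: the function name `sparse_memory_attention`, the mean-key block summary, and the `top_k` parameter are all assumptions chosen for illustration. The point it shows is that a query can score cheap per-block summaries first and then run ordinary softmax attention only inside the few selected blocks.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def mean_vector(vectors):
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def sparse_memory_attention(query, blocks, top_k):
    """Hypothetical sketch: attend only to the top_k most relevant memory blocks.

    blocks is a list of (keys, values) pairs; keys and values are lists of
    equal-length vectors. The mean-key summary is an assumption, not MSA's router.
    """
    # 1. Score every block with one dot product against its mean key.
    block_scores = [dot(query, mean_vector(keys)) for keys, _ in blocks]
    # 2. Keep the indices of the top_k highest-scoring blocks, in memory order.
    ranked = sorted(range(len(blocks)), key=lambda i: block_scores[i], reverse=True)
    selected = sorted(ranked[:top_k])
    # 3. Run standard scaled softmax attention over the selected blocks only.
    keys = [k for i in selected for k in blocks[i][0]]
    values = [v for i in selected for v in blocks[i][1]]
    scale = 1.0 / math.sqrt(len(query))
    logits = [dot(query, k) * scale for k in keys]
    peak = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(l - peak) for l in logits]
    total = sum(weights)
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) / total for d in range(dim)]
    return output, selected

# Toy memory with three blocks; only the first one points in the query's direction.
blocks = [
    ([[1.0, 0.0], [0.9, 0.1]], [[1.0, 0.0], [1.0, 0.0]]),
    ([[0.0, 1.0], [0.1, 0.9]], [[0.0, 1.0], [0.0, 1.0]]),
    ([[-1.0, 0.0], [-0.9, -0.1]], [[5.0, 5.0], [5.0, 5.0]]),
]
output, selected = sparse_memory_attention([1.0, 0.0], blocks, top_k=1)
```

In this sketch the per-query work is one summary score per block plus full attention inside `top_k` blocks, so it grows linearly with the number of blocks rather than with every stored token attending to every other. How MSA actually selects memory, and how it is trained end to end, is described in the paper and repo, not here.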