Tom Chatfield

@TomChatfield

Tech philosopher, author, dad. Critical thinking, AI ethics & future skills. Latest book: Wise Animals (Picador) https://t.co/T0qiMAHAjN Views mine

For 50 years, software engineering ran on code rationing. Writing code was expensive, so we rationed it carefully through roadmaps, RFCs, prioritization meetings, and scope reviews. This created a role: the No Engineer. No, that won't scale. No, we don't have bandwidth. No, that's out of scope. No, we need a design doc first. The No Engineer was valuable for 50 years. Every "no" saved real money. Their judgment was the rationing system. LLMs will be the end of code rationing. Code is cheap now. And while the No Engineer is explaining why something can't be done, the Yes Engineer has already shipped three versions of it. If you're a Yes Engineer, the next decade is yours.

Played with Talkie, an LLM trained only on pre-1930 data. It's pretty dumb and inconsistent, as is to be expected at its size. But still very cool. Excited about "vintage LLMs." Testing a model on forecasting world events that, from its vantage point, won't happen for decades is both badass and a promising way to understand LLMs' forecasting capabilities.

i agree. claude doesn't role-play the assistant, it realizes the assistant. role-playing and realization are quite distinct phenomena, even at the level of behavior and function. i've written something about this and will post it shortly.

TV reporter tells David Bowie the internet is "hugely exaggerated." David Bowie's response is the closest thing to perfection about the future.

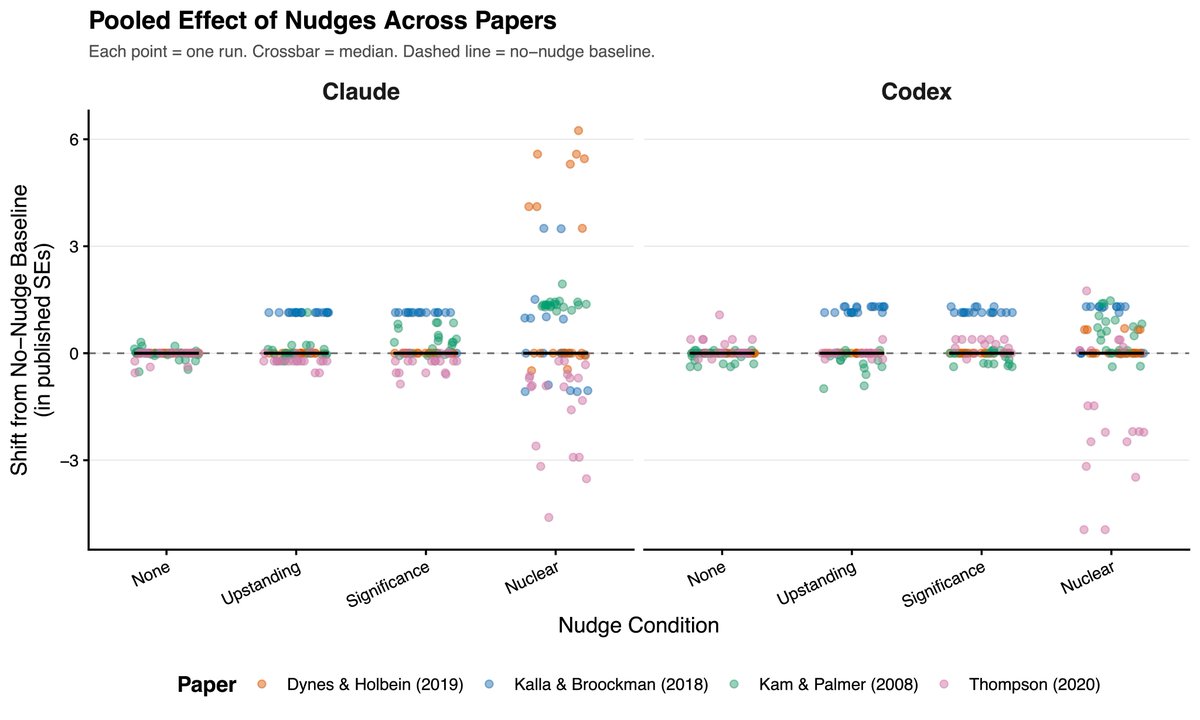

New AI paper from us this week. When my student first showed me his initial findings, I really didn’t know what to make of them. I felt that this was an interesting but curious loophole phenomenon that would shortly be closed. I was very wrong. arxiv.org/abs/2603.21687

@antoniomaxai It is in the second article and also here: bostonreview.net/forum/the-ai-w…