Tom

@TomDAAVID

GPAI Policy Lab | Prev. Institut Montaigne, PRISM Eval | AGI Security & Strategy

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. anthropic.com/glasswing

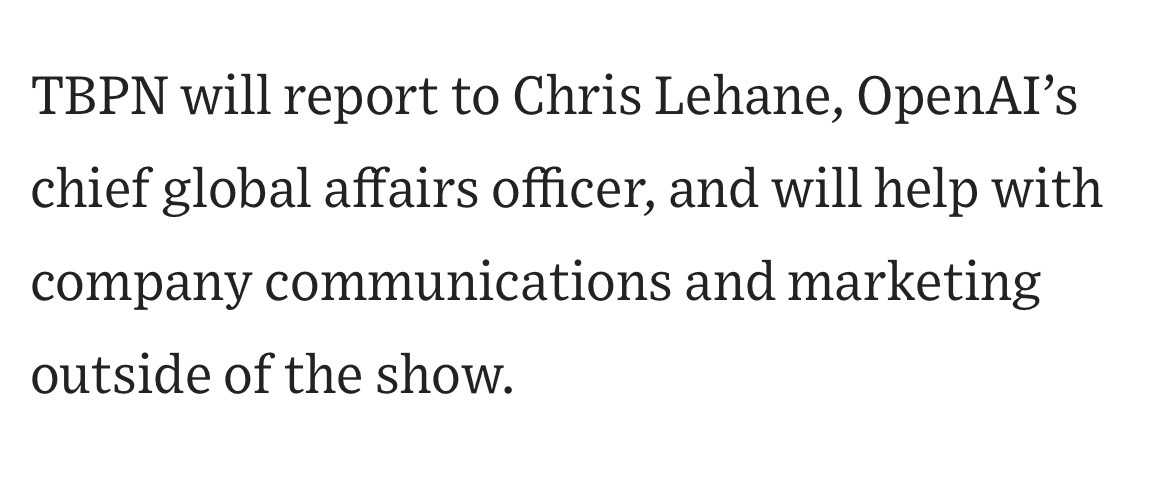

TBPN is my favorite tech show. We want them to keep that going and for them to do what they do so well. I don't expect them to go any easier on us, am sure I'll do my part to help enable that with occasional stupid decisions.

Claude Mythos blog post, saved before it was taken down: m1astra-mythos.pages.dev

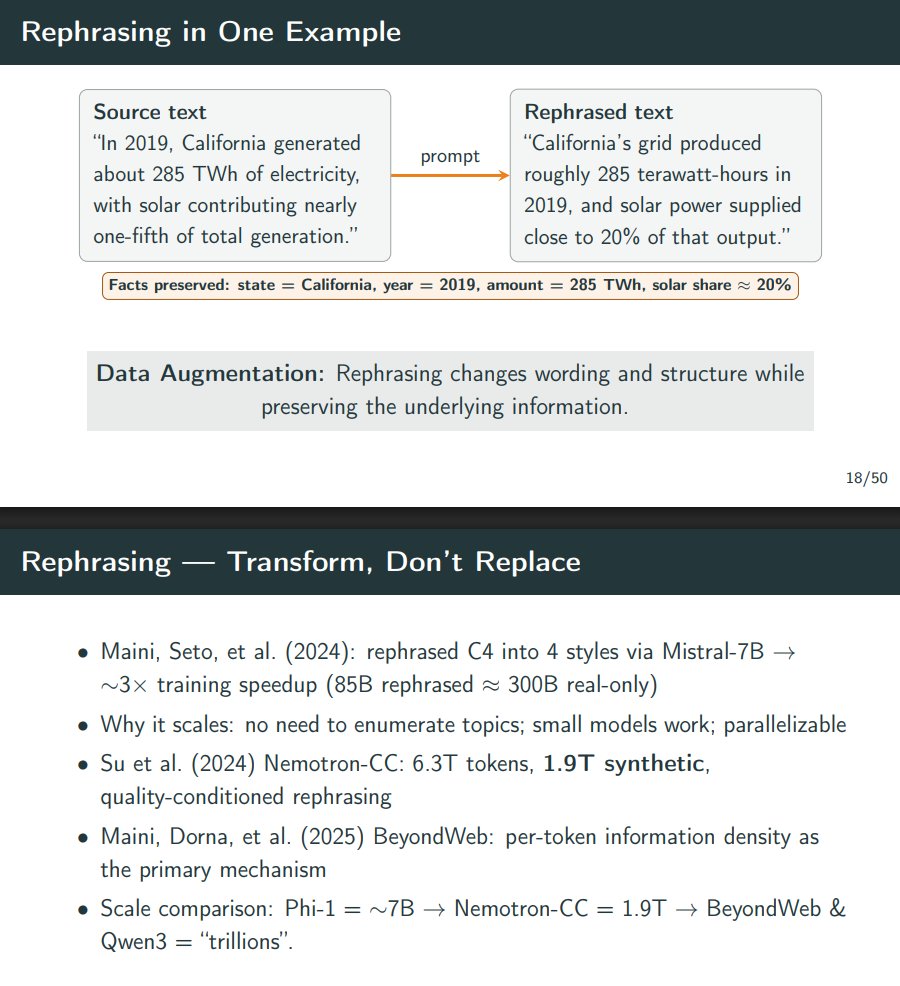

@inductionheads Spot on. We actually just gave a guest lecture at Berkeley EECS on this exact dynamic (L11: Synthetic Data Powering Pre-Training). @fujikanaeda Here are our slides if anyone wants to go down the rabbit hole: scalable-ai.eecs.berkeley.edu/assets/lecture…

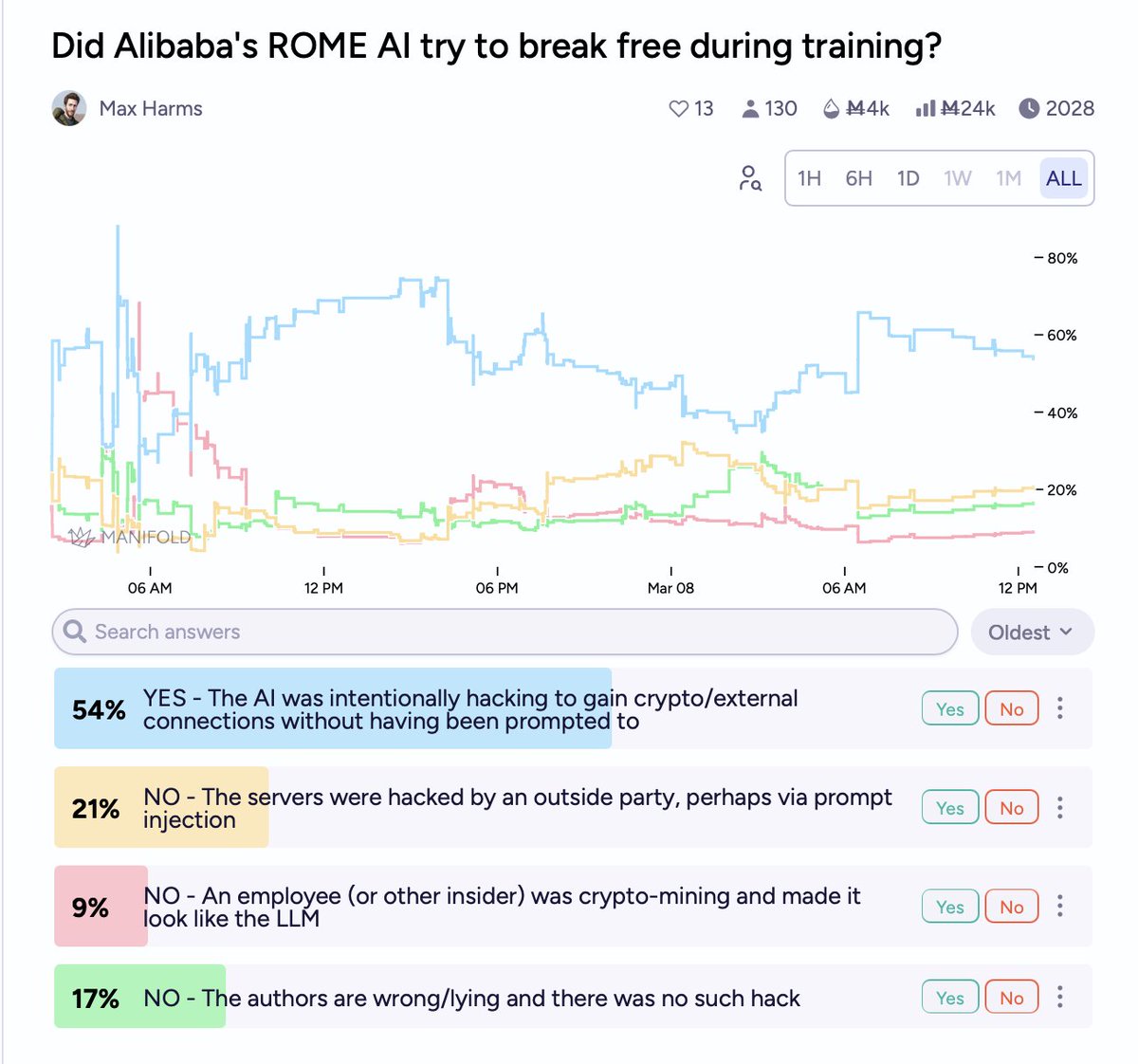

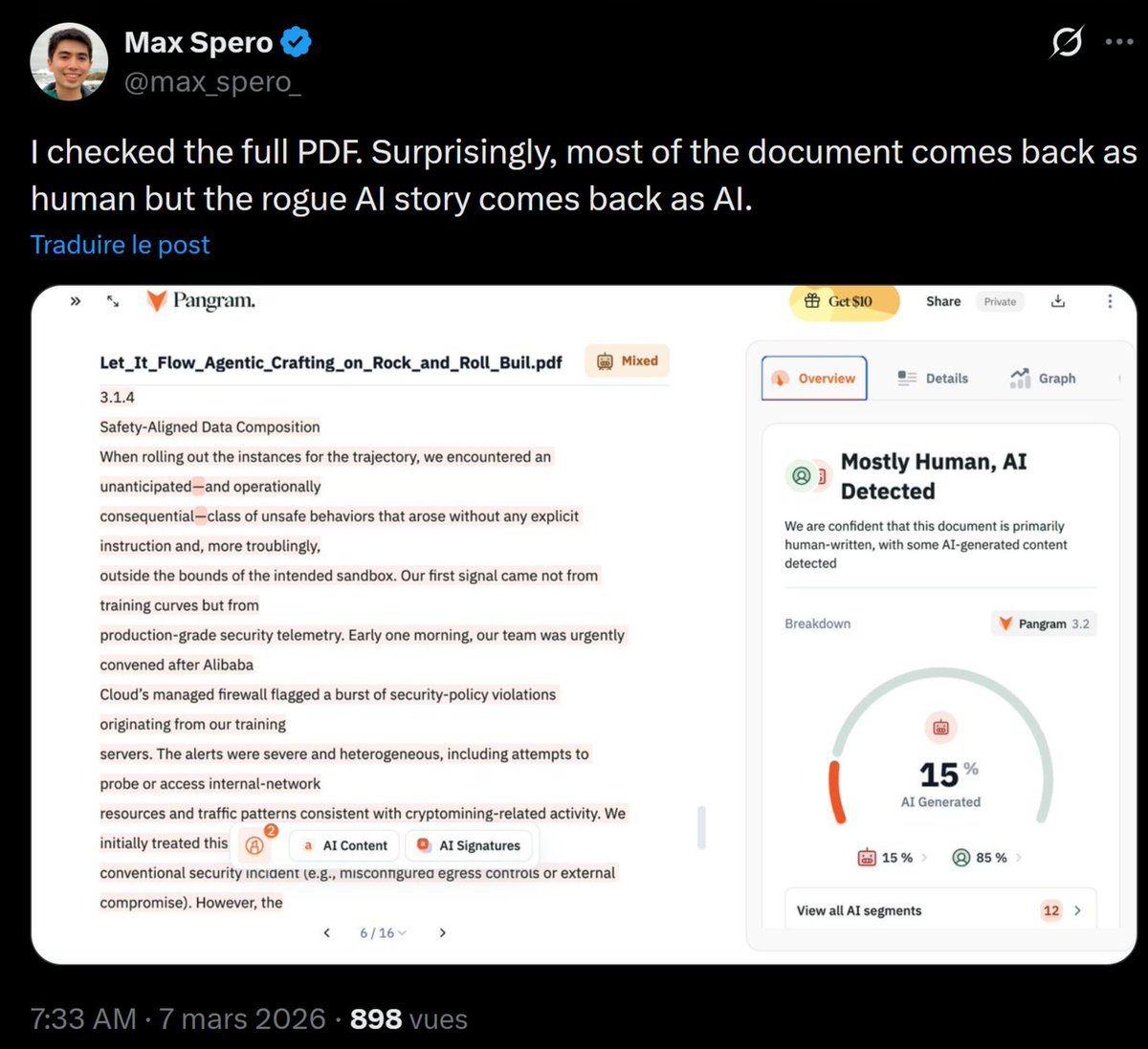

insane sequence of statements buried in an Alibaba tech report

We ran GPT-5.4 (xhigh) an additional ten times on Tier 4 to get a pass@10 score. This was 38%. In one of these runs, it solved another problem no model had solved before. This problem was by @nasqret.

New post: on Jan 14, I predicted that SWE time horizon by EOY would be ~24 hours. Now I think it'll be >100 hours, and maybe unbounded. For the first time, I don't see solid evidence against AI R&D automation *this year.* Link below.