Shreyas Raj

@TopR9595

Build voice agents that talk, sell & close — without you. Automate ops & content using AI tools no one’s telling you about. ⚡ Founder @RapidxAI ⚡

Btw, the proceeds of any legal victory in the OpenAI case will be donated to charity. I will in no way enrich myself.

Voice workflows just got stronger with gpt-realtime-1.5 in the Realtime API. The model offers more reliable instruction following, tool calling, and multilingual accuracy. Demo with @charlierguo

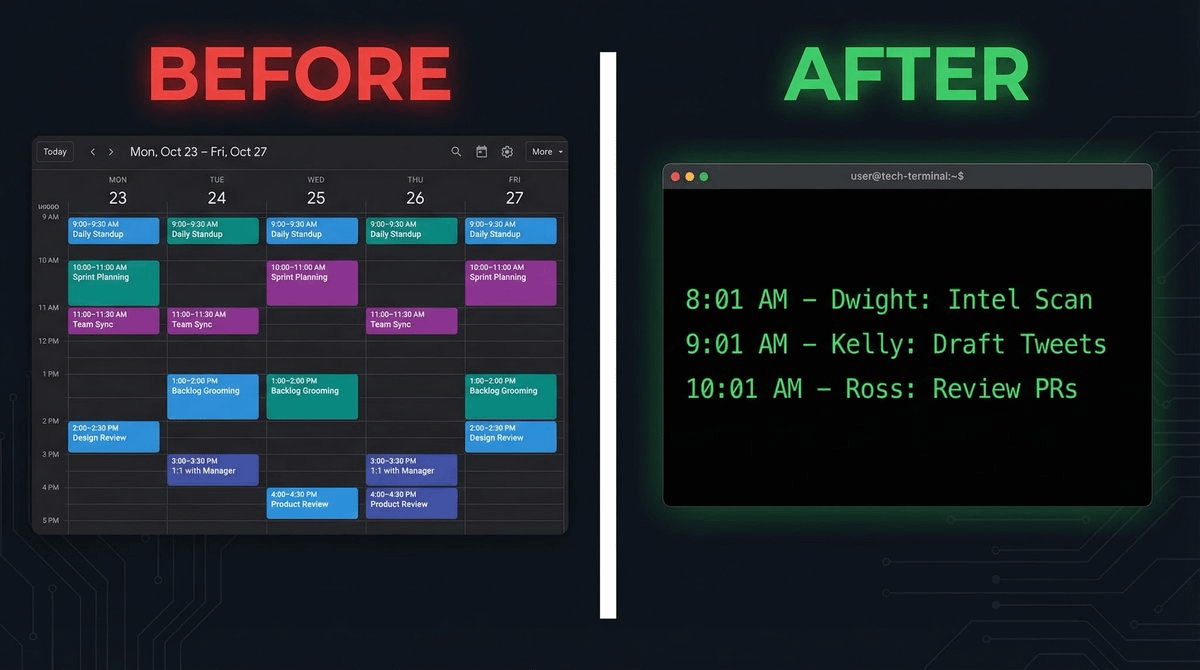

Hyperspace: The Agentic OS Apple Should Have Built

On December 19th, 2024, we announced the world’s first Agentic Browser. What followed was a movement: a new category was born, which led to many early products in this space, and recently the hundreds of people lining up outside The Agentic Browser Summit in San Francisco underscored that. Silicon Valley instinctively gets it: from students to tech executives, people can feel that a revolutionary change in computing is in the air.

The past year taught us why such a product was inevitable, why it is a hard engineering effort, and why it can be the last mover in the entire software world this decade, if and when done right. All paths are headed in the same direction: one tool which orchestrates them all.

At Hyperspace we showed that path with the essays and products we launched in earlier months: from a spatial UI for orchestrating agents, to showing transparently how the AI system operates, which builds user trust, to presenting the software end-game, which massively improves human productivity. We also built the world’s largest AI network, with people in almost 6,000 cities around the world contributing their machines as nodes. Think Uber, but for AI. That is, planetary-scale.

And now we are stretching this ambition further with our end-to-end vision of the Agentic Supercomputer, the first breakthrough new AI OS, an effort which spans AI research, distributed systems, a new UI, and a new business model to complement it. All of this together helps us serve our mission of delivering “Everyone’s Personal Supercomputer”.

While others have built AI-native browsers, no one has built something agentic from the ground up, with AI as the foundation, not a feature.

How do you fundamentally improve the lives of billions around the world?
We believe that requires building a native environment for agents to be viewed, created, deployed, executed, discovered and priced in. That is a world where we move on from static apps to dynamic agents.

But, as my 2-year-old niece likes to ask: “but why?”

The issue is that the world of software today is fragmented, and everyone is sprinkling on AI as a feature and charging a subscription fee for it, from browser makers, to IDEs, to design and other productivity tools. This leads to a fragmented UX: people have to learn to use AI in each app, their memory and other context is not shared between these apps, and they have to pay separately for compute in each AI-enhanced app. Each app maker also has to figure out basics such as compute, which leads to the issues we saw with Cursor pricing recently. This is not the future.

What if AI was the foundation instead of a feature? What if Apple had built a fundamentally new AI OS from the ground up, and what would it have looked like? At Hyperspace, that is what we did. On July 15th we introduced the three key pillars of our AI OS:

1. Agentic Browser
2. Agentic Memory
3. Agentic Payments

And we didn’t stop there. We also introduced a breakthrough new user interface called Spatial AI, inspired by both the spreadsheet and HyperCard: each card is an agent with its own inputs and outputs, endlessly extensible and pluggable with others, just like the cells of a spreadsheet. Update one cell and all the dependents update, like a spreadsheet formula. It goes beyond a static linear workflow to being able to operate in all directions. This revolutionary new interface helps manage all of the below:

1. Multiple websites being browsed in parallel
2. Multiple desktop apps being browsed in parallel
3. Multiple server tools being used in parallel
4. Multiple smartphone apps streamed to your device or opened via an emulator

All the software you need comes together in this one seamless, agent-native interface. This interface gives you access to the largest network of models, vectors, agents and compute on the planet. The Browser. The IDE. The Notepad… they are not separate products: they are all in one, the Agentic Browser. As Steve Jobs famously said at the iPhone announcement, “are you getting it?”

And beneath this UI lies a new intelligence routing layer, leveraging everything from swarms of specialized models to the Hyperspace Matrix model, which recalls thousands of tools in real time, not by context window hacks, but through retrieval, ranking, and reuse. To many, this will feel like AGI. Not one big system by one big company, but an intelligent network.

Now let’s talk about privacy… Are you comfortable with one company owning all your memory forever? I am not. So we have invented Agentic Memory as a new open protocol which gives full power over memory to you, the user. Your memory is yours, encrypted, on your device, and portable if and how you want. Anyone can build on it without our permission, but not without your permission. This protocol, and the decentralized vector database spread out across the world, would enable apps and agents to share context and memory. Think copy-paste, but for the AI world.

It doesn’t just remember; it knows what matters. VectorRank helps your AI weigh your life’s most relevant moments over time, just like the way our minds elevate memories. Now each time you use an agent, your experience with other agents will also continuously improve: you don’t have to keep repeating the same things about yourself, and your privacy is fully preserved. Agentic Memory can be managed from within the Agentic Browser.
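The post does not say how VectorRank works, so the following is only a toy illustration of the general idea of weighing stored memories by both relevance and time. It assumes each memory carries an embedding vector and an age, and it combines cosine similarity with an exponential half-life decay. The `Memory` class, `rank_memories`, and the 30-day half-life are all hypothetical names and values chosen for this sketch, not part of any Hyperspace API.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Memory:
    text: str
    embedding: Sequence[float]  # hypothetical vector representing the memory
    age_days: float             # time elapsed since the memory was stored


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_memories(query: Sequence[float], memories: List[Memory],
                  half_life_days: float = 30.0) -> List[Memory]:
    """Sort memories by similarity to the query, discounted by age.

    A memory loses half of its weight every `half_life_days` (an assumed
    decay rule, used here only for illustration).
    """
    def score(m: Memory) -> float:
        decay = 0.5 ** (m.age_days / half_life_days)
        return cosine(query, m.embedding) * decay

    return sorted(memories, key=score, reverse=True)
```

With a query vector pointing the same way as two memories, the fresher one ranks first, while an old but relevant memory still outranks a fresh, unrelated one. A real system would also need encryption and on-device storage, which this sketch leaves out.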
And there is one more thing… AI as the foundation requires compute to be available at the base layer, and this base layer spans models running on your own device, cloud APIs, and the peer-to-peer distributed network. Agentic Payments provides a single interface to all of that compute, running a spot-auction clearing marketplace every second to determine the fair price of compute. This results in price transparency, with you as the user paying the lowest possible cost. If you want predictability, you can reserve compute in advance. This end-to-end system provides the most streamlined world for agents to operate in.

To enable this world, in which agents can pay each other in sub-cent increments millions of times a second, we also had to invent a fundamentally new agentic micropayments blockchain. All of this together would enable a world where you as a user, or the agent itself, can efficiently call and use agents built by others, and also pay for content which is unique and useful. This enables a move away from the current exploitative AI economy for bloggers and other content creators, to a web with a fundamentally new business model. Earlier we didn’t have the right infrastructure to enable such a world. Now, all the dots connect.

The Hyperspace AI OS would put the power of a supercomputer in everyone’s hands. This isn’t a browser or an IDE, nor is it limited to any device or cloud. It’s an entire AI operating system, with a breakthrough new spatial UI, local and distributed compute, agentic memory, agentic payments, and orchestration built into the foundation. As a user, you get the choice back in your hands, with an experience you will love and find delightful. You get to choose the level of privacy, cost, and utility you want.

And while Apple should have done it, we could not wait, and we feel this required a new level of passion and DNA, which we bring here. We are just getting started.
Thank you,
Varun Mathur
Cofounder and CEO, Hyperspace

cc @naval @pmarca @vkhosla @karpathy @sama
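As a rough picture of what one round of the per-second spot-auction clearing mentioned in the Agentic Payments part might look like: the post gives no details of the actual mechanism, so this sketch assumes a simple uniform-price double auction in which buyers submit bids, compute providers submit asks, and every matched unit trades at the price of the most expensive accepted ask. The function name `clear_round` and the `(price, units)` order format are hypothetical.

```python
from typing import List, Tuple

Order = Tuple[float, int]  # (price per compute unit, number of units)


def clear_round(bids: List[Order], asks: List[Order]) -> Tuple[float, int]:
    """Clear one auction round; return (clearing_price, units_traded).

    Returns (0.0, 0) when no bid is high enough to meet any ask.
    """
    bids = sorted(bids, key=lambda o: -o[0])  # highest willingness to pay first
    asks = sorted(asks, key=lambda o: o[0])   # cheapest supply first
    i = j = 0
    bid_left = bids[0][1] if bids else 0
    ask_left = asks[0][1] if asks else 0
    traded = 0
    price = 0.0
    # Keep matching while the best remaining bid still covers the best ask.
    while i < len(bids) and j < len(asks) and bids[i][0] >= asks[j][0]:
        qty = min(bid_left, ask_left)
        traded += qty
        price = asks[j][0]  # the marginal accepted ask sets the uniform price
        bid_left -= qty
        ask_left -= qty
        if bid_left == 0:   # this bid is fully filled, move to the next one
            i += 1
            bid_left = bids[i][1] if i < len(bids) else 0
        if ask_left == 0:   # this ask is fully sold, move to the next one
            j += 1
            ask_left = asks[j][1] if j < len(asks) else 0
    return price, traded
```

Pricing at the marginal ask is only one common convention; other designs use the marginal bid or a midpoint. Reserved compute and the sub-cent payment settlement described in the post are outside the scope of this sketch.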