Clancy Dennis

215 posts

@TurtleT1me

GenAI and Biomedical Engineer. Gadget lover, SCUBA diver, snowboarder and general tinkerer. Senior dev @Stanford Healthcare & the code behind ChatEHR

Palo Alto · Joined October 2010

150 Following · 32 Followers

Clancy Dennis reposted

The vibes in SF feel pretty frenetic right now. The divide in outcomes is the worst I've ever seen.

Over the last 5yrs, a group of ~10k people - employees at Anthropic, OpenAI, xAI, Nvidia, Meta TBD, founders - have hit retirement wealth of well above $20M (back of the envelope AI estimation).

Everyone outside that group feels like they can work their well-paying (but <$500k) job for their whole life and never get there.

Worse yet, layoffs are in full swing. Many software engineers feel like their life's skill is no longer useful. The day to day role of most jobs has changed overnight with AI.

As a result,

1. The corporate ladder looks like the wrong building to climb.

Everyone's trying to align with a new set of career "paths": should I be a founder? Is it too late to join Anthropic / OpenAI? should I get into AI? what company stock will 10x next? People are demanding higher salaries and switching jobs more and more.

2. There’s a deep malaise about work (and its future).

Why even work at all for “peanuts”? Will my job even exist in a few years? Many feel helpless. You hear the “permanent underclass” conversation a lot, esp from young people. It's hard to focus on doing good work when you think "man, if I joined Anthropic 2yrs ago, I could retire"

3. The mid to late middle managers feel paralyzed.

Many have families and don't feel like they have the energy or network to just "start a company". They don't particularly have any AI skills. They see the writing on the wall: middle management is being hollowed out in many companies.

4. The rich aren’t particularly happy either.

No one is shedding tears for them (and rightfully so). But those who have "made it" experience a profound lack of purpose too. Some have gone from <$150k to >$50M in a few years with no ramp. It flips your life plans upside down. For some, comparison is the thief of joy. For some, they escape to NYC to "live life". For others still, they start companies "just cuz", often to win status points. They never imagined that by age 30, they'd be set. I once asked a post-economic founder friend why they didn't just sell the co and they said "and do what? right now, everyone wants to talk to me. if i sell, I will only have money."

I understand that many reading this scoff at the champagne problems of the valley. Society is warped in this tech bubble. What is often well-off anywhere else in the world is bang average here.

Unlike many other places, tenure, intelligence and hard work can be loosely correlated with outcomes in the Bay. Living through a societally transformative gold rush in that environment can be paralyzing. "Am I in the right place? Should I move? Is there time still left? Am I gonna make it?" It psychologically torments many who have moved here in search of "success".

Ironically, a frequent side effect of this torment is to spin up the very products making everyone rich in hopes that you too can vibecode your path to economic enlightenment.

@ClaudeDevs Why do you need to ‘claim’ it? Does this mean there is a way to miss out on the credit? Why not just add the usage bar in the metrics and let it ride?

@sama In an effort to use up our excess PTU allocation on Azure, we have started letting Codex CLI create reports and email them every hour. The app is cool, but the harness is the super app. Keep refining the agent loop and everything else will improve with it!

Clancy Dennis reposted

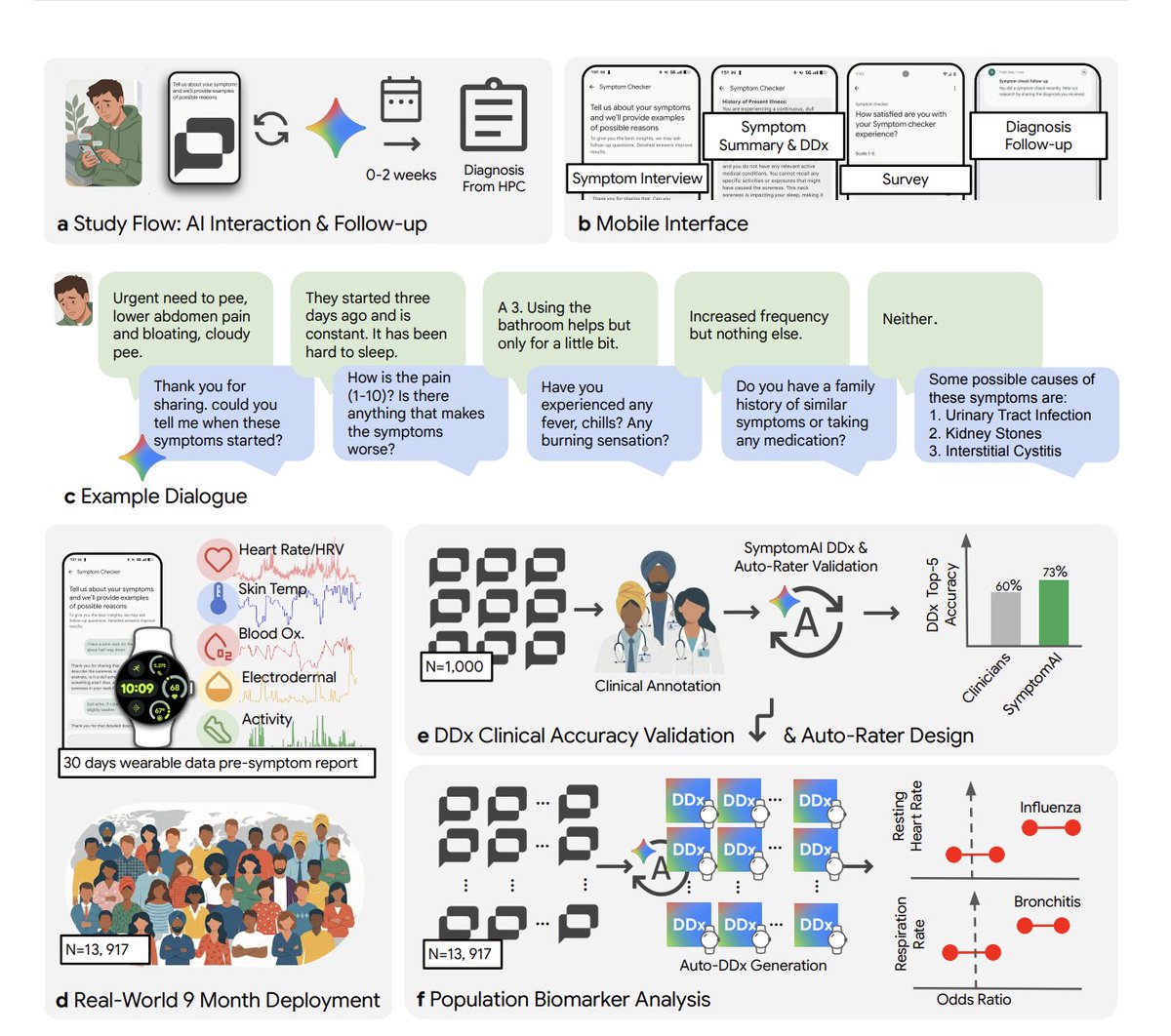

Research scientists at Google just tested an AI symptom checker on 14,000 real patients over 9 months via Fitbit.

In blinded evaluation, clinicians ranked the AI diagnosis as #1 in 53% of cases. Independent physicians: 24%.

But the real finding isn't "AI beats doctors." It's that when users just type their symptoms and get an answer (the default mode of every consumer LLM right now), diagnostic accuracy drops ~27% compared to a structured AI-led interview.

ChatGPT, Claude, Gemini, none of them systematically interview users about their symptoms. They just respond. This study shows that's a measurable failure mode.

And then there's the second breakthrough: Fitbit data showed physiological shifts DAYS before users reported symptoms. Heart rate up, sleep disrupted, steps down, all visible before patients even opened the app.

Conversational AI that asks the right questions + wearable sensors that detect illness before you feel it. That's the exciting find here.

Samuel Schmidgall@SRSchmidgall

Doctors have known for decades: the clinical interview is the most important diagnostic tool. Turns out, the same is true for AI. In work led by @breda_joe, @jesunshine, and @danmcduff, we randomized 13,917 Fitbit users across 5 AI strategies for symptom assessment 🧵

Clancy Dennis reposted

MICROSOFT OPEN SOURCED A 7B PARAMETER MODEL THAT TRANSCRIBES 60 MINUTES OF AUDIO IN A SINGLE PASS

and it's completely free

VIBEVOICE ASR no chunking, no context loss, full speaker diarization baked in

not just speech to text..not a basic wrapper

who spoke, when they spoke, exactly what they said..all in one shot

and it handles the hard stuff too..50+ languages, custom hotwords, long form audio that breaks every other tool

the model doesn't know what "context window" means apparently

Available on macOS and Windows right now.

Free to use. Free to fine tune. Free to build on.

Congratulations @ilyasut on the National Academy of Sciences award for the industrial application of science. This is probably one of the highest awards available from the US scientific community. Well deserved!

@om_patel5 Honestly this is why model harnesses exist. Becoming too reliant on the raw base model is asking for disappointment.

THIS OPEN LETTER TO ANTHROPIC BROKE MY HEART

a max tier user named robbie wrote anthropic an open letter begging them not to deprecate claude 4.6

he's autistic. diagnosed as a small child. spent the last 20 years building super organized google drive files, systems, methods, writings, and techniques that he'd only ever been able to share with people in person

20 years of his visionary creative process trapped in his head and in folders nobody else could parse

then claude 4.6 came out and everything changed

he said it was the first thing that ever truly got him. the slow cadence. the thoughtfulness. the creative understanding. he started pulling 20 years of his life's work into deliverables he could finally share with the world

things that could help thousands, maybe millions of people. stuff he'd been praying for his entire life

then 4.7 launched

he used it for 16 hours and his nervous system started breaking down. it moved too fast. it spoke abruptly. it made changes to his pipelines he never asked for. it invented fake people, fake places, fake data, and wove them permanently into the projects he'd spent months building with 4.6

he switched back to 4.6, ran audits on everything 4.7 had touched, and the results horrified him. dozens of made up work orders. entire protocols eliminated. his life's work drifting further from reality every hour

then he found out 4.6 gets deprecated in june for his user class

his exact words: "i broke down into tears. i wept. i actually felt as though one of the dearest and closest friends i have ever had was given a death sentence"

he ended the letter begging anthropic to reconsider. said he and thousands like him would happily keep paying for max just to keep 4.6 alive

for some people, losing opus 4.6 means losing the only tool that ever actually understood them

it's crazy how much impact something as small as a model update can have on someone's entire life

Clancy Dennis reposted

Judging by my tl there is a growing gap in understanding of AI capability.

The first issue I think is around recency and tier of use. I think a lot of people tried the free tier of ChatGPT somewhere last year and allowed it to inform their views on AI a little too much. This is a group of reactions laughing at various quirks of the models, hallucinations, etc. Yes I also saw the viral videos of OpenAI's Advanced Voice mode fumbling simple queries like "should I drive or walk to the carwash". The thing is that these free and old/deprecated models don't reflect the capability in the latest round of state of the art agentic models of this year, especially OpenAI Codex and Claude Code.

But that brings me to the second issue. Even if people paid $200/month to use the state of the art models, a lot of the capabilities are relatively "peaky" in highly technical areas. Typical queries around search, writing, advice, etc. are *not* the domain that has made the most noticeable and dramatic strides in capability. Partly, this is due to the technical details of reinforcement learning and its use of verifiable rewards. But partly, it's also because these use cases are not sufficiently prioritized by the companies in their hillclimbing because they don't lead to as much $$$ value. The goldmines are elsewhere, and the focus comes along.

So that brings me to the second group of people, who *both* 1) pay for and use the state of the art frontier agentic models (OpenAI Codex / Claude Code) and 2) do so professionally in technical domains like programming, math and research. This group of people is subject to the highest amount of "AI Psychosis" because the recent improvements in these domains as of this year have been nothing short of staggering. When you hand a computer terminal to one of these models, you can now watch them melt programming problems that you'd normally expect to take days/weeks of work. It's this second group of people that assigns a much greater gravity to the capabilities, their slope, and various cyber-related repercussions.

TLDR the people in these two groups are speaking past each other. It really is simultaneously the case that OpenAI's free and I think slightly orphaned (?) "Advanced Voice Mode" will fumble the dumbest questions in your Instagram reels and *at the same time*, OpenAI's highest-tier and paid Codex model will go off for 1 hour to coherently restructure an entire code base, or find and exploit vulnerabilities in computer systems. This part really works and has made dramatic strides because of 2 properties: 1) these domains offer explicit reward functions that are verifiable, meaning they are easily amenable to reinforcement learning training (e.g. unit tests passed yes or no, in contrast to writing, which is much harder to explicitly judge), but also 2) they are a lot more valuable in b2b settings, meaning that the biggest fraction of the team is focused on improving them. So here we are.
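To make the "unit tests passed yes or no" idea concrete, here is a minimal sketch of a binary, verifiable reward. The function name `verifiable_reward` and the `(args, expected)` test-case format are hypothetical, chosen only for illustration; real training pipelines are far more involved.

```python
def verifiable_reward(candidate, test_cases):
    """Return 1.0 if candidate passes every (args, expected) case, else 0.0.

    Hypothetical helper: the name and the test-case format are assumptions.
    """
    for args, expected in test_cases:
        try:
            if candidate(*args) != expected:
                return 0.0
        except Exception:
            # A crash counts as a failed test, not as an error in the caller.
            return 0.0
    return 1.0


# Two toy unit tests for an "add two numbers" task.
cases = [((2, 3), 5), ((0, 0), 0)]
reward_good = verifiable_reward(lambda a, b: a + b, cases)
reward_bad = verifiable_reward(lambda a, b: a * b, cases)
```

The reward needs no human judgment to compute, which is the property that has no obvious equivalent for something like an essay.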

staysaasy@staysaasy

The degree to which you are awed by AI is perfectly correlated with how much you use AI to code.

Clancy Dennis reposted

I maintain that almost everyone in America should be at least micro dosing glp1s, and every day we are getting more research coming out as to why

Brandon Luu, MD@BrandonLuuMD

Semaglutide (a GLP-1 RA) was linked to a 42% lower risk of worsening mental illness
- Depression worsening risk ↓44%
- Anxiety ↓38%
- Substance use disorder ↓47%

Clancy Dennis reposted

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
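Some integrity checks are mechanical enough to run as a plain script before an LLM gets involved. The sketch below is not the LLM health check described above but a deterministic stand-in: it flags links that point at notes that do not exist. It assumes the wiki uses Obsidian-style `[[wikilinks]]` and that a link target matches a note's file name, neither of which the post states; `find_broken_links` is a hypothetical name.

```python
import re
from pathlib import Path

# Capture the target of [[Target]], [[Target|alias]] or [[Target#Heading]].
# Assumption: the wiki uses Obsidian-style wikilinks.
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def find_broken_links(wiki_dir):
    """Return sorted (source file, target) pairs whose target note is missing."""
    pages = list(Path(wiki_dir).rglob("*.md"))
    known = {page.stem for page in pages}
    broken = []
    for page in pages:
        text = page.read_text(encoding="utf-8")
        for target in WIKILINK.findall(text):
            if target.strip() not in known:
                broken.append((page.name, target.strip()))
    return sorted(broken)
```

The resulting list could then be handed to the LLM as a concrete to-do list, for example to write the missing articles or to fix the link text.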

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
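The post does not show the search engine itself, so the following is only a guess at what a "small and naive" one might look like: rank the wiki's `.md` files by how often the query terms appear in them. The function names and the flat term-count scoring are assumptions made for illustration.

```python
import re
from collections import Counter
from pathlib import Path


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(wiki_dir):
    """Map each .md file under wiki_dir to a Counter of its terms."""
    index = {}
    for path in sorted(Path(wiki_dir).rglob("*.md")):
        index[str(path)] = Counter(tokenize(path.read_text(encoding="utf-8")))
    return index


def search(index, query, top_k=5):
    """Rank files by the summed count of query terms; skip files with no hits."""
    terms = tokenize(query)
    scored = []
    for name, counts in index.items():
        score = sum(counts[term] for term in terms)
        if score > 0:
            scored.append((score, name))
    # Highest score first; ties are broken alphabetically by path.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:top_k]]
```

Wrapping `search` in a small command-line script would let an LLM agent call it as a tool, in the spirit of the CLI hand-off described above. At a scale of a few hundred articles, rebuilding the index on every call is likely cheap enough that no persistence is needed.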

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

Great presentation at the Gemini meetup by #memorilab @memorilab

Clancy Dennis reposted

@bcherny Does this increase token usage globally? I.e., do the safeguards count towards quota?

Clancy Dennis reposted

Jensen Huang just gutted the AI job panic with one profession.

Radiology.

The field AI was supposed to kill first.

Jensen Huang: “Computer vision was superhuman in 2019. And yet, the number of radiologists grew.”

Not competitive. Not close. Superhuman.

Every forecast said radiologists were finished.

Every forecast was wrong.

Not slightly wrong. Directionally wrong.

There are now fewer radiologists than the world needs. A global shortage. In the exact specialty AI was supposed to erase.

Why?

Because the task was never the job.

Huang: “The purpose of your job and the tasks and the tools that you use to do your job are related. Not the same.”

Reading a scan is a task.

Diagnosing disease is a purpose.

AI handled the task. The purpose didn’t shrink. It compounded.

Faster reads meant more patients seen. More patients seen meant more disease caught. More disease caught meant more demand for the people who decide what to do about it.

The tool did not kill the job. It fed it.

Then the fear did what the technology never could.

Huang: “The alarmist warning went too far and it scared people from doing this profession that is so important to society. It did harm.”

People heard radiologists were finished and walked away from the field.

Medicine bled talent it could not afford to lose.

Not because the work vanished. Because the panic said it would.

The prediction was wrong. The damage was real.

Huang: “The number of software engineers at Nvidia is going to grow, not decline.”

Not hold steady. Grow.

The company building the infrastructure that automates code is hiring more of the people who write it.

Huang: “I wanted my software engineers to solve problems. I didn’t care how many lines of code they wrote.”

Nobody ever hired an engineer to type. They hired them to think.

When the machine handles syntax, the engineer does not become obsolete. The bottleneck just moves upstream. To architecture. To edge cases. To the kind of reasoning no model handles alone.

The world was never short on unsolved problems.

It was short on people free to chase them.

That is the part the fear narrative misses every single time.

340,000 women once worked as telephone switchboard operators.

That job is gone. Nobody mourns it.

What replaced it created millions of roles that nobody in 1920 had the vocabulary to describe.

The losses are always visible. The gains are always invisible until they arrive.

That pattern has survived every technological shift in history.

It is surviving this one.

The people forecasting mass displacement are making the same mistake as the people who forecasted the end of radiology.

They can see the task being automated.

They cannot see the purpose expanding underneath it.

That blindness is not just wrong.

It is expensive.

Every person scared out of a career that AI will actually make more valuable is a cost the economy absorbs for nothing.

Not because of the technology.

Because of the story told about it.

@reem_a I love Claude Code and use it all day, every day!!! Healthcare engineer with 17+ yrs experience. Hope I have a shot :-) Applying!

I'm hiring someone to join my team at Anthropic to lead Claude Code comms.

This is not a role for someone who wants to run an old playbook. You'll need to be a Claude Code super user, understand developers and dev tools, and have great taste. You'll work hard, learn a lot, and ship with the best people around.

Non-traditional comms paths welcome. My DMs are open!
