Untethered

PM Carney to announce clean electricity strategy today bnnbloomberg.ca/business/polit…

🚨 After everything Canadians have witnessed in 2026: 👇 📍110,000 jobs gone 📍 4.5M living in poverty 📍Biggest house price drop ever 📍Construction falling 18.1% 📍Debt taller than space 🚀 📍Poorer than Alabama 📍Bengali ballots at nominations 🗳️ 📍Pension eyed for Net Zero 💸 📍Elbows up then deeper integration 🕺 📍0.5% of HIS money in Canada I have ONE question for every Canadian. 👇 #CdnPoli #ElbowsUp #WakeUpCanada 🇨🇦

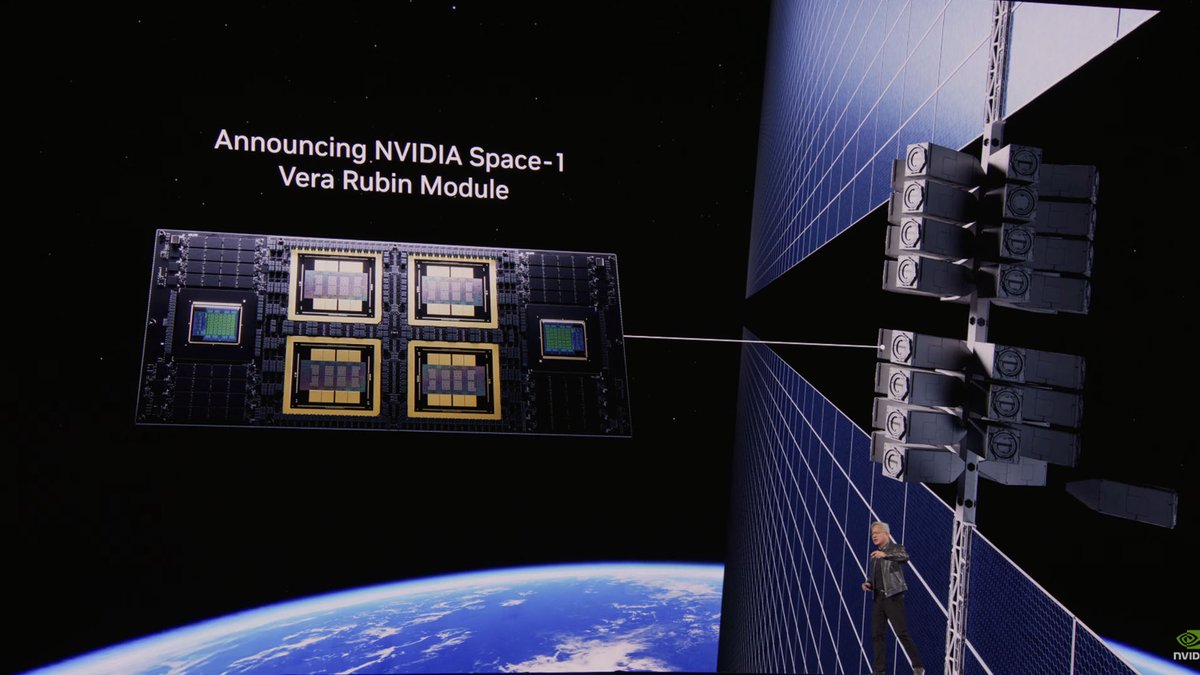

What the SpaceX–Anthropic Deal Means

Two weeks ago, we published a note laying out what GPT-5.5's release implied. The conclusion was simple: whoever secures compute first, in greater volume, and with greater reliability ultimately wins. With OpenAI's 30GW roadmap dwarfing Anthropic's 7–8GW, we closed by arguing that the structural advantage on compute sat with OpenAI.

Less than a fortnight later, that conclusion is being tested. On May 6, Anthropic signed a single-tenant lease for the entirety of Colossus 1 with SpaceXAI, the infrastructure subsidiary that consolidates Elon Musk's xAI and SpaceX. The asset carries more than 220,000 GPUs and 300MW of power and, crucially, is scheduled to come online this month. It served as the capstone of Anthropic's April blitz, which added 13.8GW of capacity in a single month. On headline numbers alone, OpenAI took more than a year to stack 18GW; Anthropic has put 13.8GW in the ground in thirty days.

There are three takeaways.

First, the compute pecking order has been redrawn again. Anthropic has now swept up the AWS expansion (5GW, with $100B+ in spend commitments over a decade), Google + Broadcom (3.5GW of TPU), Google Cloud (5GW alongside a $40B investment), and now SpaceXAI's Colossus 1 (0.3GW). Cumulative committed capacity, inclusive of pre-April allocations, sits at 14.8GW. This is still only about half of OpenAI's 2030 target of 30GW, but the fact that the SpaceX lease will be live inside a month makes "deliverability" a qualitatively different proposition.

Second, Elon Musk is the plaintiff in an active lawsuit against OpenAI and, at the same time, the supplier handing 220,000+ GPUs and 300MW of power, in one block, to OpenAI's most formidable competitor. The timing matters: the deal was struck in the middle of the Musk–Altman trial. We read this as a deliberate pincer with OpenAI in the middle. In the courtroom, Musk works to dismantle the moral legitimacy of OpenAI's leadership; in the market, he arms Anthropic to absorb OpenAI's revenue and user base.

Third, the structure is financial-engineering perfection: a clean win-win for both sides. xAI can recognize $6B of annual revenue from a single contract, an amount that almost precisely offsets its Q1 2026 annualized net loss of $6B. It also accelerates the cleanup of SpaceXAI's pre-IPO balance sheet, with the entity now being floated at around $1.75T. Anthropic, on the other side, converts roughly $5B of spend into what it expects to be $15B of ARR via the coming inference-revenue surge.

(Mirae Asset Securities, May 8, 2026)
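
As a quick sanity check on the capacity arithmetic in the note, the quoted figures can be tallied in a few lines. This is only a sketch: the dictionary labels and variable names are shorthand chosen here, and the "implied pre-April" number is derived from the quoted totals rather than stated in the note.

```python
from decimal import Decimal

# Capacity figures in GW, copied from the note; labels are shorthand.
april_deals_gw = {
    "AWS expansion": Decimal("5"),
    "Google + Broadcom (TPU)": Decimal("3.5"),
    "Google Cloud": Decimal("5"),
    "SpaceXAI Colossus 1": Decimal("0.3"),
}

# Sum of the four deals the note attributes to the April blitz.
april_total_gw = sum(april_deals_gw.values(), Decimal("0"))

cumulative_gw = Decimal("14.8")    # quoted, includes pre-April allocations
openai_target_gw = Decimal("30")   # quoted 2030 target for OpenAI

# Derived here, not stated in the note: what the totals imply for pre-April.
implied_pre_april_gw = cumulative_gw - april_total_gw

# Anthropic's quoted cumulative capacity as a share of OpenAI's target.
share_of_target = cumulative_gw / openai_target_gw

print(f"April additions: {april_total_gw} GW")
print(f"Implied pre-April allocation: {implied_pre_april_gw} GW")
print(f"Share of OpenAI 2030 target: {share_of_target:.1%}")
```

The four deals do sum to the quoted 13.8GW, and 14.8GW is a little under half of the 30GW target, consistent with the note's wording.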