Shangshang Wang

@UpupWang

PhD @CSatUSC | LLM & RL | Intern @Alibaba_Qwen | Prev. Intern @bespokelabsai @bluelightai

We now know that LoRA can match full-parameter RL training (from x.com/thinkymachines… and our Tina paper arxiv.org/abs/2504.15777), but what about DoRA, QLoRA, and more? We are releasing a clean LoRA-for-RL repo to explore them all. github.com/shangshang-wan…

Introducing Tinker: a flexible API for fine-tuning language models. Write training loops in Python on your laptop; we'll run them on distributed GPUs. Private beta starts today. We can't wait to see what researchers and developers build with cutting-edge open models! thinkingmachines.ai/tinker

We now know that LoRA can match full-parameter RL training (from x.com/thinkymachines… and our Tina paper arxiv.org/abs/2504.15777), but what about DoRA, QLoRA, and more? We are releasing a clean LoRA-for-RL repo to explore them all. github.com/shangshang-wan…

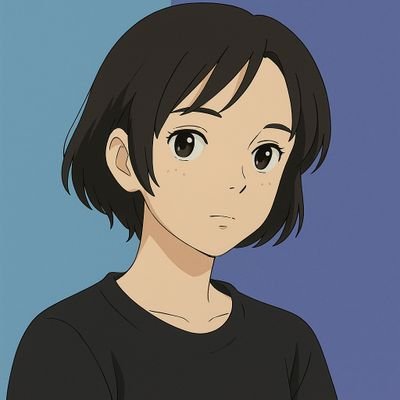

One last time, say it clearly: LoRA GRPO at rank 1, on all layers, gives the same performance with 40% of the VRAM.

LoRA makes fine-tuning more accessible, but it's unclear how it compares to full fine-tuning. We find that the performance often matches closely---more often than you might expect. In our latest Connectionism post, we share our experimental results and recommendations for LoRA. thinkingmachines.ai/blog/lora/

Much more convinced after getting my own results: LoRA with rank=1 learns (and generalizes) as well as full fine-tuning while saving 43% of VRAM usage! This lets me RL bigger models with limited resources😆 script: github.com/sail-sg/oat/bl…
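
For readers unfamiliar with what "rank=1" means here, the following is a minimal sketch of a LoRA update on a single linear layer, y = Wx + (alpha / r) · B(Ax), together with its trainable-parameter count. The function names, the 4096-wide layer, and the `alpha` scaling default are illustrative assumptions; none of this is taken from the linked script or repos. Parameter count is only one part of memory use, so the sketch does not reproduce the VRAM savings quoted in the posts.

```python
def full_param_count(d_in, d_out):
    """Trainable parameters when the whole d_out x d_in weight is updated."""
    return d_in * d_out


def lora_param_count(d_in, d_out, rank=1):
    """Trainable parameters for LoRA: A is rank x d_in, B is d_out x rank."""
    return rank * (d_in + d_out)


def lora_forward(x, W, A, B, alpha=1.0):
    """Compute y = W x + (alpha / rank) * B (A x) for one input vector x.

    W stays frozen; only A and B would be trained. Plain lists are used
    so the sketch has no dependencies.
    """
    rank = len(A)
    Wx = [sum(w * xi for w, xi in zip(row, x)) for row in W]
    Ax = [sum(a * xi for a, xi in zip(row, x)) for row in A]
    BAx = [sum(b * h for b, h in zip(row, Ax)) for row in B]
    scale = alpha / rank
    return [wx + scale * d for wx, d in zip(Wx, BAx)]


# Hypothetical 4096 x 4096 projection (an assumed size, for illustration).
full = full_param_count(4096, 4096)            # 16,777,216
lora_r1 = lora_param_count(4096, 4096, rank=1)  # 8,192
print(f"rank-1 LoRA trains {lora_r1} of {full} parameters for this layer")
```

With B initialized to zeros, `lora_forward` returns exactly `W x`, so training starts from the unmodified base model.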

😋 Want strong LLM reasoning without breaking the bank? We explored just how cost-effectively RL can enhance reasoning using LoRA! [1/9] Introducing Tina: A family of tiny reasoning models with strong performance at low cost, providing an accessible testbed for RL reasoning. 🧵

Sparse autoencoders (SAEs) can be used to elicit strong reasoning abilities with remarkable efficiency. Using only 1 hour of training at $2 cost without any reasoning traces, we find a way to train 1.5B models via SAEs to score 43.33% Pass@1 on AIME24 and 90% Pass@1 on AMC23.
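
As a reading aid for the Pass@1 numbers, here is a sketch of the widely used unbiased pass@k estimator, 1 - C(n-c, k) / C(n, k), which for k=1 reduces to the fraction of correct samples. The post does not say how its scores were computed, so the estimator choice and the sample counts below are assumptions for illustration only, not reported data.

```python
from math import comb


def pass_at_k(n, c, k=1):
    """Unbiased pass@k estimate for one problem.

    n: samples drawn, c: samples that were correct, k: attempt budget.
    """
    if n - c < k:
        # Fewer than k incorrect samples: any k-subset contains a correct one.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results, k=1):
    """Average pass@k over problems; results is a list of (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical counts: 3 problems, 4 samples each, with 4, 2, and 0 correct.
print(mean_pass_at_k([(4, 4), (4, 2), (4, 0)], k=1))
```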