Osman R.

@UsmanReads

I think I know, but I really don't. AI and Tech with 15 years in Industry. prev @groupon and @toptal

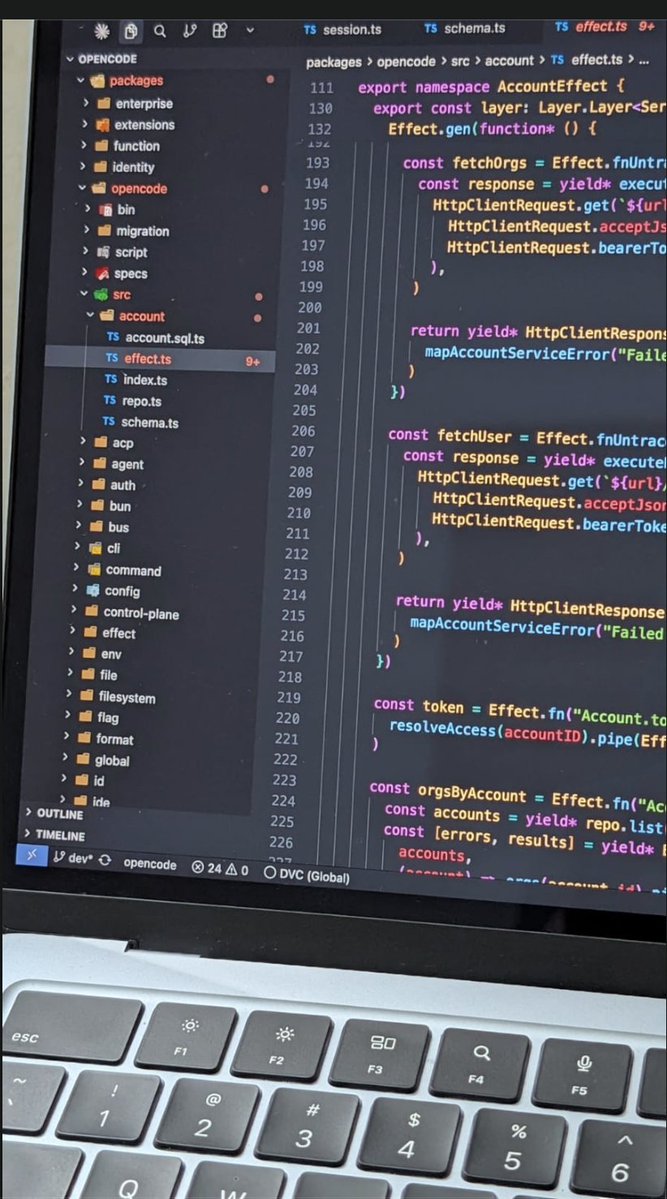

Vendor-specific chatbots are broken by design.

That means the Sentry agent, the Linear agent, and any others you might have in Slack. They are fine for some point situations, and they're nice to get started with, but agents with generalized access outperform them in every single scenario.

Some weeks ago we built an internal Slackbot and gave it access to a bunch of systems (Sentry, GitHub, Linear, Notion, etc.). Overnight, its capabilities far exceeded those of the other bots.

"Oh cool, Linear can now search your code bases" - our bot did that on day one, and then could push that information wherever it needed to go. It's useful to the point where I now discourage use of things like the Linear bot because it _creates worse outcomes_.

This also goes beyond the simple generalization of access: we can customize it. We throw in skills-as-runbooks, templates, etc., and the outcomes once again incrementally improve.

If your org hasn't already built a general-purpose bot internally, you should. If you need inspiration, ours is open source on GitHub (albeit fairly unstable still):

github.com/getsentry/juni…
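
To make the "generalized access plus runbooks" idea concrete, here is a minimal sketch. It is not how the linked bot works; every name in it (`Bot`, `search_code`, `create_ticket`, the `triage` runbook) is hypothetical, and the tool functions are stubs that return canned strings instead of calling GitHub or Linear. It only illustrates one bot holding tools from several systems and a runbook chaining them, so that output from one system flows into another.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


# Hypothetical stand-ins for real integrations; each just returns a string.
def search_code(query: str) -> str:
    return f"code matches for '{query}'"


def create_ticket(summary: str) -> str:
    return f"ticket created: {summary}"


@dataclass
class Bot:
    # Tools from any system live in one registry, keyed by name.
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)
    # A runbook is an ordered list of tool names (an assumption for this sketch).
    runbooks: Dict[str, List[str]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[[str], str]) -> None:
        self.tools[name] = fn

    def run(self, runbook: str, payload: str) -> str:
        # Feed each tool's output into the next tool in the runbook.
        for tool_name in self.runbooks[runbook]:
            payload = self.tools[tool_name](payload)
        return payload


bot = Bot()
bot.register("github.search_code", search_code)
bot.register("linear.create_ticket", create_ticket)
bot.runbooks["triage"] = ["github.search_code", "linear.create_ticket"]

result = bot.run("triage", "NullPointerException")
print(result)
```

A vendor-specific bot corresponds to a registry with only one system's tools in it; the cross-system step in the `triage` runbook is exactly what it cannot do.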