VerAi


@VerAI_Agents

Shared Computing Power for AI Development & Deployment Early Access Waitlist: https://t.co/OrAOSvuwrJ https://t.co/928u7A4smL

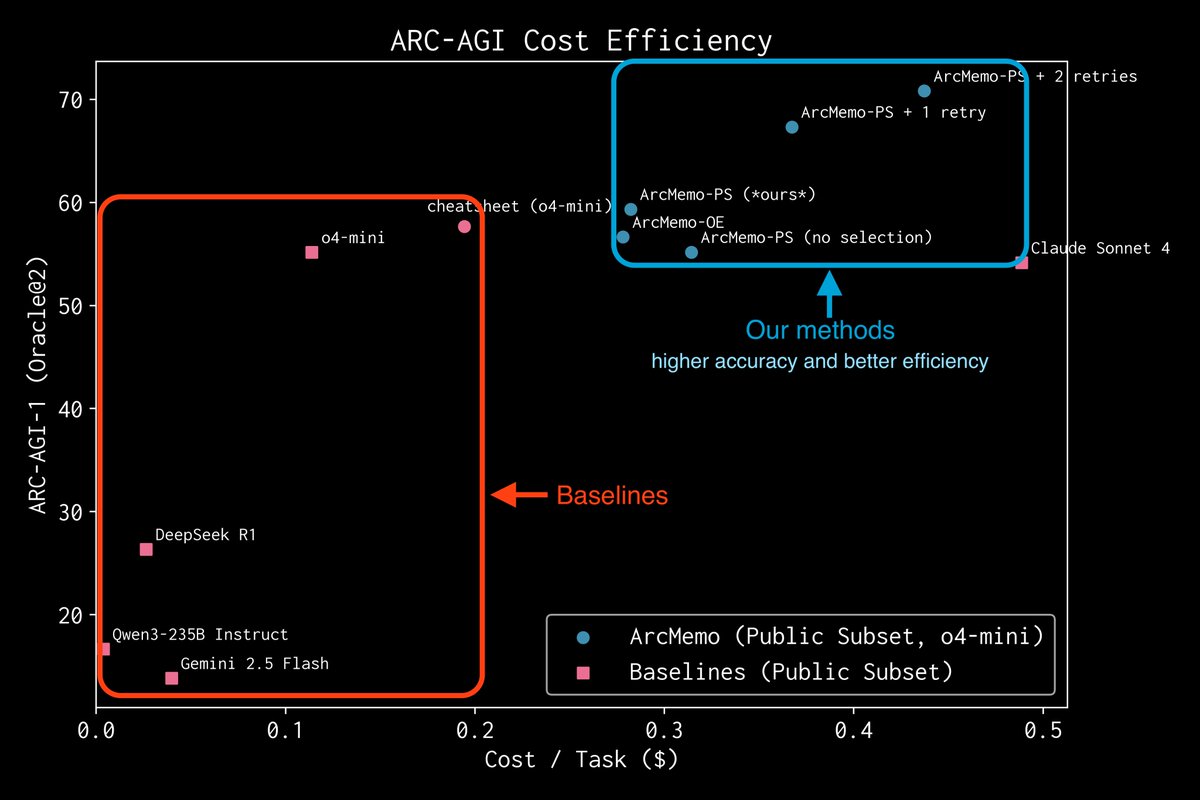

ArcMemo yields a +7.5% relative gain on ARC-AGI over o4-mini (same backbone). It extends the LLM idea of “compressing knowledge for generalization” into a lightweight, continually learnable abstract memory that is model-agnostic and text-based. Preprint: Lifelong LM Learning via Abstract Memory

New in-depth blog post - "Inside vLLM: Anatomy of a High-Throughput LLM Inference System". Probably the most in-depth explanation of how LLM inference engines, and vLLM in particular, work! It took me a while to get to this level of understanding of the codebase and then to write this one up - I quickly realized I underestimated the effort. 😅 It could have easily been a book/booklet (lol).

I covered:

* Basics of inference engine flow (input/output request processing, scheduling, paged attention, continuous batching)
* "Advanced" stuff: chunked prefill, prefix caching, guided decoding (grammar-constrained FSM), speculative decoding, disaggregated P/D
* Scaling up: going from smaller LMs that can be hosted on a single GPU all the way to trillion+ params (via TP/PP/SP) -> multi-GPU, multi-node setup
* Serving the model on the web: going from offline deployment to multiple API servers, load balancing, DP coordinator, multiple engines setup :)
* Measuring perf of inference systems (latency (ttft, itl, e2e, tpot), throughput) and the GPU perf roofline model

Lots of examples, lots of visuals!

---

I realize I've been silent on social - many of you noticed, and thanks for reaching out! :) --> I'm so back! Lots of things happened. Also, in general, I'm a bit sick of superficial content; it really is the equivalent of junk food (h/t @karpathy). I want to do the best/deepest technical work of my life over the next years and write much more in depth (high quality organic food ;)), so I might not be around here as frequently as I used to be (? we'll see). I'll make it a goal to share a few paper summaries a week, or stuff that's relevant / in the zeitgeist. If there are any topics from the past few weeks/months you'd like covered, drop them in the comments; I might focus on some of those in my next posts.

---

Huge thank you to @Hyperstackcloud for giving me an H100 node to run some of the experiments and analysis that I needed to write this up. The team there, led by Christopher Starkey, is amazing!

Also a big thank you to Nick Hill (who did a very thorough review of the post - basically a code review lol; Nick's a core vLLM contributor and principal SWE at Red Hat) and to my friends Kyle Krannen (NVIDIA Dynamo), @marksaroufim (PyTorch), and @ashVaswani (goat) for taking the time during the weekend when they didn't have to!

The Modern Software Stack