We processed over 1.3 Quadrillion tokens last month - that's 1,300,000,000,000,000 tokens! or to put it another way that's 500M tokens a second or 1.8 Trillion tokens an hour... 🤯
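
A quick sanity check of the arithmetic in that post, as a minimal sketch. The 30-day month is an assumption (the post does not say which month), and the function name is just illustrative.

```python
SECONDS_PER_HOUR = 3600
HOURS_PER_DAY = 24


def token_rates(tokens_per_month: float, days_in_month: int = 30) -> tuple[float, float]:
    """Return (tokens per second, tokens per hour) for a monthly total."""
    hours = days_in_month * HOURS_PER_DAY
    per_hour = tokens_per_month / hours
    per_second = per_hour / SECONDS_PER_HOUR
    return per_second, per_hour


# 1.3 quadrillion tokens in an assumed 30-day month
per_second, per_hour = token_rates(1.3e15)
print(f"{per_second / 1e6:.0f}M tokens/s, {per_hour / 1e12:.2f}T tokens/hour")
```

Under that assumption the figures land close to the quoted 500M tokens a second and 1.8 trillion tokens an hour.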

Victor Hah

@VictorHah

Bridging AI compute and capital markets. Building an AI chip company | Ex-investment banker & hedge-fund analyst. Seoul ↔ Silicon Valley

The world is transitioning to a compute-powered economy. The field of software engineering is currently undergoing a renaissance, with AI having dramatically sped up software engineering even over just the past six months. AI is now on track to bring this same transformation to every other kind of work that people do with a computer. Using a computer has always been about contorting yourself to the machine. You take a goal and break it down into smaller goals. You translate intent into instructions. We are moving into a world where you no longer have to micromanage the computer. More and more, it adapts to what you want. Rather than doing work with a computer, the computer does work for you. The rate, scale, and sophistication of problem solving it will do for you will be bound by the amount of compute you have access to. Friction is starting to disappear. You can try ideas faster. You can build things you would not have attempted before. Small teams can do what used to require much larger ones, and larger ones may be capable of unprecedented feats. More and more, people can turn intent into software, spreadsheets, presentations, workflows, science, and companies. People are spending less energy managing the tool and more energy focusing on what they are actually trying to create. That shift brings a kind of joy back into work that many people haven’t felt in a long time. Everyone can just build things with these tools. This is disruptive. Institutions will change, and the paths and jobs that people assumed were stable may not hold. We don’t know exactly how it will play out and we need to take mitigating downsides very seriously, as well as figuring out how to support each other as a society and world through this time. But there is something very freeing about this moment. For the first time, far more people can become who they want to become, with fewer barriers between an idea and a reality.
OpenAI’s mission implies making sure that, as the tools do more, humans are the ones who set their intent and that the benefits are broadly distributed, rather than empowering just one or a small set of people. We're already seeing this in practice with ChatGPT and Codex. Nearly a billion people are using these systems every week in their personal and work lives. Token usage is growing quickly on many use-cases, as the surface of ways people are getting value from these models keeps expanding. Ten years ago, when we started OpenAI, we thought this moment might be possible. It’s happening on the earlier side, and happening in a much more interesting and empowering way for everyone than we’d anticipated (for example, we are seeing an emerging wave of entrepreneurship that we hadn’t previously been anticipating). And at the same time, we are still so early, and there is so much for everyone to define about how these systems get deployed and used in the world. The next phase will be defined by systems that can do more — reason better, use tools better, plan over longer horizons, and take more useful actions on your behalf. And there are horizons beyond, as AI starts to accelerate science and technology development, which have the potential to truly lift up quality of life for everyone. All of this is starting to happen, in small ways and large, today, and everyone can participate. I feel this shift in my own work every day, and see a roadmap to much more useful and beneficial systems. These systems can truly benefit all of humanity.

💡 You’re either a token producer or consumer. @GavinSBaker, Chief Investment Officer and Managing Partner at @Atreidesmgmt, sets the stage for the next phase of tokens and how they will be an incredibly vital asset for businesses. 📺 Watch the full #NVIDIAGTC Live Pregame Show: nvda.ws/4tEtfJP

New episode on The Creative Genius of Rick Rubin. This episode contains a nonstop stream of ideas directly from Rick about how to make great work over a long period of time: (01:00) Just one habit, at the top of any field, can be enough to give an edge over the competition. Wooden considered every aspect of the game where an issue might arise, and trained his players for each one. Repeatedly. Until they became habits. The goal was immaculate performance. Wooden often said the only person you’re ever competing against is yourself. The rest is out of your control. This way of thinking applies to the creative life just as well. For both the artist and the athlete, the details matter, whether the players recognize their importance or not. Good habits create good art. The way we do anything is the way we do everything. Treat each choice you make, each action you take, each word you speak with skillful care. The goal is to live your life in the service of art. (08:41) Faith allows you to trust the direction without needing to understand it. (10:16) If you make the choice of reading classic literature every day for a year, rather than reading the news, by the end of that time period you’ll have a more honed sensitivity for recognizing greatness from the books than from the media. This applies to every choice we make. Not just with art, but with the friends we choose, the conversations we have, even the thoughts we reflect on. All of these aspects affect our ability to distinguish good from very good, very good from great. They help us determine what’s worthy of our time and attention. Because there’s an endless amount of data available to us and we have a limited bandwidth to conserve, we might consider carefully curating the quality of what we allow in. The objective is not to learn to mimic greatness, but to calibrate our internal meter for greatness. So we can better make the thousands of choices that might ultimately lead to our own great work.
(14:25) We’re affected by our surroundings, and finding the best environment to create a clear channel is personal and to be tested. (27:57) Rules direct us to average behaviors. If we’re aiming to create works that are exceptional, most rules don’t apply. Average is nothing to aspire to. The goal is not to fit in. Communicate your singular perspective. (28:30) It’s a healthy practice to approach our work with as few accepted rules, starting points, and limitations as possible. Often the standards in our chosen medium are so ubiquitous, we take them for granted. They are invisible and unquestioned. (29:00) The world isn’t waiting for more of the same. The most innovative ideas come from those who master the rules to such a degree that they can see past them or from those who never learned them at all. (38:50) Fear of criticism. Attachment to a commercial result. Competing with past work. Time and resource constraints. The aspiration of wanting to change the world. And any story beyond “I want to make the best thing I can make, whatever it is” are all undermining forces in the quest for greatness. (42:32) To hone your craft is to honor creation. By practicing to improve, you are fulfilling your ultimate purpose on this planet. 
A lot more ideas in this episode including: The importance of developing a practice of paying attention, why impatience is an argument with reality, why you need to create space in your schedule to tap into ideas from your subconscious, why you'll find what you're searching for by looking deeper, the reason it is important to carefully curate the quality of what we allow in (people, ideas, content), why rereading the same books again and again can help you find new ideas, how to find the environment that allows you to produce your best work, and how other artists have done so, several examples of the people that are the best in the world at what they do being full of self-doubt, and yet they do it anyway, why adversity is unavoidable, why you can’t self-sabotage if you want to make great work for a long time, why the goal is to keep playing (Rubin has been playing for 40+ years), how you can overcome insecurities by naming them, how you can doubt your way to excellence, why distraction is not procrastination and why distraction can be a strategy in service of the work, why if you're aiming to create works that are exceptional most rules don’t apply, the importance of communicating your singular perspective, and why if there is one rule on creativity that’s unbreakable it’s that the need for patience is ever-present.

My conversation with Patrick O'Shaughnessy (@patrick_oshag), founder and CEO of Colossus & Positive Sum.

0:00 The Joy of Championing Undiscovered Talent
2:21 How One Tweet Changed David's Life
5:07 The Upanishads Passage That Shaped Patrick's Worldview
8:34 Growth Without Goals Philosophy
10:40 Why Media and Investing Are the Same Thing
28:41 The Search for True Understanding Through Biography
31:04 The Daniel Ek Dinner That Launched This Podcast
34:28 Making Your Own Recipe From the Ingredients of Great Lives
39:11 The Privilege of a Lifetime Is Being Who You Are
48:25 Bruce Springsteen's Battle With Depression and Self-Worth
53:21 Clean Fuel vs Dirty Fuel: The Source of Your Ambition
57:03 Professional Learners: The Unfair Advantage of Podcasting
1:00:18 Relationships Run the World
1:06:30 The Origin Story of Invest Like the Best
1:08:05 Building Colossus: Why Start a Magazine in 2025
1:14:01 People Are More Interested in People Than Anything Else
1:17:32 Finding Jeremy Stern and Hiring Through Output
1:23:40 Learn, Build, Share, Repeat
1:30:07 The Daisy Chain: How Reading Books Led to Everything
1:30:32 Red on the Color Wheel: Sam Hinkie's Observation
1:37:13 Finding Your Superpower and Becoming More Yourself
1:42:57 Repetition Doesn't Spoil the Prayer: Teaching as Leadership
1:46:02 Life's Work: A Lifelong Quest to Build Something for Others
1:49:51 The Ten Roles Game and What Matters Most
1:57:03 Husband, Father, Grandfather: The Roles That Endure
1:59:48 The Kindest Thing: Tim O'Shaughnessy and Meeting Lauren
2:05:11 Conclusion

Includes paid partnerships.

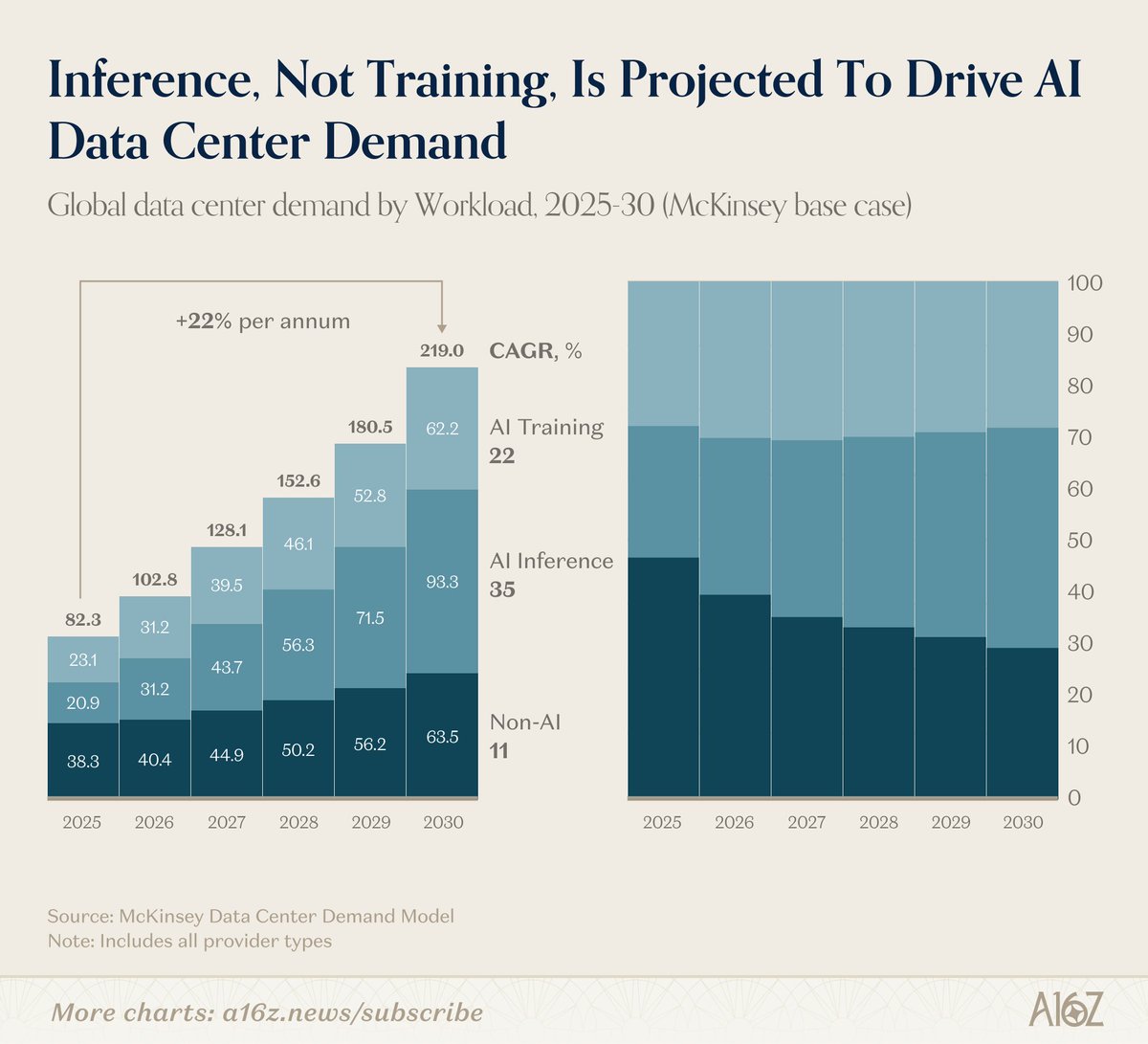

$NVDA $GFS NVIDIA’s reported agreement to acquire Groq for $20B in cash (per CNBC, amplified via Reuters and other wire coverage) represents a materially different strategic posture than NVIDIA’s prior M&A pattern, given both the headline size (largest reported NVIDIA acquisition to date) and the unusual carve-out that Groq’s early-stage cloud business would not be included. Public reporting indicates the information originated from Alex Davis, CEO of Disruptive (lead investor in Groq’s latest financing), and that neither NVIDIA nor Groq had issued an immediate confirmation at the time of publication. The same reporting frames the transaction as coming together quickly, only months after Groq raised $750M at a ~$6.9B valuation, and highlights Groq’s positioning as a high-performance inference chip vendor founded by ex-Google TPU engineers. Groq is best understood as a vertically integrated inference acceleration company whose core asset is an application-specific processor optimized for deterministic, low-latency execution of transformer-style workloads, paired with a compiler-led software stack and a distribution layer (GroqCloud) designed to reduce developer friction via OpenAI-compatible APIs and integrations. Groq brands its architecture as a Language Processing Unit (LPU) and consistently emphasizes that the design target is inference, not training. The company’s own architecture description centers on single-core execution, large on-chip SRAM used as primary storage (explicitly not cache), a custom compiler that statically schedules compute and communication, and direct chip-to-chip connectivity intended to coordinate multi-chip execution without relying on conventional caching hierarchies or dynamic runtime scheduling. The technical premise is a deliberate inversion of the conventional GPU approach.
GPUs deliver throughput via massively parallel, multi-core execution with dynamic scheduling, complex memory hierarchies, and heavy reliance on off-chip HBM bandwidth and sophisticated runtime/kernel optimization. Groq instead argues that inference bottlenecks are driven by latency variance (tail latency), synchronization overhead, and memory access unpredictability inherent in dynamically scheduled, cache-heavy architectures, particularly when workloads are latency sensitive and batch sizes cannot be inflated. Groq’s solution is to move “control” into the compiler: the full execution graph and inter-chip communication schedule are computed ahead of time down to clock-cycle granularity, with deterministic execution designed to reduce run-to-run variance. In Groq’s framing, the removal of caches, reorder buffers, speculative execution overhead, and other sources of contention enables predictable latency and high utilization without per-model kernel engineering typical of GPU tuning cycles. A critical nuance is that Groq’s determinism is not merely a software claim; it is tightly coupled to architectural constraints and system design choices that trade flexibility for predictability. Third-party technical commentary indicates Groq’s chip uses a fully deterministic VLIW-style approach with minimal buffering, no external memory, and heavy dependence on sharding models across many chips because on-chip SRAM capacity is limited. SemiAnalysis describes a ~725 mm^2 die on GlobalFoundries 14nm with ~230MB of SRAM and notes that “no useful models” fit on a single chip, forcing multi-chip partitioning for modern LLMs and driving a system-level design where networking and compilation are first-class scheduling problems rather than ancillary infrastructure. This is consistent with Groq’s own messaging that tensor parallelism across chips is a primary design goal, enabled by large on-chip SRAM and compile-time coordination of compute plus interconnect. 
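
To make the "no useful models fit on a single chip" point concrete, here is a rough back-of-envelope sketch. It counts only the chips needed to hold model weights in on-chip SRAM, using the ~230MB figure cited above. The 70B parameter count, the decimal interpretation of MB, and the bytes-per-parameter values are illustrative assumptions, and the estimate ignores KV cache, activations, and any replication, so real deployments would need more.

```python
import math

# ~230 MB of SRAM per chip, per the SemiAnalysis figure cited above
# (interpreted here as decimal megabytes, which is an assumption).
SRAM_BYTES_PER_CHIP = 230e6


def chips_for_weights(num_params: float, bytes_per_param: float,
                      sram_bytes_per_chip: float = SRAM_BYTES_PER_CHIP) -> int:
    """Lower bound on chips needed to hold the weights alone in on-chip SRAM."""
    weight_bytes = num_params * bytes_per_param
    return math.ceil(weight_bytes / sram_bytes_per_chip)


# Hypothetical 70B-parameter model at two storage widths.
for label, width in [("8-bit weights", 1), ("16-bit weights", 2)]:
    print(label, chips_for_weights(70e9, width))
```

Even this weights-only lower bound runs to hundreds of chips for a 70B-class model, which is why partitioning and interconnect scheduling become first-class problems in this design.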
The on-chip SRAM emphasis is central to Groq’s latency story and also its most constraining trade-off. Groq claims on-chip SRAM bandwidth “upwards of 80 TB/s” and contrasts that with off-chip HBM bandwidth “about 8 TB/s,” asserting a potential 10x advantage from bandwidth plus reduced trips across chip-to-memory boundaries. While these comparisons are marketing-oriented and depend on workload specifics, the architectural implication is clear: Groq prioritizes ultra-fast local weight/activation access and then scales capacity by adding chips, not by attaching large off-chip memory pools. This design can reduce latency for sequential inference layers and minimize unpredictable stalls, but it pushes complexity into partitioning strategy, interconnect topology, and compiler scheduling, and it increases the number of chips needed for very large parameter counts and large KV-cache footprints. Groq also highlights numeric formats and compiler-driven precision management as a performance lever. In its 2025 technical blog, Groq describes “TruePoint numerics,” including 100-bit intermediate accumulation and selective quantization choices (FP32 for attention-sensitive operations, block floating point for MoE weights, FP8 storage in error-tolerant layers), and claims 2-4x speedups versus BF16 without measurable accuracy degradation on benchmarks such as MMLU and HumanEval. Even if the absolute uplift is workload dependent, the strategic point is that Groq is pursuing performance via end-to-end co-design: precision policy is not just hardware capability (FP8/BF16) but compiler-enforced mapping of precision to error sensitivity, which can matter materially for inference cost-per-token if it reduces memory traffic and boosts throughput without forcing aggressive, accuracy-damaging quantization. Independent performance datapoints indicate Groq has been credible on latency-oriented inference speed, at least for certain regimes. 
EE Times reported in 2023 that Groq demonstrated Llama-2 70B inference at ~240 tokens/s per user on a cloud-based dev system described as 10 racks and 64 chips, using the company’s 1st-gen silicon introduced several years earlier. Separate Groq commentary around independent benchmarking cites ArtificialAnalysis.ai results showing ~241 tokens/s throughput and ~0.8s time to receive 100 output tokens for a Llama-2 70B API configuration, positioning the platform as a step-change in “available speed” for certain interactive use cases. These figures do not settle total cost-of-ownership versus GPUs or hyperscaler ASICs, but they establish that Groq’s system-level architecture can deliver strong single-user throughput and latency on large models when properly partitioned and scheduled. GroqCloud is the commercial wrapper that packages this hardware/software stack as “tokens-as-a-service,” aiming to make Groq adoption feel like switching API endpoints rather than adopting new silicon. Groq’s documentation states its API is designed to be “mostly compatible” with OpenAI client libraries, and its pricing page provides model-specific token rates, published speeds (tokens/s), prompt caching discounts, and batch processing discounts. For example, pricing lists inputs as low as $0.05 per 1M tokens and outputs as low as $0.08 per 1M tokens for certain smaller LLM configurations, with higher prices for larger models and long-context or MoE variants; it also advertises prompt caching with a 50% discount on cached input tokens for certain models and a batch API offering 50% lower cost for asynchronous processing windows. These mechanics are economically important because they demonstrate Groq’s go-to-market is not simply “sell chips,” but “sell predictable unit economics per token,” with tooling (batch, caching) that directly targets inference cost drivers (reused prompts, throughput smoothing, and asynchronous workloads). 
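
The per-token pricing mechanics above can be sketched as a small cost function. The rates are the low-end figures quoted in this post; the class and function names are hypothetical, and treating the caching and batch discounts as independent multipliers is an assumption (a provider may not let them stack).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    input_per_million: float = 0.05     # USD per 1M input tokens (low-end figure quoted above)
    output_per_million: float = 0.08    # USD per 1M output tokens (low-end figure quoted above)
    cached_input_discount: float = 0.50  # 50% off cached input tokens
    batch_discount: float = 0.50         # 50% off for asynchronous batch jobs


def request_cost(pricing: TokenPricing, input_tokens: int, output_tokens: int,
                 cached_input_tokens: int = 0, batch: bool = False) -> float:
    """Estimated USD cost of one request under the assumed discount rules."""
    if not 0 <= cached_input_tokens <= input_tokens:
        raise ValueError("cached_input_tokens must be between 0 and input_tokens")
    fresh_tokens = input_tokens - cached_input_tokens
    # Cached input tokens are billed at a discounted rate.
    billable_input = fresh_tokens + cached_input_tokens * (1 - pricing.cached_input_discount)
    total = (billable_input * pricing.input_per_million
             + output_tokens * pricing.output_per_million) / 1e6
    if batch:
        # Assumption: the batch discount applies to the whole request.
        total *= 1 - pricing.batch_discount
    return total


pricing = TokenPricing()
print(request_cost(pricing, 1_000_000, 1_000_000))
print(request_cost(pricing, 1_000_000, 1_000_000, cached_input_tokens=500_000))
print(request_cost(pricing, 1_000_000, 1_000_000, batch=True))
```

The point of the sketch is that reused prompts and asynchronous workloads change the effective cost-per-token materially, which is what the caching and batch tooling is targeting.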
The cloud footprint and distribution partnerships indicate Groq has been building an inference-native “edge within the cloud” strategy rather than competing head-on with hyperscalers on breadth of services. A 2025 Groq newsroom release describes a European deployment in Helsinki with Equinix, positioned as latency reduction and data governance for European customers, and explicitly references Equinix Fabric enabling private connectivity to GroqCloud over public, private, or sovereign infrastructure. The same release enumerates additional capacity in the U.S. (Equinix, DataBank), Canada (Bell Canada), and Saudi Arabia (HUMAIN), and states these sites collectively served more than 20M tokens/s across Groq’s global network at that time. That supply-side metric matters because it provides a directional sense that Groq is scaling capacity as a network, not merely as a chip vendor. Customer disclosure is inherently limited because Groq is private and many enterprise deployments are not public, but Groq’s marketing materials and partnerships provide signals about demand vectors. The company’s public website displays logos of large consumer and enterprise brands (e.g., Dropbox, Vercel, Chevron, Volkswagen, Canva, Robinhood, Riot Games, Workday, Ramp) and includes a published customer quote claiming a 7.41x chat speed increase and an 89% cost reduction after moving to GroqCloud, followed by a tripling of token consumption. While marketing claims should be treated as case-specific and not generalized, they indicate that Groq is targeting both AI-native developers (who measure success by latency and cost-per-token) and enterprise buyers (who care about predictable performance and governance). Supplier and dependency mapping for Groq spans 3 layers: silicon production, system integration, and cloud infrastructure. 
On silicon, third-party analysis indicates GlobalFoundries 14nm for the 1st-gen Groq chip, implying a supply chain less constrained by the most capacity-tight leading-edge nodes and advanced packaging bottlenecks that dominate high-end GPU supply (HBM stacks, CoWoS-type packaging constraints). If accurate, this is strategically meaningful because it suggests Groq capacity expansion could be gated more by conventional wafer supply, board assembly, and data center power than by the same HBM/advanced packaging scarcity that has constrained top-tier GPU ramp cycles. On systems and cloud, Groq’s own releases identify colocation and connectivity partners (Equinix, DataBank, Bell Canada) and a Middle East partner (HUMAIN), implying dependencies on data center real estate, power availability, and network connectivity, alongside procurement of standard server components, NICs/switching, racks, and cooling infrastructure. The Groq design narrative also emphasizes air cooling and reduced need for complex power/cooling infrastructure, which—if realized in deployments—can widen the set of feasible hosting locations and lower deployment friction relative to liquid-cooled, very high power density GPU racks. Against that backdrop, the strategic rationale for NVIDIA acquiring Groq can be framed as a set of overlapping objectives: inference silicon optionality, architectural hedging, competitive defense, and supply chain diversification, with the carve-out of GroqCloud signaling a preference to avoid direct cloud competition and to focus on IP and product portfolio control rather than operating a capital-intensive token-serving business. The deal, if confirmed, would occur at a valuation step-up of ~190% versus Groq’s reported ~$6.9B private valuation in the September $750M round, reinforcing that any acquisition logic would be predominantly strategic rather than a conventional financial multiple arbitrage. The most compelling strategic driver is inference. 
Training has historically been the center of gravity for cutting-edge GPU demand, but inference volume is structurally larger and more distributed as deployments scale, with economics dominated by cost-per-token, latency guarantees, and utilization under spiky demand. Inference workloads also create a strategic vulnerability for NVIDIA: hyperscalers and large platforms can justify bespoke ASICs (TPU, Trainium/Inferentia, Maia-class efforts) because inference is stable, repeatable, and can amortize software investment at massive scale. Groq’s core proposition—deterministic, compiler-scheduled inference with predictable latency—aligns directly with the segment where GPU generality is least valued and where “good enough” programmability plus superior unit economics can win share. Acquiring Groq would allow NVIDIA to own a credible inference-native architecture rather than relying solely on GPUs and software optimization to defend that segment. Competitive defense logic is also plausible. Groq occupies a specific competitive wedge: low-latency, high-throughput interactive inference, delivered via a simple API abstraction that reduces switching cost. That wedge directly pressures GPU inference margins in the long run because it makes inference price/performance comparisons more transparent at the token level, and it targets a developer persona that historically defaulted to CUDA-first ecosystems. Even if NVIDIA’s current-generation systems can achieve very high tokens/s per user with extensive optimization, the strategic risk is that competing architectures normalize the idea that inference is best served by special-purpose silicon with a simpler programming model, weakening CUDA lock-in at the application layer. 
NVIDIA has actively demonstrated that Blackwell-era systems can exceed 1,000 tokens/s per user in benchmarked configurations, but that performance leadership does not automatically translate to lowest cost-per-token across the full range of batch sizes, latency targets, and deployment environments. Groq’s existence as a credible alternative architecture forces NVIDIA to keep defending inference economics rather than only raw performance leadership. The “technology acquisition” rationale is unusually strong in this specific case because Groq’s differentiator is not a single block of silicon IP but an end-to-end methodology: compiler-led static scheduling, deterministic networking, and a system architecture designed around tensor-parallel inference rather than throughput-maximizing batch inference. NVIDIA’s stack is already compiler-heavy (TensorRT, Triton, CUDA graphs, kernel fusion, speculative decoding techniques), but GPUs remain dynamically scheduled devices with complex memory hierarchies and stochastic latency behaviors under contention. Groq’s approach provides an alternate design point: treating the entire inference execution (compute plus communication) as a statically schedulable program. In principle, that IP could be valuable even if Groq silicon itself is not adopted at massive scale, because it can inform how NVIDIA builds future inference-optimized products, compilers, and networking fabrics, especially as distributed inference with large models makes communication a first-order performance determinant. Supply chain diversification is a non-obvious but potentially important driver. If Groq’s mainstream product generation is truly based on a mature process node and avoids HBM, then the scaling constraints look different than those of state-of-the-art GPUs. NVIDIA’s ability to meet incremental demand has been tightly coupled to advanced packaging and HBM supply, and those constraints can remain binding even when wafer supply is available. 
An inference ASIC architecture that relies primarily on on-chip SRAM and scales by adding chips—while not costless—could reduce dependence on HBM availability and advanced packaging capacity, enabling NVIDIA to ship “inference capacity” in higher absolute volumes or into geographies and customer segments where the highest-end GPUs are economically or logistically difficult to deploy. This could be particularly relevant for latency-sensitive inference deployed in regional colocation footprints rather than centralized hyperscale campuses. The carve-out of GroqCloud, if accurate, is itself a strategic signal about NVIDIA’s priorities. Operating a token-serving cloud at scale is capital intensive, structurally lower margin than silicon IP rents, and creates channel conflict with hyperscalers and CSP partners who are core NVIDIA customers. NVIDIA has generally positioned its cloud offerings through partnerships rather than as a direct hyperscale competitor. Excluding GroqCloud would preserve neutrality with CSPs and avoid inheriting multi-region data residency obligations and partner contracts, while still allowing NVIDIA to acquire Groq’s silicon, compiler technology, and engineering talent. At the same time, excluding GroqCloud would also mean NVIDIA would not automatically acquire the commercial proof-point of Groq’s unit economics or the customer contracts that validate product-market fit at scale, increasing the importance of diligence on whether Groq’s cloud pricing is structurally profitable or partially subsidized by fundraising. There is also a “preemptive acquisition” angle. The reporting identifies recent investors in Groq’s latest round including large financial institutions and strategic/industry players. In that context, Groq represents an asset that could plausibly have been acquired by a competitor (AMD/Intel) or by a hyperscaler seeking to accelerate inference independence. 
NVIDIA acquiring Groq could be a defensive move to prevent a credible inference-native architecture from being weaponized by a rival with deep distribution. Even if GroqCloud is carved out, controlling the silicon roadmap and compiler IP would meaningfully constrain Groq’s ability to evolve into a standalone competitor, unless the carved-out entity retains long-term rights to the hardware and software stack. However, the strategic case is not one-sided; there are meaningful risks and potential contradictions that would need to be reconciled for the transaction to be value-accretive on a multi-year horizon. First, Groq’s architecture appears to rely on scaling out chip count to achieve capacity, which introduces system cost, networking complexity, and physical footprint considerations. The absence of external memory and limited on-chip SRAM implies very large models require substantial chip parallelism, and the economics then depend heavily on chip cost, yield, power efficiency, and interconnect overhead. SemiAnalysis explicitly frames Groq as trading space for time and raises questions about token economics and whether publicly advertised pricing reflects fully loaded costs or market share capture. Second, integration risk is non-trivial. Groq’s compiler-led deterministic model is philosophically and practically different from CUDA’s dominant programming and execution model. A poorly executed integration could create internal product confusion, dilute engineering focus, or alienate developers if the combined stack fragments. Third, there is cannibalization risk. If Groq-class inference silicon undercuts GPU inference economics, NVIDIA could face internal margin trade-offs, even if the goal is to defend share against hyperscaler ASICs. Cannibalization can still be rational if it prevents larger share loss, but it would require crisp portfolio segmentation and go-to-market discipline.
The presence of NVIDIA’s own rapidly improving inference performance complicates the “need” for Groq but does not eliminate the “option value.” NVIDIA has demonstrated benchmark-leading tokens/s per user on Blackwell-based systems, suggesting that raw interactive throughput is not necessarily the limiting factor for NVIDIA’s product line. The more enduring strategic question is unit economics and architectural control: whether future inference demand is better monetized through general-purpose GPUs plus software optimization, or whether a bifurcated product portfolio (training GPUs plus inference-native ASICs) becomes necessary to defend total AI compute wallet share as hyperscaler ASIC penetration increases. Acquiring Groq could be a decisive move to ensure NVIDIA participates in both regimes rather than betting exclusively on GPUs to win inference forever. What is “special” about Groq’s technology relative to a typical accelerator roadmap is the tight coupling of determinism, compilation, and networking into a single scheduling problem. The LPU narrative emphasizes deterministic compute and networking, static scheduling, and direct chip-to-chip coordination that allows “hundreds” of chips to behave like a single scheduled resource. The architecture also explicitly targets tensor-parallel, latency-optimized distribution rather than pure data-parallel throughput scaling, which matters for real-time applications where a single response must arrive quickly rather than many requests being processed in bulk. The implication is that Groq is optimized for the time-to-first-token and steady token streaming behavior that defines user experience in interactive LLMs, and it attempts to achieve that without relying on large batch sizes that can degrade latency.
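
The idea of treating execution as a statically schedulable program can be illustrated with a toy example. This is a generic greedy list scheduler, not Groq's compiler; the operation names, latencies, and unit count are all hypothetical. It only shows the principle that every operation gets a fixed unit and start cycle before anything runs, so repeated runs follow an identical timeline.

```python
def static_schedule(latency: dict[str, int], deps: dict[str, tuple[str, ...]],
                    num_units: int) -> dict[str, tuple[int, int]]:
    """Assign each op a (unit, start_cycle) ahead of time.

    latency: cycles each op takes (assumed known exactly at compile time).
    deps: ops that must finish before a given op may start.
    """
    schedule: dict[str, tuple[int, int]] = {}
    finish: dict[str, int] = {}
    unit_free = [0] * num_units          # cycle at which each unit becomes idle
    remaining = sorted(latency)          # fixed order keeps tie-breaking reproducible
    while remaining:
        ready = [op for op in remaining
                 if all(d in finish for d in deps.get(op, ()))]
        if not ready:
            raise ValueError("dependency cycle or unknown dependency")
        op = ready[0]
        earliest = max((finish[d] for d in deps.get(op, ())), default=0)
        # Pick the unit that allows the earliest start; ties go to the lowest index.
        unit = min(range(num_units),
                   key=lambda u: (max(unit_free[u], earliest), u))
        start = max(unit_free[unit], earliest)
        schedule[op] = (unit, start)
        finish[op] = start + latency[op]
        unit_free[unit] = finish[op]
        remaining.remove(op)
    return schedule


# Hypothetical four-op graph on two execution units.
latency = {"load_w": 2, "matmul_a": 4, "matmul_b": 4, "add": 1}
deps = {"matmul_a": ("load_w",), "matmul_b": ("load_w",),
        "add": ("matmul_a", "matmul_b")}
for op, (unit, start) in static_schedule(latency, deps, num_units=2).items():
    print(f"{op}: unit {unit}, start cycle {start}")
```

A real compiler would also have to schedule inter-chip transfers in the same timeline, which is the part the post describes as turning networking into a scheduling problem.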
From a portfolio manager’s perspective, the most important interpretation is that an NVIDIA-Groq combination would likely be less about “NVIDIA needs more inference speed” and more about controlling the architectural trajectory of inference acceleration and removing a fast-improving, developer-friendly competitor from the market. The carve-out of GroqCloud would reinforce that the transaction is aimed at IP, talent, and product optionality, not acquiring a cloud revenue stream. The valuation step-up implied by $20B versus $6.9B would therefore be justified only if the acquired assets materially reduce long-term competitive risk (hyperscaler ASIC displacement, inference margin compression) or enable new monetization vectors (inference ASIC product line, supply chain de-bottlenecking, improved software determinism) that would be difficult to achieve on a comparable timeline via internal R&D.
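
For reference, the step-up arithmetic behind the ~190% figure is a one-liner. This sketch uses only the two reported numbers from this post ($20B and ~$6.9B); the function name is illustrative.

```python
def step_up(new_value: float, old_value: float) -> float:
    """Fractional increase from old_value to new_value (1.0 means +100%)."""
    if old_value <= 0:
        raise ValueError("old_value must be positive")
    return new_value / old_value - 1


reported_price_bn = 20.0      # reported acquisition price, in $B
prior_valuation_bn = 6.9      # reported valuation at the $750M round, in $B

print(f"multiple: {reported_price_bn / prior_valuation_bn:.2f}x")
print(f"step-up: {step_up(reported_price_bn, prior_valuation_bn):.0%}")
```

A price of roughly 2.9x the last private mark is what the ~190% step-up refers to.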