Vin ./

@VinoisAsian

ALL IN | MAXI | @Gradient_HQ

Benchmarks that test what models have memorized are saturating fast. ARC-AGI-3 is asking a harder question: can AI actually learn something new on the fly?

One direction we've been exploring: multi-agent orchestration. In our study, coordinating four frontier LLMs across multiple turns consistently matched or outperformed the strongest single model, even on tasks none of them could solve alone.

The gap between "best single model" and "best coordination of models" is where a lot of the real progress is hiding.

More on our multi-turn, multi-agent orchestration study: arxiv.org/abs/2509.23537

Announcing Personal Computer. Personal Computer is an always-on, local merge with Perplexity Computer that works for you 24/7. It's personal, secure, and works across your files, apps, and sessions through a continuously running Mac mini.

Apple just dropped the M5 Max MacBook Pro, and it's an AI powerhouse: 4x faster AI compute than the M4 Max.

These specs are insane:

- 18-core CPU with 6 "super cores" = world's fastest CPU core
- 40-core GPU = rivals an RTX 4070 in a laptop
- 128GB unified memory = more than most servers
- 614 GB/s bandwidth = 4x what a DGX Spark gets
- 24-hour battery life

You can now run Llama 70B, a model that required a $40,000 GPU cluster 18 months ago, on a laptop at your local coffee shop. At ~20-30 tokens/sec, it's fast enough to actually use.

The "local AI" revolution just shipped for $3,499.
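For a rough sanity check on the tokens/sec figure, here is a back-of-the-envelope sketch. It assumes decoding is memory-bandwidth-bound, so each generated token streams every model weight from memory once. The bits-per-weight values are illustrative assumptions, not something the post specifies, and KV-cache traffic and runtime overhead are ignored. The 128 GB and 614 GB/s inputs come from the post.

```python
# Rough ceiling on decode speed, assuming generation is memory-bandwidth-bound:
# every generated token reads all model weights from memory once.
# KV-cache reads, compute limits, and runtime overhead are ignored (assumption).

MEMORY_GB = 128        # unified memory quoted in the post
BANDWIDTH_GB_S = 614   # memory bandwidth quoted in the post
PARAMS_BILLION = 70    # Llama 70B


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Size of the weights in GB at a given quantization level."""
    return params_billion * bits_per_weight / 8


def max_tokens_per_sec(params_billion: float, bits_per_weight: float,
                       bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/sec: bandwidth divided by bytes read per token."""
    return bandwidth_gb_s / weight_size_gb(params_billion, bits_per_weight)


if __name__ == "__main__":
    # 16, 8, and 4 bits per weight are hypothetical quantization choices.
    for bits in (16, 8, 4):
        size = weight_size_gb(PARAMS_BILLION, bits)
        fits = "fits" if size <= MEMORY_GB else "does not fit"
        rate = max_tokens_per_sec(PARAMS_BILLION, bits, BANDWIDTH_GB_S)
        print(f"{bits:>2}-bit: {size:6.1f} GB ({fits}), ceiling ~{rate:.1f} tokens/sec")
```

Under these assumptions, 4-bit weights take 35 GB and give a ceiling of about 17.5 tokens/sec. Reaching the quoted ~20-30 tokens/sec would then imply fewer than 4 bits per weight or a technique such as speculative decoding.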

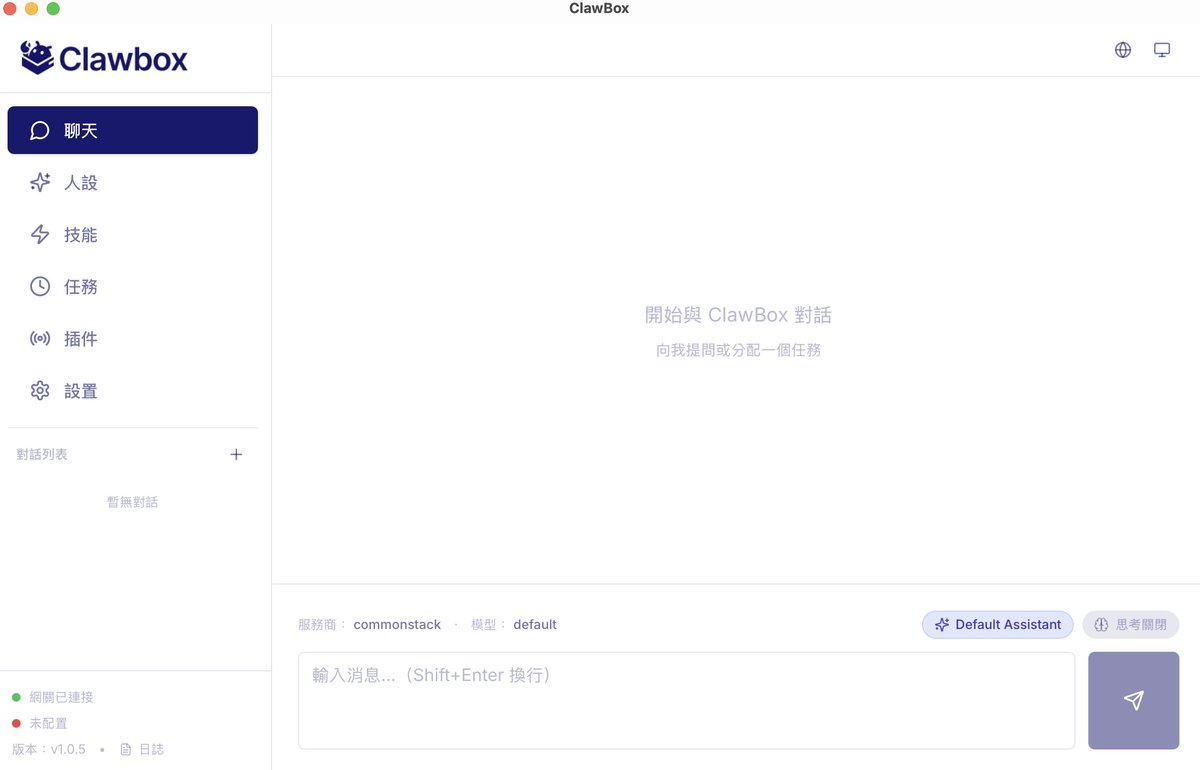

AI should be a public good, not something gatekept by a handful of megacorps.

We had Eric Yang, co-founder of Gradient Network, on the pod this week to talk through exactly that.

Gradient's "Open Intelligence Stack" includes:
i) Parallax for distributed model serving
ii) Echo for decentralized reinforcement learning

The whole thesis is that anyone should be able to run large models on consumer hardware (yes, including your Mac Minis + OpenClaws).

Eric breaks down their $10M seed round led by Pantera, Multicoin, and HSG; where he sees the industry heading; and why post-training is going to be the dominant force in enterprise.

Timestamps:
00:00 Intro
01:15 AI market is booming
02:29 Local compute is a hot topic
03:02 Parallax Inference Engine
04:34 Intelligence as a public good
05:46 AI models will become a commodity
07:32 Bottlenecks in AI model accessibility
09:34 Smaller AI models are catching up
11:01 How Gradient's infrastructure enables model development
12:15 Model post-training
14:24 How does reinforcement learning work?
17:35 AI going rogue
19:20 Gradient's token
23:02 AI entrepreneurs that Eric admires
26:11 Use cases on chain for AI
31:34 The trade-offs of coming to crypto
35:09 How low-spec GPUs will work on the Gradient ecosystem
38:08 Post-training will be the dominant force for enterprise
38:43 Open source models are way cheaper
41:39 Eric's founding story
49:07 Empowering researchers globally
53:37 Why Multicoin Capital and Pantera Capital invested in Gradient
55:08 One-click deploy agent
58:16 Gradient in 3 years