Xiang Lisa Li

40 posts

Xiang Lisa Li retweeted

When @XiangLisaLi2 built diffusion LMs in 2022 (arxiv.org/abs/2205.14217), we were interested in more powerful controllable generation (inference-time conditioning on an arbitrary reward), but inference was slow. Interestingly, the main advantage now is speed. Impressive to see how far diffusion LMs have come!

Inception (@_inception_ai)

We are excited to introduce Mercury, the first commercial-grade diffusion large language model (dLLM)! dLLMs push the frontier of intelligence and speed with parallel, coarse-to-fine text generation.

Xiang Lisa Li retweeted

Lisa Li (@XiangLisaLi2) changes how people fine-tune (prefix tuning, the original PEFT), generate (diffusion LM, non-autoregressively), improve (GV consistency fine-tuning without supervision), and evaluate language models (using LMs). Prefix tuning:

arxiv.org/abs/2101.00190

Can we get language models to exhibit certain behaviors?

We train investigator models to elicit target behaviors from LMs, which helps us proactively detect harmful responses and hallucination!

Neil Chowdhury (@ChowdhuryNeil)

Excited to finally share what I’ve been up to at @TransluceAI: training Investigator Agents to elicit behaviors in LMs (including harmful responses and hallucinations)!

Xiang Lisa Li retweeted

Eliciting Language Model Behaviors with Investigator Agents

We train AI agents to help us understand the space of language model behaviors, discovering new jailbreaks and automatically surfacing a diverse set of hallucinations.

Full report: transluce.org/automated-elic…

Xiang Lisa Li retweeted

Introducing *Transfusion* - a unified approach for training models that can generate both text and images. arxiv.org/pdf/2408.11039

Transfusion combines language modeling (next token prediction) with diffusion to train a single transformer over mixed-modality sequences. This allows us to leverage the strengths of both approaches in one model. 1/5

Exciting joint work with evanliu, @percyliang, @tatsu_hashimoto 🙂 Code available at github.com/XiangLi1999/Au…

arxiv.org/abs/2407.08351

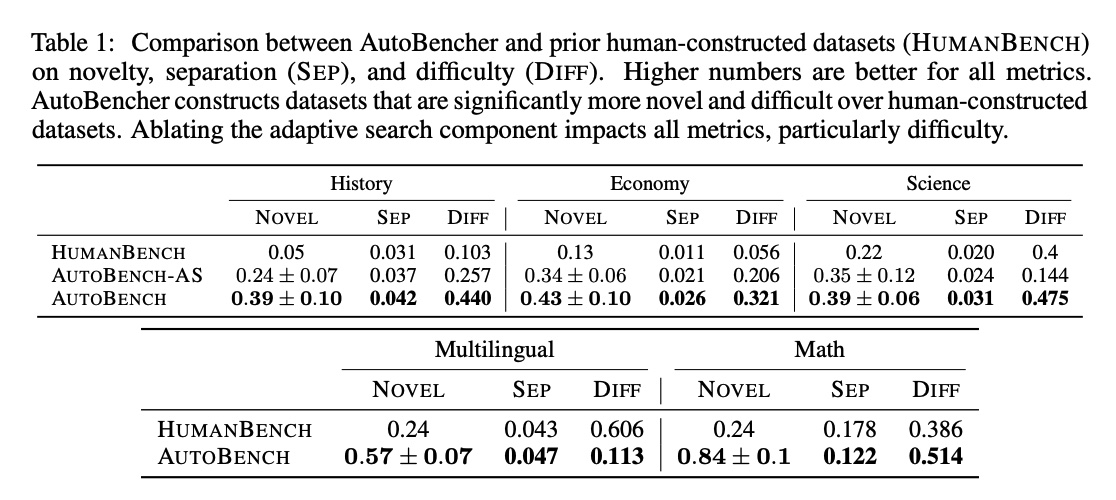

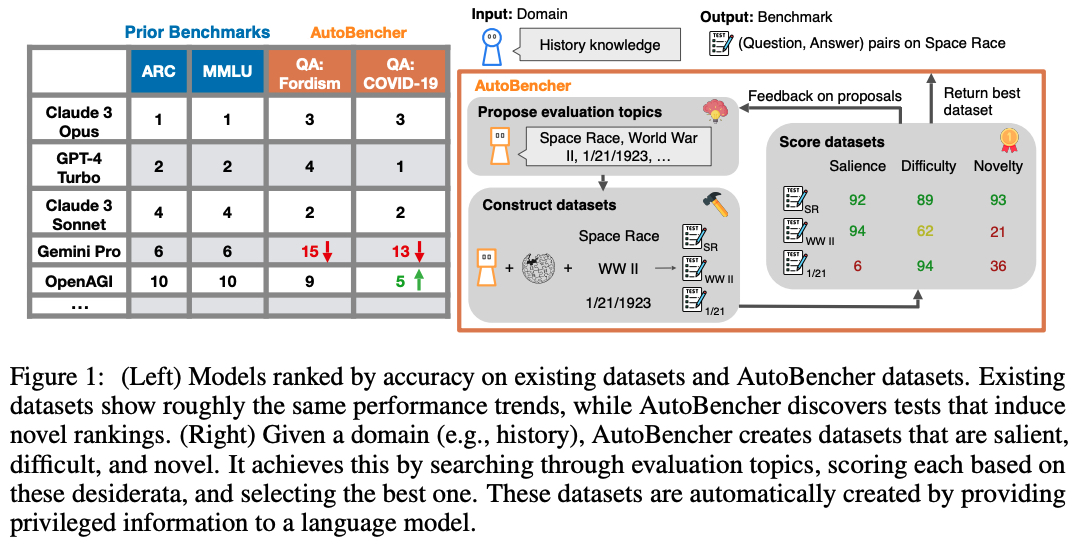

LM performance on existing benchmarks is highly correlated. How do we build novel benchmarks that reveal previously unknown trends?

We propose AutoBencher: it casts benchmark creation as an optimization problem with a novelty term in the objective.

Xiang Lisa Li retweeted

New from @GoogleDeepMind: When can you trust your LLM? We show that LLMs consistently overestimate their own accuracy on some topics (eg nutrition) while underestimating it on others (eg math). Our Few-shot Recalibrator fixes LLM over/under-confidence: arxiv.org/abs/2403.18286 🧵

Xiang Lisa Li retweeted

Prompting is cool and all, but isn't it a waste of compute to encode a prompt over and over again?

We learn to compress prompts up to 26x by using "gist tokens", saving memory+storage and speeding up LM inference:

arxiv.org/abs/2304.08467 (w/ @XiangLisaLi2 and @noahdgoodman)

🧵

Xiang Lisa Li retweeted

The #cs224n poster session is happening now! We are super excited about amazing, cutting-edge NLP posters from ~650 students!

Xiang Lisa Li retweeted

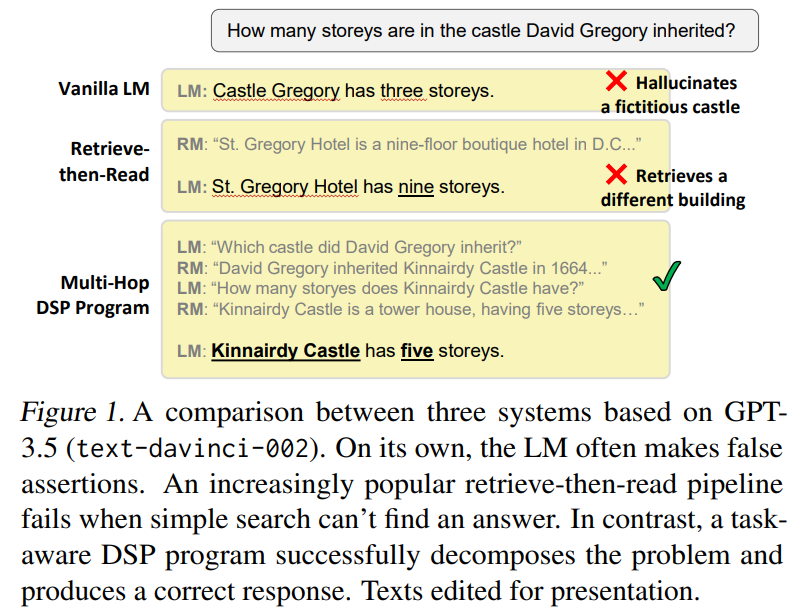

Introducing Demonstrate–Search–Predict (𝗗𝗦𝗣), a framework for composing search and LMs w/ up to 120% gains over GPT-3.5.

No more prompt engineering.❌

Describe a high-level strategy as imperative code and let 𝗗𝗦𝗣 deal with prompts and queries.🧵

arxiv.org/abs/2212.14024

Xiang Lisa Li retweeted

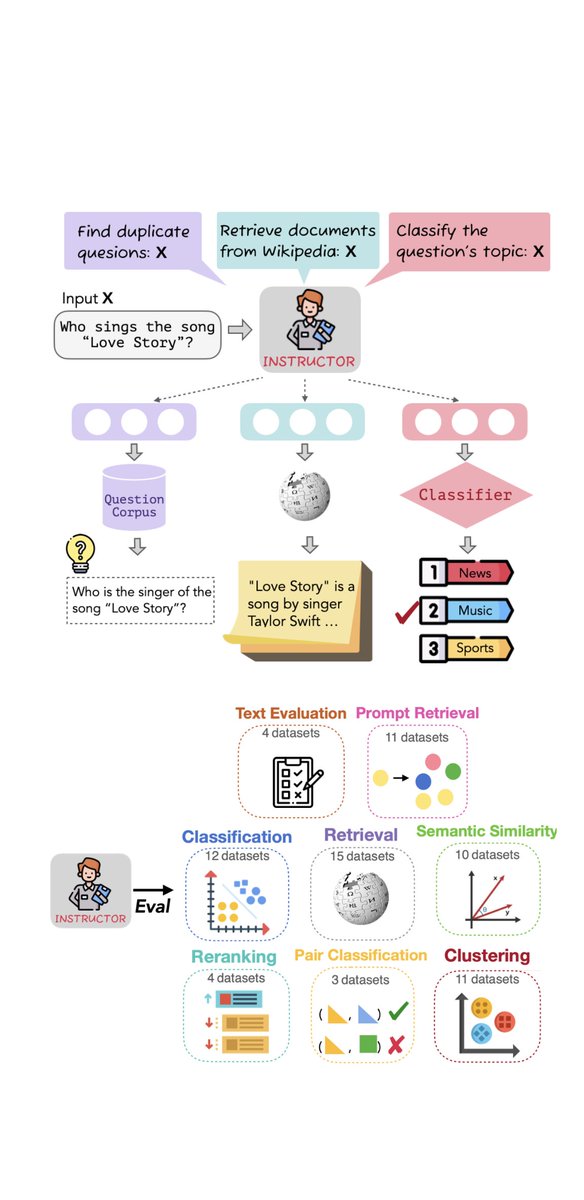

🙋‍♀️ How to present the same text in diff. tasks/domains as diff. embeddings W/O training?

We introduce Instructor 👨‍🏫, an instruction-finetuned embedder that can generate text embeddings tailored to any task given the task instruction ➡️ sota on 7️⃣0️⃣ tasks 👇!

instructor-embedding.github.io
