@yoonholeee @StanfordAILab @chelseabfinn Such a cool idea! Huge congrats, Yoonho!

I'm pretty curious how feasible it would be for text rewards to help VLAs in robotics.

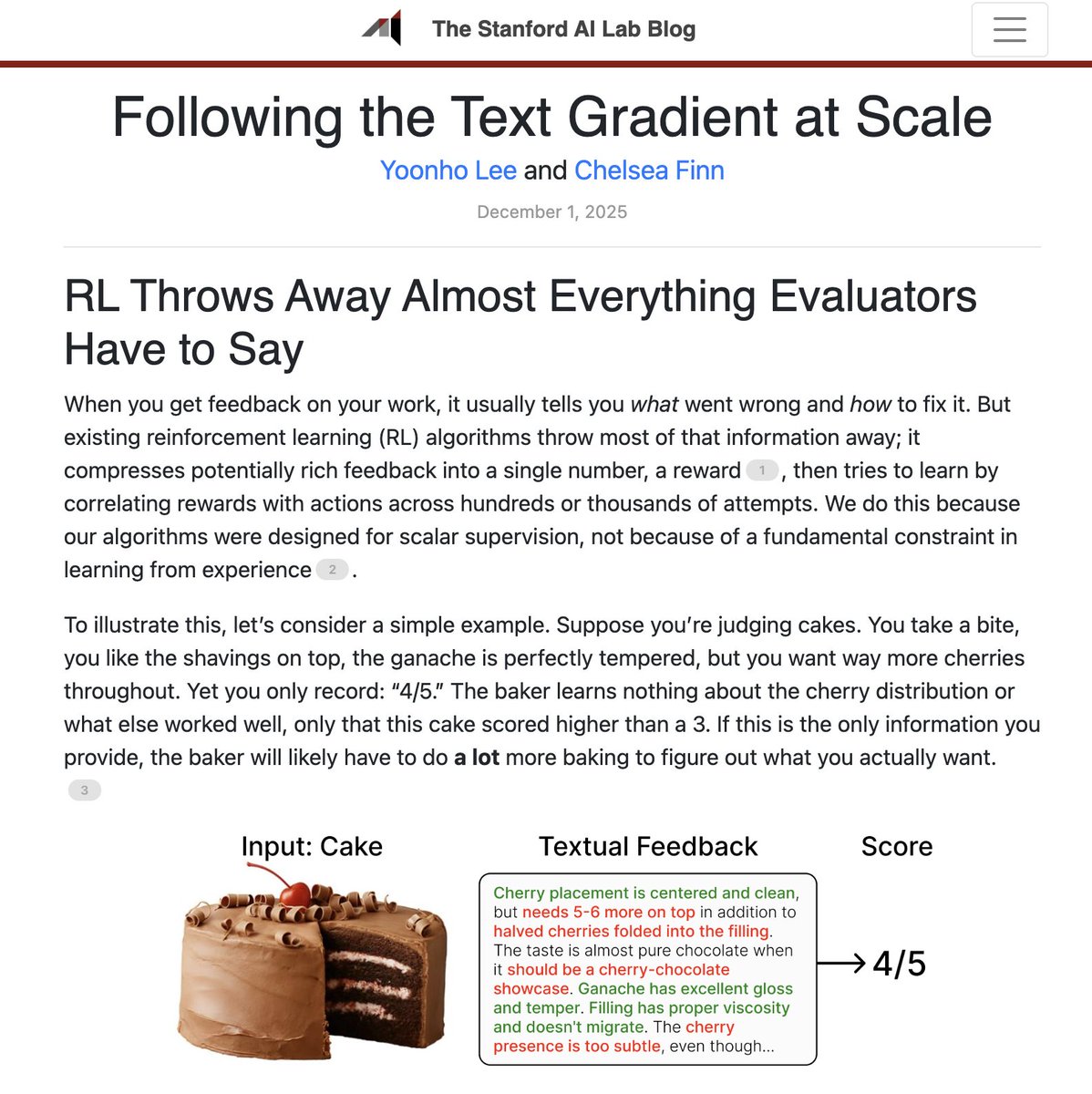

Sometimes we care about more than just success rates, which can be quantified as a scalar value. What if we hill-climb on a holistic text reward?
